This introduction to IBM 1401 computer machine language looks back at the early days of commercial computing. The IBM 1401, announced in 1959, was a transistorized, stored-program business computer whose comparatively low rental cost put electronic data processing within reach of a much wider range of organizations.

The sections below cover the design principles behind the system, from its processing unit to its storage, along with the challenges of programming the IBM 1401 in machine language and the tools that eased them.

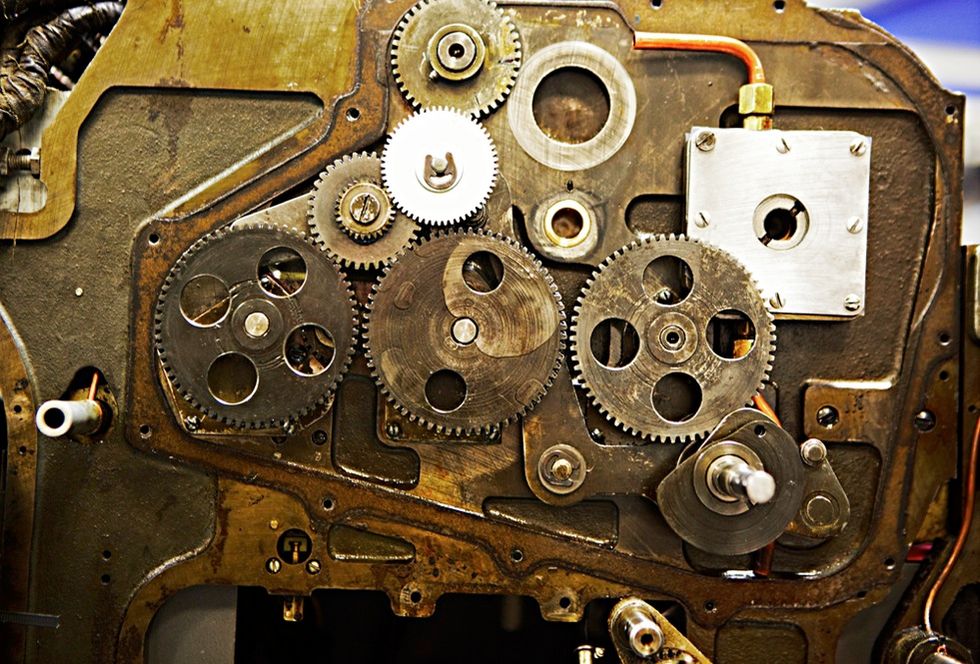

IBM 1401 Computer Architecture

The IBM 1401 computer, introduced in 1959, was a pioneering innovation in the field of computing. Its design principles and architecture significantly impacted early computing systems, setting the stage for future advancements.

The IBM 1401 was designed to meet the growing demand from businesses and governments for reliable, efficient, and affordable data processing. Its architecture was built around variable-length decimal fields held in magnetic core storage, a good match for the record-oriented work previously done on punched-card equipment.

Central Processing Unit (CPU)

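The IBM 1401’s processing unit was the heart of the system, responsible for executing instructions and performing calculations. It was a decimal, character-oriented machine: each storage position held one character in a six-bit binary-coded decimal (BCD) code, together with a word mark bit and a parity check bit, and arithmetic was carried out one decimal digit at a time.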

In functional terms, the processing unit combined three kinds of circuitry:

- Arithmetic and logic circuitry, which performed decimal addition, subtraction, and comparison.

- Control circuitry, which fetched instructions from core storage, decoded them, and sequenced their execution.

- Input/output control circuitry, which coordinated data transfer between core storage and attached devices.

Working directly in decimal meant that data read from punched cards could be used in arithmetic and printed without conversion to and from binary, which suited business data processing well.
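
Digit-at-a-time decimal arithmetic can be illustrated with a short sketch. The function below is a hypothetical model, not a description of the 1401's circuitry: it assumes two unsigned decimal fields, adds them from the low-order digit upward, keeps the result at the length of the second field, and reports a carry out of the high-order digit as overflow. The name `add_decimal_fields` is invented for this example.

```python
from typing import Tuple


def add_decimal_fields(a_field: str, b_field: str) -> Tuple[str, bool]:
    """Add two unsigned decimal fields digit by digit, right to left.

    The result has the same length as b_field. A carry out of the
    high-order digit is returned as an overflow flag instead of
    widening the field. This is an illustrative model only.
    """
    if not (a_field.isdigit() and b_field.isdigit()):
        raise ValueError("both fields must contain decimal digits only")
    if len(a_field) > len(b_field):
        raise ValueError("this sketch assumes the first field is not longer")

    digits = []
    carry = 0
    for i in range(1, len(b_field) + 1):
        # A shorter first field is treated as if padded with zeros.
        a_digit = int(a_field[-i]) if i <= len(a_field) else 0
        total = a_digit + int(b_field[-i]) + carry
        digits.append(str(total % 10))
        carry = total // 10
    return "".join(reversed(digits)), carry == 1


if __name__ == "__main__":
    print(add_decimal_fields("27", "0195"))
    print(add_decimal_fields("1", "999"))
```

Keeping the result at a fixed field length mirrors the idea that a field's size is set by the data layout, not by the value that happens to be stored in it.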

Memory Components

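The IBM 1401’s storage consisted of three main kinds:

- Magnetic Core Storage: The main memory, holding both program and data. It was available in sizes from 1,400 to 16,000 character positions. There was no read-only memory; programs were loaded from cards or tape.

- Punched Cards: The basic medium for input, output, and program loading, with one 80-column record per card.

- Magnetic Tape and Disk: Optional units, such as IBM 729 tape drives and IBM 1405 disk storage, that held large files outside core storage.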

The combination of these memory components enabled the IBM 1401 to store and retrieve data efficiently, facilitating smooth operation of the system.

Comparison with Other Early Computers

The IBM 1401 was a significant improvement over the punched-card accounting machines and vacuum-tube computers it typically replaced, in performance, reliability, and cost. Compared with those earlier machines, the IBM 1401:

- Used transistors instead of vacuum tubes, which improved reliability and reduced size, heat, and power consumption.

- Used BCD arithmetic on variable-length fields, so no storage was wasted padding short numbers to a fixed word size.

- Held instructions and data together in one character-addressable core storage, making it easier to manage data.

These innovations solidified the IBM 1401’s position as a leader in the early computing landscape, influencing the design of future computing systems.

Impact on Computing

The IBM 1401 was one of the most widely installed computers of the 1960s, and its commercial success helped establish expectations in several areas:

- Solid-State Design: Showing that transistorized machines could be smaller and more dependable than their vacuum-tube predecessors.

- Memory and Storage: Popularizing core storage backed by magnetic tape and disk for business files.

- Processing Speed: Pairing the processor with fast peripherals so that whole jobs, not only calculations, ran faster.

As computing technology evolved, the IBM 1401’s legacy continued to inspire innovation, shaping the modern computer architectures we use today.

Data Processing and Input/Output Operations

The IBM 1401 was above all a data processing machine. Much of its work consisted of reading records from cards or tape, performing modest calculations on them, and printing or punching the results, so its input/output devices mattered as much as its processor.

IBM 1401’s Input/Output Devices

The IBM 1401 worked with a range of input/output devices for data transfer and communication with operators. Notable devices in a typical installation were:

- Card Reader: The IBM 1402 Card Read-Punch fed punched cards into the computer and punched new ones. Each card carried data encoded as punched holes, which the computer read and processed.

- Printer: The IBM 1403 line printer produced printed reports of the processed data, at up to 600 lines per minute.

- Keyboard (Input Station): The 1401 had no keyboard in its basic configuration; operators worked from the console switches and lights. An optional console inquiry station, the IBM 1407, allowed typed messages to be exchanged with a running program.

How the IBM 1401 Processes Data

The IBM 1401 processed data by executing instructions held in core storage, not in the control circuitry itself. Each instruction began with a one-character operation code and could be followed by a three-character A-address, a three-character B-address, and a one-character modifier. A word mark on the operation code showed where each instruction began. The processor alternated between an instruction phase, in which it read one instruction into its operation and address registers, and an execution phase, in which it carried out the operation on the addressed fields.
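
The role of the word mark in instruction fetch can be shown with a small model. This is a minimal sketch under stated assumptions: storage is represented as a Python list of `(character, word_mark)` pairs, and the helper names `make_storage` and `fetch_instruction` are hypothetical. It models only how instruction boundaries are found, not the timing or registers of the real machine.

```python
from typing import List, Tuple

Cell = Tuple[str, bool]  # (character, word_mark)


def make_storage(instructions: List[str]) -> List[Cell]:
    """Lay instructions end to end, with a word mark on each op code."""
    storage: List[Cell] = []
    for text in instructions:
        for offset, char in enumerate(text):
            storage.append((char, offset == 0))
    return storage


def fetch_instruction(storage: List[Cell], address: int) -> Tuple[str, int]:
    """Read one instruction starting at address.

    Characters are collected until the next position that carries a
    word mark, which is taken to be the following op code. Returns the
    instruction text and the address of the next instruction. In this
    sketch the end of the list also ends the instruction.
    """
    char, word_mark = storage[address]
    if not word_mark:
        raise ValueError("the op code position must carry a word mark")
    chars = [char]
    address += 1
    while address < len(storage) and not storage[address][1]:
        chars.append(storage[address][0])
        address += 1
    return "".join(chars), address


if __name__ == "__main__":
    program = make_storage(["M080200", "A100200", "."])
    position = 0
    while position < len(program):
        instruction, position = fetch_instruction(program, position)
        print(instruction)
```

Because the boundary is marked in storage instead of being fixed by the instruction format, instructions of different lengths can sit side by side without padding.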

Role of the Card Reader in Data Processing

The card reader played a vital role in the data processing capabilities of the IBM 1401. Punch cards were used as a medium of data transfer, and the card reader was responsible for reading and interpreting the data stored on the cards. The punched cards contained data in the form of holes, which the card reader converted into electrical signals that the computer could understand. This enabled the IBM 1401 to process a wide range of data, from simple arithmetic operations to complex calculations and data analysis.

The 1402 sensed holes with wire brushes: where a hole was punched, a brush made electrical contact through the card. Each of the 80 columns had 12 punch positions, and the combination of holes in a column encoded one character in the Hollerith card code, rather than a string of independent binary digits. The 1402 read up to 800 cards per minute, a high rate for a business computer of the early 1960s.

The card reader format was standardized, with each card containing 80 columns of data. The cards were punched with holes in specific locations, which corresponded to specific data values or instructions. The computer read the data from the cards and processed it accordingly, making the card reader an essential component of the IBM 1401’s data processing capabilities.
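
How punched holes map to characters can be sketched in a few lines. The example below assumes the conventional Hollerith layout, in which each column has rows 12, 11, and 0 through 9, and it covers only blanks, digits, letters, and three common symbols. The function names `decode_column` and `decode_card` are hypothetical, and the full card code includes further special characters that are omitted here.

```python
from typing import Iterable, List

# Letters formed by a zone punch (row 12 or 11) plus a digit punch 1-9.
ZONE_LETTERS = {12: "ABCDEFGHI", 11: "JKLMNOPQR"}
# Letters formed by a zero punch plus a digit punch 2-9.
ZERO_ZONE_LETTERS = "STUVWXYZ"


def decode_column(punches: Iterable[int]) -> str:
    """Decode the set of punched rows in one card column to a character."""
    rows = set(punches)
    if not rows:
        return " "  # an unpunched column is a blank
    if len(rows) == 1:
        (row,) = rows
        if 0 <= row <= 9:
            return str(row)
        if row == 12:
            return "&"
        if row == 11:
            return "-"
    if len(rows) == 2:
        zone = next((z for z in (12, 11, 0) if z in rows), None)
        if zone is not None:
            (digit,) = rows - {zone}
            if zone in ZONE_LETTERS and 1 <= digit <= 9:
                return ZONE_LETTERS[zone][digit - 1]
            if zone == 0 and 2 <= digit <= 9:
                return ZERO_ZONE_LETTERS[digit - 2]
            if zone == 0 and digit == 1:
                return "/"
    raise ValueError(f"punch combination not covered by this sketch: {sorted(rows)}")


def decode_card(columns: List[Iterable[int]]) -> str:
    """Decode a sequence of columns, left to right."""
    return "".join(decode_column(column) for column in columns)


if __name__ == "__main__":
    card = [{12, 9}, {12, 2}, {11, 4}, set(), {1}, {4}, {0}, {1}]
    print(decode_card(card))
```

Note that a zero punch plays two roles: alone it is the digit 0, and combined with a digit punch it acts as a zone.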

Memory and Storage

The IBM 1401’s memory and storage were crucial parts of its architecture, holding data both temporarily during processing and for the long term. The hierarchy comprised core memory, disk storage, and tape storage, which worked together to support efficient data processing.

Memory Hierarchy

The IBM 1401 effectively had a three-level storage hierarchy: core memory, disk storage, and tape storage. Core memory was the fastest, followed by disk and then tape, which were slower but had much higher capacities. The processor’s registers were internal working registers rather than a separate level that programs could use freely.

- Registers: The IBM 1401 had no general-purpose registers. Its internal registers held the address of the next instruction, the A and B operand addresses, and the current operation code. Three index registers were available as an optional feature and occupied fixed locations in core storage.

- Core Memory: The IBM 1401 used magnetic core memory, which stored each bit in a small magnetic ring. Capacity was counted in characters, not words, and ranged from 1,400 to 16,000 positions. A field was addressed by its low-order (rightmost) position and extended toward lower addresses until a position carrying a word mark.
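
Variable-length fields delimited by word marks can be modeled as follows. This is a simplified sketch: core storage is assumed to be a Python list of `(character, word_mark)` pairs, and `store_field` and `read_field` are hypothetical helpers, not 1401 instructions. A field is named by the address of its rightmost character and read toward lower addresses until a word mark is found.

```python
from typing import List, Tuple

Cell = Tuple[str, bool]  # (character, word_mark)


def store_field(storage: List[Cell], low_order_address: int, text: str) -> None:
    """Write text so that its last character lands at low_order_address.

    The word mark is set on the first (high-order) character only.
    """
    start = low_order_address - len(text) + 1
    if not text or start < 0 or low_order_address >= len(storage):
        raise ValueError("field does not fit in storage")
    for offset, char in enumerate(text):
        storage[start + offset] = (char, offset == 0)


def read_field(storage: List[Cell], low_order_address: int) -> str:
    """Read a field from its low-order position back to its word mark."""
    chars = []
    address = low_order_address
    while True:
        if address < 0:
            raise ValueError("no word mark found before the start of storage")
        char, word_mark = storage[address]
        chars.append(char)
        if word_mark:
            break
        address -= 1
    return "".join(reversed(chars))


if __name__ == "__main__":
    core: List[Cell] = [(" ", False)] * 20
    store_field(core, 9, "01401")
    store_field(core, 14, "42")
    print(read_field(core, 9), read_field(core, 14))
```

The same storage can therefore hold a two-digit count next to a twelve-digit account number, each taking only as many positions as it needs.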

Data Transfer Mechanisms

The IBM 1401 moved data between core memory and its devices directly, without a separate buffer store in the basic machine. Unit-record devices used fixed areas of core storage, while tape and disk instructions named the core address to transfer to or from.

- Fixed I/O Areas: Card input arrived in positions 001 to 080, card output was punched from positions 101 to 180, and the printer took its line from the print area beginning at position 201.

- Tape Transfer: Tape instructions read or wrote one variable-length record at a time, starting at a core address given in the instruction. Tape was sequential and comparatively slow to search, but inexpensive for large files and long-term storage.

- Disk Transfer: Disk storage allowed a record to be reached directly by its address, which made access to an individual record much faster than searching a tape.

Memory and Storage Management

The IBM 1401 had no hardware memory management and normally ran one program at a time, so managing storage was largely the programmer’s job, with help from the assembler.

- Memory Allocation: The programmer, or the assembler on the programmer’s behalf, assigned fixed core addresses to instructions, constants, and work areas. Nothing was allocated dynamically at run time.

- Core Memory Management: Programs typically began by clearing storage and setting word marks to define their fields. With as few as 1,400 positions available, work areas were often reused to avoid wasting memory.

Data Integrity and Error Handling

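The IBM 1401 relied mainly on checking circuits to protect data integrity.

- Error Detection: Every character in core storage carried a parity check bit, and characters were checked for validity as they moved through the processor and its input/output devices.

- Error Correction: The hardware did not correct errors automatically. A detected error normally stopped the machine or set an indicator, and recovery, such as rereading a tape record, was left to the program or the operator.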

Together, these mechanisms formed the core of the IBM 1401’s storage system, giving programmers a workable means of managing data on a machine with very limited memory.
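
The parity check mentioned above can be sketched briefly. The example assumes odd parity computed over seven bits per character (six code bits plus the word mark), with one extra check bit; treat the exact bit layout as an assumption made for illustration. The function names `check_bit` and `parity_ok` are hypothetical.

```python
def count_ones(value: int) -> int:
    """Count the 1 bits in the low seven bits of value."""
    return bin(value & 0x7F).count("1")


def check_bit(data_bits: int) -> int:
    """Return the check bit that makes the total number of 1 bits odd."""
    return 0 if count_ones(data_bits) % 2 == 1 else 1


def parity_ok(data_bits: int, stored_check_bit: int) -> bool:
    """True when data bits plus check bit contain an odd number of 1s."""
    return (count_ones(data_bits) + stored_check_bit) % 2 == 1


if __name__ == "__main__":
    original = 0b0000011
    c = check_bit(original)
    damaged = original ^ 0b0000100  # flip a single bit
    print(parity_ok(original, c), parity_ok(damaged, c))
```

A single parity bit detects any one-bit error in a character but cannot say which bit changed, which is why detection on its own does not allow automatic correction.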

Programming Languages and Development Tools

The IBM 1401, although a small machine, was supported by a useful set of programming languages and development tools. Most programs were written in a symbolic assembly language, first the Symbolic Programming System (SPS) and later Autocoder, which an assembler translated into the machine language that the processor executed directly. This level of control made it a natural choice on a machine with so little memory.

Assembly Language

Assembly language was the primary programming language for the IBM 1401. It consisted of mnemonic instructions, each standing for one machine operation, with symbolic names in place of numeric addresses. An assembler translated these statements into machine code. On the 1401 that machine code was itself made up of ordinary characters: an operation code character followed by decimal address digits. Because each statement corresponded so closely to what the machine did, assembly language gave programmers fine-grained control over the computer’s operations.

- Assembly language was used for most applications, chiefly business data processing such as payroll, billing, and inventory.

- Programmers could use assembly language to optimize their code for speed and size, since they controlled exactly which instructions were used and where every field sat in core storage.
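
The translation from mnemonics to machine language can be illustrated with a toy assembler. This is a minimal sketch, not a reimplementation of SPS or Autocoder: it accepts numeric addresses only, limits them to 000 through 999 because higher addresses use a character encoding not modeled here, and knows a small subset of operations. The mnemonic-to-op-code table reflects commonly published 1401 op codes and should be checked against a reference manual before being relied on; the function name `assemble_line` is hypothetical.

```python
# A small, illustrative subset of mnemonics and their op code characters.
OP_CODES = {
    "R": "1",    # read a card
    "W": "2",    # write (print) a line
    "P": "4",    # punch a card
    "A": "A",    # add
    "S": "S",    # subtract
    "C": "C",    # compare
    "B": "B",    # branch
    "MCW": "M",  # move characters to word mark
    "SW": ",",   # set word mark
    "H": ".",    # halt
}


def assemble_line(line: str) -> str:
    """Translate 'MNEMONIC [A-address [B-address]]' into instruction text."""
    parts = line.split()
    if not parts:
        raise ValueError("empty line")
    mnemonic, operands = parts[0].upper(), parts[1:]
    if mnemonic not in OP_CODES:
        raise ValueError(f"unknown mnemonic: {mnemonic}")
    if len(operands) > 2:
        raise ValueError("at most two addresses are supported in this sketch")

    encoded = [OP_CODES[mnemonic]]
    for operand in operands:
        address = int(operand)
        if not 0 <= address <= 999:
            raise ValueError("this sketch handles addresses 000-999 only")
        encoded.append(f"{address:03d}")  # three decimal digits per address
    return "".join(encoded)


if __name__ == "__main__":
    for source in ["R", "MCW 080 280", "W", "B 333", "H"]:
        print(source.ljust(12), assemble_line(source))
```

The output is plain text, which reflects the point made above: 1401 machine language was built from the same characters as the data it processed.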

Early High-Level Languages

In addition to assembly language, several high-level programming languages were made available on the IBM 1401. These languages let programmers work at a higher level of abstraction than assembly language. Two notable examples were Fortran and COBOL; IBM also offered the Report Program Generator (RPG) for producing reports.

- Fortran was a high-level language developed for scientific and engineering applications, particularly for solving numerical problems.

- COBOL was a business-oriented language that focused on data processing and report generation, making it a popular choice for business applications.

Development Tools

In addition to programming languages, the IBM 1401 was supported by development tools, including compilers, assemblers, and debugging aids, which helped programmers write, test, and maintain their code.

| Development Tool | Description |
|---|---|
| Compiler | Translated high-level language code into machine code that the processor could execute. |
| Assembler | Translated assembly language code into machine code that the processor could execute. |
| Debugging aids | Helped programmers find errors, chiefly through printed dumps of core storage and the console’s lights and switches. |

Debugging Procedures and Techniques

Debugging was an essential part of programming the IBM 1401. With no interactive debugger, programmers relied on printed output, the operator console, and storage dumps to identify and fix errors in their code.

“Everyone knows that debugging is twice as hard as writing a program in the first place. So if you’re as clever as you can be when you write it, how will you ever debug it?”

– Brian Kernighan

- Printed output was used to trace a program’s execution, for example by printing intermediate field values at chosen points.

- The console was used to stop the machine, step through instructions one at a time, and read register and storage contents from its lights.

- Storage dumps were used to inspect the program’s memory state, showing every character in core storage along with its word marks.

Legacy and Impact

The IBM 1401, announced in 1959, remained in use well into the 1970s thanks to its robust design, relative ease of use, and affordability. One of the key factors in its popularity and longevity was its ability to handle a wide range of business tasks, from simple calculations to large file-processing jobs, in a package that was compact and inexpensive by the standards of its day.

Contributing Factors to Popularity and Longevity

The IBM 1401’s popularity and longevity can be attributed to several factors, including its modest size and power requirements, ease of use, and affordability.

- Compact Size and Low Power Consumption: As a transistorized machine, the IBM 1401 needed far less floor space, power, and cooling than vacuum-tube computers. That made it practical for medium-sized businesses, government agencies, and educational institutions that could not accommodate a larger system.

- Ease of Use and Affordability: The IBM 1401 rented for about $2,500 a month and up, far less than large computers of the period. Its decimal, character-based design was also familiar to staff who had worked with punched-card equipment, which eased the move to stored-program computing.

Impact on Later Computer Systems and Programming Languages

The IBM 1401 had a lasting effect on later computer systems. So many organizations depended on 1401 programs that IBM offered 1401 compatibility on several models of its System/360 line, which allowed existing programs to keep running on the new hardware. The IBM 1401 also helped spread high-level languages such as COBOL and FORTRAN, which were not designed for it but reached many business users through its compilers.

Examples of Influence on Subsequent Computers

The IBM 1401’s influence on subsequent computers can be seen in several areas, including its use of peripheral I/O devices, its support for multiple programming languages, and its data processing capacity.

- Peripheral I/O Devices: The IBM 1401’s peripherals, such as magnetic tape drives, the card read-punch, and the line printer, set a pattern for later business systems. Some, notably the 1403 printer, went on to be used with later IBM computers.

- Programming Languages: The IBM 1401’s support for multiple languages, including COBOL and FORTRAN, helped make high-level languages an expected part of a commercial computer system.

- Ability to Perform Complex Calculations: The IBM 1401 processed business files far faster than the punched-card accounting machines it replaced, which made it attractive for large-scale data processing tasks.

Legacy and Continued Use

Although IBM withdrew the 1401 from marketing in the early 1970s, it continued to be used in industries including finance, government, and education. Its legacy can still be seen in modern computer architectures, programming languages, and applications, making it a significant milestone in the development of computer technology.

Conclusion

As we conclude our journey through the world of IBM 1401 computer machine language, it is clear that this system played a significant role in shaping the course of computing history. Its impact can still be felt today, and its legacy continues to inspire new generations of developers and researchers. The IBM 1401 may be a relic of the past, but its legacy remains very much alive, and its influence can be seen in the machines we use every day.

Key Questions Answered: IBM 1401 Computer Machine Language

What was the primary function of the IBM 1401 computer?

The primary function of the IBM 1401 computer was to process data, including executing instructions and transferring data to and from memory and storage devices.

How did the IBM 1401 improve upon earlier computer systems?

The IBM 1401 improved upon earlier computer systems with its transistorized design, magnetic core storage, and comparatively low cost, making stored-program computing accessible to a wider audience.

What programming languages were available for the IBM 1401 computer?

Assembly languages (SPS and Autocoder) and early high-level languages such as Fortran, COBOL, and RPG were available for the IBM 1401 computer.