A senior machine learning engineer works at the top of the machine learning engineering track, with the skills and expertise to develop and deploy complex machine learning models that support business goals. In a senior role, they are also responsible for leading cross-functional teams that build and implement machine learning solutions to meet business needs.

With a strong background in statistics, computer science, and software engineering, senior machine learning engineers design, develop, and deploy machine learning models that can predict customer behavior, detect anomalies, and support data-driven decisions.

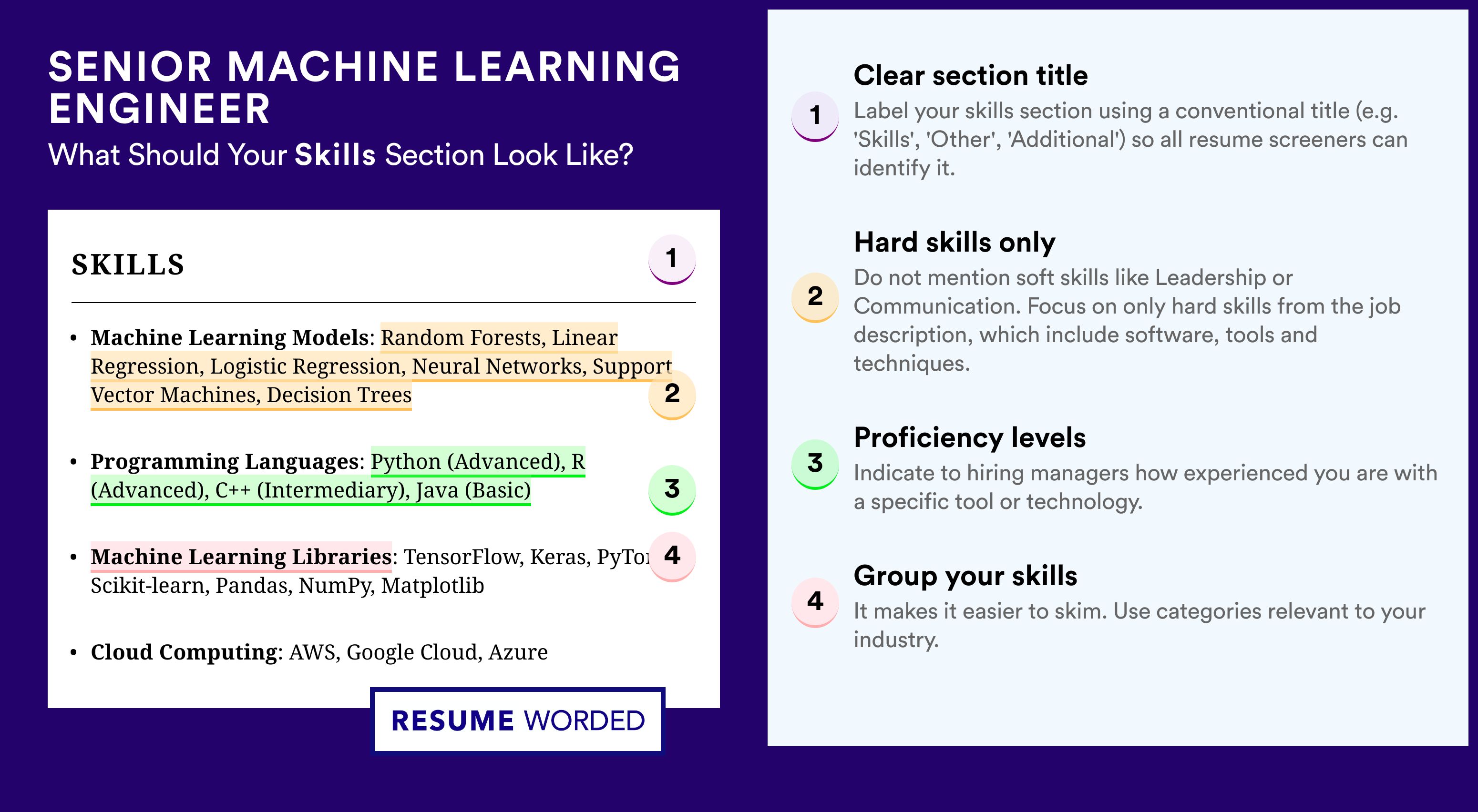

Key Technical Skills and Competencies

A senior machine learning engineer’s toolkit is a crucial component of their success in the field. This section focuses on the must-have programming languages and tools, as well as the role of deep learning frameworks in a senior machine learning engineer’s work.

A senior machine learning engineer should be proficient in programming languages that are widely used in the data science community. These languages include:

- Python: Python is a popular choice among data scientists and machine learning engineers due to its simplicity and extensive libraries, such as NumPy, pandas, and scikit-learn.

- R: R is another widely used programming language in data science, especially for statistical modeling and data visualization.

- Java: Java is a versatile language used in various applications, including machine learning and data science.

- Haskell: Haskell is a functional programming language. It is far less common in machine learning and data science than Python or R, but it can be useful for engineers who work in functional codebases.

Deep learning frameworks play a significant role in a senior machine learning engineer’s work, as they provide pre-built components for defining neural networks, computing gradients automatically, and training models on hardware accelerators such as GPUs. Some popular deep learning frameworks include:

- TensorFlow: TensorFlow is an open-source framework developed by Google for large-scale machine learning and neural networks.

- PyTorch: PyTorch is an open-source framework originally developed by Facebook (now Meta), known for dynamic computation graphs and rapid prototyping.

- Keras: Keras is an open-source library for deep learning that provides an easy-to-use interface for building and training neural networks.

- Caffe: Caffe is an open-source framework for deep learning and computer vision.

Some of the must-have tools for a senior machine learning engineer’s toolkit include:

- Jupyter Notebook: Jupyter Notebook is an interactive web-based interface for working with data and code, allowing for rapid prototyping and experimentation.

- Matplotlib and Seaborn: Matplotlib and Seaborn are popular data visualization libraries used for creating informative and attractive visualizations.

- Tableau: Tableau is a data visualization tool used for creating interactive and dynamic visualizations.

- Git: Git is a version control system used for tracking changes and collaborating on projects.

A senior machine learning engineer should also be familiar with cloud platforms and distributed computing frameworks, such as:

- Amazon Web Services (AWS): AWS is a cloud platform that provides a wide range of services for machine learning, data science, and AI.

- Microsoft Azure: Microsoft Azure is a cloud platform that provides a wide range of services for machine learning, data science, and AI.

- Apache Spark: Apache Spark is a distributed computing framework used for large-scale data processing.

- Hadoop: Hadoop is a distributed computing framework used for large-scale data processing.

Collaboration and Communication in a Cross-Functional Team

As a senior machine learning engineer, effective collaboration and communication with non-technical stakeholders, data scientists, product managers, and software engineers are crucial for the success of a project. In this role, you will be expected to facilitate data-driven decision-making and ensure that complex machine learning concepts are communicated clearly to all stakeholders.

Communicating Complex Machine Learning Concepts to Non-Technical Stakeholders

Communicating complex technical concepts to non-technical stakeholders can be challenging, but a few strategies make it easier:

- Use simple language: Avoid using technical terms or jargon that may be unfamiliar to non-technical stakeholders.

- Use visual aids: Use diagrams, flowcharts, and infographics to help illustrate complex concepts.

- Practice active listening: Ask questions to ensure that you understand the needs and concerns of non-technical stakeholders.

Collaborating with Data Scientists, Product Managers, and Software Engineers

Collaboration with data scientists, product managers, and software engineers is essential for the successful implementation of machine learning projects. Data scientists can provide valuable insights into the data and help to identify trends and patterns. Product managers can provide valuable input on the business requirements and ensure that the project is aligned with the company’s goals. Software engineers can provide valuable input on the technical feasibility of the project and ensure that the solution is scalable and maintainable.

- Collaborate with data scientists: Work closely with data scientists to ensure that the project is grounded in data-driven insights.

- Collaborate with product managers: Work closely with product managers to ensure that the project is aligned with business requirements.

- Collaborate with software engineers: Work closely with software engineers to ensure that the solution is scalable and maintainable.

Facilitating Data-Driven Decision-Making

Facilitating data-driven decision-making is crucial for the success of a machine learning project. As a senior machine learning engineer, you will be expected to provide insights and recommendations that are backed by data. This involves identifying key performance indicators (KPIs) and using data to track progress and identify areas for improvement.

- Identify KPIs: Identify key performance indicators (KPIs) that will measure the success of the project.

- Use data to track progress: Use data to track progress and identify areas for improvement.

- Provide actionable insights: Provide actionable insights and recommendations that will inform data-driven decision-making.

Model Deployment and Maintenance

Model deployment and maintenance are crucial aspects of machine learning, ensuring that models are delivered to production and perform optimally over time. A well-maintained model not only improves the user experience but also enhances the overall efficiency and reliability of an application.

Steps Involved in Deploying a Machine Learning Model to Production

Deploying a machine learning model to production involves several key steps, including model serialization, model serving, model monitoring, and model retraining.

- Model Serialization: Convert the machine learning model into a format that can be easily stored and loaded, such as a file or a database entry.

- Model Serving: Implement a robust and scalable infrastructure to serve the deployed model, receiving new data and making predictions.

- Model Monitoring: Continuously evaluate the model’s performance by tracking metrics such as accuracy, precision, and recall, to identify any potential issues.

- Model Retraining: Regularly update the model with new data to adapt to changing environments, ensuring it remains accurate and effective.
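
The first three steps above can be sketched in a few lines. This is a minimal illustration rather than a production setup: `LinearClassifier`, its weights, and the sample requests are hypothetical, JSON stands in for whatever serialization format a real system would use, and the "serving" step is just a reloaded object answering predictions.

```python
import json


class LinearClassifier:
    """Hypothetical model used only for illustration."""

    def __init__(self, weights, bias):
        self.weights = list(weights)
        self.bias = bias

    def predict(self, features):
        score = sum(w * x for w, x in zip(self.weights, features)) + self.bias
        return 1 if score >= 0 else 0

    def to_json(self):
        # Model serialization: turn the parameters into a storable string.
        return json.dumps({"weights": self.weights, "bias": self.bias})

    @classmethod
    def from_json(cls, payload):
        # Model loading: rebuild the model from the stored string.
        data = json.loads(payload)
        return cls(data["weights"], data["bias"])


def precision_recall(y_true, y_pred):
    """Model monitoring: precision and recall for the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Serialize the trained model, reload it as a serving process would,
# then monitor its predictions against labels that arrive later.
trained = LinearClassifier(weights=[1.0, -1.0], bias=0.0)
served = LinearClassifier.from_json(trained.to_json())

requests = [[2.0, 1.0], [0.0, 3.0], [1.0, 0.5], [0.5, 2.0]]
labels = [1, 0, 0, 0]
predictions = [served.predict(x) for x in requests]
precision, recall = precision_recall(labels, predictions)
```

In practice the monitored metrics would be logged over time, and a sustained drop would be one signal to trigger the retraining step.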

Strategies for Model Maintenance, Update, and Monitoring

Effective model maintenance involves continuously evaluating and improving the model to maintain its performance over time. This includes updating the model with new data, adjusting hyperparameters, and using techniques such as ensemble methods and transfer learning to improve its accuracy.

- Regular Data Updates: Continuously collect and integrate new data to adapt to changing environments and maintain the model’s accuracy.

- Hyperparameter Tuning: Regularly adjust the model’s hyperparameters to optimize its performance and address any issues.

- Ensemble Methods: Combine multiple models with different strengths to improve overall performance and accuracy.

- Transfer Learning: Leverage pre-trained models on related tasks to improve the model’s performance and adapt to new data.
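
As one concrete example of the ensemble idea, the sketch below combines three models by majority vote. The three base models are hypothetical threshold rules chosen only to keep the example short; any set of classifiers with the same call signature could be substituted.

```python
from collections import Counter


# Three hypothetical base models, each a simple rule on the input features.
def model_a(x):
    return 1 if x[0] > 0.5 else 0


def model_b(x):
    return 1 if x[1] > 0.5 else 0


def model_c(x):
    return 1 if x[0] + x[1] > 1.0 else 0


def majority_vote(models, x):
    """Return the class predicted by most of the models."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]


ensemble = [model_a, model_b, model_c]
vote_high_first = majority_vote(ensemble, [0.9, 0.2])   # a and c vote 1
vote_low_both = majority_vote(ensemble, [0.1, 0.2])     # all vote 0
```

Using an odd number of binary classifiers avoids ties, which is why three models are used here.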

The Importance of Data Drift and Concept Drift in Model Performance

Data drift and concept drift are two critical aspects that can significantly impact a model’s performance over time. Data drift refers to changes in the distribution of the input data, while concept drift refers to changes in the underlying relationship between the inputs and the target the model predicts.

| Term | Description |
|---|---|
| Data Drift | Changes in the distribution of the data, such as shifts in mean or variance. |
| Concept Drift | Changes in the underlying relationships between variables, often due to changes in the environment or system. |

Data drift and concept drift can be addressed through techniques such as online learning, where the model adapts to new data in real-time, and through regular evaluation of the model’s performance against new data to detect any changes.
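
The regular evaluation described above can be sketched with two simple checks. Both are assumptions made for illustration, not standard named procedures: data drift is flagged when the mean of a recent feature window sits far from the reference mean (measured in standard errors), and concept drift is flagged when accuracy on recently labeled data falls well below a baseline. The thresholds are arbitrary placeholders.

```python
import statistics


def mean_shift_z(reference, recent):
    """Z-score of the recent window's mean relative to the reference data."""
    ref_mean = statistics.fmean(reference)
    ref_std = statistics.stdev(reference)
    standard_error = ref_std / len(recent) ** 0.5
    return (statistics.fmean(recent) - ref_mean) / standard_error


def data_drift_detected(reference, recent, z_threshold=3.0):
    # Flags a shift in the input distribution (here, only its mean).
    return abs(mean_shift_z(reference, recent)) > z_threshold


def concept_drift_detected(baseline_accuracy, y_true, y_pred, tolerance=0.1):
    # Flags a drop in accuracy, which suggests the input-target
    # relationship may have changed.
    recent_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    return baseline_accuracy - recent_accuracy > tolerance


reference_window = [10, 11, 9, 10, 12, 8, 10, 11, 9, 10]
stable_window = [10, 9, 11, 10]
shifted_window = [14, 15, 13, 14]

stable_flag = data_drift_detected(reference_window, stable_window)
shifted_flag = data_drift_detected(reference_window, shifted_window)
accuracy_flag = concept_drift_detected(0.9, [1, 0, 1, 1, 0], [0, 0, 0, 1, 1])
```

A mean-only check misses changes in variance or shape, so a real monitor would usually compare full distributions per feature.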

Both forms of drift are major concerns in model maintenance because they can significantly degrade a model’s accuracy over time. Regular evaluation and adaptation to new data are essential to keep the model effective and preserve user trust.

Ethical Considerations and Responsible AI Development

In the dynamic realm of machine learning, the creation of AI systems has reached an unprecedented level of sophistication. However, this complexity comes with inherent risks and challenges that necessitate a strong focus on responsible AI development. One critical aspect is the need to consider the ethical implications of AI and machine learning models, ensuring that they are fair, transparent, and accountable.

The importance of fairness, transparency, and accountability in machine learning models lies in their profound impact on the world. AI systems are being increasingly integrated into various sectors, such as healthcare, finance, and education, to name a few. These systems, while capable of immense good, can also perpetuate biases and exacerbate existing social issues if not carefully designed and implemented. Therefore, it is crucial to embed ethical considerations throughout the development process.

Fairness and Bias in AI

Fairness in AI models refers to their ability to treat all individuals with equal consideration, without discriminating based on any characteristic such as race, gender, age, or socioeconomic status. However, the presence of bias in AI systems can lead to discriminatory outcomes, resulting in unfair treatment of certain groups. This can manifest in various ways, including:

- Bias in data collection: AI systems can perpetuate existing biases if the data used to train them is biased or incomplete.

- Cultural insensitivity: AI models can be designed without consideration for local cultural nuances, leading to unintended consequences.

- Exclusionary decision-making: AI systems can exclude certain groups from accessing services or opportunities based on biased decision-making processes.

Ensuring fairness in AI development involves implementing robust strategies for detecting and mitigating bias. This includes using diverse datasets, testing for bias, and continuously monitoring the performance of AI systems in real-world environments.
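
One way to test for bias is to compare how often a model produces a positive outcome for each group. The sketch below computes that gap, often called the demographic parity difference. It is only one of several fairness metrics, and the predictions and group labels are made-up illustrative data.

```python
def selection_rate(predictions):
    """Fraction of positive (1) predictions."""
    return sum(predictions) / len(predictions)


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest selection rate across groups."""
    by_group = {}
    for prediction, group in zip(predictions, groups):
        by_group.setdefault(group, []).append(prediction)
    rates = {group: selection_rate(preds) for group, preds in by_group.items()}
    return max(rates.values()) - min(rates.values()), rates


# Hypothetical model outputs for two groups, "a" and "b".
model_outputs = [1, 1, 1, 0, 1, 0, 0, 0]
group_labels = ["a", "a", "a", "a", "b", "b", "b", "b"]
parity_gap, group_rates = demographic_parity_difference(model_outputs, group_labels)
```

A gap of zero means every group receives positive predictions at the same rate; what size of gap is acceptable depends on the application and is a policy decision rather than a purely technical one.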

Transparency and Explainability in AI

Transparency and explainability in AI models refer to their ability to provide clear and understandable information about their functioning and decision-making processes. This is essential for building trust in AI systems among stakeholders, including users and regulators.

- Model interpretability: AI models should be designed to provide clear and concise explanations of their decisions and outcomes.

- Transparency in decision-making: AI systems should offer transparent information about the factors that influence their decisions.

- Ongoing monitoring and evaluation: AI systems should be continuously monitored and evaluated to ensure they remain fair and transparent over time.

Strategies for ensuring model interpretability and transparency include using techniques such as feature importance, partial dependence plots, and SHAP values.
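
Feature importance can be estimated in several ways. The sketch below uses permutation importance: shuffle one feature column at a time and measure how much accuracy drops. The model, data, and repeat count are hypothetical, and this is a hand-written illustration rather than any particular library's implementation.

```python
import random


def accuracy(model, rows, labels):
    return sum(model(row) == y for row, y in zip(rows, labels)) / len(labels)


def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Mean accuracy drop when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for col in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            shuffled = [row[col] for row in rows]
            rng.shuffle(shuffled)
            # Rebuild each row with the shuffled value in this column only.
            permuted = [row[:col] + [value] + row[col + 1:]
                        for row, value in zip(rows, shuffled)]
            drops.append(baseline - accuracy(model, permuted, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical model that relies only on the first feature.
def threshold_model(row):
    return 1 if row[0] > 0.5 else 0


rows = [[0, 5], [0, 3], [0, 8], [0, 1], [1, 4], [1, 7], [1, 2], [1, 6]]
labels = [0, 0, 0, 0, 1, 1, 1, 1]
importances = permutation_importance(threshold_model, rows, labels)
```

Because the model ignores the second feature, shuffling it never changes a prediction and its importance is exactly zero, while shuffling the first feature breaks the link to the labels.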

Accountability in AI Development

Accountability in AI development refers to the responsibility of individuals and organizations involved in creating and deploying AI systems. This includes ensuring that AI systems are designed and implemented in a way that respects human rights, dignity, and well-being.

- Designing for accountability: AI systems should be designed with accountability in mind, including features that allow for tracking and auditing.

- Cultivating a culture of responsibility: Organizations should foster a culture of responsibility and accountability among their employees and stakeholders.

- Engaging in ongoing dialogue: AI developers should engage in ongoing dialogue with stakeholders, including regulators, users, and experts, to ensure that AI systems meet the highest standards of responsibility and accountability.

Ensuring accountability in AI development involves implementing robust strategies for identifying and mitigating potential risks and negative consequences. This includes using techniques such as risk assessments, impact evaluations, and scenario planning.

Emerging Trends and Future Developments in Machine Learning

Machine learning is an ever-evolving field, with new breakthroughs and innovations emerging regularly. From improving the performance of deep learning models to addressing concerns around bias, fairness, and explainability, the future of machine learning holds great promise. This section delves into the recent advancements, current trends, and future developments that will shape the landscape of machine learning.

Transfer Learning Advancements

Transfer learning is a technique that enables machine learning models to leverage pre-trained models and adapt them to new tasks with minimal training. Recent advancements in transfer learning have led to significant improvements in model performance and efficiency. For instance, the concept of “feature distillation” allows for the transfer of knowledge from a pre-trained model to a new task by optimizing the feature representations. This approach has shown promising results in various applications, including natural language processing, computer vision, and speech recognition.

- Feature distillation allows for the transfer of knowledge from a pre-trained model to a new task by optimizing the feature representations.

- Methodologies like “adapter-based” transfer learning enable the adaptation of pre-trained models to new tasks with minimal training.

- Self-supervised learning techniques have emerged as a powerful tool for pre-training models, enabling them to learn from unlabeled data and adapt to new tasks.
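
The basic transfer learning pattern, reusing a frozen pre-trained model and training only a small new component, can be sketched without any framework. Everything here is an assumption for illustration: `pretrained_features` is a hand-written stand-in for a real pre-trained network, and the new task is a toy four-point classification problem.

```python
import math


def pretrained_features(x):
    # Hypothetical stand-in for a frozen, pre-trained feature extractor.
    return [x[0] * x[1], x[0] + x[1]]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(inputs, labels, learning_rate=0.5, epochs=200):
    """Fit only a logistic-regression head; the extractor is never updated."""
    features = [pretrained_features(x) for x in inputs]
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for f, y in zip(features, labels):
            error = sigmoid(sum(w * v for w, v in zip(weights, f)) + bias) - y
            grad_w = [g + error * v for g, v in zip(grad_w, f)]
            grad_b += error
        # Batch gradient descent step on the head parameters only.
        weights = [w - learning_rate * g / len(features)
                   for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(features)
    return weights, bias


def predict(x, weights, bias):
    f = pretrained_features(x)
    return 1 if sigmoid(sum(w * v for w, v in zip(weights, f)) + bias) >= 0.5 else 0


# New task: label is 1 when both inputs share a sign. A linear model on the
# raw inputs cannot separate this, but the frozen features make it easy.
inputs = [[1, 1], [-1, -1], [1, -1], [-1, 1]]
labels = [1, 1, 0, 0]
head_weights, head_bias = train_head(inputs, labels)
head_predictions = [predict(x, head_weights, head_bias) for x in inputs]
```

Only two weights and a bias are trained here, which mirrors why this approach needs far less data and compute than training a full model from scratch.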

Attention Mechanisms

Attention mechanisms have become a crucial component of machine learning models, allowing them to selectively focus on relevant parts of the input data. Recent advancements in attention mechanisms have led to improved performance in tasks such as natural language processing, video analysis, and question-answering. The use of self-attention mechanisms, multi-head attention, and hierarchical attention has enabled models to capture long-range dependencies and complex patterns in data.

- Self-attention mechanisms enable models to capture long-range dependencies and complex patterns in data.

- Multi-head attention runs several attention operations in parallel, so the model can attend to different aspects of the input at once, improving performance in tasks such as language translation and text summarization.

- Hierarchical attention enables models to capture dependencies between different levels of abstraction, improving performance in tasks such as image analysis and speech recognition.
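
To make self-attention concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is simplified on purpose: real models apply learned query, key, and value projections and use several heads, whereas this version assumes identity projections so that queries, keys, and values are all the raw token vectors.

```python
import math


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens."""
    dim = len(tokens[0])
    outputs, weights = [], []
    for query in tokens:
        # Similarity of this token to every token, scaled by sqrt(dim).
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in tokens]
        attn = softmax(scores)
        weights.append(attn)
        # Output is the attention-weighted average of all token vectors.
        outputs.append([sum(a * value[i] for a, value in zip(attn, tokens))
                        for i in range(dim)])
    return outputs, weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
attended, attention_weights = self_attention(tokens)
```

Each row of `attention_weights` sums to one, and the first token places more weight on the third token (which is identical to it) than on the second, regardless of how far apart they are in the sequence. That is the mechanism behind capturing long-range dependencies.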

Generative Models

Generative models have revolutionized the field of machine learning, enabling the creation of realistic synthetic data, images, and videos. Recent advancements in generative models have led to significant improvements in model performance and diversity. The use of generative adversarial networks (GANs), variational autoencoders (VAEs), and generative adversarial networks based on conditional random fields (CRFs) has enabled the creation of high-quality synthetic data, images, and videos.

- GANs enable the creation of realistic synthetic data, images, and videos, improving performance in tasks such as image and video generation, data augmentation, and anomaly detection.

- VAEs enable the efficient representation of complex data distributions, improving performance in tasks such as image and speech recognition, clustering, and dimensionality reduction.

- CRF-based GANs enable the creation of high-quality synthetic data, images, and videos, improving performance in tasks such as image and video generation, data augmentation, and anomaly detection.

Explainable AI

Explainable AI (XAI) has become a crucial component of machine learning, enabling the understanding of model behavior and decision-making. Recent advancements in XAI have led to significant improvements in model interpretability and accountability. The use of techniques such as SHAP (SHapley Additive exPlanations), LIME (Local Interpretable Model-agnostic Explanations), and saliency maps has enabled the attribution of model predictions to input features, improving transparency and trust.

| Method | Description |
|---|---|
| SHAP | SHAP assigns a value to each feature for a specific prediction, enabling the attribution of model predictions to input features. |
| LIME | LIME provides a model-agnostic explanation of a prediction by generating a simplified interpretable model locally around the prediction. |
| Saliency Maps | Saliency maps highlight the most important features contributing to a model’s prediction, enabling the attribution of model predictions to input features. |
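
The idea behind SHAP can be illustrated by computing exact Shapley values for a tiny model. This sketch is a brute-force calculation over every feature ordering, which is only feasible for a handful of features. It does not use or reproduce the `shap` package, which relies on faster approximations. The model and the all-zero baseline are hypothetical.

```python
from itertools import permutations


def shapley_values(model, instance, baseline):
    """Exact Shapley values by averaging over all feature orderings."""
    n = len(instance)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous_output = model(current)
        for feature in order:
            # Switch this feature from its baseline value to its real value
            # and credit the change in output to that feature.
            current[feature] = instance[feature]
            output = model(current)
            totals[feature] += output - previous_output
            previous_output = output
    return [total / len(orderings) for total in totals]


# Hypothetical model with an interaction between features 0 and 2.
def toy_model(x):
    return 2 * x[0] + 3 * x[1] + x[0] * x[2]


instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
attributions = shapley_values(toy_model, instance, baseline)
```

The attributions always sum to the difference between the model's output for the instance and for the baseline, and the contribution of the interaction term is split evenly between the two features involved.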

Autonomous Decision-Making

Autonomous decision-making has become a crucial component of machine learning, enabling the creation of systems that make decisions without human intervention. Recent advancements in autonomous decision-making have led to significant improvements in model performance and efficiency. The use of techniques such as reinforcement learning, deep Q-learning, and transfer learning has enabled the creation of autonomous systems that adapt to changing environments and make decisions based on contextual information.

As these techniques mature, autonomous decision-making systems are likely to play a growing role in how machine learning is applied.
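
Reinforcement learning can be illustrated with tabular Q-learning, the table-based precursor of deep Q-learning, which replaces the table with a neural network. The environment below is a hypothetical five-state corridor invented for this sketch, and the hyperparameters are arbitrary. The agent explores uniformly at random, which still works because Q-learning learns about the greedy policy regardless of how actions are chosen during training.

```python
import random

N_STATES = 5       # states 0..4; state 4 is the terminal goal
ACTIONS = (0, 1)   # 0 = move left, 1 = move right


def step(state, action):
    """Hypothetical corridor: reward 1.0 only on reaching the last state."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def train(episodes=500, alpha=0.5, gamma=0.9, max_steps=200, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            action = rng.choice(ACTIONS)  # uniform random exploration
            next_state, reward, done = step(state, action)
            # Q-learning target: reward plus discounted best next value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


q_table = train()
# Greedy policy for each non-terminal state.
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
```

After training, the greedy policy moves right in every state, which is the shortest route to the reward. The agent was never told this; it inferred it from the rewards alone.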

Trends Shaping the Future of Machine Learning and AI

The future of machine learning and AI holds great promise, with emerging trends such as edge AI, explainable AI, and autonomous decision-making set to revolutionize the way we interact with technology. The use of techniques such as reinforcement learning, transfer learning, and deep learning will enable the creation of advanced AI systems that adapt to changing environments and make decisions based on contextual information.

- Edge AI will enable the deployment of AI models on edge devices, reducing latency and improving performance in applications such as robotics, autonomous vehicles, and Internet of Things (IoT).

- Explainable AI will enable the understanding of AI model behavior and decision-making, improving transparency and trust in AI systems.

- Autonomous decision-making will enable the creation of systems that adapt to changing environments and make decisions based on contextual information, improving performance in applications such as robotics, autonomous vehicles, and IoT.

Final Wrap-Up

In conclusion, senior machine learning engineers play a critical role in shaping the future of machine learning and AI. By combining technical expertise with business acumen, they drive innovation and growth for their organizations. As the field of machine learning continues to evolve, the demand for senior machine learning engineers is expected to keep growing.

Popular Questions

Q: What skills are required to become a senior machine learning engineer?

A: To become a senior machine learning engineer, one should have a strong foundation in programming languages such as Python, R, and SQL, as well as expertise in machine learning frameworks and libraries like TensorFlow, PyTorch, and scikit-learn. Additionally, they should have a deep understanding of data structures, algorithms, and software engineering principles.

Q: What is the difference between machine learning and deep learning?

A: Machine learning is a broad field of study that involves creating algorithms that can learn from data, while deep learning is a subset of machine learning that uses neural networks with many layers to analyze and interpret data.

Q: Can a senior machine learning engineer work in both research and industry?

A: Yes, senior machine learning engineers can work in both research and industry, depending on their interests and career goals.

Q: What are some of the challenges that senior machine learning engineers face?

A: Senior machine learning engineers often face challenges such as handling large datasets, dealing with data quality issues, and ensuring model interpretability and explainability.

Q: What are some of the emerging trends in machine learning?

A: Some of the emerging trends in machine learning include transfer learning, attention mechanisms, and generative models.