Automatic Differentiation in Machine Learning: A Survey

Automatic differentiation (AD) underpins gradient-based optimization in machine learning. As demand grows for AI solutions in robotics, computer vision, and game development, understanding AD has become a core skill for machine learning engineers. This survey covers why AD matters for gradient-based optimization, the main methods for computing gradients, and the software libraries that implement them.

Gradient-based optimization drives many machine learning algorithms, enabling them to learn from data and improve over time. Computing gradients by hand or by numerical approximation can be expensive and error-prone, however. Automatic differentiation addresses this by computing gradients mechanically, which makes it practical to optimize machine learning models efficiently.

Introduction to Automatic Differentiation in Machine Learning

Automatic differentiation (AD) has become a crucial aspect of modern machine learning (ML) due to its ability to efficiently compute gradients. Machine learning models are trained using optimization algorithms, which rely on computing the gradient of a loss function with respect to model parameters. This computation is fundamental for various ML tasks, including supervised learning, where the goal is to minimize the loss between predicted and true outputs.

Machine learning tasks often involve complex models, and the manual computation of gradients for these models is a time-consuming and error-prone process. To address this issue, various methods have been developed for gradient computation. These methods can be broadly categorized into three main groups: Symbolic Computation, Numerical Computation, and Automatic Differentiation.

Symbolic Computation

Symbolic computation methods derive an exact expression for the derivative by applying the rules of calculus to a mathematical expression. This can be done using computer algebra systems (CAS), such as Mathematica or SymPy. However, the derivative expression can grow rapidly with the size of the original expression, a problem known as expression swell, which makes the approach inefficient for large models and unsuitable for real-time applications.

- Advantages: symbolic computation provides exact derivatives and can be used for complex models

- Disadvantages: computationally expensive and less efficient for large models

Numerical Computation

Numerical computation methods approximate the derivatives using finite differences. This approach works by perturbing the input values slightly and measuring the change in the output. The derivative is then approximated as the ratio of the change in the output to the size of the perturbation.

Numerical computation is a popular choice because it is easy to implement and treats the function as a black box. However, this approach suffers from several limitations. First, it is computationally expensive for large models, since it needs at least one extra function evaluation per input. Second, numerical differentiation is sensitive to the choice of step size: a step that is too large causes truncation error, and one that is too small causes round-off error.

- Advantages: easy to implement and applicable to any function that can be evaluated

- Disadvantages: cost grows with the number of inputs, and accuracy is sensitive to step size
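The step-size sensitivity described above can be seen in a small experiment. The following is a minimal sketch, assuming `sin` as the test function and three hand-picked step sizes; the helper names `forward_difference` and `central_difference` are illustrative, not taken from any library.

```python
import math

def forward_difference(f, x, h):
    """One-sided finite difference: (f(x + h) - f(x)) / h."""
    return (f(x + h) - f(x)) / h

def central_difference(f, x, h):
    """Two-sided finite difference: (f(x + h) - f(x - h)) / (2h)."""
    return (f(x + h) - f(x - h)) / (2 * h)

x0 = 1.0
exact = math.cos(x0)  # d/dx sin(x) = cos(x)

# Absolute error for a range of step sizes: a large step is dominated by
# truncation error, a very small step by floating-point round-off.
errors = {}
for h in (1e-1, 1e-5, 1e-13):
    errors[h] = abs(forward_difference(math.sin, x0, h) - exact)
    print(f"h={h:.0e}  forward-difference error={errors[h]:.2e}")

central_error = abs(central_difference(math.sin, x0, 1e-5) - exact)
print(f"h=1e-05  central-difference error={central_error:.2e}")
```

The middle step size gives the smallest forward-difference error of the three, and the central difference is more accurate again at the same step, at the cost of one more function evaluation.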

Automatic Differentiation

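Automatic differentiation, also known as AD or algorithmic differentiation, computes the derivative of a function directly from the program that evaluates it. It decomposes the program into elementary operations with known derivatives and combines them with the chain rule. The result is exact up to floating-point rounding, unlike numerical differentiation, and AD avoids the expression growth of symbolic differentiation.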

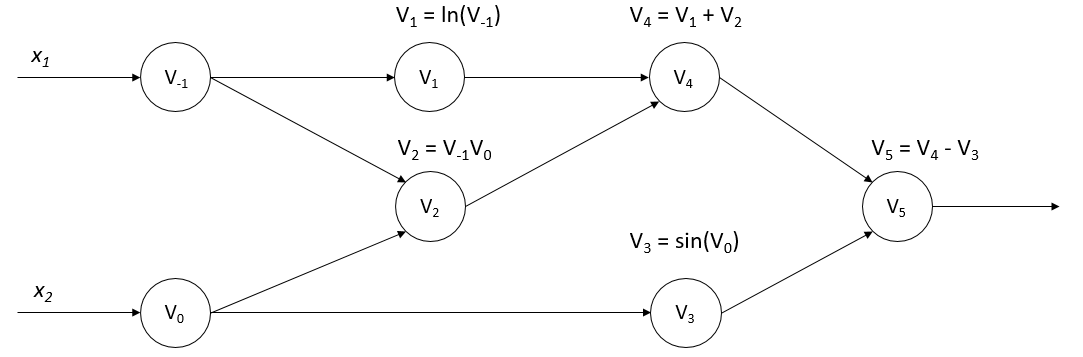

AD works on the computational graph of a model, which records the elementary operations that turn inputs into outputs. The forward pass involves passing input values through the model to compute the output. The backward pass, used in reverse mode, propagates derivatives of the output back towards the inputs. In both cases the algorithm visits each operator in the graph and applies that operator’s known derivative rule, combining the results with the chain rule.

Automatic differentiation has become a popular choice in machine learning due to its speed, accuracy, and ease of implementation. Many deep learning frameworks, including TensorFlow and PyTorch, provide built-in support for automatic differentiation.

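- Advantages: exact up to floating-point rounding, efficient, and easy to use through existing frameworks

- Disadvantages: building an AD system is complex, and reverse mode must store intermediate values, which can require a lot of memory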

AD algorithms can be classified into two main categories: forward-mode AD and reverse-mode AD.

In forward-mode AD, the algorithm traverses the computational graph from input to output, computing the derivative of each intermediate value alongside the value itself.

In reverse-mode AD, the algorithm first evaluates the function, then traverses the computational graph from output to input, accumulating derivatives in reverse order.

Automatic differentiation has numerous applications in machine learning, including neural network training, reinforcement learning, and optimization. It has become an essential tool for modern machine learning and is widely used in various domains, including image recognition, natural language processing, and speech recognition.

Types of Automatic Differentiation

Training and improving machine learning models depends on computing derivatives of functions. Automatic differentiation (AD) techniques let researchers and practitioners compute these derivatives efficiently, without tedious manual calculation or heavy reliance on symbolic differentiation. This section explores the two main types of AD: forward mode and reverse mode. Both compute derivatives of complex functions by applying the chain rule, a fundamental concept in calculus.

Forward Mode Automatic Differentiation

Forward mode AD, also known as tangent mode, computes the derivative of a function while traversing the computational graph in the same direction as the function evaluation. It evaluates the function and its derivative simultaneously, starting from the leaf nodes (inputs) and moving towards the root node (output). Each pass yields the derivative of every output with respect to one chosen input, which suits functions with few inputs; typical uses include sensitivity analysis and Jacobian-vector products.

Forward mode AD has several advantages:

- It is particularly useful when the derivative of the output with respect to a single input is needed, such as in scalar optimization problems.

- It can be implemented efficiently using a small amount of additional memory and computational resources.

- It can handle a wide range of mathematical operations, including vector and matrix operations.

However, forward mode AD also has some limitations:

- It becomes less efficient when dealing with functions that have a large number of inputs or require a large number of function evaluations.

- It is not well-suited for computing the derivative of a function with respect to multiple inputs simultaneously.
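Forward mode is commonly implemented with dual numbers, where each value carries its derivative along with it. The following is a minimal sketch under that assumption; the `Dual` class, the `_lift` helper, and the example function `f` are illustrative names, and only addition, multiplication, and sine are supported.

```python
import math
from dataclasses import dataclass

@dataclass
class Dual:
    """A value paired with its derivative (tangent) w.r.t. one chosen input."""
    value: float
    tangent: float = 0.0

    def __add__(self, other):
        other = _lift(other)
        return Dual(self.value + other.value, self.tangent + other.tangent)

    __radd__ = __add__

    def __mul__(self, other):
        other = _lift(other)
        # Product rule: (uv)' = u'v + uv'
        return Dual(self.value * other.value,
                    self.tangent * other.value + self.value * other.tangent)

    __rmul__ = __mul__

def _lift(x):
    """Treat plain numbers as constants, i.e. duals with zero tangent."""
    return x if isinstance(x, Dual) else Dual(float(x))

def sin(x):
    x = _lift(x)
    return Dual(math.sin(x.value), math.cos(x.value) * x.tangent)

def f(x1, x2):
    return x1 * x2 + sin(x1)

# One forward pass per input: seed the tangent of the input of interest with 1.
dy_dx1 = f(Dual(2.0, 1.0), Dual(3.0, 0.0)).tangent  # x2 + cos(x1)
dy_dx2 = f(Dual(2.0, 0.0), Dual(3.0, 1.0)).tangent  # x1
```

Two passes are needed here because the function has two inputs, which illustrates why the cost of forward mode grows with the number of inputs.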

Reverse Mode Automatic Differentiation

Unlike forward mode AD, reverse mode AD, also known as adjoint mode, computes the derivative of a function by traversing the computational graph in the reverse direction. It first evaluates the function while recording intermediate values, then starts from the root node (output) and moves towards the leaf nodes (inputs). This approach is typically used when the derivative of one output with respect to many inputs is needed, as when training a neural network, where a scalar loss depends on many parameters. Backpropagation is the best-known special case.

Reverse mode AD has several advantages:

- It is particularly useful when the derivative of the output with respect to multiple inputs is needed.

- It can handle complex functions that involve multiple inputs and outputs.

- It can be used in conjunction with forward mode AD to compute the derivative of a function in a more efficient manner.

Reverse mode AD relies on adjoint variables, a technique also known as adjoint sensitivity analysis. Each adjoint holds the derivative of the output with respect to one intermediate value, and propagating adjoints backward through the graph yields the derivative of the output with respect to the inputs.

Adjoint Sensitivity Analysis

Adjoint sensitivity analysis is the technique reverse mode AD uses to compute the derivative of the output with respect to the inputs. Every variable in the computation is paired with an adjoint, and the adjoints are accumulated from the output back to the inputs using the chain rule.

For a function y = f(x), the adjoint of a variable is defined as follows:

$$\bar{x} = \frac{\partial y}{\partial x}$$

In this equation, f represents the function being differentiated, y represents the output of the function, and x represents the input to the function. The adjoint $\bar{x}$ is the derivative of the output with respect to that input; intermediate variables have adjoints defined in the same way.
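To make the role of adjoints concrete, here is a minimal sketch of reverse mode on a small graph. The `Var` class, the `backward` function, and the example expression are illustrative assumptions, not the design of any particular framework; only addition, multiplication, and sine are supported.

```python
import math

class Var:
    """A node in the computational graph, holding a value and an adjoint."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # pairs of (parent node, local partial derivative)
        self.adjoint = 0.0

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

def sin(x):
    return Var(math.sin(x.value), ((x, math.cos(x.value)),))

def backward(output):
    """Propagate adjoints from the output to every node that feeds it."""
    # Post-order traversal; reversing it visits each node after all its consumers.
    order, seen = [], set()

    def visit(node):
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)

    visit(output)
    output.adjoint = 1.0  # dy/dy = 1
    for node in reversed(order):
        for parent, local_grad in node.parents:
            # Chain rule: add this path's contribution to the parent's adjoint.
            parent.adjoint += node.adjoint * local_grad

x1, x2 = Var(2.0), Var(3.0)
y = x1 * x2 + sin(x1)
backward(y)
# x1.adjoint now holds dy/dx1 = x2 + cos(x1); x2.adjoint holds dy/dx2 = x1.
```

A single call to `backward` fills in the adjoints of both inputs, in contrast to forward mode, which would need one pass per input.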

Comparison of Forward and Reverse Mode AD

Forward and reverse mode AD are two distinct approaches to computing the derivative of a function. For a function with n inputs and m outputs, forward mode needs one pass per input to obtain the full Jacobian, while reverse mode needs one pass per output. Forward mode is therefore the better choice when there are few inputs and many outputs, and reverse mode when there are many inputs and few outputs.

The choice of approach depends on the specific problem being solved. Training a machine learning model usually means differentiating a single scalar loss with respect to thousands or millions of parameters, which is why reverse mode dominates in deep learning frameworks. Reverse mode pays for this with memory, since intermediate values from the forward pass must be kept until the backward pass uses them.

AD Implementation Strategies

Auto-differentiation has become a crucial component in modern machine learning frameworks, enabling efficient and accurate computation of gradients. Various software libraries and frameworks have adopted auto-differentiation to simplify the development of deep learning models. This section explores the design of autodiff-enabled deep learning frameworks, discusses software libraries using auto-differentiation, and shares methods to optimize automatic differentiation computation.

Software Libraries Using Auto-differentiation

Several renowned software libraries and frameworks have integrated auto-differentiation to simplify the development of deep learning models. These libraries enable developers to focus on model architecture and loss functions, rather than gradient computation.

- TensorFlow: TensorFlow is a popular open-source machine learning framework developed by Google. It incorporates auto-differentiation to compute gradients efficiently, making it a widely used library in the industry.

- PyTorch: PyTorch is an open-source machine learning framework developed by Facebook. It utilizes auto-differentiation to compute gradients, providing a dynamic computation graph that makes it easier to implement complex models.

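- Theano: Theano is an open-source Python library for defining, optimizing, and evaluating mathematical expressions involving multi-dimensional arrays, developed at the Université de Montréal. It was an influential early deep learning library with built-in auto-differentiation, although its original developers ended major development in 2017.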

Each of these libraries has its own strengths and weaknesses, and developers often choose the one that best suits their project’s requirements.

Design of Autodiff-enabled Deep Learning Frameworks

Autodiff-enabled deep learning frameworks typically employ a combination of techniques to optimize automatic differentiation computation. These include:

- Operator Overloading: Many frameworks overload arithmetic operators such as +, -, \*, and / on their tensor types to enable auto-differentiation. This allows users to define complex mathematical expressions using standard syntax while the framework records each operation.

- Dynamic Computation Graph: Frameworks such as PyTorch, and TensorFlow in its default eager mode, build the computation graph as the program executes, so the graph can change from one run to the next. This makes models with data-dependent control flow easier to express and debug.

- Automatic Graph Construction: Some frameworks automatically construct the computation graph based on the user-defined model architecture and loss function. This simplifies the development process and eliminates the need for manual graph construction.
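The interplay of operator overloading and dynamic graph construction can be sketched in a few lines. This is an illustrative toy, not how any named framework is implemented: the `Traced` class, the tape format, and the `model` function are all assumptions made for the example.

```python
class Traced:
    """Wraps a number and records every arithmetic operation applied to it."""

    def __init__(self, value, tape, name):
        self.value, self.tape, self.name = value, tape, name

    def _record(self, op, other, result):
        other_name = other.name if isinstance(other, Traced) else repr(other)
        name = f"v{len(self.tape)}"
        self.tape.append((name, op, self.name, other_name))
        return Traced(result, self.tape, name)

    def __add__(self, other):
        return self._record("add", other, self.value + _value(other))

    def __mul__(self, other):
        return self._record("mul", other, self.value * _value(other))

def _value(x):
    return x.value if isinstance(x, Traced) else x

def model(x):
    # Ordinary Python control flow: the recorded graph depends on the input.
    y = x * x
    if y.value > 10:
        y = y + 1
    return y

def trace(x0):
    """Run the model on x0 and return its result with the recorded operations."""
    tape = []
    out = model(Traced(x0, tape, "x"))
    return out.value, tape

value_small, tape_small = trace(2.0)   # records one multiplication
value_large, tape_large = trace(4.0)   # records a multiplication and an addition
```

Because the tape is rebuilt on every call, the two inputs produce graphs of different lengths, which is the behavior the term "dynamic computation graph" refers to.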

The design of autodiff-enabled deep learning frameworks aims to strike a balance between ease of use, computational efficiency, and flexibility.

Optimizing Automatic Differentiation Computation

To optimize automatic differentiation computation, developers can employ several techniques:

- Fusion of Operations: Combining multiple operations into a single computation can reduce the number of intermediate results and improve performance.

- In-memory computation: Keeping intermediate results in fast memory close to the processor, rather than repeatedly transferring them between host and accelerator memory, can reduce the time spent moving data.

- Graph Pruning: Removing unnecessary nodes and edges from the computation graph can reduce the computational overhead and improve performance.
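Graph pruning in particular is easy to illustrate. The sketch below assumes a graph stored as a dictionary from node name to the names it reads from; the `prune` function and the node names are hypothetical.

```python
def prune(graph, output):
    """Keep only the nodes that the output depends on.

    `graph` maps each node name to the list of node names it reads from.
    """
    needed, stack = set(), [output]
    while stack:
        node = stack.pop()
        if node not in needed:
            needed.add(node)
            stack.extend(graph[node])
    return {name: deps for name, deps in graph.items() if name in needed}

graph = {
    "x": [],
    "w": [],
    "h": ["x", "w"],
    "debug_norm": ["h"],  # computed, but the loss never reads it
    "loss": ["h"],
}
pruned = prune(graph, "loss")  # "debug_norm" is dropped
```

Nodes that do not feed the output contribute nothing to its gradient, so neither their values nor their derivatives need to be computed.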

These techniques can help optimize automatic differentiation computation, leading to faster model training and inference.

Common Pitfalls When Implementing AD

Developers should be aware of the following common pitfalls when implementing auto-differentiation:

- Incorrect use of operator overloading: Misusing operator overloading can lead to incorrect computation of gradients or crashes.

- Unnecessary computation: Failing to optimize the computation graph or not using fusion of operations can result in inefficient computation of gradients.

- Insufficient error checking: Ignoring error checking can lead to incorrect results or crashes during model training or inference.

By understanding these common pitfalls, developers can avoid potential issues and create robust and efficient auto-differentiation implementations.

Gradient Computation Methods

Gradient computation is a crucial component of machine learning: the gradient gives the direction and rate of steepest increase of the loss, which optimizers use to update model parameters. In this section, we will explore the various methods employed to compute gradients, with a focus on direct differentiation rules, adjoint sensitivity analysis, and adjoint-based gradient computation in reverse mode AD.

Direct Differentiation Rules

Direct differentiation rules are a fundamental concept in automatic differentiation, enabling the computation of gradients through the application of mathematical rules and identities. These rules provide a systematic approach to computing gradients, based on the composition of functions and the properties of derivatives.

$$\frac{\partial f(g(x))}{\partial x} = \frac{\partial f}{\partial g} \cdot \frac{\partial g}{\partial x}$$

This rule illustrates the chain rule for differentiation, where the derivative of a composite function is computed by the product of the derivatives of the individual components.
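As a worked instance of the chain rule, take the assumed example f(u) = sin(u) and g(x) = x², so that the derivative of f(g(x)) is cos(x²) · 2x. The sketch below evaluates that product and checks it against a central finite difference; the function names are illustrative.

```python
import math

def g(x):
    return x * x          # inner function g(x) = x^2

def dg(x):
    return 2 * x          # g'(x)

def f(u):
    return math.sin(u)    # outer function f(u) = sin(u)

def df(u):
    return math.cos(u)    # f'(u)

def composite_derivative(x):
    """Chain rule: d/dx f(g(x)) = f'(g(x)) * g'(x)."""
    return df(g(x)) * dg(x)

x0 = 1.5
analytic = composite_derivative(x0)

# Independent check with a central finite difference.
h = 1e-6
numeric = (f(g(x0 + h)) - f(g(x0 - h))) / (2 * h)
```

The two values agree to within the accuracy of the finite difference, which is the kind of check often used to test a hand-written or automatically generated derivative.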

Adjoint Sensitivity Analysis

Adjoint sensitivity analysis is a mathematical framework for analyzing the sensitivity of a system to changes in its inputs. This approach is particularly useful in the context of automatic differentiation, where it enables the computation of gradients through the identification of adjoint variables.

The adjoint sensitivity analysis is based on the following equation:

$$\frac{\delta F}{\delta u} = \int \frac{\partial F}{\partial u} \, dt$$

where δF/δu represents the variation of the objective function F with respect to the input u, and ∂F/∂u is the derivative of F with respect to u.

Adjoint-Based Gradient Computation

Adjoint-based gradient computation is a method for computing gradients through the use of adjoint variables. This approach is particularly efficient in the context of reverse mode AD, where it enables the computation of gradients by following the computation flow backwards.

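In adjoint-based gradient computation, the gradient of the objective function F with respect to the inputs u = (u_1, ..., u_n) is assembled from the derivatives with respect to the individual inputs:

$$\nabla_u F = \left( \frac{\partial F}{\partial u_1}, \ldots, \frac{\partial F}{\partial u_n} \right)$$

where ∂F/∂u_i represents the derivative of F with respect to the ith input variable u_i. A single backward pass produces all n components.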
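A short worked derivation may help here. It assumes the illustrative objective F = u_1 u_2 + sin(u_1), with intermediate variables v_1 and v_2 introduced only for this example, and writes a bar over a variable for its adjoint, the derivative of F with respect to that variable.

```latex
\begin{aligned}
\text{Forward pass:}\quad & v_1 = u_1 u_2, \qquad v_2 = \sin u_1, \qquad F = v_1 + v_2 \\
\text{Seed:}\quad & \bar{F} = 1 \\
\text{Backward pass:}\quad & \bar{v}_1 = \bar{F}\,\frac{\partial F}{\partial v_1} = 1, \qquad
                             \bar{v}_2 = \bar{F}\,\frac{\partial F}{\partial v_2} = 1 \\
& \bar{u}_1 = \bar{v}_1\,\frac{\partial v_1}{\partial u_1} + \bar{v}_2\,\frac{\partial v_2}{\partial u_1} = u_2 + \cos u_1 \\
& \bar{u}_2 = \bar{v}_1\,\frac{\partial v_1}{\partial u_2} = u_1
\end{aligned}
```

Both components of the gradient come out of one backward sweep, and u_1 receives contributions from the two paths through which it influences F.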

Efficient Gradient Calculation

Efficient gradient calculation is a critical aspect of machine learning, as it affects the convergence speed and accuracy of optimization algorithms. Several methods have been proposed to improve gradient calculation, including:

- Approximate gradient calculation: This approach involves approximating the gradient of the objective function F with respect to the input u by replacing it with a simpler expression, such as a linear or quadratic approximation.

- Gradient compression: This approach involves compressing the gradient update vector to reduce the communication overhead in distributed optimization settings.

- Quantization: This approach involves quantizing the gradient update vector to reduce the memory requirements and improve the stability of optimization algorithms.

These methods can be used in conjunction with adjoint-based gradient computation to improve the efficiency of gradient calculation.
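Two of the ideas above, gradient compression and quantization, can be sketched as follows. Top-k sparsification is used here as one possible compression scheme and uniform quantization as one possible quantizer; both choices, and all function names, are assumptions made for illustration.

```python
def top_k_sparsify(grad, k):
    """Keep the k largest-magnitude entries; return (index, value) pairs."""
    ranked = sorted(range(len(grad)), key=lambda i: abs(grad[i]), reverse=True)
    return [(i, grad[i]) for i in sorted(ranked[:k])]

def quantize(grad, levels=256):
    """Uniformly quantize to integer codes in [0, levels - 1]."""
    lo, hi = min(grad), max(grad)
    scale = (hi - lo) / (levels - 1) or 1.0  # avoid a zero scale for constant input
    codes = [round((g - lo) / scale) for g in grad]
    return codes, lo, scale

def dequantize(codes, lo, scale):
    return [lo + c * scale for c in codes]

grad = [0.02, -1.5, 0.3, 0.0, 0.9, -0.05]

sparse = top_k_sparsify(grad, k=2)            # [(1, -1.5), (4, 0.9)]
codes, lo, scale = quantize(grad, levels=16)  # 4-bit codes
restored = dequantize(codes, lo, scale)
max_error = max(abs(a - b) for a, b in zip(grad, restored))
```

With uniform quantization the reconstruction error of each entry is at most half the quantization step, so the number of levels sets a direct trade-off between message size and gradient accuracy.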

Implementation of Adjoint Algorithms

The implementation of adjoint algorithms is a critical aspect of machine learning, as it affects the accuracy and efficiency of gradient computation. Several computational tools have been developed to implement adjoint algorithms, including:

- TensorFlow: This is an open-source machine learning framework developed by Google. Its gradient API, `tf.GradientTape`, records operations and differentiates them in reverse mode.

- Singa: Apache SINGA is an open-source deep learning framework, now an Apache Software Foundation project, that was initiated by the DB System Group at the National University of Singapore. It includes automatic differentiation for training models.

- Autograd: This is an open-source automatic differentiation library developed at Harvard University by the Harvard Intelligent Probabilistic Systems group. It differentiates ordinary Python and NumPy code in reverse mode.

These computational tools provide a range of features and libraries for implementing adjoint algorithms, including automatic differentiation, gradient computation, and optimization.

Computational Tools for High-Dimensional Data

High-dimensional data poses significant challenges in machine learning due to its complex structure and computational requirements. Specialized libraries and efficient algorithms are essential for handling such data, enabling accurate predictions and robust models. In this section, we explore the computational tools and strategies that facilitate the analysis of high-dimensional data.

Specialized Libraries for Numerical Computation and Optimization

Several libraries have been developed to address the specific needs of high-dimensional data. These libraries provide optimized implementations of numerical computation and optimization algorithms, allowing for efficient execution on parallel architectures. Some notable examples include:

- TensorFlow and PyTorch: Two popular deep learning frameworks that provide an extensive range of numerical computation and optimization tools. Their implementation of automatic differentiation, in particular, enables seamless integration with high-dimensional data.

- Scikit-learn: A widely used library for machine learning that incorporates various optimization algorithms, including stochastic gradient descent and conjugate gradient. Scikit-learn’s optimization tools have been optimized for performance and memory efficiency.

- SciPy: A scientific computing library that offers a range of optimization algorithms, including linear and nonlinear least squares. SciPy’s optimization tools are designed to handle high-dimensional data and are often used in conjunction with other libraries.

Efficient Gradient Approximation Strategies

Given the sensitivity of optimization algorithms to gradients, efficient gradient approximation is crucial in high-dimensional data analysis. Several strategies can be employed to improve gradient approximation:

- Stochastic Gradient Descent (SGD): A popular optimization algorithm that estimates the gradient from a randomly chosen example or small batch. SGD is particularly effective on large, high-dimensional problems because each update is cheap, whatever the size of the dataset.

- Batch Gradient Descent: A classic optimization algorithm that computes the gradient over the full training set at every step. Each step costs more than an SGD step, but the gradient is exact, which can make it a reasonable choice for smaller datasets.

- Quasi-Newton Methods: Quasi-Newton methods, such as the Broyden-Fletcher-Goldfarb-Shanno (BFGS) algorithm, approximate the Hessian matrix from successive gradient evaluations; the limited-memory variant, L-BFGS, keeps only a short history of them. This approach can significantly reduce computational overhead while maintaining convergence speed.

Application of Quasi-Newton Methods

Quasi-Newton methods have been widely adopted in machine learning due to their ability to balance convergence speed and computational overhead. These methods have been particularly effective in high-dimensional data analysis, where the number of model parameters can lead to prohibitive computational costs.

The BFGS algorithm, a quasi-Newton method, is often more practical than Newton’s method in high-dimensional optimization tasks. Newton’s method must form the exact Hessian and solve a linear system with it at every step, whereas BFGS updates an approximation of the Hessian (or its inverse) using only gradient differences between iterates.
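The core quasi-Newton idea, replacing exact curvature with an estimate built from gradient differences, is easiest to see in one dimension, where it reduces to the secant method. The sketch below is that one-dimensional analogue, not BFGS itself; the function name `quasi_newton_1d` and the test objective are assumptions.

```python
def quasi_newton_1d(grad, x0, x1, tol=1e-10, max_iter=50):
    """Minimize a smooth 1-D function given only its gradient.

    Newton's method would step by grad(x) / f''(x). Here f''(x) is replaced by
    a secant estimate built from the two most recent gradient evaluations.
    """
    g0, g1 = grad(x0), grad(x1)
    for _ in range(max_iter):
        if abs(g1) < tol or x1 == x0:
            break
        curvature = (g1 - g0) / (x1 - x0)  # approximates f''(x1)
        x0, g0 = x1, g1
        x1 = x1 - g1 / curvature
        g1 = grad(x1)
    return x1

# f(x) = x**4 / 4 - 2 * x has gradient x**3 - 2, minimized at x = 2 ** (1/3).
x_min = quasi_newton_1d(lambda x: x ** 3 - 2, x0=1.0, x1=2.0)
```

No second derivative is ever evaluated. In several dimensions, BFGS plays the same role by maintaining a matrix that satisfies the analogous secant condition.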

Numerical Libraries for High-Dimensional Data

In addition to the libraries mentioned earlier, there are several other numerical libraries that can handle high-dimensional data:

- NumPy: A fundamental library for numerical computing in Python, providing efficient implementations of array operations and linear algebra functions.

- PyCUDA: A library that provides a Python interface to NVIDIA’s CUDA GPU programming API, enabling parallelization of numerical computations and accelerated performance.

These libraries have been optimized for performance and memory efficiency, making them ideal for high-dimensional data analysis.

Optimization of Automatic Differentiation Computation

Automatic differentiation (AD) is a powerful tool for computing gradients in machine learning, but its computational cost can be significant, especially for high-dimensional or complex models. To mitigate this, researchers and practitioners have developed various strategies for optimizing AD computations, which are discussed below.

Parallelization of AD on Distributed Environments

Parallelizing AD computations on distributed environments can significantly speed up gradient computations. This can be achieved through various strategies:

- Model parallelism: dividing the model into smaller sub-modules and computing their gradients in parallel.

- Data parallelism: dividing the training data into smaller batches and computing their gradients in parallel.

- Distributed memory parallelism: using multiple machines to store and compute gradients in parallel.

- Synchronized parallelism: using synchronization primitives to ensure that all processors finish computing gradients before updating the model.

Each of these strategies has its strengths and weaknesses, and selecting the best approach depends on the specific use case and available computational resources.

Model parallelism, for example, is particularly suitable for large models that cannot fit in memory on a single processor. By dividing the model into smaller sub-modules, each processor can compute the gradients of its local sub-module in parallel, which can significantly speed up the overall computation. However, this approach requires careful synchronization to ensure that the gradients from each sub-module are correctly combined to compute the overall gradient.
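Data parallelism is the simplest of these strategies to simulate. The sketch below assumes a one-parameter least-squares model and runs the "workers" sequentially in a single process; in a real system each shard would be processed on a separate device and the final sum would be a collective communication step. All names are illustrative.

```python
def shard_gradient(w, shard):
    """Sum of per-example gradients of 0.5 * (w * x - y) ** 2 over one shard."""
    return sum((w * x - y) * x for x, y in shard)

def data_parallel_gradient(w, data, num_workers):
    # Each "worker" receives a disjoint slice of the data.
    shards = [data[i::num_workers] for i in range(num_workers)]
    # In a distributed setting these partial sums would be computed in parallel.
    partial_sums = [shard_gradient(w, shard) for shard in shards]
    # Reduction step: combine partial sums and normalize by the total count.
    return sum(partial_sums) / len(data)

data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2), (5.0, 9.8)]
w = 1.5

full = shard_gradient(w, data) / len(data)
parallel = data_parallel_gradient(w, data, num_workers=2)
```

Because the gradient of a sum is the sum of the gradients, the sharded result matches the single-machine result up to floating-point rounding, even when the shards have different sizes.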

Reducing Gradient Computations in Sequential Settings

In many machine learning applications, gradients need to be computed sequentially, either due to memory constraints or computational resource limitations. In such cases, reducing the memory and computation that each gradient requires can be beneficial. Several strategies can be employed to achieve this:

- Checkpointing: storing only selected intermediate values during the forward pass and recomputing the rest during the backward pass, trading extra computation for lower memory use.

- Pipelining: splitting the computation of gradients into smaller, independent tasks that can be executed in parallel.

- Gradient approximation: approximating the gradients using techniques such as stochastic gradient descent (SGD), which only requires computing gradients for a subset of the training data.

Each of these strategies has its own trade-offs and can be suitable depending on the specific use case.

Checkpointing, for example, is particularly effective when memory, rather than computation, is the limiting factor. Reverse mode AD normally keeps every intermediate value from the forward pass; with checkpointing, only some are kept, and each segment between checkpoints is recomputed when the backward pass reaches it.
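The memory trade-off can be demonstrated on a chain of identical scalar layers. This is a minimal sketch under that assumption; the layer function, the fixed checkpoint spacing `every`, and the way stored values are counted are all choices made for the example.

```python
import math

def layer(x):
    return math.tanh(x) + 0.5 * x

def layer_grad(x):
    return 1.0 - math.tanh(x) ** 2 + 0.5

def grad_store_all(x0, depth):
    """Baseline: keep every layer input, then walk back over them."""
    inputs = []
    x = x0
    for _ in range(depth):
        inputs.append(x)
        x = layer(x)
    adjoint = 1.0
    for x_in in reversed(inputs):
        adjoint *= layer_grad(x_in)
    return adjoint, len(inputs)

def grad_checkpointed(x0, depth, every):
    """Keep only every `every`-th input; recompute the rest segment by segment."""
    checkpoints = {}
    x = x0
    for i in range(depth):
        if i % every == 0:
            checkpoints[i] = x
        x = layer(x)
    adjoint, peak_stored = 1.0, len(checkpoints)
    for start in sorted(checkpoints, reverse=True):
        # Recompute the inputs of this segment from its checkpoint.
        segment, x = [], checkpoints[start]
        for _ in range(start, min(start + every, depth)):
            segment.append(x)
            x = layer(x)
        peak_stored = max(peak_stored, len(checkpoints) + len(segment))
        for x_in in reversed(segment):
            adjoint *= layer_grad(x_in)
    return adjoint, peak_stored

baseline_grad, baseline_stored = grad_store_all(0.3, depth=12)
ckpt_grad, ckpt_stored = grad_checkpointed(0.3, depth=12, every=4)
```

Both functions return the same derivative, but the checkpointed version holds at most 7 values at once instead of 12, at the cost of running each layer's forward computation a second time.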

Gradient Approximation Techniques

Gradient approximation techniques are used to approximate the gradients of a model without computing the exact gradients. This can be beneficial when computing gradients is expensive or when the model’s gradients are not well-defined. Several gradient approximation techniques are available:

- Stochastic gradient descent (SGD): approximating gradients by computing the gradient of a single data point instead of the entire training dataset.

- Mini-batch gradient descent: approximating gradients by computing the gradient of a small batch of data points instead of the entire training dataset.

- Gradient quantization: approximating gradients by quantizing the gradient values to fewer bits or integers.

- Monte Carlo gradient approximation: approximating gradients by using random samples of the data or model to estimate the gradient.

Each of these techniques has its strengths and weaknesses, and selecting the best approach depends on the specific use case.

SGD, for example, is particularly effective when the training set is large. The gradient from a single data point is a noisy but unbiased estimate of the full gradient, and it is far cheaper to compute; averaging over a mini-batch reduces the noise at a proportionally higher cost per step.
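The effect of batch size on gradient noise can be checked exactly on a tiny dataset by enumerating every possible mini-batch. The sketch assumes a one-parameter least-squares model and batches drawn without replacement; the data and names are illustrative.

```python
from itertools import combinations
from statistics import mean, pvariance

def example_gradient(w, x, y):
    """Gradient of 0.5 * (w * x - y) ** 2 with respect to w."""
    return (w * x - y) * x

data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2), (5.0, 9.8)]
w = 1.5

per_example = [example_gradient(w, x, y) for x, y in data]
full_gradient = mean(per_example)

def batch_estimates(batch_size):
    """Gradient estimates from every possible mini-batch of the given size."""
    return [mean(batch) for batch in combinations(per_example, batch_size)]

for b in (1, 2, 4):
    estimates = batch_estimates(b)
    print(f"batch size {b}: mean={mean(estimates):.4f}  "
          f"variance={pvariance(estimates):.4f}")
```

For every batch size the estimates average to the full gradient, while their variance shrinks as the batch grows, which is the trade-off between per-step cost and noise that mini-batch methods exploit.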

Mitigating Numerical Instability in Gradient-Based Optimization

Numerical instability is a common issue in gradient-based optimization, where the gradients of the model become too large or too small, leading to divergence or convergence issues. Several techniques can be employed to mitigate numerical instability:

- Gradient clipping: limiting each gradient component to a fixed range, or rescaling the whole gradient when its norm exceeds a threshold, to prevent updates from becoming too large.

- Gradient normalization: normalizing the gradients to a fixed scale or distribution to improve stability.

- Gradient momentum: adding momentum to the gradients to stabilize the optimization process.

Each of these techniques has its strengths and weaknesses, and selecting the best approach depends on the specific use case.

Gradient clipping, for example, can be particularly effective when the model’s gradients are very large or have high variance. By clipping the gradients, the optimization process can become more stable and less susceptible to numerical instability.
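The two common forms of clipping can be written in a few lines. This is a minimal sketch on plain Python lists; the function names and thresholds are assumptions, and deep learning frameworks provide their own equivalents.

```python
import math

def clip_by_value(grad, limit):
    """Clamp each component independently to [-limit, limit]."""
    return [max(-limit, min(limit, g)) for g in grad]

def clip_by_norm(grad, max_norm):
    """Rescale the whole vector if its Euclidean norm exceeds max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= max_norm:
        return list(grad)
    scale = max_norm / norm
    return [g * scale for g in grad]

grad = [3.0, -4.0, 0.0]               # Euclidean norm 5

by_value = clip_by_value(grad, 1.0)   # [1.0, -1.0, 0.0]
by_norm = clip_by_norm(grad, 1.0)     # same direction as grad, norm rescaled to 1
```

Clipping by value can change the direction of the gradient, since components are clamped independently, whereas clipping by norm preserves the direction and only shortens the step.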

Applications of Automatic Differentiation

Automatic differentiation (autodiff) has applications across many fields. It lets researchers and developers compute gradients efficiently, and gradients are the backbone of many machine learning and optimization algorithms.

Vision and Robotics

Autodiff has been instrumental in robotics and computer vision tasks requiring precise gradient computation. For instance, in robotics, autodiff allows for:

- Detailed analysis of movement dynamics by calculating the derivatives of kinematic models, enabling optimal path planning and motion control.

- Precise tracking of visual features, facilitating object recognition and scene understanding.

- Critical analysis of sensor data, leading to better navigation and manipulation in cluttered environments.

- Gradient-based learning of robotic policies through model-predictive control, leading to more efficient and agile motion.

“Autodiff simplifies gradient-based learning for robotics, enabling more accurate and efficient motion control.”

Natural Language Processing (NLP)

Autodiff plays a vital role in NLP by facilitating gradient-based learning and optimization of complex models such as language translation, sentiment analysis, and text classification:

- Autodiff in transformer models like BERT and RoBERTa enables efficient gradient computation during training, significantly improving performance on various NLP benchmarks.

- Using autodiff in masked language modeling, NLP applications can benefit from self-supervised learning, enabling representation learning and pre-training of language models.

- Autodiff allows NLP tasks to scale to large datasets and architectures, making it a crucial component of modern NLP pipelines.

Game Development

Autodiff helps streamline game development by optimizing critical components such as:

- Collision detection and response systems, ensuring smooth gameplay and realistic simulations.

- Physics engines, enabling more accurate and efficient simulations of complex physical phenomena.

- Artificial intelligence (AI) decision-making, facilitating more realistic agent behaviors and game world interactions.

- Autodiff-based level generation techniques, allowing for the creation of more diverse and engaging levels.

Additional Industry Applications

Autodiff has also found applications in various other fields, including:

- Scheduling and resource allocation optimization in supply chain management and logistics.

- Financial modeling and risk analysis, allowing for more accurate and efficient computation of derivatives and sensitivities.

- Molecular dynamics simulation, facilitating more accurate and efficient computation of gradients in force fields.

“Autodiff has become a crucial component of many modern applications, enabling efficient and scalable gradient computation and facilitating breakthroughs in diverse fields.”

Last Word

Automatic differentiation is a fundamental technique that has reshaped how machine learning models are built and trained. By understanding the importance of gradients in optimization, the various methods used for gradient computation, and the software libraries that make it possible, you’ll be well on your way to building more efficient and effective machine learning models. AD is more than a convenience: it is what makes training large models practical.

FAQ Resource

What is Automatic Differentiation?

Automatic Differentiation (AD) is a technique used to compute gradients of functions, enabling machine learning models to learn from data and improve their performance over time.

What are the benefits of AD in machine learning?

AD simplifies the process of computing gradients, making it possible to optimize machine learning models efficiently and effectively.

What are some common applications of AD in machine learning?

AD is commonly used in robotics, computer vision, and game development, among other applications.

What are some common methods used for gradient computation?

Direct differentiation rules and adjoint sensitivity analysis are two common methods used for gradient computation.