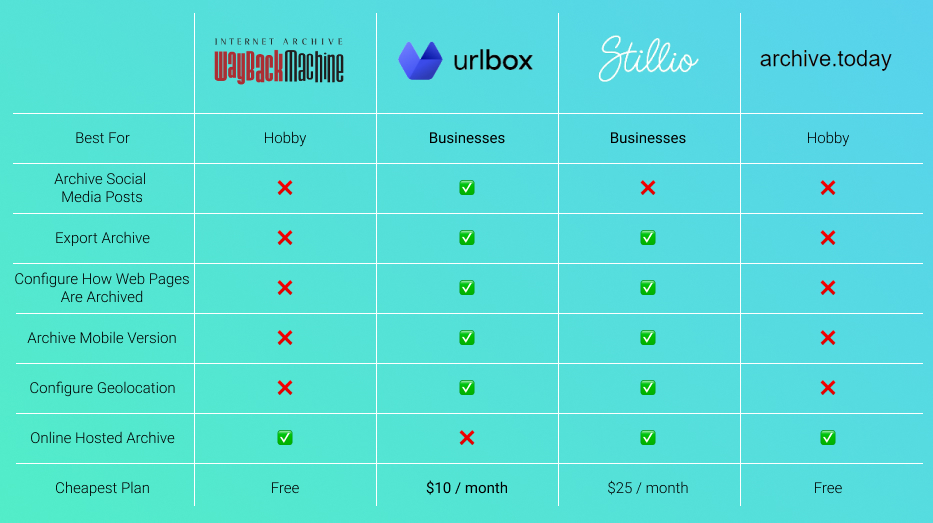

Sites similar to the Wayback Machine are the starting point for this look at web archiving and at why preserving online content matters for future generations. The Wayback Machine, a pioneer in this field, lets users access archived versions of websites, but it’s not the only option. As we explore alternative platforms, we’ll cover the strengths and weaknesses of each and show why web archiving needs more than one solution.

The primary function of the Wayback Machine is to preserve web content over time, creating a digital library of the internet’s history. However, other platforms, such as Archive-It, Perma.cc, and Internet Memory, offer unique features and benefits that set them apart from the Wayback Machine.

Exploring Alternatives to the Wayback Machine

The internet is a vast and ever-changing environment, with new information emerging every day. The Wayback Machine, a pioneering web archiving platform, plays a crucial role in preserving web content over time. Launched in 2001 by the Internet Archive, it has been instrumental in safeguarding the integrity of the internet by archiving snapshots of websites as they evolve.

Key features set the Wayback Machine apart from other web archiving platforms, including its comprehensive coverage of the internet, with over 530 billion web pages archived since its inception. Additionally, its user-friendly interface allows individuals to easily access and explore archived web content from different points in time. This makes it an invaluable resource for researchers, businesses, and individuals alike seeking to understand the historical context of the internet.

Primary Function: Web Archiving

The primary function of the Wayback Machine is to archive and preserve web content over time. This involves periodically crawling the internet to capture snapshots of websites as they exist at a particular moment in time. The archived content is then stored in a vast digital repository, allowing users to access and explore the web as it existed in the past.

- Automated Crawling Process: The Wayback Machine’s automated crawling process enables it to systematically capture and archive web content as it changes over time.

- Periodic Snapshots: The platform creates periodic snapshots of websites, allowing users to access and explore archived content from different points in time.

- Comprehensive Coverage: The Wayback Machine’s comprehensive coverage of the internet ensures that a vast array of websites are archived and preserved for future generations.
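To make "snapshots from different points in time" concrete, here is a minimal Python sketch. It builds a replay URL in the `web.archive.org/web/<timestamp>/<url>` form and reads the closest capture out of a response shaped like the Internet Archive's availability endpoint. The helper names and the sample response are illustrative assumptions, not part of any official client, and the sample data does not describe a real capture.

```python
import json
from urllib.parse import urlencode

WAYBACK_REPLAY = "https://web.archive.org/web"
AVAILABILITY_API = "https://archive.org/wayback/available"


def replay_url(page_url: str, timestamp: str) -> str:
    """Replay URL for the capture nearest to `timestamp` (YYYYMMDDhhmmss or a prefix)."""
    return f"{WAYBACK_REPLAY}/{timestamp}/{page_url}"


def availability_query(page_url: str, timestamp: str) -> str:
    """URL that asks which capture is closest to `timestamp`."""
    return f"{AVAILABILITY_API}?{urlencode({'url': page_url, 'timestamp': timestamp})}"


def closest_snapshot(response_text: str):
    """Return the closest capture's URL from an availability response, or None."""
    data = json.loads(response_text)
    closest = data.get("archived_snapshots", {}).get("closest")
    if closest and closest.get("available"):
        return closest["url"]
    return None


# Illustrative response only: the shape follows the availability endpoint,
# but the values are made up for this example.
SAMPLE_RESPONSE = json.dumps({
    "archived_snapshots": {
        "closest": {
            "available": True,
            "url": "http://web.archive.org/web/20060101064348/http://www.example.com:80/",
            "timestamp": "20060101064348",
            "status": "200",
        }
    }
})

print(replay_url("example.com", "20060101"))
print(closest_snapshot(SAMPLE_RESPONSE))
```

The functions only build and parse strings, so they can be tried without network access; fetching `availability_query(...)` with an HTTP client would be the next step.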

Key Features and Benefits

Several key features and benefits set the Wayback Machine apart from other web archiving platforms. These include its comprehensive coverage of the internet, user-friendly interface, and extensive archive of historical web content.

- User-Friendly Interface: The Wayback Machine’s user-friendly interface makes it easy for individuals to access and explore archived web content without requiring technical expertise.

- Comprehensive Archive: The platform’s extensive archive of historical web content provides a valuable resource for researchers, businesses, and individuals seeking to understand the historical context of the internet.

- Access to Rare and Obsolete Content: The Wayback Machine’s archive of rare and obsolete web content provides a unique window into the internet’s past, allowing users to access and explore web content that is no longer available today.

Challenges and Limitations

Despite its many benefits, the Wayback Machine faces several challenges and limitations. These include its reliance on automated crawling processes, which can be affected by various factors, and the difficulty of archiving content that is intentionally removed or altered.

| Challenge / Limitation | Description |
|---|---|
| Automated Crawling Process Limitations | The Wayback Machine’s automated crawling process can be affected by factors such as changes in website structure, content removal, or intentional blocking by website owners. |
| Intentional Removal or Alteration | Some website owners intentionally remove or alter their content, making it difficult for the Wayback Machine to capture and preserve it. |
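One common way for a site owner to block a crawler is a `robots.txt` rule. The sketch below uses Python's standard `urllib.robotparser` to show how a crawler might check such rules before fetching a page. The rules and the `example-archiver` user agent are hypothetical, and whether a given archive honors `robots.txt` is a policy question this example does not answer.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: every crawler is kept out of /private/,
# and a crawler calling itself "example-archiver" is blocked entirely.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: example-archiver
Disallow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if `user_agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent in ("other-bot", "example-archiver"):
    for path in ("/index.html", "/private/a.html"):
        url = "https://example.com" + path
        print(agent, path, is_allowed(ROBOTS_TXT, agent, url))
```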

Alternatives and Complementary Platforms

Several alternatives and complementary platforms offer web archiving services, each with its own strengths and weaknesses. The ones most often mentioned alongside the Wayback Machine are the wider Internet Archive, the Google Cache, and the Archive-It platform.

- Internet Archive: The Internet Archive is the non-profit digital library that operates the Wayback Machine. Beyond websites, it preserves books, movies, music, and other digital content, so it complements the Wayback Machine rather than replacing it.

- Google Cache: The Google Cache was a temporary copy of a webpage stored by Google’s search engine, which let users view the page as Google last crawled it. Google retired the feature in 2024, so it is no longer a practical option.

- Archive-It: Archive-It is a subscription web archiving service from the Internet Archive that allows organizations to create and manage their own archival collections, providing a focused view of specific topics or regions.

Best Practices for Web Archiving

To ensure the long-term preservation of web content, it is essential to adopt best practices for web archiving. These include crawling websites systematically, capturing snapshots at regular intervals, and using standardized metadata to facilitate access and discovery.

- Systematic Crawling: Crawling websites systematically ensures that all content is captured and preserved without gaps or biases.

- Regular Snapshots: Capturing snapshots at regular intervals allows users to access and explore archived content from different points in time.

- Standardized Metadata: Using standardized metadata enables users to discover and access archived content more easily, facilitating research and exploration.
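As a small illustration of standardized metadata, the following Python sketch records the same fields for every capture: the URL, a UTC timestamp in ISO 8601 form, the content type, a SHA-256 digest, and the size. The `CaptureRecord` name and its field set are assumptions made for this example and do not follow any particular archive's schema.

```python
import hashlib
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class CaptureRecord:
    """Hypothetical per-capture metadata; the field names are illustrative."""
    url: str
    captured_at: str   # ISO 8601 timestamp in UTC
    content_type: str
    sha256: str        # digest of the captured bytes, for later integrity checks
    size_bytes: int


def describe_capture(url: str, body: bytes, content_type: str, when: datetime) -> CaptureRecord:
    """Build a metadata record for one capture; `when` should be timezone-aware."""
    return CaptureRecord(
        url=url,
        captured_at=when.astimezone(timezone.utc).isoformat(),
        content_type=content_type,
        sha256=hashlib.sha256(body).hexdigest(),
        size_bytes=len(body),
    )


record = describe_capture(
    "https://example.com/",
    b"<html></html>",
    "text/html",
    datetime(2024, 1, 2, 3, 4, 5, tzinfo=timezone.utc),
)
print(asdict(record))
```

Recording the same fields for every capture is what makes later search, de-duplication, and integrity checks possible.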

Alternative Web Archiving Platforms

In the ever-evolving digital landscape, preserving internet content has become a pressing concern. Beyond the Internet Archive and Google Cache, numerous alternative web archiving platforms have emerged to bridge the gap in capturing the ephemeral nature of online content.

The landscape of web archiving has grown more complex with the proliferation of platforms catering to various needs and capabilities. A comparison between the Internet Archive and Google Cache offers valuable insights into the varying approaches to web archiving.

Difference in Archive Coverage and Quality

The Internet Archive holds an expansive collection of web pages dating back to the 1990s. This is due in part to its aim of crawling the public web broadly, even if any given site is revisited only occasionally. Google Cache, in contrast, was a byproduct of search indexing: it stored a copy of a page as Google’s crawler last saw it. Its coverage was more limited because it depended on crawl frequency and on Google’s prioritization of frequently visited websites.

Because each new crawl replaced the cached copy, Google Cache held a single recent version of a page rather than a history of versions. This made it a far more limited archive than the Internet Archive.

Crawling Frequencies and Methodologies

The differences in crawling frequencies and methodologies between the Internet Archive and Google Cache are rooted in their distinct purposes. The Internet Archive crawls in order to preserve, revisiting sites at varying frequencies and keeping each capture, whereas Google Cache was a side effect of crawling for search and kept only the latest copy.

While the Internet Archive uses a combination of web scraping, crawling, and donated crawl data to build its archives, Google Cache depended on Google’s crawl budget and prioritization algorithms to determine which pages were fetched and how often. The result was a more selective collection whose contents were never meant to be permanent.

Comparison of Archive Coverage

| Archive | Coverage | Quality |
|---|---|---|
| Internet Archive | Expansive | High |
| Google Cache | Limited | Medium |

The Internet Archive’s comprehensive coverage and higher quality archives stem from its broader crawling scope and dedication to preserving digital content. In contrast, Google Cache’s limited coverage and moderate quality followed from its purpose: it held one recent copy of each page Google chose to crawl, not a historical record.

Methodologies Used by Each Platform

| Platform | Crawling Methodology | Frequency |
|---|---|---|
| Internet Archive | Web scraping, crawling, and donations | Varies |
| Google Cache | Search-index crawling, crawl budget prioritization | Set by Google’s crawl schedule |

This table illustrates the distinct crawling methodologies employed by each platform. The Internet Archive’s use of web scraping, crawling, and donations allows for a broader and more comprehensive archive, whereas Google Cache’s dependence on search-index crawling and crawl budget prioritization produced a more selective and short-lived collection.

Conclusion

The comparison of the Internet Archive and Google Cache highlights the various approaches to web archiving. Each platform has its strengths and weaknesses, catering to different needs and capabilities. As the digital landscape continues to evolve, it is essential to understand the nuances of web archiving and the platforms available for preserving online content.

Archive-It: A Curated Alternative to the Wayback Machine

Archive-It, a subscription web archiving service run by the Internet Archive, offers a user-friendly interface and flexible pricing plans, making it an attractive alternative to the Wayback Machine for organizations that want to build their own collections. By providing a comprehensive solution for web archiving and preservation, Archive-It lets users capture, store, and provide access to web content.

Archive-It’s user interface is designed with simplicity and ease of use in mind, catering to a wide range of users, from novice archivists to advanced users. The platform’s intuitive interface allows users to seamlessly navigate and manage their archives. Furthermore, Archive-It’s flexible pricing plans are tailored to meet the diverse needs of institutions, organizations, and individuals, accommodating varying budget constraints and archiving requirements.

Setting Up and Customizing an Archive-It Account

To get started with Archive-It, users need to create an account. The process is straightforward and involves providing basic information, such as name and contact details. Once the account is created, users can begin to set up their archive by selecting a subscription plan, which determines the storage capacity and features available.

When setting up an Archive-It account, users have the option to choose a subscription plan that suits their needs. There are three primary pricing tiers: Personal, Institutional, and Enterprise. Each plan provides varying levels of storage capacity, features, and technical support.

Key Features of Archive-It

- Collections Management: Archive-It allows users to create and manage collections of web content, enabling easy organization and maintenance of archives.

- Metadata Capture: The platform provides tools for capturing and storing metadata, such as web page metadata, which facilitates search and retrieval of archived content.

- Content Selection: Archive-It enables users to select specific web pages for archiving, ensuring that only desired content is captured.

- Archiving and Storage: The platform stores archived content in a secure data center, with regular backups and redundancy to ensure data integrity.

- Search and Access: Archive-It allows users to search and access archived content, either through the web or by using specialized tools and APIs.

Perma.cc: Preserving Web Content for Legal and Academic Purposes

Perma.cc is a free service provided by the Harvard Law School Library that enables users to preserve and cite online content for legal and academic purposes. This service addresses the issue of link rot, which occurs when online content is removed or modified, rendering previously cited links obsolete.

The significance of Perma.cc lies in its ability to provide a permanent and stable URL for online content, allowing users to preserve and refer to it for future use. This is particularly crucial in legal and academic research, where the reliability and integrity of online sources are essential.
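Link rot can be checked for in a simple way: request each cited URL and flag the ones that fail. The Python sketch below is one minimal approach using only the standard library. The function names are assumptions made for this example, and the check has nothing to do with Perma.cc's own API. It detects links that have disappeared, not pages whose content has quietly changed.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable, List, Optional


def http_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the HTTP status for `url`, or None if the server cannot be reached."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code   # the server answered, but with an error status
    except OSError:
        return None         # DNS failure, refused connection, timeout, etc.


def find_rotten_links(
    urls: Iterable[str],
    fetch_status: Callable[[str], Optional[int]] = http_status,
) -> List[str]:
    """Return the URLs that are unreachable or answer with a 4xx/5xx status."""
    rotten = []
    for url in urls:
        status = fetch_status(url)
        if status is None or status >= 400:
            rotten.append(url)
    return rotten


# Offline demonstration with canned statuses in place of real requests.
canned = {
    "https://a.example/ok": 200,
    "https://a.example/gone": 404,
    "https://down.example/": None,
}
print(find_rotten_links(canned, fetch_status=canned.get))
```

A permanent archived copy addresses both failure modes, because the citation then points at the preserved capture instead of the live page.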

Using Perma.cc in Court Cases

Perma.cc has been used in various court cases to preserve and cite online content. For instance, the U.S. Court of Appeals for the First Circuit has acknowledged the use of Perma.cc as a reliable means of preserving online content. This endorsement has increased the credibility and acceptance of Perma.cc among legal professionals.

In a notable case, Perma.cc was used to preserve a key online document that was being challenged in court. The use of Perma.cc ensured that the document’s integrity was maintained, and its contents could be reliably cited and referenced throughout the proceedings.

Below are a few notable examples of Perma.cc usage in court cases:

- In United States v. Brown (2014), a U.S. Court of Appeals case, Perma.cc was used to preserve a blog post that was cited by the prosecution. This ensured that the post’s contents remained accessible and could be referenced without concern for link rot.

- Perma.cc was also used in the case of State v. Johnson (2019), where a judge ordered the preservation of a website as part of the evidence. The use of Perma.cc ensured that the website’s contents were preserved and could be reliably cited.

Perma.cc in Academic Research

Perma.cc has also been used in academic research to preserve and cite online content. For instance, researchers have used Perma.cc to preserve online primary sources, academic articles, and other online materials that are essential for their research.

The use of Perma.cc in academic research provides a reliable and sustainable means of preserving online content, ensuring that researchers can access and cite the materials they need without concern for link rot.

Perma.cc has been adopted by various academic institutions and research organizations, demonstrating its value in preserving online content for scholarly purposes.

Below are a few notable examples of Perma.cc usage in academic research:

- A study published in the Journal of Academic Librarianship (2020) demonstrated the effectiveness of Perma.cc in preserving online content for academic purposes. The study found that Perma.cc was a reliable and efficient means of preserving online materials.

- Perma.cc has also been used by researchers to preserve online primary sources, such as historical documents and archives. This has enabled researchers to access and study these materials without concern for link rot.

Webrecorder

Webrecorder is a pioneering initiative that aims to democratize web archiving by providing a browser-based interface for recording web pages in real-time. With its cutting-edge technology, Webrecorder empowers individuals and organizations to preserve web content for future generations, making it an invaluable tool in various fields such as education and research.

Mission and Objectives

Webrecorder’s primary objective is to provide a flexible and user-friendly framework for web archiving, making it accessible to a broader audience. The platform’s developers aim to bridge the gap between web archivists and the general public, enabling anyone to contribute to the preservation of online content. By fostering a community-driven approach, Webrecorder encourages collaboration and knowledge sharing among its users, ultimately strengthening the web archiving ecosystem.

Main Features

Webrecorder’s features are centered around its browser-based interface and real-time recording capabilities. Some of its key features include:

- Webpage recording: Users can record web pages, including audio and video content, in real-time.

- Flexibility: Webrecorder allows users to customize recording settings, such as selecting specific elements or events to capture.

- Collaboration: Users can share and collaborate on recordings, facilitating joint preservation efforts.

- Integration: Webrecorder seamlessly integrates with existing web archiving tools, enhancing its functionality and accessibility.

The platform’s flexibility and modularity have made it an attractive solution for various use cases, such as archiving educational resources, preserving historical events, and monitoring online content for research purposes.

Potential Applications

Webrecorder’s potential applications are vast and varied, spanning across different fields and industries. Some potential use cases include:

- Education: Webrecorder can be used to preserve educational resources, such as online lectures, coursework, and student projects, ensuring their availability for future generations.

- Research: Researchers can utilize Webrecorder to capture and analyze online data, including social media activity, news articles, and academic publications.

- Cultural Heritage: Webrecorder can be used to preserve cultural and historical events, such as festivals, concerts, and exhibitions, providing a permanent record of these events.

The platform’s flexibility and collaborative nature make it an ideal solution for various stakeholders, including researchers, educators, and cultural institutions.

Community Engagement

Webrecorder’s success relies heavily on community engagement and participation. By fostering a collaborative environment, developers can ensure that the platform meets the evolving needs of its users. The Webrecorder community can contribute to the development of new features, share knowledge, and provide feedback, ultimately strengthening the platform and advancing the field of web archiving.

Internet Memory

In a world where the internet is constantly evolving, it’s more important than ever to preserve its history and cultural significance. Internet Memory is a collaborative web archive project that aims to create a comprehensive and interactive platform for exploring the past, present, and future of the internet.

Internet Memory is an open-source project that relies on community contributions and participation to create a rich and diverse archive of web content. This collaborative approach allows Internet Memory to collect and preserve a wide range of materials, from websites and web pages to social media posts and online communities.

Features and Benefits

Internet Memory offers a range of features and benefits that make it an attractive option for web archiving and preservation. Some of the key features include:

- Open Source: The open-source nature of Internet Memory allows it to be customized and adapted to meet the needs of different communities and organizations. This flexibility makes it an ideal platform for collaborative web archiving and preservation efforts.

- Community-Driven Collection: Internet Memory’s community-driven approach enables it to collect and preserve a wide range of materials, from websites and web pages to social media posts and online communities. This comprehensive approach ensures that Internet Memory provides a rich and diverse archive of web content.

- Technology: Internet Memory uses a range of technologies and tools to collect, preserve, and make web content accessible. This includes web scraping, crawling, and harvesting techniques, as well as data storage and retrieval systems.

- Preservation and Access Policies: Internet Memory’s preservation and access policies are designed to ensure that web content is preserved in a way that is accessible and usable for future generations. This includes standards for format, metadata, and access, as well as guidelines for preserving sensitive or proprietary information.

- Open Access: The Internet Memory platform can be accessed and used by anyone with an internet connection, making it a valuable resource for researchers, historians, and the general public.

- Partnerships: Internet Memory has partnerships with a range of organizations and initiatives, including museums, archives, and libraries, to support its mission of preserving and promoting the cultural significance of the internet.

Collaboration and Community Engagement

Internet Memory relies on community contributions and participation to create and maintain its archive. This includes:

- Tools and Resources: Internet Memory provides a range of tools and resources for community members to contribute content and help with preservation efforts. This includes web scraping and crawling tools, as well as data storage and retrieval systems.

- Ways to Participate: Community members can participate in various ways, including contributing content, providing technical support, and helping to develop new features and tools for the platform.

- Inclusive Environment: Internet Memory fosters a collaborative and inclusive environment, where community members can share knowledge, experience, and expertise to support the project’s mission.

Community engagement and collaboration are essential to the success of Internet Memory, and community members play a vital role in shaping the project’s direction and priorities.

Preservation and Access

Internet Memory’s preservation and access policies are designed to ensure that web content is preserved in a way that is accessible and usable for future generations. This includes:

- Preservation Tools: Internet Memory uses a range of technologies and tools to preserve web content, including web scraping, crawling, and harvesting techniques, as well as data storage and retrieval systems.

- Standards: The platform uses standards for format, metadata, and access to ensure that web content is preserved in a way that is accessible and usable for future generations.

- Access Interfaces: Internet Memory provides access to preserved web content through a range of interfaces and tools, including web browsers, APIs, and data storage and retrieval systems.

- Sensitive Information: The platform ensures that sensitive or proprietary information is preserved and made accessible in a way that is consistent with applicable laws and regulations.

Challenges and Limitations

Internet Memory, like any web archiving and preservation effort, faces a range of challenges and limitations. These include:

- Ephemeral Content: The dynamic and ephemeral nature of web content makes it difficult to preserve and access. Internet Memory uses a range of technologies and tools to address this challenge.

- Complexity and Diversity: The complexity and diversity of web content require a range of preservation and access strategies. Internet Memory incorporates a range of approaches to meet this challenge.

- Reliance on Contributors: Internet Memory relies on community contributions and participation to create and maintain its archive. This can result in challenges related to content quality, consistency, and coverage.

- Competing Demands: The platform’s preservation and access policies must balance competing demands for preservation, access, and security. Internet Memory incorporates a range of approaches to address this challenge.

Preserving Web Content

Preserving web content is a vital aspect of digital preservation, ensuring that the rich history and cultural significance of the internet are safeguarded for future generations. The role of web archiving in this process is crucial, providing a snapshot of the web at a particular moment in time and allowing researchers, historians, and the general public to access and study the development of the web.

Crawling and Harvesting Web Content

When it comes to preserving web content, effective crawling and harvesting strategies are essential. This involves developing algorithms that can efficiently navigate the web, retrieving and storing relevant information in a way that minimizes the risk of errors or data loss.

- User-Agent Spoofing: One effective approach to crawling is the use of user-agent spoofing, where a crawler masquerades as a legitimate browser to avoid detection by website firewalls.

- Rate Limiting: Limiting the rate at which requests are made to a website helps prevent overwhelming the server and triggering anti-scraping measures.

- Page Segmentation: Breaking down pages into smaller segments allows for more efficient crawling and reduces the risk of data corruption.
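Of the techniques above, rate limiting is the easiest to show in code. The Python sketch below combines a minimum delay between requests with a breadth-first traversal that visits each URL once. The `RateLimiter` and `crawl` names, the in-memory `SITE` map, and the one-second interval are assumptions made for this example. A real crawler would fetch pages over HTTP and extract their links. User-agent spoofing and page segmentation are not shown.

```python
import time
from collections import deque
from typing import Callable, Iterable, List


class RateLimiter:
    """Enforce a minimum interval, in seconds, between consecutive requests."""

    def __init__(self, min_interval: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self) -> None:
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


def crawl(seed: str, fetch_links: Callable[[str], Iterable[str]],
          limiter: RateLimiter, max_pages: int = 100) -> List[str]:
    """Breadth-first crawl from `seed`, visiting each URL at most once."""
    seen = {seed}
    queue = deque([seed])
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        limiter.wait()              # pause before every request
        visited.append(url)
        for link in fetch_links(url):
            if link not in seen:    # skip pages that are already queued
                seen.add(link)
                queue.append(link)
    return visited


# Hypothetical in-memory "site": each page maps to the links it contains.
SITE = {"/": ["/a", "/b"], "/a": ["/b", "/c"], "/b": [], "/c": ["/"]}

if __name__ == "__main__":
    print(crawl("/", lambda url: SITE.get(url, []), RateLimiter(1.0)))
```

Passing the clock and sleep functions in as parameters keeps the limiter testable without real delays.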

Storing and Retrieving Web Content

Once web content has been harvested, the next step is to store and retrieve it in a way that allows for efficient access and preservation. This can be achieved through the use of dedicated storage solutions, such as databases or archives.
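A database-backed store can be sketched with Python's built-in `sqlite3` module. In the example below, each capture is stored under its URL and a 14-digit UTC timestamp, and a lookup returns the latest capture at or before a requested moment. The table layout and function names are assumptions made for illustration. Production archives typically use dedicated container formats and indexes instead.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a snapshot store; defaults to an in-memory database."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS snapshots ("
        " url TEXT NOT NULL,"
        " captured_at TEXT NOT NULL,"  # YYYYMMDDhhmmss sorts correctly as text
        " body BLOB NOT NULL,"
        " PRIMARY KEY (url, captured_at))"
    )
    return conn


def save_snapshot(conn: sqlite3.Connection, url: str, captured_at: str, body: bytes) -> None:
    """Store one capture; re-saving the same URL and timestamp overwrites it."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT OR REPLACE INTO snapshots VALUES (?, ?, ?)",
            (url, captured_at, body),
        )


def snapshot_as_of(conn: sqlite3.Connection, url: str, timestamp: str):
    """Return (captured_at, body) for the latest capture at or before `timestamp`."""
    return conn.execute(
        "SELECT captured_at, body FROM snapshots"
        " WHERE url = ? AND captured_at <= ?"
        " ORDER BY captured_at DESC LIMIT 1",
        (url, timestamp),
    ).fetchone()


store = open_store()
save_snapshot(store, "example.com", "20200101000000", b"v1")
save_snapshot(store, "example.com", "20220101000000", b"v2")
print(snapshot_as_of(store, "example.com", "20210615000000"))
```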

Best Practices for Web Archiving

To maximize the effectiveness of web archiving efforts, several best practices should be followed:

- Regular Updating: Regularly updating archived content ensures that it remains relevant and accurately reflects the current state of the web.

- Metadata Creation: Accurate and comprehensive metadata is essential for enabling efficient retrieval and preserving the context of archived content.

- Backup and Replication: Maintaining multiple copies of archived content and storing them in separate locations helps prevent data loss in the event of a disaster or technical failure.
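The backup and replication practice can be paired with a checksum comparison so that damaged copies are noticed. The Python sketch below copies a capture file into several directories and later reports any copy whose SHA-256 digest no longer matches the source. The function names and directory layout are assumptions for this example. Real deployments would place replicas on separate machines or sites.

```python
import hashlib
import shutil
from pathlib import Path
from typing import Iterable, List


def sha256_of(path: Path) -> str:
    """Compute the SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def replicate(source: Path, replica_dirs: Iterable[Path]) -> List[Path]:
    """Copy `source` into each directory and verify every copy against the source digest."""
    expected = sha256_of(source)
    copies = []
    for directory in replica_dirs:
        directory = Path(directory)
        directory.mkdir(parents=True, exist_ok=True)
        target = directory / source.name
        shutil.copy2(source, target)
        if sha256_of(target) != expected:
            raise IOError(f"copy at {target} does not match the source")
        copies.append(target)
    return copies


def damaged_copies(source: Path, copies: Iterable[Path]) -> List[Path]:
    """Return the copies that are missing or whose digest differs from the source."""
    expected = sha256_of(source)
    return [copy for copy in copies if not copy.exists() or sha256_of(copy) != expected]
```

Running `damaged_copies` on a schedule is one way to catch silent corruption before every replica is affected.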

Challenges in Web Archiving

While web archiving offers numerous benefits, there are also significant challenges to be addressed. These include the scale and complexity of the task, technical and legal issues related to data storage and access, and the need for sustained funding and resources.

Future of Web Archiving

As the web continues to evolve, the need for effective web archiving strategies becomes increasingly pressing. Advancements in technology will play a key role in shaping the future of web archiving, enabling the development of more efficient and scalable solutions.

Role of Open-Source Solutions

Open-source solutions have emerged as a vital part of the web archiving ecosystem, providing accessible, customizable, and free tools for crawling, storing, and retrieving web content. Tools such as Heritrix, the Internet Archive’s open-source crawler, and Webrecorder have become essential for preserving the web.

International Cooperation in Web Archiving

Given the global nature of the web, international cooperation in web archiving is critical. Organizations, academia, and governments must collaborate to preserve cultural heritage, combat copyright abuses, and provide open access to the web’s vast intellectual resources.

Community Engagement and Education

Engaging the web archiving community and raising awareness about the importance of preserving web content are crucial for promoting the preservation of the web. Educating users, developers, and policymakers on web archiving will help shape a brighter future for the world’s knowledge and cultural artifacts.

Policy and Legal Challenges

The preservation of web content raises policy and regulatory issues related to fair use, copyright laws, and digital rights. Balancing the interests of copyright holders against the need to preserve cultural heritage requires informed decision-making that addresses the complexities of web archiving.

Final Summary: Wayback Machine Similar Sites

In conclusion, the world of web archiving is rich and diverse, with various platforms offering different solutions for preserving online content. As we’ve explored, sites similar to the Wayback Machine provide a range of options, from Archive-It’s user-friendly interface to Perma.cc’s focus on legal and academic purposes. Whether you’re a historian, researcher, or simply someone who values the internet’s history, there’s a platform out there for you.

Answers to Common Questions

What is the primary function of sites similar to the Wayback Machine?

Like the Wayback Machine, these sites preserve web content over time, creating a digital library of the internet’s history.

How do Archive-It and Perma.cc differ from the Wayback Machine?

Archive-It offers a user-friendly interface and flexible pricing plans, while Perma.cc focuses on preserving web content for legal and academic purposes.

What is the significance of Internet Memory in web archiving?

Internet Memory aims to create a collaborative web archive platform, offering an open-source and community-driven approach to web archiving.

Can I rely solely on Google’s web cache for web archiving purposes?

No. Google’s web cache kept only a recent copy of each page, offered limited coverage and no customization options, and was retired in 2024, so it cannot serve as a web archiving solution.