This article explains what micromodels are in machine learning and NLP, and why they open up new possibilities in artificial intelligence. Micromodels are small, task-focused models that have gained popularity for their ability to learn and adapt from data, making them a useful component in modern AI systems.

These models differ from traditional machine learning models in their ability to provide a more accurate and nuanced understanding of complex tasks such as language translation, text summarization, and sentiment analysis. By analyzing the strengths and limitations of micromodels, researchers and developers can unlock new applications and improve existing ones, pushing the boundaries of what is possible in AI.

Introduction to Micromodels in Machine Learning NLP

In the bustling world of Machine Learning and Natural Language Processing (NLP), a new trend is emerging – Micromodels. Micromodels are small, domain-specific neural networks that have gained popularity due to their ability to process and understand complex language patterns. These models have been successfully applied in various NLP tasks such as language translation, sentiment analysis, and text summarization.

A Brief History of Micromodels in NLP

The concept of Micromodels originated from the idea of distilling complex neural networks into smaller, more manageable models. In 2019, researchers introduced the concept of “Tiny Neural Networks” which were designed to be highly expressive and compact. Micromodels have since evolved to become a crucial component in various NLP pipelines, enabling applications with improved performance, speed, and interpretability.

The Importance of Micromodels in NLP Applications

Micromodels offer several key benefits in NLP applications:

Efficient Inference

Micromodels require significantly less computational resources compared to larger neural networks. This enables real-time inference and faster processing of large volumes of text data.

Improved Performance

On narrow, well-defined NLP tasks, micromodels can match and sometimes surpass the performance of larger neural networks, because each model is tuned to a single problem.

Enhanced Interpretability

Micromodels provide insights into the decision-making process, enabling developers to understand and improve the model’s behavior.


Micromodels have been applied in various NLP applications, including language translation, sentiment analysis, and text summarization.


“Micromodels can be seen as a bridge between traditional machine learning and deep learning, providing the best of both worlds.”

What are Micromodels?

Micromodels are relatively new in the field of machine learning, specifically in Natural Language Processing (NLP). Unlike traditional machine learning models, micromodels focus on smaller, more specific tasks, leveraging the advantages of both symbolic reasoning and connectionist learning.

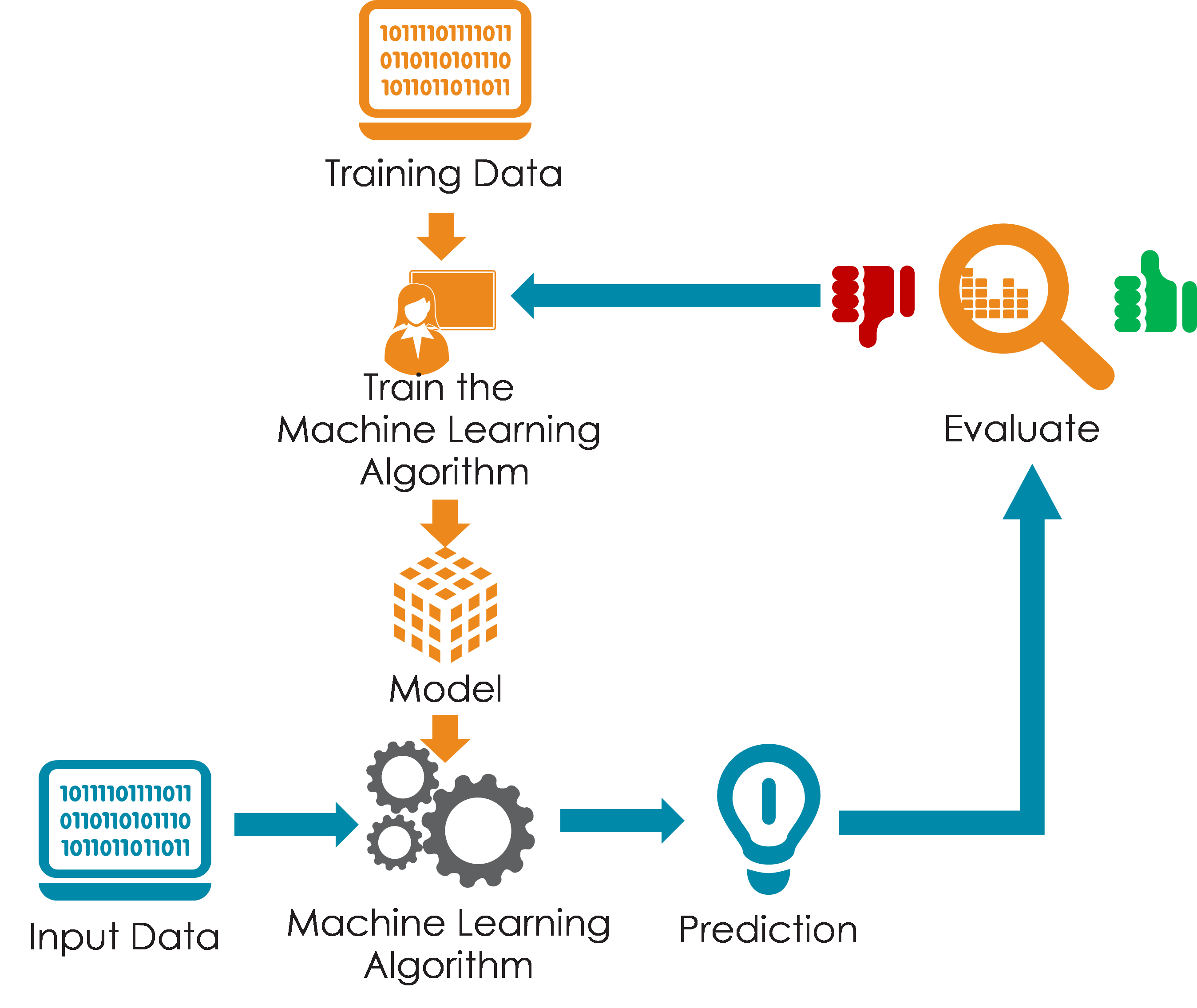

Traditionally, machine learning models relied on the collective power of complex neural networks, trained on enormous datasets to learn relationships between inputs and outputs. However, such models often struggle with handling the complexity and nuances of human language, a fundamental aspect of NLP applications. These limitations led to the development of micromodels, which provide a distinct approach by breaking down the problem into smaller, more manageable pieces.

Difference between Micromodels and Traditional Machine Learning Models

Traditional machine learning models are complex networks that handle vast amounts of data. Conversely, micromodels are more modular, each focusing on smaller, related tasks. Unlike their traditional counterparts, micromodels leverage symbolic reasoning in addition to connectionist learning. Symbolic systems, in a way, mimic how humans think by using symbolic representations of data and rules to derive conclusions. This hybrid approach gives micromodels a competitive edge in handling specific NLP tasks, like language interpretation, information retrieval, or text classification.

Examples of Micromodels in NLP Applications

Micromodels are used in a variety of NLP applications, such as information retrieval, question-answering systems, text classification, and sentiment analysis.

The use of micromodels simplifies many tasks within NLP, allowing for better performance and more accurate results. For instance, a micromodel designed for sentiment analysis will not only look for keywords but also consider the context in which they’re used.
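The sentiment example above can be sketched in a few lines. This is a minimal illustration, not a production approach: the word lists and the function names `sentiment_score` and `classify` are hypothetical, and "context" is reduced to checking whether the previous token is a negation.

```python
# Hypothetical word lists, chosen only for illustration.
POSITIVE = {"good", "great", "love", "excellent"}
NEGATIVE = {"bad", "poor", "hate", "terrible"}
NEGATORS = {"not", "never", "no"}


def sentiment_score(text: str) -> int:
    """Sum word polarities, flipping a word's polarity when the previous token negates it."""
    for mark in ".,!?":
        text = text.replace(mark, " ")
    tokens = text.lower().split()
    score = 0
    for i, token in enumerate(tokens):
        polarity = 1 if token in POSITIVE else -1 if token in NEGATIVE else 0
        # Context check: "not good" should count as negative, not positive.
        if polarity and i > 0 and tokens[i - 1] in NEGATORS:
            polarity = -polarity
        score += polarity
    return score


def classify(text: str) -> str:
    """Map the numeric score to a coarse sentiment label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(classify("The service was good"))      # keyword match only
print(classify("The service was not good"))  # keyword plus negation context
```

A keyword-only model would label both sentences the same way; the negation check is what separates them.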

Strengths and Limitations of Micromodels in NLP

Micromodels offer a more precise and efficient approach to NLP, which comes with several strengths:

- Efficient and Precise: Micromodels can be designed to handle specific NLP tasks, providing a more focused solution compared to traditional machine learning models.

- Hierarchical Reasoning: They combine symbolic reasoning with connectionist learning, mimicking human thought processes and enabling them to derive conclusions based on symbolic representations of data and rules.

- Flexibility: Since micromodels are modular, they can be easily updated, modified, or replaced without affecting the overall performance of the larger system.
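One way to picture this modularity is a small registry that maps task names to interchangeable micromodels. The sketch below is an assumption about how such a system might be wired, not a description of any particular library: `MicromodelRegistry` and the three toy detector functions are hypothetical names invented for this example.

```python
from typing import Callable, Dict

# A micromodel is modelled here as any function from text to a label.
Micromodel = Callable[[str], str]


class MicromodelRegistry:
    """Holds one micromodel per task so each can be swapped independently."""

    def __init__(self) -> None:
        self._models: Dict[str, Micromodel] = {}

    def register(self, task: str, model: Micromodel) -> None:
        # Registering under an existing task name replaces the old model.
        self._models[task] = model

    def run(self, text: str) -> Dict[str, str]:
        return {task: model(text) for task, model in self._models.items()}


def detect_language_v1(text: str) -> str:
    return "en"  # naive placeholder: always answers English


def detect_language_v2(text: str) -> str:
    spanish_hints = {"donde", "el", "la"}  # hypothetical hint words
    words = set(text.lower().replace("?", " ").split())
    return "es" if words & spanish_hints else "en"


def detect_utterance_type(text: str) -> str:
    return "question" if text.strip().endswith("?") else "statement"


registry = MicromodelRegistry()
registry.register("language", detect_language_v1)
registry.register("utterance_type", detect_utterance_type)
before = registry.run("donde esta la biblioteca?")

# Swap in the improved language model; the other micromodel is untouched.
registry.register("language", detect_language_v2)
after = registry.run("donde esta la biblioteca?")
print(before, after)
```

Only the `language` entry changes between the two runs, which is the property the flexibility point describes.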

However, micromodels also have some limitations:

- Complexity of Integration: Integrating micromodels into a larger system can be complex, especially if the models are not designed with integration in mind.

- Overfitting: If not properly trained, micromodels can overfit to the training data, making them less effective on unseen data.

- Limited Scalability: While micromodels are more efficient and precise, they may face challenges when dealing with very large datasets or complex tasks that require a larger model.

Types of Micromodels

In the realm of machine learning and NLP, micromodels are a vital component that enables efficient and accurate processing of information. Understanding the different types of micromodels is crucial to leveraging their potential. Here, we dive into the various categories of micromodels and explore their characteristics.

1. Rule-based Micromodels

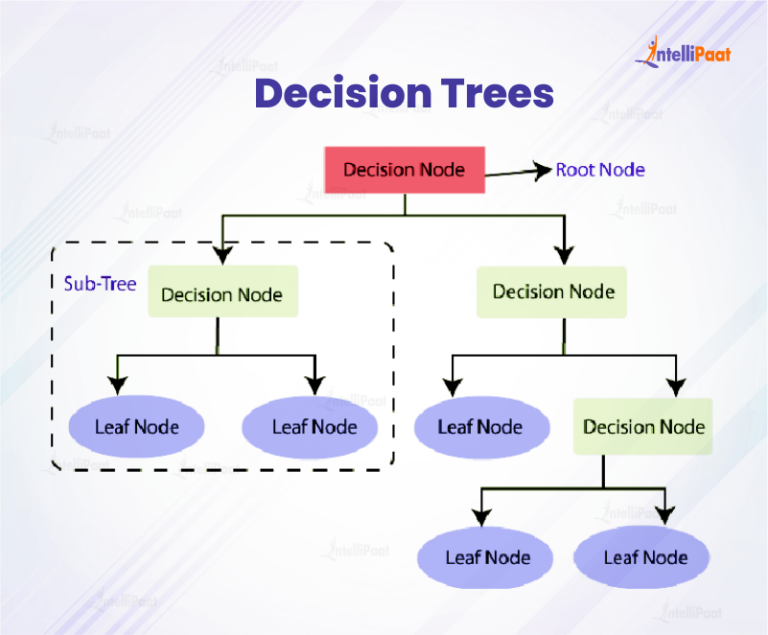

Rule-based micromodels operate on predetermined rules and decision-making processes that are hardcoded into the system. These rules are usually in the form of IF-THEN statements or decision trees. This type of micromodel is particularly useful in situations where the rules are well-defined and don’t require continuous adaptation. The rules and decision-making process in rule-based micromodels are typically explicit and can be understood by humans. However, they can be limited in their ability to handle complex or uncertain situations.

| Rule-based Micromodel Characteristics | Example |
|---|---|
| Fixed set of rules | Prioritizing customer complaints based on severity and urgency |
| Decision-making process is explicit | Using a decision tree to determine the likelihood of a customer buying a product |
| May not handle complex or uncertain situations well | Difficulty in handling ambiguous customer feedback |
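As a rough illustration of the first table row, the IF-THEN rules for prioritizing customer complaints might look like the sketch below. The `Complaint` fields, the 1 to 5 severity scale, and the `P1`/`P2`/`P3` labels are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Complaint:
    text: str
    severity: int  # assumed scale: 1 (minor) to 5 (critical)
    urgent: bool


def prioritize(complaint: Complaint) -> str:
    """Apply a fixed, human-readable set of IF-THEN rules."""
    if complaint.severity >= 4 and complaint.urgent:
        return "P1"  # severe and urgent: handle first
    if complaint.severity >= 4 or complaint.urgent:
        return "P2"  # either severe or urgent
    return "P3"      # everything else


tickets = [
    Complaint("Site is down for all users", severity=5, urgent=True),
    Complaint("Invoice total looks wrong", severity=4, urgent=False),
    Complaint("Typo on the help page", severity=1, urgent=False),
]
for ticket in tickets:
    print(prioritize(ticket), ticket.text)
```

Every decision can be traced to a single rule, which is the explicitness the table describes. The same rigidity is the weakness: an ambiguous complaint with no clear severity has no rule that fits it well.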

2. Statistical Micromodels

Statistical micromodels employ statistical methods to make predictions or classify data. They often rely on supervised learning techniques, such as decision trees, Bayesian networks, or random forests. This type of micromodel is useful in situations where there is a large amount of data and the relationships between variables are complex. Statistical micromodels can be trained on past data to improve their accuracy over time.

| Statistical Micromodel Characteristics | Example |
|---|---|
| Uses statistical methods to make predictions or classify data | Predicting customer churn based on past usage patterns |
| Often relies on supervised learning techniques | Training a decision tree to classify customer feedback as positive or negative |
| Can be trained on past data for improved accuracy | Updating a Bayesian network with new customer purchase data |
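To make the supervised, statistical approach concrete, here is a minimal sketch of classifying customer feedback as positive or negative. It uses a naive Bayes classifier (a very simple Bayesian model) rather than the decision tree named in the table, because it fits in a few lines of standard-library Python. The training sentences and function names are invented for illustration.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(examples):
    """Count words per label; examples is a list of (text, label) pairs."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    vocab = set()
    for text, label in examples:
        tokens = text.lower().split()
        label_counts[label] += 1
        word_counts[label].update(tokens)
        vocab.update(tokens)
    return word_counts, label_counts, vocab


def predict(model, text):
    """Return the label with the highest log-probability for the text."""
    word_counts, label_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_logp = None, -math.inf
    for label, doc_count in label_counts.items():
        logp = math.log(doc_count / total_docs)  # class prior
        total_words = sum(word_counts[label].values())
        for token in text.lower().split():
            # Laplace (add-one) smoothing so unseen words do not zero out the score.
            count = word_counts[label][token] + 1
            logp += math.log(count / (total_words + len(vocab)))
        if logp > best_logp:
            best_label, best_logp = label, logp
    return best_label


# Tiny, made-up training set of labelled customer feedback.
TRAIN = [
    ("great product fast delivery", "positive"),
    ("love the quality", "positive"),
    ("terrible support slow delivery", "negative"),
    ("poor quality broke quickly", "negative"),
]
model = train_naive_bayes(TRAIN)
print(predict(model, "great quality"))
print(predict(model, "terrible support"))
```

Retraining on a larger list of past feedback is how a model like this would improve over time, as the third table row suggests.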

3. Neural Micromodels

Neural micromodels utilize artificial neural networks to process and analyze data. These models can learn complex patterns and relationships in data through a process called deep learning. Neural micromodels are often used in situations where traditional machine learning methods are insufficient. They can also be used for tasks such as natural language processing, computer vision, and recommender systems.

| Neural Micromodel Characteristics | Example |
|---|---|
| Utilizes artificial neural networks | Using a neural network to classify customer reviews as positive or negative |
| Can learn complex patterns and relationships in data | Identifying complex customer purchasing behavior using a neural network |
| Often used for tasks such as NLP and computer vision | Using a neural network to translate customer feedback from one language to another |

Micromodel Architectures

In the realm of Micromodels, the architecture plays a crucial role in determining the model’s performance, complexity, and flexibility. The architecture of a Micromodel refers to the structural organization of its components, which can significantly impact its ability to capture complex patterns and relationships in the data. In this section, we’ll delve into the different Micromodel architectures that have gained prominence in the field of NLP.

Micromodels can be broadly categorized into three main architectures: Layered, Tree-like, and Graph-based. Each of these architectures has its unique characteristics, advantages, and use cases.

Layered Micromodels

Layered Micromodels are the most traditional and widely used architecture in NLP. This architecture consists of multiple layers, where each layer is responsible for processing the input data in a specific way. The layers are typically organized in a hierarchical manner, with the input data flowing through each layer until it reaches the final output layer.

One of the key characteristics of Layered Micromodels is the use of activation functions, which help to introduce non-linearity in the model and enable it to capture complex relationships between features. The most common layers in a Layered Micromodel are:

- Embedding Layer: This layer converts input words or tokens into dense vectors, called embeddings, which capture the semantic meaning of the words.

- Recurrent Neural Network (RNN) Layer: This layer uses RNNs to process sequential data, such as text or speech, and capture the temporal relationships between inputs.

- Convolutional Neural Network (CNN) Layer: This layer applies convolutional filters over the input. CNNs are best known from image and audio processing, and in NLP they are typically used to capture local patterns such as short word sequences.

- Attention Layer: This layer allows the model to focus on specific parts of the input data, such as certain words or sentences, when generating output.

The advantages of Layered Micromodels include their ability to capture complex patterns, handle sequential data, and leverage pre-trained language models. However, they can be computationally expensive and require careful tuning of hyperparameters.
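The layered flow described above (embedding, attention, then a dense layer with an activation function) can be sketched as a single forward pass in plain Python. This is an untrained toy under stated assumptions: the vocabulary, the layer sizes, and the random weights are all hypothetical, and the RNN and CNN layers are omitted for brevity.

```python
import math
import random

random.seed(0)  # fixed seed so the random weights are reproducible

# Hypothetical vocabulary and layer sizes.
VOCAB = {"<unk>": 0, "the": 1, "movie": 2, "was": 3, "great": 4, "boring": 5}
EMBED_DIM, HIDDEN_DIM, NUM_CLASSES = 4, 3, 2


def rand_matrix(rows, cols):
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


embeddings = rand_matrix(len(VOCAB), EMBED_DIM)   # embedding layer weights
attn_query = [random.uniform(-0.5, 0.5) for _ in range(EMBED_DIM)]
w_hidden = rand_matrix(HIDDEN_DIM, EMBED_DIM)     # dense layer weights
w_out = rand_matrix(NUM_CLASSES, HIDDEN_DIM)      # output layer weights


def softmax(values):
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def forward(text):
    """Run text through embedding -> attention pooling -> dense(tanh) -> softmax."""
    ids = [VOCAB.get(tok, VOCAB["<unk>"]) for tok in text.lower().split()]
    if not ids:
        ids = [VOCAB["<unk>"]]
    vectors = [embeddings[i] for i in ids]
    # Attention: score each token against a query vector, then normalise.
    scores = [sum(q * x for q, x in zip(attn_query, vec)) for vec in vectors]
    weights = softmax(scores)
    pooled = [sum(w * vec[d] for w, vec in zip(weights, vectors))
              for d in range(EMBED_DIM)]
    # Dense layer with a tanh activation to introduce non-linearity.
    hidden = [math.tanh(h) for h in matvec(w_hidden, pooled)]
    return softmax(matvec(w_out, hidden))


probs = forward("the movie was great")
print(probs)  # two class probabilities; meaningless until the weights are trained
```

The output is only a valid probability distribution, not a useful prediction. Training would adjust the four weight tables, which is where the hyperparameter tuning mentioned above comes in.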

Tree-like Micromodels

Tree-like Micromodels are a variation of the Layered architecture, where each layer is represented as a decision tree. This architecture is particularly useful for tasks that involve hierarchical classification or regression, such as document categorization or sentiment analysis.

The key characteristics of Tree-like Micromodels include the use of decision trees, which recursively partition the input space into smaller sub-spaces based on the input features. The decision trees are typically shallow, with a small number of levels, to prevent overfitting.

One of the advantages of Tree-like Micromodels is their ability to handle high-dimensional input data and reduce overfitting. They are also relatively fast to train and can be parallelized efficiently. However, they may not capture complex relationships between features as effectively as Layered Micromodels.
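The recursive partitioning and depth limit described above can be sketched with a small decision-tree builder. This is a simplified illustration of a single shallow tree on boolean features, not a full Tree-like Micromodel. The feature names, the ticket categories, and the choice of Gini impurity as the split criterion are assumptions for the example.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 when all labels in the group agree."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def build_tree(rows, labels, max_depth=2):
    """Recursively split on the boolean feature with the lowest weighted impurity."""
    majority = Counter(labels).most_common(1)[0][0]
    if max_depth == 0 or len(set(labels)) == 1:
        return majority  # leaf node
    best = None
    for feature in rows[0]:
        yes = [lab for row, lab in zip(rows, labels) if row[feature]]
        no = [lab for row, lab in zip(rows, labels) if not row[feature]]
        if not yes or not no:
            continue  # this feature does not separate anything
        score = (len(yes) * gini(yes) + len(no) * gini(no)) / len(labels)
        if best is None or score < best[0]:
            best = (score, feature)
    if best is None:
        return majority
    feature = best[1]
    yes_idx = [i for i, row in enumerate(rows) if row[feature]]
    no_idx = [i for i, row in enumerate(rows) if not row[feature]]
    return {
        "feature": feature,
        "yes": build_tree([rows[i] for i in yes_idx],
                          [labels[i] for i in yes_idx], max_depth - 1),
        "no": build_tree([rows[i] for i in no_idx],
                         [labels[i] for i in no_idx], max_depth - 1),
    }


def predict(tree, row):
    while isinstance(tree, dict):
        tree = tree["yes"] if row[tree["feature"]] else tree["no"]
    return tree


# Made-up document features for a categorization task.
ROWS = [
    {"mentions_price": True, "mentions_bug": False},
    {"mentions_price": True, "mentions_bug": False},
    {"mentions_price": False, "mentions_bug": True},
    {"mentions_price": False, "mentions_bug": True},
    {"mentions_price": False, "mentions_bug": False},
    {"mentions_price": False, "mentions_bug": False},
]
LABELS = ["billing", "billing", "technical", "technical", "other", "other"]

tree = build_tree(ROWS, LABELS, max_depth=2)
print(tree)
print(predict(tree, {"mentions_price": False, "mentions_bug": True}))
```

Keeping `max_depth` small is the guard against overfitting mentioned above: the tree cannot grow enough branches to memorize individual training rows.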

Graph-based Micromodels

Graph-based Micromodels are a type of Micromodel that represents the input data as a graph, where nodes represent entities and edges represent relationships between them. This architecture is particularly useful for tasks that involve entity recognition, relationship extraction, or graph classification.

One of the key characteristics of Graph-based Micromodels is the use of graph neural networks (GNNs), which can efficiently process graph-structured data and capture complex relationships between entities. The GNNs typically consist of multiple layers, each of which processes the graph in a different way.

The advantages of Graph-based Micromodels include their ability to capture complex relationships between entities and handle graph-structured data. They are also relatively fast to train and can be parallelized efficiently. However, they may require careful tuning of hyperparameters and can be computationally expensive for large graphs.
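The core idea behind a GNN layer, each node updating its representation from its neighbours, can be sketched without any learned weights. The example below is a deliberately simplified stand-in for a real GNN layer: it only averages feature vectors, and the entity graph and one-hot features are made up for illustration.

```python
# Hypothetical entity graph: nodes are entities, edges are relationships.
GRAPH = {
    "Alice": ["Acme"],
    "Acme": ["Alice", "Berlin"],
    "Berlin": ["Acme"],
}

# One-hot features: [is_person, is_organization, is_location]
FEATURES = {
    "Alice": [1.0, 0.0, 0.0],
    "Acme": [0.0, 1.0, 0.0],
    "Berlin": [0.0, 0.0, 1.0],
}


def message_passing_step(graph, features):
    """Replace each node's vector with the mean of its own and its neighbours' vectors."""
    updated = {}
    for node, neighbours in graph.items():
        group = [features[node]] + [features[n] for n in neighbours]
        dim = len(features[node])
        updated[node] = [sum(vec[d] for vec in group) / len(group) for d in range(dim)]
    return updated


h1 = message_passing_step(GRAPH, FEATURES)  # one layer: direct neighbours only
h2 = message_passing_step(GRAPH, h1)        # two layers: neighbours of neighbours

print(h1["Alice"])  # no location signal yet
print(h2["Alice"])  # location signal from Berlin arrives via Acme
```

Stacking steps is what lets information travel further across the graph, which mirrors the multi-layer structure described above. A real GNN would also multiply by learned weight matrices and apply an activation function at each step.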

Applications of Micromodels in NLP

Machine learning-based micromodels in NLP have numerous applications across various industries, enhancing language understanding and processing capabilities. These applications showcase the versatility of micromodels in handling complex language tasks efficiently.

Micromodels have been successfully applied in several areas of NLP, improving the accuracy and efficiency of language processing tasks. These applications leverage the strengths of micromodels, such as their ability to capture localized patterns and relationships within language data.

Language Translation

Micromodels have been effectively used in language translation tasks, enabling machines to understand and generate accurate translations of languages. In this application, micromodels are trained on large datasets of parallel text, where each source sentence is paired with its translation in the target language. By capturing localized patterns and relationships within these datasets, micromodels can generate more accurate and nuanced translations.

- Machine translation systems rely on micromodels to translate languages with higher accuracy, especially for languages with less available resources.

- Micromodels can be fine-tuned for specific language pairs, adapting to the unique characteristics of the languages being translated.

- By leveraging micromodels, language translation systems can handle complex texts, including idioms, colloquial expressions, and specialized terminology.

Text Summarization

Micromodels are also applied in text summarization tasks, enabling machines to automatically generate concise summaries of long documents. In this application, micromodels are trained on large datasets of articles, documents, or other texts, where they learn to identify the most important information and rephrase it in a concise manner.

- Text summarization systems using micromodels can identify key points and supporting details in documents, ensuring that the final summary accurately reflects the content.

- Micromodels can be fine-tuned for specific domains or industries, adapting to the unique characteristics of the text being summarized.

- By leveraging micromodels, text summarization systems can handle long and complex texts, including technical documents, academic papers, and news articles.

Sentiment Analysis

Micromodels are also applied in sentiment analysis tasks, enabling machines to identify and categorize opinions and emotions expressed in text. In this application, micromodels are trained on large datasets of labeled text, where the text is annotated with its corresponding sentiment (positive, negative, neutral, etc.).

- Sentiment analysis systems using micromodels can identify the overall sentiment of text, including emotions and opinions, with high accuracy.

- Micromodels can be fine-tuned for specific domains or industries, adapting to the unique language and tone used in the text being analyzed.

- By leveraging micromodels, sentiment analysis systems can handle long and complex texts, including social media posts, product reviews, and customer feedback.

Future Directions in Micromodel Research

As micromodel research continues to evolve, it is essential to consider future directions that can further enhance the capabilities of these models. The potential benefits and challenges of these directions will be discussed below.

Integration with Other AI Techniques

The integration of micromodels with other AI techniques, such as deep learning and reinforcement learning, can lead to the development of more powerful and versatile models. This integration can enable micromodels to leverage the strengths of other AI techniques and improve their performance on complex tasks. For example, combining micromodels with deep learning can enable the models to learn from large datasets and improve their accuracy.

- Transfer learning: Micromodels can be integrated with other AI techniques to enable transfer learning, where a pre-trained model is fine-tuned for a specific task.

- Multi-task learning: Micromodels can be integrated with multiple AI techniques to enable multi-task learning, where a single model learns to perform multiple tasks simultaneously.

- Adversarial training: Micromodels can be integrated with other AI techniques to enable adversarial training, where a model is trained to be robust against adversarial attacks.

Development of New Micromodel Architectures

New micromodel architectures can be developed to enable the models to perform more complex tasks and handle larger datasets. These new architectures can be designed to take advantage of the strengths of micromodels, such as their ability to learn from small datasets. For example, a new micromodel architecture can be developed to enable the models to learn from sequential data.

- RNN-based micromodels: RNN-based micromodels can be developed to enable the models to learn from sequential data, such as text or speech.

- Transformer-based micromodels: Transformer-based micromodels can be developed to enable the models to process all positions of an input in parallel through self-attention, for data such as text or images.

- Graph-based micromodels: Graph-based micromodels can be developed to enable the models to learn from complex relationships between data points.

Application to Other Domains

Micromodels can be applied to domains beyond NLP, such as computer vision, speech recognition, and recommendation systems. These applications can help to improve the performance of the models and enable them to handle more complex tasks.

- Image classification: Micromodels can be applied to image classification tasks to enable the models to learn from images and classify them into different categories.

- Speech recognition: Micromodels can be applied to speech recognition tasks to enable the models to learn from speech data and recognize spoken words.

- Recommendation systems: Micromodels can be applied to recommendation systems to enable the models to learn from user behavior and recommend personalized products.

Closing Notes

In conclusion, micromodels offer a modular and efficient way to tackle specific NLP tasks. As research continues to advance and new applications emerge, understanding their principles, benefits, and limitations will help developers decide where they fit in larger AI systems.

Common Queries

What is the primary advantage of micromodels in machine learning NLP?

Micromodels provide a more accurate and nuanced understanding of complex tasks, enabling researchers and developers to unlock new applications and improve existing ones.

How do micromodels differ from traditional machine learning models?

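Micromodels are small, modular models that each focus on a specific task and can combine symbolic reasoning with connectionist learning, whereas traditional models are typically large networks trained on vast amounts of data.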

What are some common applications of micromodels in machine learning NLP?

Micromodels are used in language translation, text summarization, sentiment analysis, and other NLP applications.

What are some potential future directions in micromodel research?

Future research directions include integrating micromodels with other AI techniques, developing new micromodel architectures, and applying them to other domains.