Data annotation tools sit at the center of modern machine learning, because accurate and reliable labeled data is the backbone of model training. The importance of data annotation cannot be overstated: it directly affects model performance, influencing the accuracy, precision, and scalability of the resulting models. Industries such as healthcare, autonomous vehicles, and speech recognition rely heavily on data annotation to develop high-performing models.

Data annotation is a time-consuming and labor-intensive process, requiring human expertise and skills in natural language processing, computer vision, and other specialized areas. To overcome these challenges, industries have turned to data annotation tools and platforms that support collaborative annotation, real-time feedback, and automation features, ensuring high-quality annotations that significantly impact model performance.

Data Annotation Tools and Platforms

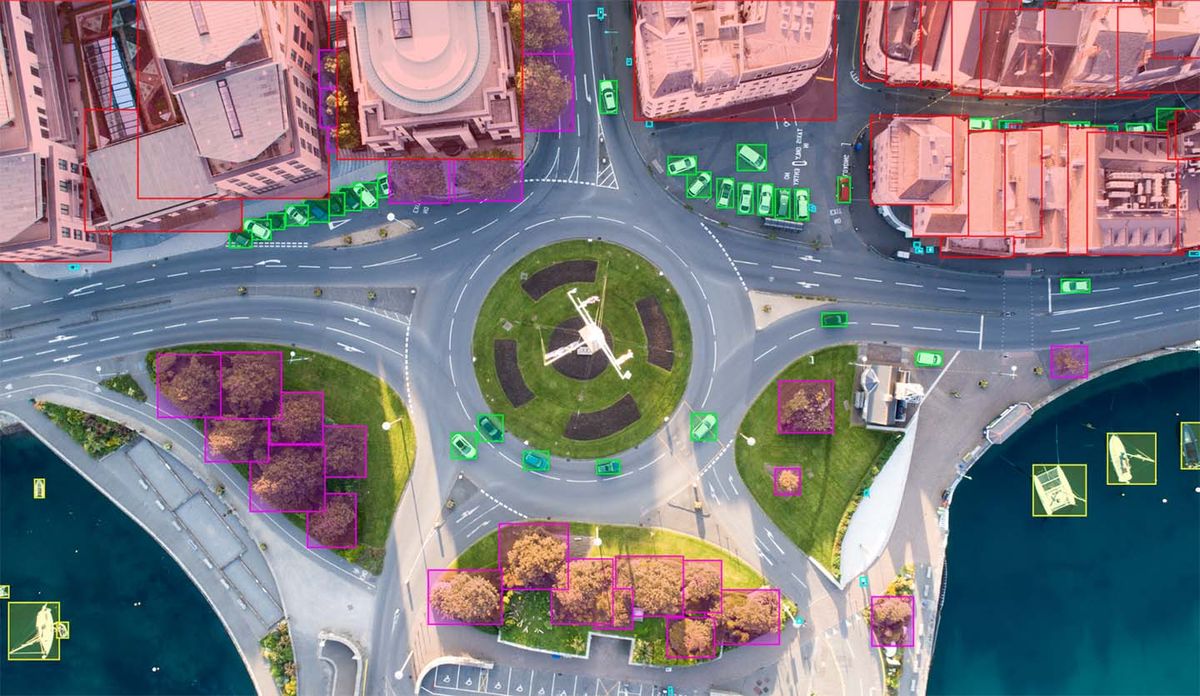

Data annotation is a crucial step in the machine learning pipeline, as high-quality training data enables models to make accurate predictions. To facilitate efficient and accurate data annotation, various tools and platforms have been developed. In this section, we will discuss some popular data annotation tools and their key features.

These tools cater to different annotation needs, such as multi-label classification, object detection, and sentiment analysis. Each tool offers its own set of features, which makes it better suited to some use cases than others.

Labelbox: A Collaborative Annotation Environment

Hive: A Flexible Annotation Platform with Real-Time Collaboration

Annotate.ai: Focus on Computer Vision Tasks

- Labelbox:

- Provides a collaborative annotation environment

- Supports multiple data types, including text, images, and audio

- Offers a user-friendly interface and customizable workflows

- Hive:

- Offers a flexible annotation platform

- Enables real-time collaboration and version control

- Supports multiple annotation tasks, including text classification and object detection

- Annotate.ai:

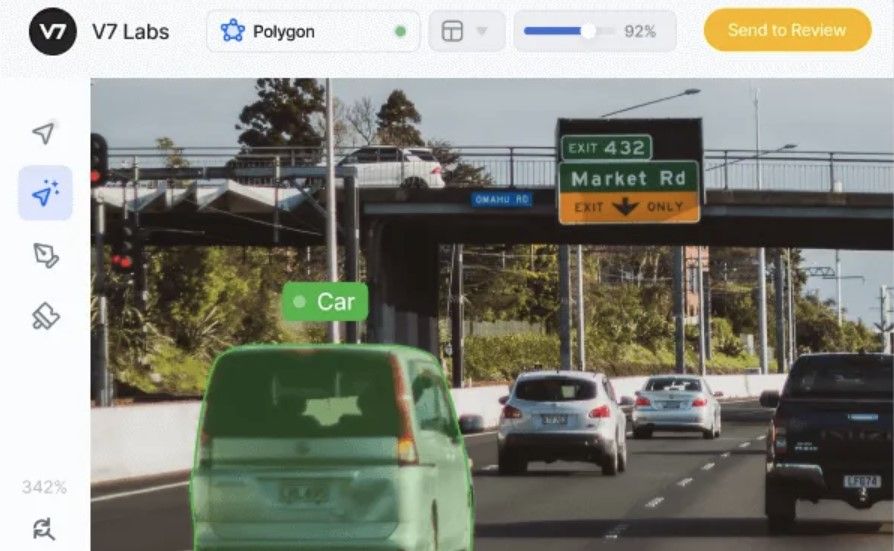

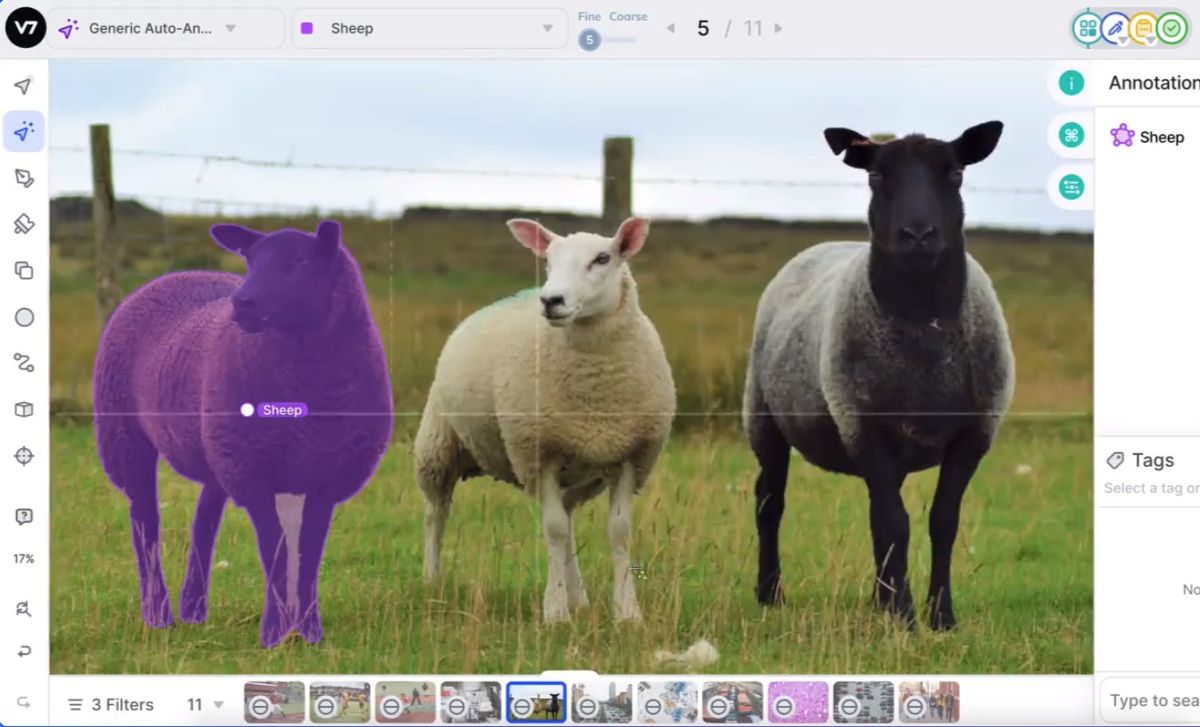

- Focuses on computer vision tasks, including object detection and image segmentation

- Provides a user-friendly interface and customizable workflows

- Supports real-time collaboration and version control

Best Practices for Data Annotation

In the world of machine learning, high-quality data is the backbone of accurate model training. To achieve this, data annotation plays a crucial role. Effective data annotation involves more than just slapping labels on data; it’s a delicate process that requires precision, consistency, and attention to detail. In this section, we’ll dive into the best practices for data annotation, exploring the importance of data quality and consistency, creating annotation guidelines and dictionaries, and sharing strategies for annotating data efficiently and effectively.

Use Clear and Concise Annotation Guidelines

Clear annotation guidelines are essential for ensuring that all annotations are consistent and accurate. When creating guidelines, consider the following:

- Define clear and concise annotation categories to avoid confusion and overlap. For instance, you can create categories for objects, actions, emotions, or locations.

- Establish specific rules for annotation syntax and formatting. This can include guidelines for punctuation, capitalization, and spelling.

- Provide examples of annotated data to demonstrate how the guidelines should be applied in practice.

Having well-crafted guidelines will help reduce inconsistencies and errors, ultimately leading to a higher quality dataset.
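Guidelines are easier to enforce when part of them can be checked automatically. The following is a minimal sketch, assuming annotations are stored as dictionaries with `category` and `label` keys. The schema, the category names, and the `validate_annotation` helper are all hypothetical and only illustrate the idea.

```python
# Hypothetical guideline schema: the categories and rules are illustrative only.
GUIDELINES = {
    "categories": {"object", "action", "emotion", "location"},
    "lowercase_labels": True,
}


def validate_annotation(annotation: dict, guidelines: dict = GUIDELINES) -> list[str]:
    """Return a list of guideline violations for one annotation (empty if none)."""
    problems = []
    category = annotation.get("category")
    label = annotation.get("label", "")

    # Rule 1: the category must be one of the categories the guidelines define.
    if category not in guidelines["categories"]:
        problems.append(f"unknown category: {category!r}")

    # Rule 2: formatting rules for the label text.
    if not label.strip():
        problems.append("empty label")
    elif label != label.strip():
        problems.append("label has leading or trailing whitespace")
    if guidelines["lowercase_labels"] and label != label.lower():
        problems.append("label must be lowercase")

    return problems


ok = validate_annotation({"category": "object", "label": "car"})
bad = validate_annotation({"category": "vehicle", "label": "Car"})
```

Here `ok` is an empty list, while `bad` reports both the unknown category and the capitalized label. A check like this could run whenever an annotation is submitted, so that formatting errors are caught before they reach the dataset.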

Establish a Standardized Naming Convention for Annotations

A standardized naming convention for annotations can greatly simplify the annotation process and improve collaboration among annotators. Consider the following:

- Develop a consistent naming convention for annotation categories and labels. This can include using a specific notation for categories, such as “obj1” for object 1.

- Create a dictionary or glossary of annotation terms to ensure that all annotators are using the same language. This can help reduce confusion and errors.

- Use a consistent naming convention across all annotation data to facilitate easy querying and analysis.

Having a standardized naming convention will make it easier to manage and analyze the annotated data, ultimately leading to better insights and decision-making.
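As a rough illustration, a glossary can be kept as a simple mapping from the terms annotators tend to type to one canonical label name. The glossary entries, the dotted naming scheme, and the `normalize_label` helper below are assumptions made for this sketch, not a convention prescribed by any particular tool.

```python
# Hypothetical glossary: maps free-form variants to one canonical label name.
GLOSSARY = {
    "car": "vehicle.car",
    "automobile": "vehicle.car",
    "truck": "vehicle.truck",
    "person": "person",
    "pedestrian": "person",
}


def normalize_label(raw: str, glossary: dict = GLOSSARY) -> str:
    """Map a raw annotator label to its canonical name, or fail loudly."""
    key = raw.strip().lower()
    if key not in glossary:
        # Unknown terms are rejected rather than silently stored.
        raise KeyError(f"label {raw!r} is not in the glossary")
    return glossary[key]


canonical = normalize_label(" Automobile ")
```

In this example `canonical` is `"vehicle.car"`. Rejecting unknown terms, instead of accepting them as new labels, keeps the label set from drifting as more annotators join a project.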

Utilize Active Learning Strategies to Prioritize High-Impact Annotations

Active learning strategies can help ensure that annotators focus on high-impact annotations, such as those that are most relevant to the machine learning model or have the greatest effect on the output. Consider the following:

- Identify the most relevant and impactful annotations based on the machine learning model’s requirements. For instance, you can prioritize annotations related to the model’s main objectives or decision-making processes.

- Annotate a small sample of data first to understand the distribution of annotations and identify areas where active learning strategies can be applied.

- Implement active learning strategies, such as uncertainty sampling, to prioritize annotations that have the greatest impact on the model.

By utilizing active learning strategies, you can optimize the annotation process and ensure that the most valuable annotations are completed first.
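Uncertainty sampling, mentioned above, can be sketched in a few lines. This example uses the least-confidence variant: items whose highest predicted class probability is lowest are sent to annotators first. The item ids and probabilities are made up for illustration, and `least_confident` is a hypothetical helper name.

```python
def least_confident(probabilities: dict[str, list[float]], k: int) -> list[str]:
    """Return the ids of the k items whose top predicted probability is lowest."""
    # A low maximum probability means the model is unsure which class applies.
    ranked = sorted(probabilities, key=lambda item_id: max(probabilities[item_id]))
    return ranked[:k]


# Made-up model outputs: one probability per class for each unlabeled item.
predictions = {
    "img_001": [0.98, 0.01, 0.01],
    "img_002": [0.40, 0.35, 0.25],
    "img_003": [0.55, 0.44, 0.01],
    "img_004": [0.90, 0.05, 0.05],
}

queue = least_confident(predictions, k=2)
```

With these numbers `queue` is `["img_002", "img_003"]`, the two items the model is least sure about. Other uncertainty measures, such as the margin between the top two classes or the entropy of the distribution, would slot into the same `key` function.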

Scalability and Automation in Data Annotation

In machine learning model development, the quality and quantity of training data play crucial roles. As datasets grow, manual data annotation becomes increasingly time-consuming and prone to human error. Therefore, achieving scalability in data annotation is vital to support the development and deployment of machine learning models.

To improve scalability, data annotation workflows need to be optimized for efficiency and productivity. This can be achieved by automating certain annotation tasks and leveraging collaboration tools to reduce the workload of human annotators.

Automation and Machine Learning in Data Annotation

Machine learning can automate annotation tasks that do not require human judgment, and it can reduce the volume of work that does. One example is active learning, which selects the most informative data points for human annotation. This approach can significantly reduce the amount of data that humans need to annotate, increasing scalability and efficiency.

Another area where machine learning can be applied is in the creation of data annotation templates and guidelines. By using natural language processing and machine learning algorithms, these templates can be generated automatically, reducing the time and effort required to create and maintain them.

Tools Supporting Annotation Workflows and Automation

Several data annotation tools and platforms provide features that support annotation workflows and automation. Here are some examples:

Labelbox, for instance, offers an automated labeling feature that can be used for simple annotation tasks such as image classification. Hive, on the other hand, provides integration with machine learning algorithms, making it suitable for more complex annotation tasks. Annotate.ai is a computer vision-focused platform that offers automated object detection and segmentation capabilities.

Human-Annotated Data and Model Evaluation

In the world of machine learning, model evaluation is a crucial step in ensuring that our models are accurate, reliable, and perform well in real-world scenarios. Human-annotated data plays a vital role in this process, as it provides a gold standard against which our model’s predictions can be evaluated. The quality of our model’s performance is directly tied to the quality of the data used to train and evaluate it, making human-annotated data an essential component of the machine learning pipeline.

Metrics and Techniques for Evaluating Model Performance

When it comes to evaluating model performance, there are several key metrics and techniques that can be used. These metrics and techniques provide a comprehensive understanding of how well our model is performing and help identify areas for improvement.

- Use metrics such as precision, recall, and F1 score to evaluate model performance

`Precision = TP / (TP + FP)`

`Recall = TP / (TP + FN)`

`F1 score = 2 * (Precision * Recall) / (Precision + Recall)`

Here TP, FP, and FN are the counts of true positives, false positives, and false negatives. Precision and recall are two fundamental metrics used to evaluate model performance. Precision measures the proportion of true positives out of all positive predictions, while recall measures the proportion of true positives out of all actual positive instances. The F1 score, the harmonic mean of precision and recall, summarizes both in a single number.

- Compare multiple models using techniques like A/B testing and model selection

A/B testing involves serving two model variants to separate, randomly assigned groups of users or requests and comparing their outcomes on the same metric. Model selection, on the other hand, involves evaluating multiple models on the same held-out dataset and selecting the best-performing model.

- Utilize techniques like model interpretability and feature importance to identify areas for improvement

Model interpretability involves analyzing the decisions made by a model to understand why it made certain predictions. Feature importance, a measure of how much each feature contributes to the model’s predictions, can show which features the model relies on too heavily or ignores, which may point to overfitting or to gaps in the training data.
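The precision, recall, and F1 formulas above translate directly into code. The sketch below assumes a binary task where human-annotated labels serve as ground truth. The function name and the sample labels are illustrative, and returning 0.0 when a denominator is zero is a convention chosen here, not a universal rule.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    # Guard against division by zero when there are no positive
    # predictions or no actual positives.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Human-annotated gold labels versus model predictions (made-up values).
gold = [1, 1, 1, 0, 0, 1]
predicted = [1, 0, 1, 1, 0, 1]

precision, recall, f1 = precision_recall_f1(gold, predicted)
```

In this example there are 3 true positives, 1 false positive, and 1 false negative, so precision, recall, and F1 all come out to 0.75.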

Challenges and Limitations in Data Annotation

Data annotation is a crucial step in the machine learning pipeline, but it’s not without its challenges. One of the primary concerns is data quality. Annotated data can be noisy, incomplete, or inconsistent, which can lead to biased models and poor performance.

Data Quality Issues

Data quality issues arise from various sources, including:

- Data sampling bias: If the data sample is not representative of the population, the model may not generalize well to new, unseen data.

- Noisy or incomplete labels: Labels that are incorrect or incomplete can lead to poor model performance.

- Inconsistent annotations: Differing annotation guidelines or quality control processes can result in inconsistent annotations.

- Class imbalance: When one class has significantly more instances than others, it can skew the model’s performance and learning.

To address these issues, establish clear annotation guidelines, use active learning strategies, and implement feedback loops that catch errors early. Doing so mitigates the effects of poor data quality and improves the overall performance of machine learning models.
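Some of the issues listed above, class imbalance in particular, can be spotted with a quick count before any training starts. This is a minimal sketch using only the standard library. The helper names and the example labels are hypothetical, and what counts as a worrying ratio depends on the task.

```python
from collections import Counter


def class_shares(labels):
    """Return the fraction of the dataset that each class accounts for."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def imbalance_ratio(labels):
    """Return the size of the largest class divided by the smallest."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    return max(counts.values()) / min(counts.values())


labels = ["cat"] * 8 + ["dog"] * 2
shares = class_shares(labels)
ratio = imbalance_ratio(labels)
```

Here `shares` is `{"cat": 0.8, "dog": 0.2}` and `ratio` is `4.0`, meaning the majority class has four times as many examples as the minority class.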

Annotator Variability and Biases

Annotator variability and biases can also impact the quality of annotated data. Annotators may have different levels of expertise, cultural backgrounds, or linguistic preferences, which can lead to inconsistent or biased annotations.

- Annotator bias: Annotators may bring their personal biases to the task, which can result in biased annotations.

- Culture and language bias: Annotators may have cultural or linguistic backgrounds that influence their annotation decisions.

- Inconsistent annotation standards: Differences in annotation standards or guidelines across annotators can lead to inconsistent annotations.

To mitigate these biases and variability, it’s crucial to implement strategies that promote annotator consistency and quality control. This includes providing clear guidelines, utilizing active learning strategies, and implementing feedback loops to ensure data quality.
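Annotator variability is easier to manage once it is measured. One common measure, not named in the text above and introduced here as an assumption, is Cohen's kappa, which compares how often two annotators agree with how often they would agree by chance. The sketch below assumes both annotators labeled the same items in the same order.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item list")
    n = len(labels_a)

    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Expected agreement by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if expected == 1.0:
        # Both annotators used a single identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


annotator_a = ["pos", "pos", "neg", "neg", "pos", "neg", "pos", "neg"]
annotator_b = ["pos", "neg", "neg", "neg", "pos", "neg", "pos", "pos"]

kappa = cohens_kappa(annotator_a, annotator_b)
```

The two annotators agree on 6 of 8 items (0.75), chance agreement is 0.5, and so `kappa` is 0.5. A value of 1 means perfect agreement and 0 means agreement no better than chance. Low values are often a sign that the guidelines need clarifying.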

Addressing Challenges through Active Learning and Feedback Loops

Active learning and feedback loops can help address challenges in data annotation by:

- Improving data quality: Active learning strategies select the most informative instances for annotation, so annotation and review effort goes to the examples that matter most to the model.

- Reducing annotator variability: Feedback loops provide annotators with feedback on their performance, allowing them to improve their annotation consistency.

- Increasing annotator efficiency: By selecting the most informative instances, active learning reduces the number of instances that need to be annotated, increasing annotator efficiency.

> By implementing active learning and feedback loops, we can ensure data quality and consistency, and improve the overall performance of our machine learning models.
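One simple form of feedback loop is to score each annotator against a small set of items whose correct labels are already known. The sketch below assumes such a gold set exists. The data layout, the annotator ids, and the `annotator_accuracy` helper are hypothetical.

```python
def annotator_accuracy(
    gold: dict[str, str], submissions: dict[str, dict[str, str]]
) -> dict[str, float]:
    """Return each annotator's accuracy on the items that appear in the gold set."""
    report = {}
    for annotator, labels in submissions.items():
        # Only items with a known correct label can be scored.
        scored = [item for item in labels if item in gold]
        if not scored:
            continue
        correct = sum(labels[item] == gold[item] for item in scored)
        report[annotator] = correct / len(scored)
    return report


gold = {"t1": "pos", "t2": "neg", "t3": "pos", "t4": "neg"}
submissions = {
    "ann_a": {"t1": "pos", "t2": "neg", "t3": "pos", "t4": "pos"},
    "ann_b": {"t1": "neg", "t2": "neg", "t9": "pos"},
}

report = annotator_accuracy(gold, submissions)
```

Here `report` is `{"ann_a": 0.75, "ann_b": 0.5}`. Item `t9` is ignored because it has no gold label. Sharing scores like these with annotators, together with the items they got wrong, is one way to close the loop.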

Final Summary: Best Data Annotation Tools For Machine Learning

Ultimately, the best data annotation tools for machine learning are those that provide seamless collaborative annotation, real-time feedback, and automation features, ensuring high-quality annotations that significantly impact model performance. With the rapidly evolving landscape of machine learning, having the right data annotation tools can be the difference between developing robust models and those that falter. Therefore, it is essential to explore and understand the various data annotation tools and platforms available, carefully selecting the ones that best suit your industry’s needs.

General Inquiries

What is data annotation, and why is it essential for machine learning?

Data annotation is the process of labeling and categorizing data to prepare it for use in machine learning models. It is critical for machine learning as it directly affects the accuracy and performance of these models.

What are the different types of data annotation tasks?

Common data annotation tasks include text classification, object detection, sentiment analysis, and others, each requiring specific skills and expertise.

How can I ensure the quality of my annotated data?

To ensure data quality, use clear annotation guidelines, establish a standardized naming convention, and implement active learning strategies to prioritize high-impact annotations.