Web archiving has become an essential tool for preserving online content for future generations, and the Wayback Machine is not the only way to do it. Online information changes constantly, which makes it hard to keep a record of the past. Without a reliable method of web archiving, much of our digital heritage is at risk of being lost.

The Wayback Machine, launched by the Internet Archive in 2001, has been a pioneering effort in web archiving. However, its limitations have led to the need for alternative solutions that can meet the growing demands of preserving online content.

Archiving Alternatives

Beyond the Wayback Machine, several alternatives offer archiving solutions for internet content. They cater to different needs and provide a range of features that help preserve web resources. This section explores some of the notable ones.

Internet Archive

The Internet Archive is the non-profit organization that operates the Wayback Machine, so it is less an alternative than the broader home of that service. It has been preserving web content since 1996 (the Wayback Machine interface followed in 2001) and stores over 20 petabytes of data. Beyond archived websites, it provides access to a vast collection of books, articles, and multimedia content, and its search capabilities help users find and explore archived material efficiently.

- Advanced Search: The Internet Archive offers an advanced search feature, allowing users to filter results based on date, type, and other relevant criteria.

- Archiving Process: The organization uses a combination of automated crawlers and user-submitted content to archive websites.

- Preservation Efforts: The Internet Archive collaborates with libraries, museums, and other institutions to ensure the long-term preservation of digital content.
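As a rough illustration of how archived content can be looked up programmatically, the sketch below builds a query for the Internet Archive's public availability endpoint (`https://archive.org/wayback/available`) and reads the closest snapshot out of a response. The helper names and the sample response values are illustrative assumptions; no network request is made here.

```python
import json
from urllib.parse import urlencode

AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def build_availability_url(page_url, timestamp=None):
    """Build a query URL asking for the snapshot closest to a timestamp."""
    params = {"url": page_url}
    if timestamp:
        params["timestamp"] = timestamp  # e.g. "20060101" (YYYYMMDD)
    return f"{AVAILABILITY_ENDPOINT}?{urlencode(params)}"


def closest_snapshot(response_text):
    """Return the URL of the closest snapshot, or None if none is listed."""
    data = json.loads(response_text)
    closest = data.get("archived_snapshots", {}).get("closest")
    if closest and closest.get("available"):
        return closest["url"]
    return None


# Illustrative response in the shape the endpoint returns (values are made up).
sample_response = json.dumps({
    "archived_snapshots": {
        "closest": {
            "available": True,
            "url": "http://web.archive.org/web/20060101064348/http://www.example.com:80/",
            "timestamp": "20060101064348",
            "status": "200",
        }
    }
})

query_url = build_availability_url("example.com", "20060101")
snapshot_url = closest_snapshot(sample_response)
print(query_url)
print(snapshot_url)
```

In practice the query URL would be fetched with an HTTP client and the response body passed to `closest_snapshot`.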

Google Cache

Google Cache stored temporary snapshots of pages that Google had crawled. It was never a primary archival source, and Google retired its public cached-page links in 2024, so it should no longer be counted on for recovering deleted content. Because it depended on Google’s crawling process, it often did not capture entire websites, especially those with complex structures.

- Temporal Archiving: Google Cache kept snapshots for a limited time only, typically up to a few weeks or months.

- Partial Archiving: Google Cache often missed certain aspects of a site, such as user-generated content or dynamically generated pages.

- Backup Functionality: Website owners could use Google Cache as a stopgap backup, but not as a dependable one.

Libraries’ Digital Collections

Libraries play a significant role in preserving digital content through their digital collections. These collections often feature a wide range of materials, including e-books, articles, and other forms of digital media. By leveraging libraries’ digital collections, users can access a vast array of information on various topics.

- Preservation Efforts: Libraries collaborate with other institutions to ensure the long-term preservation of digital content.

- Organization and Curation: Libraries organize and curate digital collections using standardized metadata and taxonomy systems.

- Public Access: Libraries make their digital collections available to the public, promoting accessibility and knowledge sharing.

Creative Commons

Creative Commons is an organization that provides a set of licenses for creators to share their work under specific terms. It is not an archive itself, but its licenses let authors grant permission for their work to be shared, adapted, or reused, which makes it easier for others to copy and preserve that work legally.

- License Types: Creative Commons offers various license types, including Attribution, ShareAlike, and NonCommercial, allowing creators to choose the right terms for their work.

- Community Engagement: Creative Commons fosters a community of creators and contributors who share knowledge and collaborate on projects.

- Clear Reuse Terms: Creative Commons licenses state up front what others may do with a work, which can reduce copyright disputes. They operate alongside fair use rather than replacing or expanding it.

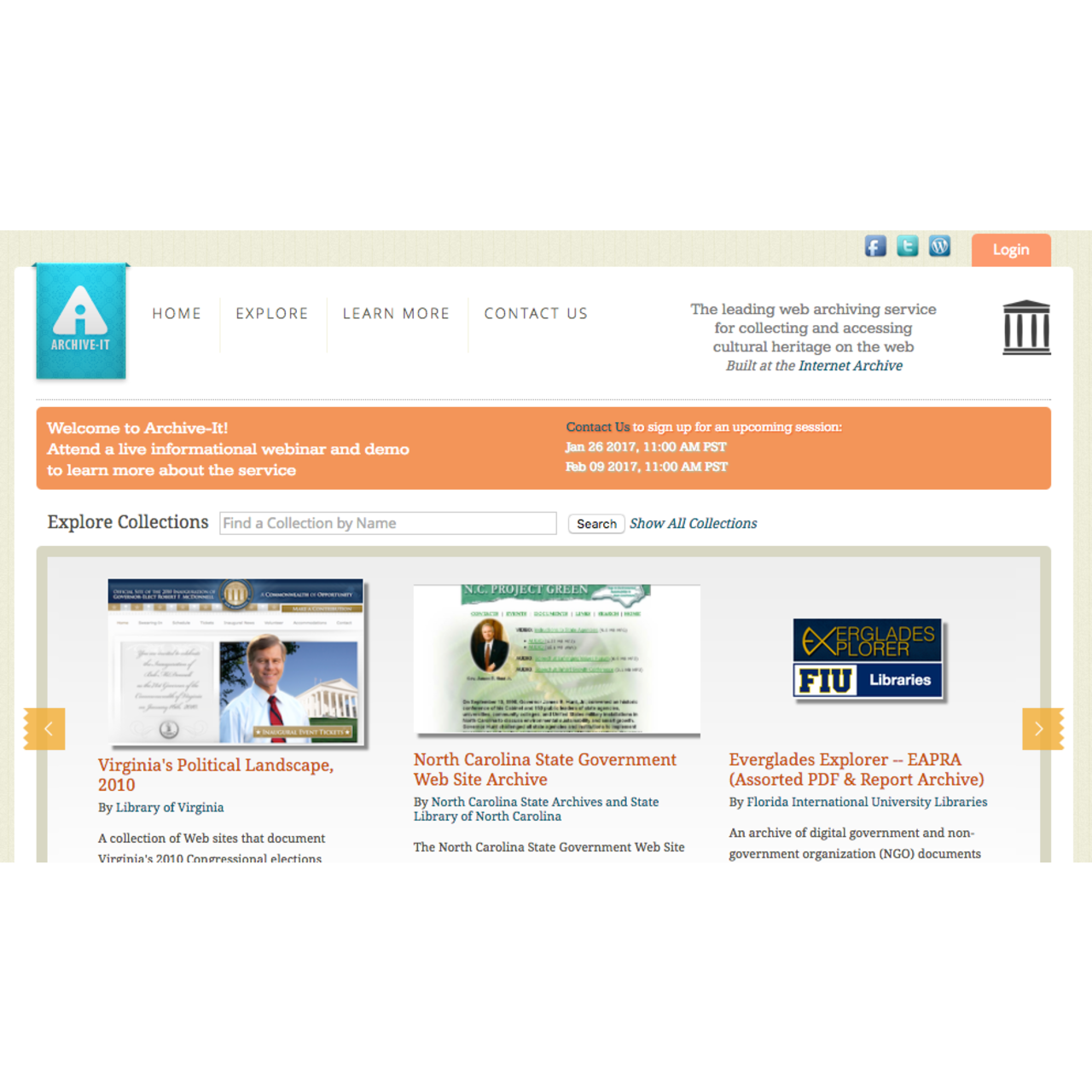

Institutional Web Archiving

Institutional web archiving plays a vital role in preserving the digital heritage of organizations, institutions, and communities. As the web continues to grow and evolve, institutions are taking proactive steps to capture and preserve their online presence, ensuring that their digital footprint remains accessible for future generations.

Institutional web archiving involves the systematic collection, preservation, and maintenance of online content, often with the goal of documenting an organization’s history, activities, and achievements. This can include websites, social media, blogs, online documents, and other digital materials that are important for the institution’s operations, research, or public engagement.

Creating a Web Archiving System

Institutions can create and manage their own web archiving systems, which typically involve the following components:

- Content selection and crawling: Identifying the online content to be archived and using crawlers to extract and collect the data.

- Storage and preservation: Storing the archived content in a secure and durable repository, ensuring that it remains accessible and usable over time.

- Metadata creation and management: Capturing and managing metadata about the archived content, such as descriptions, timestamps, and provenance information.

- Access and delivery: Making the archived content available for retrieval and reuse, often through a dedicated portal or repository.

These components require careful planning, technical expertise, and ongoing maintenance to ensure the integrity and usability of the archived content.
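The four components above can be sketched in a few lines. This is a minimal in-memory illustration, not a production design: the class and field names are hypothetical, the "crawl" step is replaced by bytes passed in directly, and a real system would typically write a standard container format such as WARC instead.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class ArchiveRecord:
    """One capture: the payload plus the metadata needed to trust it later."""
    url: str
    captured_at: str   # ISO 8601 timestamp (provenance)
    sha256: str        # fixity value for later integrity checks
    content_type: str
    body: bytes


class InMemoryArchive:
    """Hypothetical store covering storage, metadata, and access."""

    def __init__(self):
        self._records = {}  # url -> list of ArchiveRecord, oldest first

    def store(self, url, body, content_type="text/html", captured_at=None):
        captured_at = captured_at or datetime.now(timezone.utc).isoformat()
        record = ArchiveRecord(
            url, captured_at, hashlib.sha256(body).hexdigest(), content_type, body
        )
        self._records.setdefault(url, []).append(record)
        return record

    def latest(self, url):
        """Access: return the most recent capture of a URL, if any."""
        captures = self._records.get(url, [])
        return captures[-1] if captures else None

    def verify(self, record):
        """Preservation: confirm the stored bytes still match their checksum."""
        return hashlib.sha256(record.body).hexdigest() == record.sha256


archive = InMemoryArchive()
record = archive.store(
    "https://example.org/", b"<html>hello</html>",
    captured_at="2024-01-01T00:00:00+00:00",
)
print(archive.latest("https://example.org/").captured_at, archive.verify(record))
```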

Major Archiving Institutions

Several institutions have made significant contributions to web archiving, including:

| Institution | Focus | Key Initiatives |
|---|---|---|
| The Internet Archive | Preserving web content and culture | WARC format, web crawling, and digital preservation |
| The International Internet Preservation Consortium (IIPC) | Collaborative web archiving efforts | Shared repository, crawling tools, and community engagement |
| Portico | Preserving scholarly content | E-journal archiving, deposit services, and content verification |

These institutions and many others play a crucial role in advancing web archiving practices, promoting digital preservation, and making cultural and historical content accessible to the public.

Benefits and Challenges

Institutional web archiving offers several benefits, including:

* Preserving digital cultural heritage for future generations

* Enhancing the durability and accessibility of online content

* Supporting research, teaching, and outreach activities

* Facilitating collaboration and community engagement

However, web archiving also poses challenges, such as:

* Keeping up with the rapid pace of online content creation and evolution

* Balancing preservation needs with storage and resource constraints

* Ensuring the long-term sustainability and usability of archived content

* Addressing issues of intellectual property, copyright, and cultural rights

“The web is a dynamic and ever-changing entity, making it essential for institutions to proactively capture and preserve their online presence.” – [Source: Internet Archive]

Community-Driven Web Archiving

Community-driven web archiving is a collaborative approach to preserving web content, where individuals and groups work together to identify, collect, and store digital artifacts from the web. This approach relies heavily on community involvement and participation to ensure the long-term preservation of web content.

Community members can contribute to web archiving efforts in various ways, including identifying and collecting relevant content, participating in decision-making processes, and assisting with the management of archived collections. By engaging with the community, archivists and librarians can gain a better understanding of the needs and interests of users, ultimately leading to the creation of more relevant and accessible digital archives.

Benefits of Community-Driven Web Archiving

Community-driven web archiving offers several benefits, including:

- Improved relevance and accuracy of archived content, as community members can provide valuable insights and expertise.

- Increased community engagement and participation, as individuals can take an active role in shaping the collection and preservation of digital artifacts.

- Enhanced accessibility of archived content, as community members can help to develop and implement effective access strategies.

- More robust and sustainable preservation practices, as community members can contribute to the development and maintenance of preservation infrastructure.

By leveraging the collective knowledge and expertise of community members, web archivists and librarians can create more comprehensive and inclusive digital archives that reflect the diverse needs and interests of users.

Examples of Community-Driven Web Archiving Initiatives

There are several examples of community-driven web archiving initiatives that have successfully leveraged community involvement to preserve web content:

- The Internet Archive’s “Save Page Now” feature, which lets anyone submit a web page for immediate capture in the Wayback Machine.

- The Archive Team, a community-driven collective that works to rescue and preserve web content from defunct websites and servers.

- The Web Curator Tool, a framework for community-driven web archiving that allows curators to identify, collect, and store web content.

These initiatives demonstrate the potential of community-driven approaches to web archiving and highlight the importance of community involvement in preserving digital artifacts.

Web Archiving for Specific Domains

Web archiving for specific domains is a crucial aspect of preserving the digital heritage of the internet. These domains include government websites, academic websites, and social media platforms, which play significant roles in shaping our understanding of the world and its history.

Government websites, such as official government portals, statistical databases, and online documents, contain valuable information on public policies, laws, and historical events. However, due to their dynamic nature, these websites are often subject to frequent updates, revisions, and even censorship, which can lead to loss of critical information. Archiving government websites requires careful consideration of data preservation, metadata collection, and compliance with existing regulations and laws.

Government Websites

- Preservation of public records and documents is crucial in ensuring transparency and accountability in governance.

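- The End of Term Web Archive, a collaborative project in which the Internet Archive takes part, is a notable example of web archiving for government websites: it captures US federal government sites around presidential transitions, so its collections span multiple administrations.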

- The US Congressional Website is another example of a website that has implemented web archiving practices to preserve its historical content.

Government websites are critical in preserving the historical record of public policy, legislation, and governance. Web archiving for these domains requires a nuanced approach that balances data preservation with the need for up-to-date information.

Academic Websites

- Academic websites, including those of universities and research institutions, contain a wealth of information on scientific discoveries, academic debates, and cultural achievements.

- The arXiv platform is a preprint repository rather than a web archive, but it serves a similar preservation role for research papers in fields such as physics, mathematics, and computer science.

- The JSTOR database is another example of a platform that preserves and provides access to academic journals, books, and primary sources.

Academic websites are essential in preserving the historical record of scientific knowledge and intellectual discourse. Web archiving for these domains requires careful consideration of data preservation, metadata collection, and interoperability with existing academic networks.

Social Media Platforms

- Social media platforms, including Twitter, Facebook, and Instagram, have become essential channels for global communication and information dissemination.

- The Perma.cc service provides a way to preserve and cite social media content, helping to ensure its availability for future research and reference.

- The archive.today service is another example of web archiving for social media platforms, with features like page scraping and content preservation.

Social media platforms are critical in preserving the historical record of global communication and cultural expression. Web archiving for these domains requires a careful approach that balances data preservation with the need for access and reuse.

Challenges and Future Directions

Web archiving faces numerous challenges, hindering its widespread adoption and effectiveness. Despite the efforts of various organizations and initiatives, there is still a need for improvement in multiple areas. One of the primary obstacles is the sheer scale and complexity of the web, which makes it difficult to capture and preserve its content.

Crawling Challenges

Crawling is a fundamental aspect of web archiving, as it enables the collection of web pages from various sites. However, crawling poses several challenges, including:

- Scalability: As the web continues to grow, crawling becomes increasingly resource-intensive. This has led to the development of more efficient crawling algorithms and tools, but the problem remains a significant challenge.

- Content volatility: Web pages are constantly changing, making it difficult for crawlers to keep up with updates, deletions, and additions. This has led to the development of more sophisticated crawling strategies, such as incremental crawling and continuous monitoring.

- Website restrictions: Some websites restrict crawling because of spam, denial-of-service (DoS) attacks, or other security concerns. Crawlers need to adapt to these restrictions, for example by honoring robots.txt rules and rate limits or by requesting permission from the site owner.
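One common way a crawler adapts to site restrictions is to consult the site's robots.txt before fetching. The sketch below uses Python's standard `urllib.robotparser` on an inline example file; the user agent name and the rules are illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content (made up for illustration).
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

USER_AGENT = "example-archiver"  # hypothetical crawler name


def make_parser(robots_text):
    """Parse robots.txt text without making a network request."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = make_parser(ROBOTS_TXT)
can_fetch_public = parser.can_fetch(USER_AGENT, "https://example.org/index.html")
can_fetch_private = parser.can_fetch(USER_AGENT, "https://example.org/private/page.html")
delay = parser.crawl_delay(USER_AGENT)  # seconds to wait between requests

print(can_fetch_public, can_fetch_private, delay)
```

A crawler would skip any URL for which `can_fetch` is false and sleep for at least `delay` seconds between requests to the same host.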

Data Processing Challenges

Once web pages are crawled, they need to be processed and stored for future reference. This involves data compression, deduplication, and indexing, among other tasks. However, data processing poses its own set of challenges:

- Large dataset management: The sheer volume of crawled data requires efficient storage solutions and retrieval systems.

- Data quality issues: Web pages can contain errors, inconsistencies, and biases, which must be addressed through data cleansing and normalization techniques.

- Metadata management: Effective metadata capture, storage, and retrieval are crucial for search and analysis purposes.
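Deduplication is often done by hashing each payload and storing identical content only once. The following sketch shows that idea with SHA-256; the function name and the in-memory structures are hypothetical, and real archives may deduplicate at other levels (for example per URL or per record).

```python
import hashlib


def deduplicate(captures):
    """Store each unique payload once and index every capture by its digest.

    captures: iterable of (url, body_bytes) pairs.
    Returns (store, index), where store maps digest -> body
    and index lists (url, digest) for every capture.
    """
    store = {}
    index = []
    for url, body in captures:
        digest = hashlib.sha256(body).hexdigest()
        store.setdefault(digest, body)  # keep only the first copy
        index.append((url, digest))
    return store, index


captures = [
    ("https://example.org/a", b"<html>same</html>"),
    ("https://example.org/b", b"<html>different</html>"),
    ("https://example.org/a?ref=1", b"<html>same</html>"),
]
store, index = deduplicate(captures)
print(len(index), "captures,", len(store), "unique payloads")
```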

Emerging Trends and Future Directions

Despite the challenges, web archiving continues to evolve with emerging trends and technologies. Some of the exciting developments include:

- Artificial intelligence (AI) and machine learning (ML): AI and ML can help improve crawling efficiency, data processing accuracy, and search relevance.

- Blockchain-based archiving: Blockchain technology has the potential to ensure data integrity, verifiability, and immutability, making it an attractive solution for web archiving.

- Decentralized web archiving: Decentralized architectures can enable peer-to-peer data sharing, reducing dependence on centralized servers and improving data availability.
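The integrity property attributed to blockchain-based archiving comes largely from hash chaining: each entry commits to the one before it, so altering an old record invalidates everything after it. The sketch below shows only that chaining idea. It is not a blockchain (there is no consensus or distribution), and all names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash, url, content_sha256, captured_at):
    """Hash an entry's fields together with the previous entry's hash."""
    payload = json.dumps(
        {"prev": prev_hash, "url": url, "sha256": content_sha256,
         "captured_at": captured_at},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(chain, url, body, captured_at):
    """Append a capture to the log, linking it to the previous entry."""
    prev_hash = chain[-1]["entry_hash"] if chain else GENESIS
    content_sha256 = hashlib.sha256(body).hexdigest()
    chain.append({
        "prev": prev_hash,
        "url": url,
        "sha256": content_sha256,
        "captured_at": captured_at,
        "entry_hash": entry_hash(prev_hash, url, content_sha256, captured_at),
    })


def verify_chain(chain):
    """Recompute every link; any edited entry breaks verification."""
    prev_hash = GENESIS
    for entry in chain:
        expected = entry_hash(
            prev_hash, entry["url"], entry["sha256"], entry["captured_at"]
        )
        if entry["prev"] != prev_hash or entry["entry_hash"] != expected:
            return False
        prev_hash = entry["entry_hash"]
    return True


log = []
append_entry(log, "https://example.org/", b"<html>v1</html>", "2024-01-01T00:00:00Z")
append_entry(log, "https://example.org/", b"<html>v2</html>", "2024-02-01T00:00:00Z")
print(verify_chain(log))
```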

Web Archiving for Specialized Domains

Web archiving is not limited to general web data. Specialized domains, such as scientific research, social media, or news archives, require tailored archiving solutions. These domains often demand customized crawling strategies, data processing workflows, and search interfaces to meet their unique needs.

Web Archiving Tools Comparison

![Wayback Machine alternatives](https://analternativeto.com/wp-content/uploads/alternative-to-wayback-machine-1.jpg)

When it comes to web archiving, selecting the right tool is crucial for effective preservation and retrieval of web content. With numerous options available, it can be overwhelming to choose the best tool for your needs. In this section, we’ll compare and contrast the features and functionalities of different web archiving tools to help you make an informed decision.

Crawling Capabilities

Crawling is the process of discovering and retrieving web pages for archiving. Let’s take a look at the crawling capabilities of different web archiving tools.

| Tool | Crawling | Data Processing | Storage |
|---|---|---|---|
| Internet Archive | Yes | Yes | Yes |
| Google Cache | Yes | No | No |
| Scrapy | Yes | Yes | Yes |
| P-archive | Yes | Yes | Yes |
| Heritrix | Yes | Yes | Yes |

As the Crawling column shows, all five tools rely on crawling. Google Cache differs from the others in that it does not process data or offer long-term storage.

Data Processing

Once web pages are crawled, they need to be processed and transformed into a format suitable for archiving. Let’s examine the data processing capabilities of different web archiving tools.

As the Data Processing column of the comparison table above shows, Internet Archive, Scrapy, P-archive, and Heritrix support data processing, while Google Cache does not.

Storage Capabilities

The storage capabilities of web archiving tools determine how much data they can store and how long they can retain it. Let’s examine the storage capabilities of different web archiving tools.

As the Storage column of the comparison table above shows, Internet Archive, Scrapy, P-archive, and Heritrix support storage, while Google Cache holds only temporary snapshots and does not offer long-term storage.

Best Practices for Web Archiving

Web archiving is a complex process that requires careful consideration of various factors to ensure the long-term preservation of web content. Effective web archiving depends on several best practices that must be followed to guarantee the accuracy, completeness, and accessibility of archived data.

Crawling Frequency and Scheduling

Proper crawling frequency and scheduling are crucial for web archiving. It is essential to strike a balance between data freshness and website load.

* The frequency of crawling depends on the content’s volatility and the website’s capacity to handle requests.

To achieve this balance, you should consider the following factors:

* The website’s content update frequency

* The number of visitors and page views per month

* The server’s processing power and bandwidth

By adjusting the crawling frequency and scheduling, you can prevent overloading the website and ensure that the archived content remains up-to-date and accurate.
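One simple way to balance freshness against load is to adapt the revisit interval to how often a page actually changes. The sketch below halves the interval when a change is seen and doubles it otherwise, within fixed bounds. The halving and doubling rule and the bounds are illustrative assumptions, not a standard.

```python
from datetime import timedelta

MIN_INTERVAL = timedelta(hours=1)   # never crawl more often than this
MAX_INTERVAL = timedelta(days=30)   # never wait longer than this


def next_interval(current, changed):
    """Shorten the interval after a change, lengthen it after no change."""
    proposed = current / 2 if changed else current * 2
    return max(MIN_INTERVAL, min(MAX_INTERVAL, proposed))


interval = timedelta(days=1)
for changed in [True, True, False]:
    interval = next_interval(interval, changed)
    print(changed, interval)
```

The lower bound protects the target server from excessive requests, and the upper bound ensures that even rarely changing pages are revisited eventually.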

Data Retention Policies

Data retention policies are critical for web archiving, as they determine how long archived content will be preserved. A well-defined retention policy helps ensure that archived data is not deleted prematurely or kept unnecessarily.

* Data retention policies should be based on the website’s content life cycle and the preservation goals.

To establish a data retention policy, consider the following factors:

* The website’s content life cycle (e.g., temporary, permanent, or archival)

* The preservation goals (e.g., research, education, or historical significance)

* The storage capacity and costs associated with data archiving

By establishing a clear data retention policy, you can ensure that archived content is preserved for an adequate period and remains accessible for future use.
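A retention policy can be expressed as a small lookup from content category to retention period. The categories below follow the life cycle examples above; the specific periods are hypothetical values chosen only for illustration.

```python
from datetime import date, timedelta

# Hypothetical policy: days to keep each category; None means keep indefinitely.
RETENTION_DAYS = {
    "temporary": 365,
    "permanent": None,
    "archival": None,
}


def is_expired(category, captured_on, today):
    """Return True if a capture has outlived its retention period."""
    days = RETENTION_DAYS[category]
    if days is None:
        return False
    return today > captured_on + timedelta(days=days)


today = date(2024, 6, 1)
print(is_expired("temporary", date(2023, 1, 1), today))
print(is_expired("temporary", date(2024, 1, 1), today))
print(is_expired("archival", date(2000, 1, 1), today))
```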

Metadata Collection and Standards

Metadata is essential for web archiving, as it provides context and structure to archived content. Ensuring metadata collection and adherence to standards is vital for long-term preservation and accessibility.

* Metadata collection should follow established standards, such as Dublin Core or MODS.

To collect and manage metadata effectively, consider the following best practices:

* Use standardized metadata formats and vocabularies

* Document metadata collection and processing procedures

* Ensure metadata is properly linked to archived content

By following established metadata collection and standards, you can provide a solid foundation for web archiving and ensure the long-term accessibility of archived content.
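To make the idea of standardized metadata concrete, the sketch below serializes a few Dublin Core elements for one archived page as XML. The element names (`title`, `identifier`, `date`, `format`) and the namespace come from the Dublin Core element set; the wrapper element `metadata`, the helper name, and the field values are assumptions for illustration.

```python
import xml.etree.ElementTree as ET

DC_NS = "http://purl.org/dc/elements/1.1/"
ET.register_namespace("dc", DC_NS)  # serialize elements with the dc: prefix


def dublin_core_record(fields):
    """Serialize a dict of Dublin Core element names to an XML string."""
    root = ET.Element("metadata")
    for name, value in fields.items():
        element = ET.SubElement(root, f"{{{DC_NS}}}{name}")
        element.text = value
    return ET.tostring(root, encoding="unicode")


record_xml = dublin_core_record({
    "title": "Example Home Page",
    "identifier": "https://example.org/",
    "date": "2024-01-01",
    "format": "text/html",
})
print(record_xml)
```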

Backup and Replication

Backup and replication are crucial for web archiving, as they provide a safeguard against data loss and ensure data availability in case of system failures or disasters.

* Backup and replication strategies should be regularly tested and updated to ensure data integrity.

To maintain data integrity and ensure backup and replication, consider the following best practices:

* Regularly test backup and replication processes

* Store backup data in separate locations

* Ensure data is properly duplicated and verified

By implementing effective backup and replication strategies, you can reduce the risk of data loss and ensure the availability of archived content even in the event of a disaster or system failure.
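Verifying that replicas are true copies usually comes down to comparing checksums. The following sketch hashes a primary file and its replicas and reports which ones match. The file names are made up, and temporary files stand in for real storage locations.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large archives need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def replicas_match(primary, replicas):
    """Map each replica path to True if its checksum equals the primary's."""
    expected = sha256_of(primary)
    return {str(replica): sha256_of(replica) == expected for replica in replicas}


with tempfile.TemporaryDirectory() as tmp:
    primary = Path(tmp) / "capture.warc"
    good_copy = Path(tmp) / "replica-a.warc"
    bad_copy = Path(tmp) / "replica-b.warc"
    primary.write_bytes(b"archived bytes")
    good_copy.write_bytes(b"archived bytes")
    bad_copy.write_bytes(b"archived bytez")  # simulated corruption
    results = replicas_match(primary, [good_copy, bad_copy])
    match_flags = list(results.values())

print(match_flags)
```

Running such a check on a schedule is one way to test backups regularly rather than discovering a corrupted copy only when it is needed.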

Collaboration and Communication

Effective web archiving requires collaboration and communication among stakeholders, including archivists, librarians, researchers, and website owners.

* Clear communication is essential for ensuring the accuracy and completeness of archived content.

To foster collaboration and communication, consider the following best practices:

* Establish a clear and open communication channel

* Define roles and responsibilities among stakeholders

* Schedule regular meetings and updates

By promoting collaboration and communication among stakeholders, you can ensure that web archiving efforts are effective, efficient, and beneficial for all parties involved.

Monitoring and Evaluation

Monitoring and evaluation are crucial for web archiving, as they help ensure the quality and accuracy of archived content.

* Regular monitoring and evaluation help identify issues and areas for improvement.

To monitor and evaluate web archiving efforts, consider the following best practices:

* Regularly review and update the web archiving process

* Track performance metrics and carry out quality control

* Use user feedback to improve web archiving services

By monitoring and evaluating web archiving efforts, you can identify areas for improvement and ensure that archived content remains accurate, complete, and accessible over time.

Final Summary

In conclusion, finding alternatives to the Wayback Machine is an important part of digital preservation. By exploring different web archiving tools and techniques, we can ensure that our online content remains accessible for future generations. As the internet continues to evolve, it is essential that we develop effective strategies for preserving our digital heritage.

Top FAQs

What happens to web pages when they are archived?

When web pages are archived, they are saved in a state that represents the snapshot of the page at a particular point in time. This allows future generations to view how the page looked and functioned on that specific date.

How do web archiving tools handle dynamic content?

Web archiving services such as the Internet Archive use crawling and scraping to capture dynamic content. Some tools also use headless browsers to render JavaScript-heavy pages before saving them, which gives a more accurate snapshot than fetching the raw HTML alone.

Can anyone use web archiving tools without technical expertise?

Many web archiving tools have user-friendly interfaces that make them accessible to users without extensive technical knowledge. However, some tools may require more advanced technical skills, especially when it comes to customizing and configuring the tools for specific needs.