This discussion covers the machine learning models most widely used for object detection, focusing on the architectures and techniques that have proven effective in real-world applications. From YOLO to Faster R-CNN, we’ll explore the strengths and weaknesses of each model.

The increasing demand for accurate and efficient object detection has driven innovation in this field, with various machine learning models being developed and adapted for different use cases. In this overview, we’ll compare and contrast popular machine learning models such as YOLO, SSD, and Faster R-CNN, discussing their strengths and weaknesses to help readers choose the best approach for their specific needs.

Overview of Best Machine Learning Models for Object Detection

YOLO (You Only Look Once), SSD (Single Shot Detector), and Faster R-CNN are the top doggos in the world of object detection. Each one has its own strengths and weaknesses, making them suitable for different tasks.

YOLO (You Only Look Once)

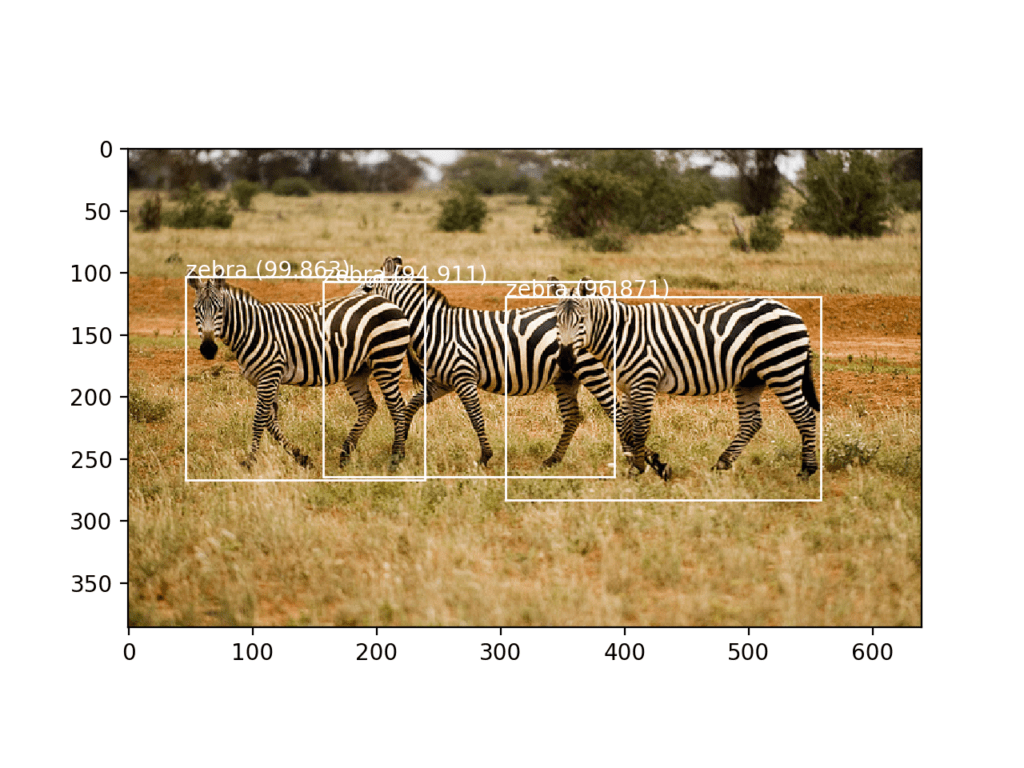

YOLO is a real-time object detection system that operates by predicting bounding boxes and class probabilities directly from full images in one pass. This approach allows for extremely fast detection. Here are some key points about YOLO:

* YOLO divides the image into a grid of cells. Each cell predicts bounding boxes and class probabilities for objects whose centers fall inside it, and the per-cell predictions are then combined into the final output.

* It uses a convolutional neural network (CNN) to process the image and identify objects.

* YOLO is well-suited for applications that require real-time object detection, such as self-driving cars and security systems.
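
The grid idea in the first bullet can be sketched in a few lines. This is a minimal illustration, not YOLO's actual code: the function name `responsible_cell` is hypothetical, and the 7x7 grid and 448x448 image size are assumed defaults chosen to mirror the original YOLO setup.

```python
def responsible_cell(box, image_w, image_h, grid_size=7):
    """Return (row, col) of the grid cell that contains the box center.

    box is (x_min, y_min, x_max, y_max) in pixels.
    grid_size=7 is an assumed default for this sketch.
    """
    cx = (box[0] + box[2]) / 2.0
    cy = (box[1] + box[3]) / 2.0
    # Scale the center into grid units and clamp to the last cell
    # so a center on the right or bottom edge stays inside the grid.
    col = min(int(cx / image_w * grid_size), grid_size - 1)
    row = min(int(cy / image_h * grid_size), grid_size - 1)
    return row, col


# A box centered at (200, 300) in a 448x448 image.
print(responsible_cell((100, 200, 300, 400), image_w=448, image_h=448))  # (4, 3)
```

During training, only the cell returned here would be asked to predict this object, which is what lets the network produce all detections in one pass.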

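YOLO is fast, but not always the most accurate, particularly on small or tightly packed objects. Later versions added anchor boxes to improve localization, and non-maximum suppression (NMS) is applied as a post-processing step to remove duplicate detections of the same object.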
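
Non-maximum suppression is easy to show in isolation. The following is a minimal greedy sketch in plain Python, assuming boxes are `(x_min, y_min, x_max, y_max)` tuples and an IoU threshold of 0.5; the function names `iou` and `nms` are illustrative, and production code would normally use a vectorized library routine instead.

```python
def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: keep the highest-scoring box, drop boxes that overlap it."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep box i only if it does not overlap any already-kept box too much.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(10, 10, 110, 110), (20, 20, 120, 120), (200, 200, 300, 300)]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))  # [0, 2]
```

The second box overlaps the first with an IoU of about 0.68, so it is suppressed; the third box does not overlap anything and is kept.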

SSD (Single Shot Detector)

SSD is another well-known object detection algorithm. Like YOLO, it predicts bounding boxes and class scores in a single forward pass; the main difference is that SSD makes predictions from several feature maps of different resolutions, which helps it handle objects of different sizes. Here are some key points about SSD:

* SSD predicts from feature maps at multiple scales, placing a fixed set of default boxes with different aspect ratios at every feature-map location instead of sliding a window over the image.

* It uses a CNN to process the image and identify objects.

* SSD is well-suited for applications that need a balance of speed and accuracy, such as face detection and object tracking.

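How SSD compares with YOLO depends heavily on the versions, backbones, and input resolutions being compared, so any single "faster" or "more accurate" ranking should be read with caution.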
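
To make the multi-scale idea concrete, here is a rough sketch of how default boxes could be laid out over two feature maps. The function name `default_boxes`, the feature-map sizes (8 and 4), the scales, and the aspect ratios are all assumptions chosen for illustration; they are not SSD's published configuration.

```python
import math


def default_boxes(feature_map_size, scale, aspect_ratios):
    """Return default boxes as (cx, cy, w, h), normalized to [0, 1].

    One box per aspect ratio is centered on every feature-map cell.
    """
    boxes = []
    for row in range(feature_map_size):
        for col in range(feature_map_size):
            cx = (col + 0.5) / feature_map_size
            cy = (row + 0.5) / feature_map_size
            for ratio in aspect_ratios:
                # ratio is width / height; the box area stays scale**2.
                w = scale * math.sqrt(ratio)
                h = scale / math.sqrt(ratio)
                boxes.append((cx, cy, w, h))
    return boxes


# Hypothetical two-level setup: a fine map for small objects,
# a coarse map for large ones.
fine = default_boxes(8, scale=0.2, aspect_ratios=(1.0, 2.0, 0.5))
coarse = default_boxes(4, scale=0.5, aspect_ratios=(1.0, 2.0, 0.5))
print(len(fine), len(coarse))  # 192 48
```

The fine map contributes many small boxes and the coarse map a few large ones, and the network scores all of them in the same forward pass.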

Faster R-CNN (Faster Region-based Convolutional Neural Networks)

Faster R-CNN is a popular object detection algorithm that is generally more accurate than the original YOLO and SSD but slower. It uses a two-stage approach: first it generates region proposals, then it classifies each proposal and refines its bounding box using a CNN. Here are some key points about Faster R-CNN:

* Faster R-CNN uses a Region Proposal Network (RPN) to generate proposals from the image.

* It then uses a Fast R-CNN network to classify the proposals and predict bounding boxes.

* Faster R-CNN is well-suited for applications that require high-accuracy object detection, such as autonomous vehicles and robotics.

In their original forms, Faster R-CNN is usually the most accurate of the three algorithms but also the slowest, because every proposal goes through the second stage.
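
The Region Proposal Network scores a fixed set of anchor boxes at every feature-map position. The sketch below generates the anchors for one position; the function name `anchors_at` is hypothetical, and the default scales and ratios are assumptions that mirror the commonly cited three-scales-by-three-ratios setup.

```python
import math


def anchors_at(cx, cy, scales=(128, 256, 512), ratios=(0.5, 1.0, 2.0)):
    """Return anchors (x_min, y_min, x_max, y_max) centered at (cx, cy).

    Each anchor has area scale**2 and width / height equal to ratio.
    """
    anchors = []
    for scale in scales:
        for ratio in ratios:
            w = scale * math.sqrt(ratio)
            h = scale / math.sqrt(ratio)
            anchors.append((cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2))
    return anchors


anchors = anchors_at(100, 100)
print(len(anchors))  # 9
print(anchors[1])    # (36.0, 36.0, 164.0, 164.0)
```

In the first stage the RPN would assign each of these anchors an objectness score and a box adjustment; only the best-scoring proposals are passed on to the second stage for classification.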

YOLO, SSD, and Faster R-CNN are all popular object detection models, but they differ in terms of their architecture, speed, and accuracy.

Recent Advances in Object Detection

Recent advances in object detection have significantly improved the accuracy and efficiency of computer vision tasks. One of the key factors contributing to this improvement is the widespread adoption of pre-trained models and transfer learning techniques. Let’s delve into the impact of transfer learning and attention mechanisms on object detection.

Transfer Learning in Object Detection

Transfer learning is a technique where a pre-trained model is fine-tuned for a new task. In the context of object detection, transfer learning has been shown to greatly improve performance by leveraging the knowledge gained from pre-trained models on large datasets such as ImageNet. This is because the pre-trained models have already learned to recognize general features such as edges, textures, and patterns that are common across a wide range of images.

According to a study presented at CVPR 2016, fine-tuning a pre-trained model achieved state-of-the-art results on the COCO dataset at the time, outperforming traditional object detection methods.

Transfer learning is achieved by adding a new head or layer on top of the pre-trained model and training it on the target dataset. This approach allows the model to focus on the specific task at hand while leveraging the general knowledge gained from the pre-trained model.

- Feature Extraction: The pre-trained model extracts features from the input image, which are then passed through the new head to make predictions.

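- Fine-tuning: The new head is trained on the target dataset to adapt to the specific task. Optionally, some or all of the pre-trained layers are unfrozen and trained as well, usually with a small learning rate.

- Prediction: At inference time, the image passes through the pre-trained feature extractor and then through the trained head, which outputs the final boxes and class labels.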

For example, consider a model pre-trained on ImageNet, which is then fine-tuned on a dataset of cars. The pre-trained model has already learned to recognize general features such as wheels, windows, and body shape. The new head is trained to adapt to the specific task of detecting cars, learning to recognize subtle differences such as car models, colors, and angles.
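
The freeze-the-backbone, train-the-head pattern can be shown with a deliberately tiny toy. Everything here is an assumption made for illustration: `BACKBONE_W` is a fixed matrix standing in for a pre-trained CNN, the head is a single logistic unit, and the four data points are made up. A real detector would use a pre-trained network from a deep learning framework.

```python
import math

# Frozen "backbone": fixed weights standing in for a pre-trained feature
# extractor. Nothing in training below ever modifies them.
BACKBONE_W = [[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]]


def extract_features(x):
    """Feature extraction step: ReLU(W x) with frozen weights."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in BACKBONE_W]


def predict(head, feats):
    """New head: a logistic unit on top of the frozen features."""
    z = sum(w * f for w, f in zip(head["w"], feats)) + head["b"]
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data, epochs=200, lr=0.5):
    """Fine-tuning step: gradient descent on the head parameters only."""
    head = {"w": [0.0, 0.0, 0.0], "b": 0.0}
    for _ in range(epochs):
        for x, y in data:
            feats = extract_features(x)
            err = predict(head, feats) - y  # gradient of log loss w.r.t. z
            head["w"] = [w - lr * err * f for w, f in zip(head["w"], feats)]
            head["b"] -= lr * err
    return head


# Made-up target dataset: label 1 when the first input dominates.
data = [([1.0, 0.0], 1), ([0.9, 0.1], 1), ([0.0, 1.0], 0), ([0.1, 0.9], 0)]
head = train_head(data)
for x, y in data:
    print(y, round(predict(head, extract_features(x)), 3))
```

Only `head` changes during training. Unfreezing the backbone would mean also updating `BACKBONE_W`, typically with a much smaller learning rate.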

Attention Mechanisms in Object Detection

Attention mechanisms are a type of neural network component that allows the model to focus on specific parts of the input data. In object detection, attention mechanisms can be used to selectively focus on the regions of interest in the image, such as the faces, cars, or buildings.

Attention mechanisms work by computing a weighted sum of the input features, where each weight reflects how relevant that feature is to the task at hand. The result is a re-weighted representation with the same dimensionality as a single feature vector, in which the most informative regions contribute the most.
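
A weighted sum of this kind takes only a few lines. In this sketch the relevance scores are given by hand; in a real model they would be produced by learned layers. The function names `softmax` and `attend` and the toy numbers are illustrative assumptions.

```python
import math


def softmax(scores):
    """Turn arbitrary relevance scores into weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(features, relevance):
    """Return (weights, weighted sum of the feature vectors)."""
    weights = softmax(relevance)
    dim = len(features[0])
    pooled = [sum(w * f[d] for w, f in zip(weights, features)) for d in range(dim)]
    return weights, pooled


# Three image regions with 2-dimensional features; the third region
# is scored as the most relevant.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
relevance = [0.0, 0.0, 2.0]
weights, pooled = attend(features, relevance)
print([round(w, 3) for w in weights])
print([round(p, 3) for p in pooled])
```

The output vector has the same length as each input feature vector, and it sits closest to the features of the region with the highest relevance score.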

Attention mechanisms have been shown to improve the performance of object detection models by focusing on the most relevant regions of the image.

Some common types of attention mechanisms used in object detection include:

- Spatial Attention: This type of attention focuses on specific regions of the image based on their spatial location.

- Channel Attention: This type of attention focuses on specific channels of the feature map based on their relevance to the task.

- Recurrent Attention: This type of attention uses recurrent neural networks to selectively focus on the most informative regions of the image.

For example, consider a model using spatial attention to detect faces in an image. The model computes a spatial attention map that highlights the regions of the image where the faces are likely to be present. This attention map is then combined with the feature extraction step to produce the final predictions.

Evaluating Object Detection Models

In the world of machine learning, evaluating object detection models is like giving grades to your pesky little brother, Tukang (short for the Betawi phrase tukang ojek, which means delivery boy): you gotta know how well they’re doing, and what they need to improve on! Object detection models are judged on their ability to accurately identify and locate objects within images or videos.

The metrics used to evaluate object detection models are quite varied, and it’s essential to understand the strengths and weaknesses of each one. In this section, we’ll delve into the world of object detection evaluation, discussing the most popular metrics, their benefits, and limitations.

Popular Evaluation Metrics

Evaluating object detection models with several metrics helps us better understand their strengths and weaknesses. The general-purpose classification metrics covered first are accuracy, precision, recall, and F1-score; detection-specific metrics such as IoU and mAP follow.

Accuracy

Accuracy is a metric that measures the proportion of correctly classified instances out of the total number of instances. It’s like asking your little brother Tukang how many deliveries he successfully made out of all the ones he attempted. If he says, “All of them, abang!” (abang means big brother), he’s probably a pretty good delivery boy!

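However, accuracy isn’t always the best metric for object detection, because a single number hides how many false positives and false negatives the model produces. For example, if only 10 of 1,000 candidate regions contain an object and a model labels every region as background, its accuracy is 99% even though it detects nothing. Conversely, a model with 1 true positive and 10 false positives (and no other predictions) has an accuracy of only about 9%.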

- The problem with accuracy is that it doesn’t account for class imbalance. In object detection, classes like “car” and “person” are relatively common, while classes like “bicycle” or “tree” might be less common.

- Additionally, accuracy can be misleading when dealing with overlapping classes, where multiple classes have similar features.
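
The effect of class imbalance on accuracy is easy to check numerically. The counts below are hypothetical, and `accuracy` is just the textbook formula written as a helper.

```python
def accuracy(tp, tn, fp, fn):
    """Fraction of all decisions that were correct."""
    return (tp + tn) / (tp + tn + fp + fn)


# Hypothetical case: 1,000 candidate regions, 10 contain an object,
# and the model labels every region as background.
print(accuracy(tp=0, tn=990, fp=0, fn=10))  # 0.99

# Hypothetical case: 1 correct detection and 10 false alarms.
print(round(accuracy(tp=1, tn=0, fp=10, fn=0), 3))  # 0.091
```

The first model never finds an object yet scores 99%, which is why detection work rarely reports accuracy on its own.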

Precision

Precision measures how many of the model’s positive predictions are actually correct: true positives divided by the sum of true positives and false positives. It’s like asking Tukang how many of the deliveries he says he made actually reached the right customer. If he says, “Every single one, abang!”, his precision is perfect, even if he skipped some orders entirely!

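Precision is a useful metric when false positives are costly, and it is more informative than accuracy under class imbalance. However, it ignores false negatives, so it can be misleading for classes that are difficult to detect: a model that makes only a few very confident detections can score high precision while missing most objects.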

Recall

Recall measures how many of the objects that are actually present the model manages to find: true positives divided by the sum of true positives and false negatives. It’s like asking Tukang how many of the orders he was given he actually delivered. If he says, “I missed quite a few, abang!”, his recall is low, no matter how accurate the deliveries he did make were!

Recall is an essential metric when dealing with medical applications or applications where missing an instance is more critical than incorrectly detecting one.

F1-score

The F1-score is the harmonic mean of precision and recall, so it is high only when both are high. It’s like asking Tukang how well he balanced his delivery business, making sure he delivers accurately and meets his targets. If he scores high on both, he’s probably a great delivery boy!

The F1-score is a useful metric when you need a single number that balances precision and recall at a fixed confidence threshold. In object detection benchmarks, though, mean average precision (mAP) is more commonly the headline metric.
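
Precision, recall, and F1 all follow from three counts. This small helper is a sketch with made-up numbers; the name `precision_recall_f1` is illustrative, and returning 0.0 when a denominator is zero is a convention chosen here, not a universal rule.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from detection counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Hypothetical detector: 8 correct detections, 2 false alarms, 8 missed objects.
p, r, f1 = precision_recall_f1(tp=8, fp=2, fn=8)
print(round(p, 3), round(r, 3), round(f1, 3))  # 0.8 0.5 0.615
```

The F1 value lands closer to the weaker of the two numbers, which is the point of using a harmonic mean.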

Other Evaluation Metrics

In addition to the metrics mentioned above, other popular evaluation metrics for object detection include mean average precision (mAP), IoU (intersection over union), and average precision.

- Mean average precision (mAP) is the mean of the per-class average precision values, so it summarizes performance across all classes. Some benchmarks also average it over several IoU thresholds.

- IoU (intersection over union) measures how well a predicted box matches a ground-truth box: the area of their overlap divided by the area of their union. It ranges from 0 (no overlap) to 1 (perfect match), and a detection usually counts as a true positive only if its IoU exceeds a threshold such as 0.5.

- Average precision (AP) summarizes the precision-recall curve for a single class as the area under it, obtained by sweeping the confidence threshold from high to low.
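
Average precision can be computed from a ranked list of detections. The sketch below assumes each detection has already been matched against the ground truth at some IoU threshold (0.5 is a common choice) and marked as a true or false positive; it uses all-point interpolation, which is one of several conventions. The function names and the sample data are illustrative.

```python
def average_precision(detections, num_ground_truth):
    """AP for one class.

    detections: list of (confidence, is_true_positive) pairs.
    num_ground_truth: number of real objects of this class.
    """
    if num_ground_truth == 0:
        return 0.0
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    precisions, recalls = [], []
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_ground_truth)
    # Precision envelope: make precision non-increasing as recall grows.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Area under the precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(ap_per_class):
    """mAP: the mean of the per-class AP values."""
    return sum(ap_per_class) / len(ap_per_class)


# Hypothetical class with 2 real objects and 3 detections.
ap = average_precision([(0.9, True), (0.8, False), (0.7, True)], num_ground_truth=2)
print(round(ap, 4))  # 0.8333
```

With one AP value per class, `mean_average_precision` gives the single mAP number that benchmarks typically report.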

Applications of Object Detection

Object detection has revolutionized various industries by enabling machines to identify and classify objects within images and videos. From enhancing user experience to ensuring public safety, the applications of object detection are vast and diverse. In this section, we will explore the use of object detection in autonomous vehicles, surveillance systems, image search, and recommendation systems.

Autonomous Vehicles

Autonomous vehicles rely heavily on object detection to navigate through complex environments. By detecting and classifying objects such as pedestrians, vehicles, and road signs, autonomous vehicles can make informed decisions to avoid accidents and ensure safe travel. For instance, a self-driving car may detect a pedestrian crossing the street and slow down to avoid a collision. Single-pass detectors such as YOLO and SSD are commonly used in autonomous vehicle applications because they are fast enough for real-time use while remaining reasonably accurate.

Surveillance Systems

Surveillance systems utilize object detection to monitor and track individuals and objects within a designated area. By detecting and classifying objects such as faces, vehicles, and luggage, surveillance systems can alert authorities to potential security threats. Object detection algorithms such as Haar cascades and convolutional neural networks (CNNs) are commonly used in surveillance systems to detect and classify objects in real-time.

Image Search and Recommendation Systems

Image search and recommendation systems leverage object detection to improve user experience. By detecting and classifying objects within images, these systems can provide users with relevant search results and personalized recommendations. For instance, an image search engine may detect objects such as dogs, cats, and flowers within an image and provide relevant search results. CNN backbones such as ResNet and Inception, which are image classification architectures rather than detectors in themselves, are commonly paired with a detection head in these systems because of their strong feature extraction.

Other Applications

Object detection has numerous other applications beyond autonomous vehicles, surveillance systems, and image search. Some examples include:

- Healthcare: Object detection can be used to detect medical conditions such as tumors and diseases within medical images.

- Retail: Object detection can be used to detect and track inventory levels within retail stores.

- Security: Object detection can be used to detect and track individuals and objects within secured areas such as prisons and airports.

In conclusion, object detection has a wide range of applications across many industries. Its ability to detect and classify objects has changed how those industries operate and continues to shape the way we live and interact with technology.

Final Review

In this discussion, we’ve explored the best machine learning models for object detection, including YOLO, SSD, and Faster R-CNN. By understanding the strengths and weaknesses of each model, readers can make informed decisions about which approach to use in their real-world applications. Whether you’re working on autonomous vehicles, surveillance systems, or image search and recommendation systems, the insights gained from this discussion will help you develop more accurate and efficient object detection models.

Common Queries: Best Machine Learning Models For Object Detection

Q: What is object detection, and why is it important in real-world applications?

A: Object detection is a technique used in computer vision to identify and localize objects within an image or video. It plays a crucial role in many applications, including autonomous vehicles, surveillance systems, and image search and recommendation systems.

Q: What are the key differences between YOLO, SSD, and Faster R-CNN?

A: YOLO (You Only Look Once) is a real-time object detection algorithm that predicts boxes and classes over a grid in a single pass. SSD (Single Shot Detector) is also a single-pass detector, but it predicts from feature maps at several scales using default boxes. Faster R-CNN is a two-stage detector: a region proposal network suggests candidate regions, and a second stage classifies and refines them, which is usually more accurate but slower.

Q: How do convolutional neural networks (CNNs) contribute to object detection?

A: CNNs are essential in object detection as they enable the model to learn spatial hierarchies of features from the input image.