BLEU (Bilingual Evaluation Understudy), introduced in the paper "BLEU: A Method for Automatic Evaluation of Machine Translation", is a significant development in the field of machine translation. It evaluates the quality of machine translations by comparing them to human reference translations.

The BLEU method has become a widely accepted standard for evaluating machine translation systems, and it has been instrumental in advancing the field of natural language processing. Its importance lies in its ability to provide a quantitative measure of translation quality, which is essential for improving machine translation systems.

How BLEU Scores are Calculated

Understanding the mechanics behind BLEU scores is essential for evaluating the quality of machine translation. The BLEU score is a widely used metric for measuring how closely machine-generated text matches human reference text, and its calculation involves several key components.

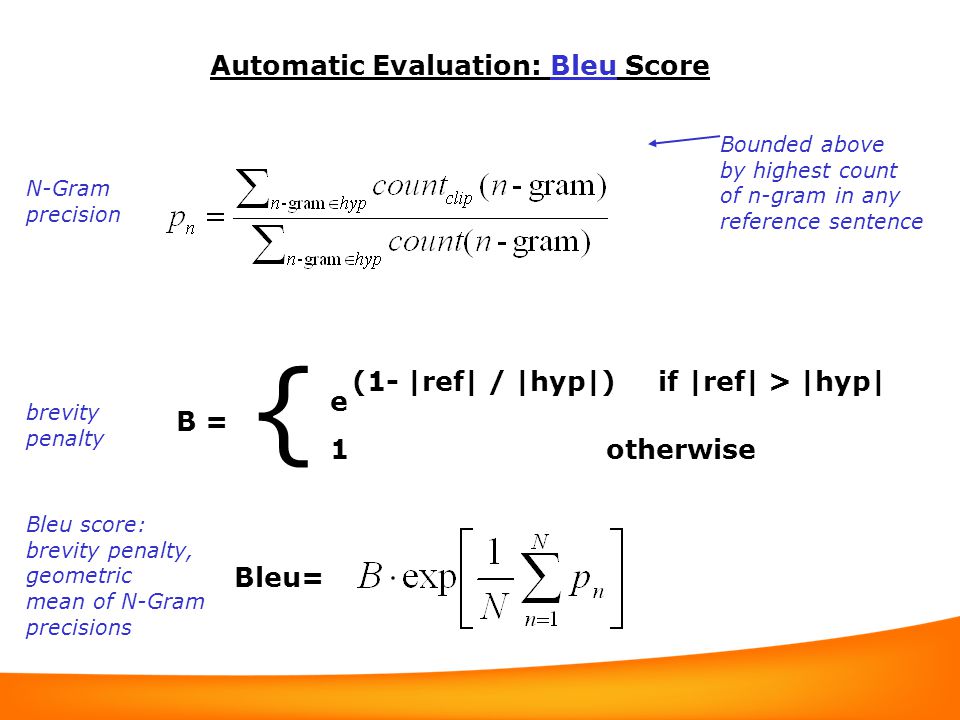

The BLEU score is calculated using the following formula:

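$$\text{BLEU} = \text{BP} \cdot \left(\prod_{n=1}^{N} p_n\right)^{1/N}$$

where BP is the brevity penalty, $p_n$ is the precision for word n-grams of length n, N is the largest n-gram length considered, and the product raised to the power 1/N is the geometric mean of the n-gram precisions.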

Breaking Down the Calculation

To understand the calculation of BLEU scores, it’s essential to grasp the individual components involved.

n-gram Precision

The n-gram precision measures how much of the machine-generated content also appears in the reference translation. It’s calculated by dividing the number of matching n-grams by the total number of n-grams in the machine-generated content, not in the reference.

The precision for n-grams of length n is calculated using the following formula:

$$p_n = \frac{\text{matching n-grams}}{\text{total n-grams in the machine-generated content}}$$

Where matching n-grams are the n-gram occurrences in the machine-generated content that are also present in the reference translation. Each n-gram is counted at most as many times as it occurs in the reference (the counts are "clipped"), so repeating a correct word does not inflate the score.
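
The clipped precision described above can be sketched in a few lines of Python. This is a minimal illustration for a single reference, not a reference implementation; the function names `ngrams` and `modified_precision` and the example sentences are assumptions made for this sketch.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return the contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def modified_precision(candidate, reference, n):
    """Clipped n-gram precision of a candidate against one reference."""
    cand_counts = Counter(ngrams(candidate, n))
    ref_counts = Counter(ngrams(reference, n))
    total = sum(cand_counts.values())
    if total == 0:
        return 0.0  # candidate is too short to contain any n-gram of this length
    # Credit each candidate n-gram at most as often as it occurs in the reference.
    clipped = sum(min(count, ref_counts[gram]) for gram, count in cand_counts.items())
    return clipped / total

# Hypothetical example: a degenerate candidate that repeats one correct word.
candidate = "the the the the the the the".split()
reference = "the cat is on the mat".split()
print(modified_precision(candidate, reference, 1))  # 2 of 7 unigrams are credited
```

Without clipping, every "the" in the candidate would count as a match and the unigram precision would be 7/7, which is why the counts are capped by the reference.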

Brevity Penalty (BP)

The brevity penalty is a factor that’s applied when the machine-generated content is shorter than the reference translation. Because precision alone can be high for a very short output, this penalty ensures that overly short translations aren’t unfairly rewarded.

The brevity penalty is calculated using the following formula:

$$\text{BP} = \begin{cases} 1 & \text{if } t > r \\ e^{1 - r/t} & \text{if } t \le r \end{cases}$$

Where r is the length of the reference translation, and t is the length of the machine-generated translation. For longer translations, the brevity penalty is 1, since there's no need to penalize them further: extra words that don't match the reference already lower the n-gram precision.
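
As a minimal sketch, assuming the standard definition of the penalty (1 when the machine-generated translation is longer than the reference, and exp(1 - r/t) otherwise), the brevity penalty could be computed as follows. The function name `brevity_penalty` and its parameter names are illustrative.

```python
import math

def brevity_penalty(ref_len, cand_len):
    """Brevity penalty for a reference of length r and a candidate of length t."""
    if cand_len == 0:
        return 0.0  # an empty candidate gets no credit
    if cand_len > ref_len:
        return 1.0  # longer candidates are not penalized here
    return math.exp(1.0 - ref_len / cand_len)

print(brevity_penalty(ref_len=10, cand_len=12))  # 1.0
print(brevity_penalty(ref_len=10, cand_len=5))   # exp(-1), roughly 0.37
```

A candidate half as long as the reference keeps only about 37% of its precision-based score, so the penalty grows quickly as the output gets shorter.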

Geometric Mean

The geometric mean is an average computed by multiplying a set of N numbers and taking the N-th root of the product. In the context of BLEU scores, it’s used to combine the individual n-gram precisions into a single score.

The geometric mean is calculated using the following formula:

$$\text{GM} = \left(p_1 \cdot p_2 \cdots p_N\right)^{1/N}$$

Where $p_n$ is the precision for n-grams of length n, and N is the largest n-gram length considered (commonly 4). Because the precisions are multiplied, a single precision of zero makes the whole score zero.
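
The following sketch shows one way to compute the geometric mean of a list of precisions. It works in log space, which is a common way to avoid multiplying many small numbers; the function name `geometric_mean` and the sample precision values are made up for illustration.

```python
import math

def geometric_mean(precisions):
    """Geometric mean of n-gram precisions, computed via logarithms."""
    if not precisions or min(precisions) <= 0.0:
        return 0.0  # a zero precision makes the product, and so the mean, zero
    return math.exp(sum(math.log(p) for p in precisions) / len(precisions))

# Hypothetical precisions for n-gram lengths 1 to 4.
print(geometric_mean([0.8, 0.6, 0.4, 0.2]))  # roughly 0.44
print(geometric_mean([0.8, 0.6, 0.4, 0.0]))  # 0.0
```

The second call illustrates why short sentences with no matching 4-gram often receive a score of zero unless some form of smoothing is applied.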

Impact of Precision, Recall, and F-score on BLEU Scores

While the BLEU score is a widely used metric, it has some limitations. One of the key limitations is its reliance on n-gram precision, which can lead to biases in evaluation.

BLEU is based on precision and has no explicit recall term; the brevity penalty only partly compensates for this. This means that a translation that matches the reference closely but leaves out part of its content may score higher than a longer translation that covers more of the reference but also contains more unmatched n-grams.

The F-score, which is the harmonic mean of precision and recall (optionally weighted), can be used to mitigate this bias. However, the F-score is not part of the BLEU score calculation.
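
To make the difference between precision, recall, and F-score concrete, here is a small sketch that computes all three at the unigram level. It is not part of BLEU; the function name `unigram_prf` and the example sentences are assumptions for illustration.

```python
from collections import Counter

def unigram_prf(candidate, reference):
    """Unigram precision, recall, and balanced F-score (F1) for token lists."""
    cand, ref = Counter(candidate), Counter(reference)
    overlap = sum((cand & ref).values())  # matched tokens, with clipped counts
    precision = overlap / len(candidate) if candidate else 0.0
    recall = overlap / len(reference) if reference else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# A very short candidate: everything it says is correct, but most content is missing.
candidate = "the cat".split()
reference = "the cat is on the mat".split()
precision, recall, f1 = unigram_prf(candidate, reference)
print(precision, round(recall, 3), round(f1, 3))
```

Here the precision is perfect while the recall is only one third, and the F-score of 0.5 reflects the missing content that precision alone would hide.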

Real-World Applications of BLEU Scores

BLEU scores are widely used in machine translation evaluation, but they have limitations in real-world applications.

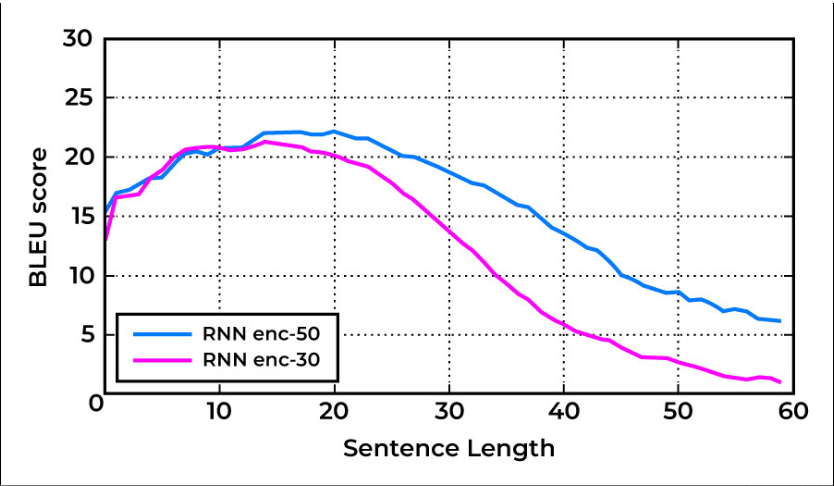

In practice, BLEU scores can be sensitive to the choice of n-gram size, and the optimal n-gram size may vary depending on the language pair and the specific translation task.
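
The effect of the n-gram size can be seen with a minimal sentence-level sketch that uses a single reference, uniform weights, and no smoothing. The names `ngrams`, `clipped_precision`, `bleu`, and the `max_n` parameter are illustrative rather than taken from any library, and the sentences are made up.

```python
import math
from collections import Counter

def ngrams(tokens, n):
    """Return the contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def clipped_precision(candidate, reference, n):
    """Share of candidate n-grams found in the reference, with clipped counts."""
    cand_counts = Counter(ngrams(candidate, n))
    ref_counts = Counter(ngrams(reference, n))
    total = sum(cand_counts.values())
    if total == 0:
        return 0.0
    return sum((cand_counts & ref_counts).values()) / total

def bleu(candidate, reference, max_n=4):
    """Single-reference, sentence-level BLEU with uniform weights, no smoothing."""
    precisions = [clipped_precision(candidate, reference, n)
                  for n in range(1, max_n + 1)]
    if min(precisions) == 0.0:
        return 0.0  # one zero precision zeroes the geometric mean
    geo_mean = math.exp(sum(math.log(p) for p in precisions) / max_n)
    c, r = len(candidate), len(reference)
    bp = 1.0 if c > r else math.exp(1.0 - r / c)  # brevity penalty
    return bp * geo_mean

candidate = "the cat sat on the mat".split()
reference = "the cat is on the mat".split()
for max_n in (1, 2, 3, 4):
    print(max_n, round(bleu(candidate, reference, max_n), 4))
```

For this pair the score falls as longer n-grams are included and reaches zero at `max_n=4`, because the two sentences share no 4-gram even though they differ in a single word.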

Additionally, BLEU scores may not accurately capture the nuances of human translation, which can involve context-dependent and figurative language that’s difficult to quantify.

Applications of BLEU in Machine Translation

In the world of machine translation, a multitude of evaluation metrics exists to assess the efficacy of translation systems. However, the BLEU score stands out as a widely accepted metric, employed in diverse applications to gauge the quality of translation outputs. From evaluating English translations to assessing machine translation for non-English languages like Chinese and Spanish, BLEU’s versatility has made it an indispensable tool for researchers and practitioners alike.

Language Support

BLEU’s utility is not limited to a specific language or language pair. It has been applied to various languages, including English, Chinese, and Spanish, to name a few. Because the metric only compares n-grams, it makes no assumptions about a language’s structure, idioms, or syntax, although the text must first be split into tokens, which requires extra care for languages such as Chinese that are written without spaces between words.

In English, BLEU has been extensively used to evaluate machine translation systems for a wide range of domains, including technical and literary texts. Its application in non-English languages has been equally impressive, with researchers successfully adapting BLEU for Chinese and Spanish translation systems.

Tasks beyond Translation

While BLEU is primarily associated with machine translation evaluation, its applications extend beyond translation tasks. In text summarization, BLEU is used to assess summaries generated by summarization systems by comparing them to reference summaries. This gives an indication of how well the summaries reflect the essence of the original text.

Furthermore, BLEU plays a crucial role in question answering systems, where its output is used to evaluate the relevance and accuracy of answers generated by the system. In dialogue generation tasks, BLEU serves as a metric to assess the coherence and fluency of generated dialogue.

Research and Development

BLEU’s significance extends to the realm of machine translation research and development. In benchmarking new models, BLEU serves as a yardstick to measure their performance. By evaluating the strengths and weaknesses of a model using BLEU, researchers can make informed decisions about model improvements and optimization.

In addition, BLEU is employed in testing and evaluating the robustness of machine translation systems under various conditions, such as language variability and domain adaptation. By subjecting models to rigorous testing using BLEU, researchers can identify areas that require improvement and optimize the systems accordingly.

Limitations and Challenges of BLEU

The BLEU score, although a widely used and effective method for evaluating machine translation, is not without its limitations and challenges. As with any evaluation metric, there are certain shortcomings that must be considered when using BLEU to assess the accuracy of machine translation systems.

The primary criticism of BLEU is its reliance on n-gram precision, which focuses on measuring the overlap between the predicted translation and the reference translation. However, this approach neglects the syntax and semantics of the language, leading to a superficial assessment of the translation quality.

Reliance on N-gram Precision

BLEU’s reliance on n-gram precision has been a subject of criticism. While it is able to capture the co-occurrence of words and phrases, it fails to capture the deeper syntactic and semantic structures of language.

This is particularly problematic when dealing with non-trivial cases, where the translation may not simply be a matter of swapping out individual words, but rather re-arranging the entire sentence structure to convey the intended meaning.

Neglect of Syntax and Semantics

The BLEU score neglects the syntax and semantics of language, which can lead to a shallow assessment of translation quality. By focusing solely on n-gram precision, BLEU fails to capture the nuances of language, such as context, idioms, and figurative language.

For example, the sentences “The dog chased the cat” and “The cat was chased by the dog” convey the same meaning, but they share few n-grams beyond individual words. BLEU would score one poorly against the other, even though a human would accept either as a translation of the same source sentence.
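
Counting the overlap for this sentence pair directly shows how much of it BLEU can see. The sketch below treats the passive sentence as the machine output and the active sentence as the reference; the function names `ngram_counts` and `overlap_precision` are hypothetical, and lowercasing plus whitespace splitting is a simplifying assumption.

```python
from collections import Counter

def ngram_counts(sentence, n):
    """Count the n-grams of a lowercased, whitespace-tokenized sentence."""
    tokens = sentence.lower().split()
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def overlap_precision(candidate, reference, n):
    """Clipped n-gram precision of the candidate sentence against the reference."""
    cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
    total = sum(cand.values())
    return sum((cand & ref).values()) / total if total else 0.0

active = "The dog chased the cat"
passive = "The cat was chased by the dog"

for n in (1, 2, 3):
    shared = sorted(ngram_counts(passive, n) & ngram_counts(active, n))
    print(n, shared, round(overlap_precision(passive, active, n), 3))
```

Most individual words match, but only two bigrams and no trigrams do, so the n-gram precisions drop sharply with n even though the meaning is unchanged.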

Out-of-Vocabulary Words, Punctuation, and Special Characters

BLEU scores can also be distorted by out-of-vocabulary words, which are words or phrases that do not appear in the translation system’s training data. In such cases, the system may fall back on context or paraphrasing to convey the intended meaning, and BLEU gives no credit for a paraphrase whose words differ from the reference.

Additionally, punctuation and special characters, which are often used to disambiguate language and convey tone, affect the score through tokenization: whether they are split off as separate tokens changes which n-grams match. Comparing BLEU scores computed with different tokenization can therefore lead to a misleading assessment of translation quality.
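
The influence of punctuation handling can be illustrated with a small sketch that compares two tokenization choices on the same sentence pair. The helper names and the simple regular expression are assumptions for illustration; real evaluation tools use more elaborate tokenizers.

```python
import re
from collections import Counter

def whitespace_tokens(text):
    """Split on whitespace only, leaving punctuation attached to words."""
    return text.split()

def punct_split_tokens(text):
    """Split words and punctuation marks into separate tokens."""
    return re.findall(r"\w+|[^\w\s]", text)

def unigram_precision(candidate_tokens, reference_tokens):
    """Clipped unigram precision of candidate tokens against reference tokens."""
    cand, ref = Counter(candidate_tokens), Counter(reference_tokens)
    total = sum(cand.values())
    return sum((cand & ref).values()) / total if total else 0.0

candidate = "Hello world"
reference = "Hello, world!"

for tokenize in (whitespace_tokens, punct_split_tokens):
    score = unigram_precision(tokenize(candidate), tokenize(reference))
    print(tokenize.__name__, score)
```

With whitespace splitting, "Hello," and "world!" do not match "Hello" and "world", so the precision is 0.0; once punctuation is split off, both words match and the precision is 1.0.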

Strategies for Improving BLEU Scores

To improve BLEU scores, several strategies can be employed. One approach is to use word embeddings, which can capture the semantic relationships between words and improve the accuracy of translation.

Another approach is to use named entity recognition (NER), which can identify and disambiguate named entities in the translation, such as people, places, and organizations. This can improve the accuracy of translation and reduce the reliance on out-of-vocabulary words.

Finally, sentiment analysis can be used to capture the tone and sentiment of the original text and ensure that it is accurately conveyed in the translated text. This can help to improve the coherence and consistency of the translation.

Word Embeddings

Word embeddings are a technique used to capture the semantic relationships between words. By representing each word as a vector in a high-dimensional space, word embeddings can capture the nuances of language, including context, idioms, and figurative language.

Word embeddings can be used to improve BLEU scores by allowing the translation model to better capture the meaning of words and phrases, and to reduce the reliance on out-of-vocabulary words.

“The meaning of a word is not fixed, it’s dynamic and evolves over time, and word embeddings are a powerful tool for capturing that dynamic relationship.”

Named Entity Recognition (NER)

Named entity recognition (NER) is a technique used to identify and disambiguate named entities in the translation. By identifying and labeling named entities, NER can improve the accuracy of translation and reduce the reliance on out-of-vocabulary words.

For example, in the sentence “John Smith is a software engineer at Google”, the NER model would identify “John Smith” as a person, “software engineer” as a profession, and “Google” as an organization. This allows the model to accurately convey the meaning of the sentence and to reduce the reliance on out-of-vocabulary words.

Sentiment Analysis

Sentiment analysis is a technique used to capture the tone and sentiment of the original text and to ensure that it is accurately conveyed in the translated text. By analyzing the sentiment of the text, sentiment analysis can help to improve the coherence and consistency of the translation.

For example, in the sentence “I love my new car!”, the sentiment analysis model would identify the sentiment as positive, indicating a high level of enthusiasm and excitement. The model would then use this information to translate the sentence in a way that conveys the intended tone and sentiment.

Future Directions of BLEU Research

As we continue to advance in the field of machine translation, it’s essential to explore the potential future directions of BLEU research. This will enable us to improve the accuracy and effectiveness of machine translation systems. BLEU has been a pioneering evaluation metric in the field, and its continued development is crucial for pushing the boundaries of machine translation.

Integration with Other Evaluation Metrics

BLEU has been primarily used as a standalone evaluation metric for machine translation. However, recent research has shown that combining BLEU with other evaluation metrics can provide a more comprehensive and accurate assessment of machine translation systems. For instance, combining BLEU with metrics such as ROUGE (Recall-Oriented Understudy for Gisting Evaluation) and METEOR (Metric for Evaluation of Translation with Explicit ORdering) can provide a more nuanced evaluation of machine translation systems.

Used together in this way, the metrics can help identify the strengths and weaknesses of machine translation systems and provide a more accurate evaluation of their performance.

Domain Adaptation and Transfer Learning

Domain adaptation and transfer learning are areas of research in machine translation that can significantly improve the BLEU scores that systems achieve. Domain adaptation involves adapting a machine translation system to a new domain or task, while transfer learning involves leveraging the knowledge gained from one task or domain to improve the performance on another task or domain. By leveraging domain adaptation and transfer learning, researchers can improve translation quality, as reflected in BLEU scores, and enable machine translation systems to perform better on low-resource languages.

Human-Computer Interaction and Human-Machine Translation

The increasing use of machine translation in human-computer interaction and human-machine translation presents new challenges and opportunities for BLEU research. Human-machine translation requires a more nuanced evaluation metric that can capture the complexities of human-computer interaction. By developing BLEU-based metrics that can capture the subtleties of human-computer interaction, researchers can improve the performance of machine translation systems and enhance the user experience.

Conclusion

BLEU has revolutionized the field of machine translation by providing a reliable and objective method for evaluating translation quality. However, it is not without its limitations, and researchers are continually working to improve and refine the method to better capture the nuances of human language.

FAQ Summary

What is the BLEU method?

The BLEU method is a standard evaluation metric for machine translation systems, which compares the output of a machine translation system to a human translation.

How is the BLEU score calculated?

The BLEU score is calculated by combining the n-gram precisions of the machine translation output, measured against the human translation, with a brevity penalty based on the ratio of their lengths.

What are the limitations of the BLEU method?

The BLEU method has limitations, including its reliance on n-gram precision and its neglect of syntax and semantics. It can also be misled by out-of-vocabulary words, punctuation, and special characters.