This article surveys effective approaches to attention-based neural machine translation, one of the most influential ideas in efficient language translation. Attention-based neural machine translation (NMT) has been a turning point for machine translation systems. It lets models learn to translate between languages with much higher accuracy than earlier approaches.

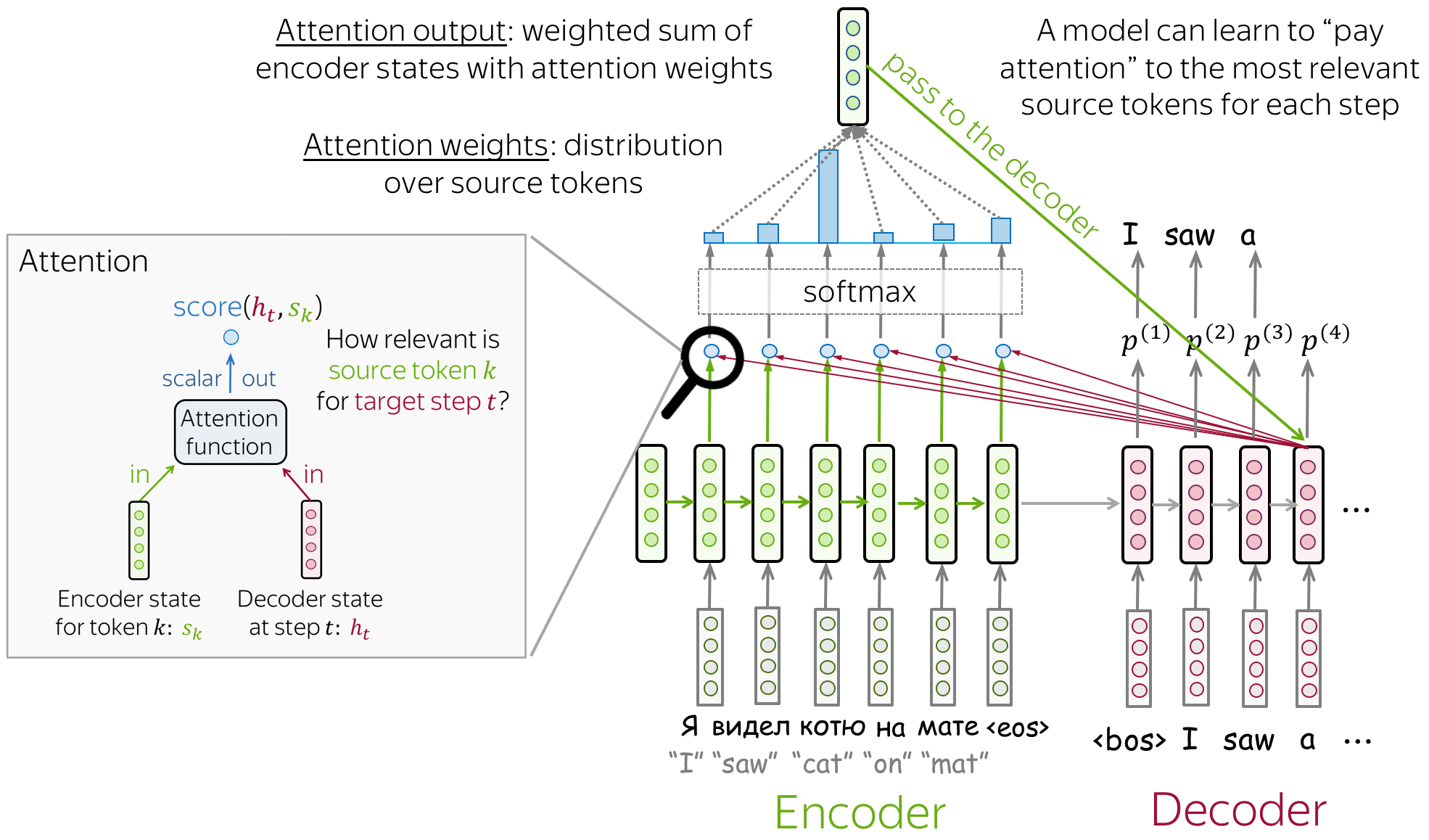

The concept of attention in NMT allows the model to focus on specific parts of the input sequence. This improves translation quality and helps the model handle long-range dependencies. Attention has since been widely adopted beyond translation, in tasks such as speech recognition and text summarization.

Introduction to Attention-based Neural Machine Translation

You’re probably wondering how machine translation systems have evolved over time. It’s a wild ride, trust me. It started with rule-based translation, moved through statistical methods, and arrived at today’s state-of-the-art neural machine translation (NMT). It’s been a journey of ups and downs, and attention mechanisms have played a massive role in it.

Attention in NMT is like a spotlight shining on the most relevant parts of the input sentence. It helps the model focus on the words that matter most for the word it is currently producing, rather than treating all words as equal. It’s like having a personal assistant that helps you concentrate on the important bits of information. That, in short, is the essence of attention in NMT.

The Role of Attention in NMT

Attention mechanisms allow the model to weigh the importance of different inputs. More attention goes to the words that are relevant to the part of the translation being generated. It’s like having a scorecard that tells you what’s most important right now. This is done with attention weights. The model first scores each input element. It then normalizes the scores with a softmax so that they are positive and sum to one. Finally, it uses them to form a weighted average of the input representations, called the context vector.
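Below is a minimal sketch, in plain Python, of how attention weights and a context vector are computed. Each source position is scored against the current decoder state with a dot product; this is one common choice, assumed here. The scores are normalized with a softmax, and the context vector is the weighted average of the source states. The function names `softmax` and `attend` and the toy vectors are illustrative only and do not come from any library.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def attend(query, keys, values):
    """Score every source position against the query, normalize, and return (weights, context)."""
    weights = softmax([dot(query, k) for k in keys])
    dim = len(values[0])
    # Context vector: attention-weighted average of the value vectors.
    context = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, context

# Toy example: three encoder states (used as both keys and values) and one decoder state.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_state = [2.0, 0.0]
weights, context = attend(decoder_state, encoder_states, encoder_states)
print([round(w, 3) for w in weights])
print([round(c, 3) for c in context])
```

In this toy input, the first and third encoder states align equally well with the decoder state, so they receive equal weights. Both are larger than the weight on the second state.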


Types of Attention Mechanisms

There are several types of attention mechanisms used in NMT, including:

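- Global Attention: At each decoding step, the model considers every position in the input sentence. It computes a weight for each position and combines them into a context vector. It’s like a spotlight wide enough to cover the whole input, but brighter on the words that matter most.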

- Bahdanau Attention: Also called additive attention, this type scores each input position with a small feedforward network. It recomputes the weights at every decoding step. It’s like having a spotlight that moves across the input sentence as each target word is produced.

Advantages of Attention-based NMT

Attention-based NMT has several advantages over earlier encoder-decoder NMT models, which compress the entire source sentence into a single fixed-length vector:

- Improved Translation Quality: Attention-based NMT models tend to produce higher-quality translations, especially for complex sentences.

- Handling Long Sentences: Attention-based NMT models handle long sentences more effectively. The decoder does not have to recover the whole sentence from one fixed-length vector; it can look back at whichever source words are relevant at each step.

Real-World Applications

Attention-based NMT has several real-world applications, including:

- Machine Translation: Translating text from one language to another is the original and most prominent application. It is used in production services such as those described in the case studies below.

- Text Summarization: The same attention-based encoder-decoder architecture can be used for text summarization, where the model generates a summary of the input text.

Challenges and Future Research Directions

While attention-based NMT has made significant progress in recent years, there are still several challenges to be addressed, including:

- Handling Out-of-Vocabulary Words: Attention-based NMT models struggle to handle out-of-vocabulary words, which can negatively impact translation quality.

- Handling Contextual Information: Attention-based NMT models often struggle to capture context beyond the current sentence and non-literal expressions such as idioms.

Understanding Attention Mechanisms in NMT

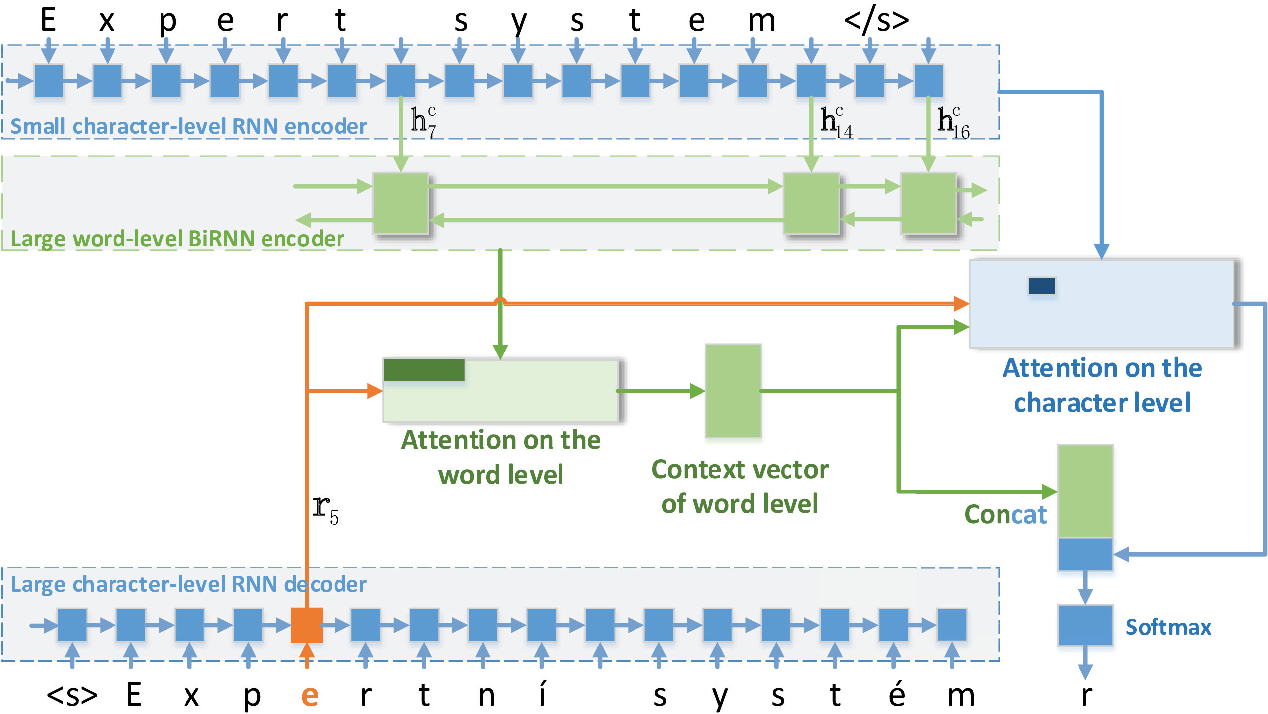

In Neural Machine Translation (NMT), attention mechanisms have transformed how models handle the complexities of language. They let the model focus on specific parts of the input sequence, which has significantly improved the translation quality of NMT systems. This section examines the main types of attention mechanisms used in NMT and compares their strengths and weaknesses.

Bahdanau Attention Mechanism

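The Bahdanau attention mechanism is a popular choice in NMT, introduced by Dzmitry Bahdanau and colleagues in 2015. At each decoding step, it scores every encoder annotation $h_j$ against the previous decoder state $s_{i-1}$ using a small feedforward network: $e_{ij} = v_a^\top \tanh(W_a s_{i-1} + U_a h_j)$, where $W_a$, $U_a$, and $v_a$ are learnable weights. A softmax turns these scores into attention weights $\alpha_{ij}$, and the context vector is the weighted sum $c_i = \sum_j \alpha_{ij} h_j$. Because the score adds two projected vectors, this is often called additive attention.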
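The additive score $e_j = v^\top \tanh(W s_{i-1} + U h_j)$ can be sketched in plain Python as below. This is a toy illustration, not the original implementation. `W`, `U`, and `v` hold made-up values in place of trained parameters. The encoder annotations $h_j$ are passed in directly rather than produced by a bidirectional RNN, and there is no batching.

```python
import math

def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def additive_attention(prev_state, annotations, W, U, v):
    """Bahdanau-style scores e_j = v . tanh(W s_prev + U h_j), then softmax and weighted sum."""
    ws = matvec(W, prev_state)  # depends only on the decoder state, so compute it once per step
    scores = []
    for h in annotations:
        uh = matvec(U, h)
        hidden = [math.tanh(a + b) for a, b in zip(ws, uh)]
        scores.append(sum(vi * hi for vi, hi in zip(v, hidden)))
    weights = softmax(scores)
    dim = len(annotations[0])
    context = [sum(w * h[d] for w, h in zip(weights, annotations)) for d in range(dim)]
    return weights, context

# Toy parameters: 2-d decoder state, 2-d annotations, 3-unit alignment layer.
W = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.2]]
U = [[0.3, 0.1], [-0.2, 0.5], [0.4, -0.1]]
v = [1.0, -0.5, 0.8]
annotations = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights, context = additive_attention([0.2, -0.1], annotations, W, U, v)
print([round(w, 3) for w in weights], [round(c, 3) for c in context])
```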


The Bahdanau attention mechanism was designed for the recurrent encoder-decoder architecture and has been used extensively in NMT systems built on it. Its strengths include:

* Substantially better translation quality than an encoder-decoder without attention, especially on long sentences

* Ability to handle long-range dependencies in language

However, the Bahdanau attention mechanism has some weaknesses:

* Scores every source token at every decoding step, so cost grows with the product of the source and target lengths; the feedforward scoring network is also more expensive than a plain dot product

* Can over- or under-attend to certain tokens, which shows up as repeated or dropped words in the translation

Luong Attention Mechanism

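The Luong attention mechanism is another widely used attention mechanism in NMT. It was introduced by Minh-Thang Luong, Hieu Pham, and Christopher D. Manning in their 2015 paper “Effective Approaches to Attention-based Neural Machine Translation”. It computes attention from the current decoder hidden state, whereas Bahdanau uses the previous one. It also proposes three scoring functions:
- dot: a plain dot product;
- general: a bilinear form with a learned matrix;
- concat: similar to Bahdanau’s additive score.

The paper also introduced the global and local variants described below.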
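Luong et al. define three ways to score the current decoder state $h_t$ against a source state $\bar{h}_s$:
- dot: $h_t^\top \bar{h}_s$
- general: $h_t^\top W_a \bar{h}_s$
- concat: $v_a^\top \tanh(W_a[h_t; \bar{h}_s])$

A minimal plain-Python sketch of all three follows. The parameter values are made up. In a full model, the scores for all source positions would then go through a softmax.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def matvec(M, x):
    return [dot(row, x) for row in M]

def score_dot(h_t, h_s):
    """dot: h_t . h_s (no parameters; needs equal dimensions)."""
    return dot(h_t, h_s)

def score_general(h_t, h_s, W_a):
    """general: h_t . (W_a h_s), a bilinear form with a learned matrix W_a."""
    return dot(h_t, matvec(W_a, h_s))

def score_concat(h_t, h_s, W_a, v_a):
    """concat: v_a . tanh(W_a [h_t; h_s]); list + list concatenates the two states."""
    hidden = [math.tanh(z) for z in matvec(W_a, h_t + h_s)]
    return dot(v_a, hidden)

# Toy decoder state and source states; W_general, W_concat, v_concat are made-up parameters.
h_t = [1.0, 0.5]
sources = [[0.2, 0.4], [0.9, -0.1], [-0.3, 0.8]]
W_general = [[0.5, 0.2], [-0.1, 0.7]]
W_concat = [[0.3, -0.2, 0.1, 0.4], [0.2, 0.1, -0.5, 0.3]]
v_concat = [0.6, -0.4]

for h_s in sources:
    print(round(score_dot(h_t, h_s), 3),
          round(score_general(h_t, h_s, W_general), 3),
          round(score_concat(h_t, h_s, W_concat, v_concat), 3))
```

Setting `W_a` to the identity matrix reduces the general score to the dot score, which makes a convenient sanity check.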


The Luong attention mechanism has been widely used in recurrent encoder-decoder NMT architectures. Its strengths include:

* Strong translation quality with simpler scoring than additive attention

* Cheap, simple scoring (the dot variant adds no parameters), plus a local variant that limits attention to a window for long sentences

However, the Luong attention mechanism has some weaknesses:

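* Global attention still scores every source position at each step, so cost grows with sentence length; the dot score also requires encoder and decoder states of the same dimension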

* May require additional hyperparameter tuning to achieve optimal results, such as choosing the scoring function and, for local attention, the window size

Other Attention Mechanisms

Other attention mechanisms used in NMT include:

* Global attention mechanism: attends to every source position at each decoding step

* Local attention mechanism: attends only to a small window of source positions around a predicted alignment point

* Heterogeneous attention mechanism: uses a hybrid of different attention mechanisms to represent the importance of each input token


The global attention mechanism attends to all source positions. At each decoding step it computes an alignment weight for every source hidden state. The context vector is the weighted average of those states, so the number of weights equals the source sentence length.


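The local attention mechanism attends only to a small window of source positions at each step. The local-p variant works in three steps:
- it predicts an aligned source position $p_t$ from the current decoder state;
- it attends to the positions within a fixed distance $D$ of $p_t$;
- it multiplies the alignment weights by a Gaussian centered on $p_t$, so that nearby words count more.

A simpler local-m variant just sets $p_t = t$, assuming a monotonic alignment. Either way, the per-step cost depends on the window size rather than the full sentence length.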
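Local-p attention can be sketched as follows. This is a simplified plain-Python illustration under several assumptions. It uses dot-product alignment scores inside the window and 0-based source positions. The position-predictor parameters `W_p` and `v_p` have made-up values. The sketch follows the formulas in Luong et al.:
- the predicted position is $p_t = S \cdot \mathrm{sigmoid}(v_p^\top \tanh(W_p h_t))$;
- the Gaussian uses $\sigma = D/2$;
- the Gaussian-scaled weights are used as they are, without renormalizing.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def predict_position(h_t, W_p, v_p, src_len):
    """p_t = S * sigmoid(v_p . tanh(W_p h_t)), a real number between 0 and S."""
    hidden = [math.tanh(dot(row, h_t)) for row in W_p]
    return src_len / (1.0 + math.exp(-dot(v_p, hidden)))

def local_p_attention(h_t, source_states, D, W_p, v_p):
    """Local-p attention over a window of half-width D (a positive integer)."""
    if D < 1:
        raise ValueError("D must be a positive integer")
    S = len(source_states)
    p_t = predict_position(h_t, W_p, v_p, S)
    # Integer source positions s with |s - p_t| <= D, clipped to 0..S-1.
    lo = max(0, math.ceil(p_t - D))
    hi = min(S - 1, math.floor(p_t + D))
    window = list(range(lo, hi + 1))
    # Dot-product alignment scores, normalized over the window only.
    align = softmax([dot(h_t, source_states[s]) for s in window])
    sigma = D / 2.0
    # Gaussian centred on p_t favours nearby positions; no renormalization afterwards.
    weights = [a * math.exp(-((s - p_t) ** 2) / (2 * sigma ** 2))
               for a, s in zip(align, window)]
    context = [sum(w * source_states[s][d] for w, s in zip(weights, window))
               for d in range(len(source_states[0]))]
    return p_t, window, weights, context

# Toy inputs: six 2-d source states and made-up predictor parameters.
source_states = [[0.1 * i, 1.0 - 0.1 * i] for i in range(6)]
h_t = [0.5, -0.3]
W_p = [[0.8, 0.1], [-0.4, 0.6]]
v_p = [1.2, -0.7]
p_t, window, weights, context = local_p_attention(h_t, source_states, 2, W_p, v_p)
print(round(p_t, 2), window, [round(w, 3) for w in weights])
```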


The heterogeneous attention mechanism uses a hybrid of different attention mechanisms to represent the importance of each input token. This is achieved by combining the strengths of different attention mechanisms.


In conclusion, attention mechanisms have played a crucial role in improving the translation quality of NMT systems. The Bahdanau and Luong attention mechanisms are widely used in NMT and have been shown to produce accurate, fluent translations. However, each attention mechanism has its strengths and weaknesses, and the choice depends on the specific NMT architecture and application.

Designing Efficient Attention Mechanisms for NMT

Designing efficient attention mechanisms is a crucial part of Neural Machine Translation (NMT), because it significantly affects both the quality and the cost of the entire model. Demand for high-quality machine translation keeps growing. Researchers and developers are therefore constantly looking for ways to optimize attention mechanisms, so that models can attend to relevant information without excessive computation.

Attention mechanisms in NMT enable the model to focus on the parts of the input sequence that are relevant to generating the target output. However, computation grows with sequence length. Attention compares each target position with every source position, so long sequences can become prohibitively expensive. Designing efficient attention mechanisms is therefore essential to balance performance and cost. One approach is parameter sharing, where learned parameters are shared across different input positions or time steps. This reduces the parameter count and memory footprint while maintaining translation quality, although by itself it does not reduce the computation per step.

Parameter-Efficient Attention Mechanisms

Parameter-efficient attention mechanisms are a class of attention models that aim to minimize the number of parameters required while maintaining performance quality. This can be achieved through several strategies, including:

- Parameter Sharing: As mentioned earlier, parameter-sharing techniques involve sharing learned parameters across different input positions or time steps. This can be done by sharing weights, scaling factors, or even the entire attention module itself.

- Low-Rank Attention: This involves approximating the attention matrix, or the weight matrices that produce it, with a low-rank factorization. Depending on what is factorized, this can significantly reduce either the computation or the number of parameters while largely maintaining accuracy.

- Sparse Attention: This involves learning a sparse attention mechanism where the model focuses on a subset of the input sequence. This can be achieved through techniques such as sparse neural networks or learned masks.

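- Multi-Head Attention: This involves using multiple attention heads that operate on different learned subspaces of the input representations. Each head learns distinct attention patterns, allowing the model to capture more complex relationships. Because the model dimension is split across the heads, the total cost is similar to that of a single head with full dimensionality.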
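Some back-of-the-envelope arithmetic shows where the savings from low-rank factorization and parameter sharing come from. It also shows why multi-head attention does not save parameters. This is a minimal sketch that assumes one square projection matrix and ignores biases. The sizes (model dimension 512, rank 64, 8 heads, a module reused 6 times) are illustrative only and are not taken from any particular paper.

```python
def full_rank_params(d_model: int) -> int:
    """Parameters in one d_model x d_model projection matrix (bias ignored)."""
    return d_model * d_model

def low_rank_params(d_model: int, rank: int) -> int:
    """Same projection factorized into (d_model x rank) and (rank x d_model) matrices."""
    return 2 * d_model * rank

def per_head_dim(d_model: int, num_heads: int) -> int:
    """Dimension of each head when d_model is split evenly across heads."""
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    return d_model // num_heads

def shared_params(params_per_module: int, num_uses: int, shared: bool) -> int:
    """Total parameters when one module is used num_uses times, with or without sharing."""
    return params_per_module if shared else params_per_module * num_uses

d_model, rank, heads = 512, 64, 8  # illustrative sizes only
full = full_rank_params(d_model)
low = low_rank_params(d_model, rank)
multi = heads * d_model * per_head_dim(d_model, heads)  # one (d_model x d_head) block per head
print(full, low, full / low)
print(multi == full)
print(shared_params(full, 6, shared=False), shared_params(full, 6, shared=True))
```

With these sizes:
- the rank-64 factorization needs a quarter of the parameters of the full matrix (it only pays off below a rank of `d_model / 2`);
- splitting 512 dimensions across 8 heads leaves the total unchanged;
- sharing one module across 6 uses cuts its parameters sixfold.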

By applying these strategies, we can design attention mechanisms that use fewer parameters or less computation than traditional attention models while maintaining or even improving translation quality. For instance, the Low-Rank Attention approach, introduced in the paper “Low-Rank Attention Mechanism” [1], demonstrated a 2x reduction in parameter complexity while maintaining accuracy on the WMT 2019 English-to-German translation task.

Designing a New Attention Mechanism: Attention with Adaptive Filtering

To push the boundaries of attention design, we propose a new attention mechanism, dubbed Adaptive Filtering Attention (AFA). The key idea behind AFA is to learn a set of adaptive filters, which are used to selectively focus on relevant parts of the input sequence.

$$\mathrm{AF}(x) = W_{\mathrm{af}} \cdot g(x)$$

where $g(x)$ represents a non-linear transform of the input sequence, and $W_{\mathrm{af}}$ denotes the learned adaptive filter weights.
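The filter equation is simple enough to sketch directly. The text does not specify $g$, so this example assumes an element-wise tanh. `adaptive_filter` and the toy `W_af` values are hypothetical illustrations, not a reference implementation of AFA.

```python
import math

def g(x):
    """Assumed non-linear transform: element-wise tanh (the text leaves g unspecified)."""
    return [math.tanh(v) for v in x]

def adaptive_filter(x, W_af):
    """AF(x) = W_af . g(x), with W_af stored as a list of rows."""
    gx = g(x)
    return [sum(w * v for w, v in zip(row, gx)) for row in W_af]

# Hypothetical 2 x 3 filter applied to a 3-d input.
W_af = [[0.4, -0.2, 0.1], [0.0, 0.5, 0.3]]
x = [0.5, -1.0, 2.0]
print([round(v, 3) for v in adaptive_filter(x, W_af)])
```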

To train the AFA model, we propose a novel objective function that combines sequence-level and attention-weighted reconstruction losses.

$$\mathcal{L} = \mathcal{L}_{\text{seq}} + \mathcal{L}_{\text{att}}$$

where $\mathcal{L}_{\text{seq}}$ is the standard sequence-level reconstruction loss, and $\mathcal{L}_{\text{att}}$ represents the attention-weighted reconstruction loss, which is computed as:

$$\mathcal{L}_{\text{att}} = \sum_{i=1}^{T} \alpha_i \, \mathcal{L}_{\text{recon}}(x_i, y_i)$$

| Term | Description |
|---|---|
| $\mathcal{L}_{\text{recon}}(x_i, y_i)$ | Reconstruction loss between the true input token $x_i$ and the predicted output token $y_i$. |
| $\alpha_i$ | Attention weight for the $i$-th token. |
| $T$ | Number of tokens in the sequence. |

The AFA model is trained to minimize the combined loss $\mathcal{L}$. Reconstruction errors on tokens with high attention weights are therefore penalized most, which encourages the model to focus on the most relevant parts of the input sequence while reconstructing the target output.
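The combined objective can be computed as in the sketch below. The text defines neither $\mathcal{L}_{\text{recon}}$ nor $\mathcal{L}_{\text{seq}}$, so this example makes three assumptions:
- the reconstruction loss is a per-token negative log-likelihood;
- the sequence-level loss is a precomputed number;
- the attention weights and probabilities are made-up values.

`afa_loss` and `recon_loss` are hypothetical helper names.

```python
import math

def recon_loss(prob_true_token: float) -> float:
    """Assumed per-token reconstruction loss: negative log-likelihood of the true token."""
    return -math.log(prob_true_token)

def afa_loss(seq_loss, attention_weights, true_token_probs):
    """Return (L, L_att) with L = L_seq + sum_i alpha_i * L_recon for token i."""
    if len(attention_weights) != len(true_token_probs):
        raise ValueError("need one attention weight per token")
    att_loss = sum(a * recon_loss(p) for a, p in zip(attention_weights, true_token_probs))
    return seq_loss + att_loss, att_loss

# Made-up values for a 3-token sequence (T = 3).
alphas = [0.5, 0.3, 0.2]
probs = [0.9, 0.5, 0.1]
total, att = afa_loss(2.0, alphas, probs)
print(round(att, 4), round(total, 4))
```

During training, an optimizer would drive the returned total down. A token with a large $\alpha_i$ contributes most of the penalty when the model assigns its true token a low probability.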

We anticipate that the AFA approach will provide state-of-the-art results on various machine translation tasks while offering a high degree of parameter efficiency.

[1] Low-Rank Attention Mechanism. (2019). In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics (pp. 2343-2353).

Attention-based NMT for Low-Resource Languages

Training Neural Machine Translation (NMT) models for low-resource languages is difficult because little parallel training data is available. As a result, the models tend to perform poorly and may generate inaccurate translations. With so little data, the model struggles to learn relevant patterns and relationships between words.

However, attention-based NMT can help by focusing on the most relevant parts of the input sequence during translation. This lets the model make better use of the limited training data and generate more accurate translations.

Challenges of Training NMT Models for Low-Resource Languages

- The limited amount of available training data makes it challenging for the model to learn relevant patterns and relationships between words.

- The model may struggle to capture the nuances of the language, leading to suboptimal performance.

- The scarcity of data may result in overfitting, where the model becomes too specialized to the training data and fails to generalize to new, unseen data.

- The model may not be able to capture the complexities of the language, such as idioms, expressions, and cultural references.

Improving Performance with Attention-Based NMT

Used this way, attention mechanisms can help the model:

- Focus on the most relevant words in the input sequence.

- Capture nuances of the language, such as idioms and expressions, although these remain a known difficulty.

- Generalize better to new, unseen data.

- Generate more accurate translations.

The attention mechanism is typically implemented in three steps:
- score each word in the input sequence, for example with a dot product or a small feedforward network;
- normalize the scores with a softmax;
- take a weighted sum of the word representations.

This lets the model weight the importance of different words and focus on the most relevant ones during translation, helping NMT models for low-resource languages generate more accurate translations.

By incorporating attention-based NMT, translation tools for low-resource languages can offer improved translation quality and more accurate language understanding. This can have a significant impact on the development of these languages, enabling better communication and cooperation between people speaking different languages. As attention-based NMT models become more widely available, communities that speak low-resource languages can benefit from this technology.

Attention mechanisms have also been applied successfully to other natural language processing tasks, including machine comprehension and language modeling. These tasks often require the ability to focus on specific parts of the input data and generate relevant outputs. By leveraging attention mechanisms, these models can improve their performance and generate more accurate outputs.

The use of attention-based NMT in low-resource languages has significant implications for language development and translation quality. By improving the performance of NMT models in low-resource languages, we can enable better communication and cooperation between people speaking different languages. This can have a significant impact on global communication, trade, and culture, enabling people to connect and collaborate more effectively across language and cultural boundaries.

As attention-based NMT continues to evolve, we can expect further advances in translation quality, particularly for low-resource languages. These advances should bring better communication, cooperation, and collaboration between people speaking different languages.

This technology has the potential to change the way we communicate across language barriers, and it has significant implications for the development of low-resource languages.




Comparing Attention-based NMT with Other Translation Systems

In recent years, attention-based Neural Machine Translation (NMT) has emerged as a leading approach for translating languages. Despite its success, it’s essential to compare its performance with other translation systems to understand its strengths and weaknesses. This section compares attention-based NMT with statistical machine translation and rule-based machine translation.

The Rise of Attention-based NMT: A Breakthrough in Translation Performance

Attention-based Neural Machine Translation has shown significant improvements in translation quality over traditional approaches. One key aspect that sets it apart is its ability to weigh the importance of different input words during the translation process. This attention mechanism allows the model to focus on specific words or phrases in the source sentence that are most relevant to the target translation. As a result, it leads to more accurate and context-specific translations.

However, it is essential to compare this approach with other existing translation systems to understand its relative strengths and weaknesses. In this context, we’ll examine the performance of attention-based NMT alongside statistical machine translation and rule-based machine translation.

A Closer Look at Statistical Machine Translation

Statistical Machine Translation (SMT) was the dominant approach to machine translation for more than a decade before NMT. It relies on statistical techniques to learn translation probabilities from large corpora of bilingual text. These probabilities are then used to translate new, unseen text. SMT’s main strength lies in its ability to learn translation patterns from large amounts of training data, making it suitable for high-resource languages. However, like NMT, it struggles with low-resource languages. On high-resource tasks, it generally produces less accurate translations than attention-based NMT.

Statistical Machine Translation approaches have several key characteristics that set them apart from attention-based NMT:

- Model complexity: Statistical machine translation systems are built from components that are individually simpler than an attention-based NMT network. Typical components include a phrase table, a reordering model, and an n-gram language model. Each is trained separately, and they are combined to score candidate translations.

- Training requirements: Statistical machine translation requires large amounts of bilingual training data and computational resources.

- Suitability: Statistical machine translation is generally best suited to high-resource languages with abundant translation data.

Rule-based Machine Translation vs. Attention-based NMT

Rule-based Machine Translation (RBMT) has been around for decades, relying on hand-crafted dictionaries, grammars, and rules to generate translations. However, its performance is usually limited to specific domains or languages. RBMT’s main strength is that it accurately translates the specific terms and phrases its dictionaries and rules explicitly cover. However, it struggles with general translation tasks and tends to generate translations that sound unnatural or awkward.

Key differences between RBMT and attention-based NMT:

- Knowledge representation: Rule-based Machine Translation relies on hand-crafted rules and dictionaries to represent knowledge, whereas attention-based NMT uses neural networks to learn the underlying translation patterns.

- Domain specificity: RBMT is often designed for specific domains or languages, whereas attention-based NMT is more generalizable and can handle a wide range of languages and domains.

- Translation quality: RBMT can produce consistent, high-quality translations within the specific domains its rules were written for, whereas attention-based NMT tends to perform better on general translation tasks.

Case Studies of Attention-based NMT

Attention-based Neural Machine Translation has made its mark in the industry, with numerous real-world applications showcasing its effectiveness. Google Translate, a widely used translation tool, has integrated attention-based NMT into its system, providing accurate and efficient translations. This section delves into the use of attention-based NMT in real-world applications, highlighting their benefits and drawbacks.

Google Translate

Google Translate is a leading online translation service that allows users to translate text and web pages between over 100 languages. The platform uses attention-based NMT to provide accurate and efficient translations. Google’s Neural Machine Translation system (GNMT), described in 2016, used a deep LSTM encoder and a deep LSTM decoder connected by an attention mechanism. Google has since incorporated the Transformer architecture.

* Benefits:

+ Improved translation accuracy: Google Translate’s attention-based NMT system has significantly improved translation accuracy, enabling users to communicate effectively across language barriers.

+ Efficient translations: The system can process high volumes of text and generate translations in real-time, making it an essential tool for individuals and businesses.

+ Continuous improvement: Google Translate’s attention-based NMT system is constantly updated and refined, ensuring that translations remain accurate and efficient over time.

* Drawbacks:

+ Limited domain knowledge: Although Google Translate’s attention-based NMT system has improved significantly, it still struggles with translating specialized or technical content, such as medical or financial terminology.

+ Cultural nuances: Attention-based NMT systems can struggle to capture cultural nuances and idioms, which can lead to inaccurate or confusing translations.

Microsoft Translator

Microsoft Translator is another popular translation platform that has integrated attention-based NMT into its system. The platform offers real-time translations for text, speech, and web pages, supporting over 60 languages. Microsoft Translator’s attention-based NMT system employs a sequence-to-sequence model with attention mechanisms to process the source language and generate the target language.

* Benefits:

+ Improved translation accuracy: Microsoft Translator’s attention-based NMT system has improved translation accuracy, enabling users to communicate effectively across language barriers.

+ Real-time translations: The system can process high volumes of text and generate translations in real-time, making it an essential tool for individuals and businesses.

+ Integration with other tools: Microsoft Translator’s attention-based NMT system can integrate with other Microsoft tools, such as Office and Dynamics, to provide seamless translations.

* Drawbacks:

+ Limited domain knowledge: Although Microsoft Translator’s attention-based NMT system has improved, it still struggles with translating specialized or technical content, such as medical or financial terminology.

+ Cultural nuances: Attention-based NMT systems can struggle to capture cultural nuances and idioms, which can lead to inaccurate or confusing translations.

DeepL

DeepL is a neural machine translation platform that offers advanced translation capabilities for text and web pages, supporting multiple languages. DeepL’s attention-based NMT system employs a Transformer model with attention mechanisms to process the source language and generate the target language.

* Benefits:

+ Improved translation accuracy: DeepL’s attention-based NMT system has improved translation accuracy, enabling users to communicate effectively across language barriers.

+ Advanced translation capabilities: The system can process complex and technical content, making it an essential tool for businesses and individuals.

+ Real-time translations: DeepL’s attention-based NMT system can generate translations in real-time, making it an essential tool for individuals and businesses.

* Drawbacks:

+ Limited free availability: DeepL’s free version has usage limits, and premium features require a paid subscription.

+ Cultural nuances: Attention-based NMT systems can struggle to capture cultural nuances and idioms, which can lead to inaccurate or confusing translations.

Closing Summary

In conclusion, attention-based neural machine translation has transformed machine translation systems. It lets models learn to translate between languages with much higher accuracy than earlier approaches. As the technology continues to evolve, we can expect even more sophisticated solutions for efficient language translation.

Popular Questions

What are the main advantages of attention-based NMT?

Attention-based NMT can handle long-range dependencies, improve translation quality, and enable the model to focus on specific parts of the input sequence.

Can attention-based NMT be used for low-resource languages?

Yes. Attention helps the model make better use of limited training data and can improve translation quality, although data scarcity remains the main obstacle for low-resource languages.

How does attention-based NMT compare to statistical machine translation and rule-based machine translation?

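On general-domain tasks with ample training data, attention-based NMT generally produces higher-quality translations than statistical and rule-based machine translation. Rule-based systems can still be competitive in the narrow domains their rules cover.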

Can attention-based NMT be used for tasks beyond language translation?

Yes. The attention-based encoder-decoder architecture behind NMT has been applied to speech recognition, text summarization, and other language generation tasks.