Gaussian Processes for Machine Learning Book Essentials, a comprehensive guide to harnessing the power of Gaussian processes in machine learning models.

This book will delve into the fundamental concept of Gaussian Processes and their applications in machine learning, providing in-depth information on regression and classification using mean and covariance functions.

Gaussian Processes for Machine Learning

Gaussian Processes (GPs) are a powerful, non-parametric approach to Machine Learning (ML), enabling the discovery of complex relationships between input and output variables. This framework places a prior distribution over functions and combines it with the observed data points to produce a posterior distribution over functions.

Gaussian Processes have historical roots in Statistics, for example in geostatistics, where GP-based prediction is known as kriging. In the 1990s, GPs started gaining traction in the ML community due to their ability to model and predict complex, non-linear data. Since then, GPs have been applied in various domains, including robotics, computer vision, and time series analysis. Some notable applications of GPs include robot motion planning, object recognition, and climate modeling.

Core Benefits of Gaussian Processes

The core benefits of using GPs in ML models can be summarized as follows:


Flexibility

A GP defines a probability distribution over functions, and with a suitable covariance function it can represent a wide range of non-linear relationships between input and output variables. The model does not commit to a fixed parametric form, although the choice of kernel still encodes assumptions such as smoothness or periodicity.

Non-Parametric Model

GPs model data without explicitly specifying a parametric form for the underlying relationship. New data points are incorporated by conditioning on them, without re-specifying the model: the GP updates its distribution based on the observed data, combining prior knowledge with new observations.


Uncertainty Quantification

The posterior distribution over functions provides valuable information about the uncertainty of predictions. This can be particularly useful in scenarios where data is noisy or uncertain, enabling decision-makers to consider the risks associated with predicted model outcomes.

Representation in Probability Space

Treating the GP as a prior over functions and combining it with observed data produces a posterior probability distribution. This posterior supports quantitative statements about the unknown function, such as credible intervals for its value at any input.


Scalability

GP approximations can scale to large datasets by employing computational strategies such as stochastic variational inference (SVI) or sparse approximations based on inducing points. Exact GP inference costs O(n^3) time in the number of training points n, so these approximations are what make larger datasets and more complex problems tractable.

Scalable GP Algorithm

The computational efficiency of GP inference can be improved with approximate methods, such as sparse approximations or SVI, which make it possible to process datasets that are too large for exact computation.


Smoothness and Gradient Information

Because differentiation is a linear operation, the derivative of a GP with a differentiable kernel is again a GP, so the posterior can model and use gradient information. The kernel controls how smooth the modeled functions are, and the posterior variance identifies regions of high uncertainty, indicating where additional data or higher resolution may be beneficial.

Gradient-Based Smoothing

The combination of GP uncertainty and function smoothness (captured by the gradient information) results in a probabilistic representation that accounts for both the uncertainty in the predictions and the expected rate of change of the underlying function. This helps to distinguish smooth regions from regions where higher resolution is beneficial.


Prior Knowledge Incorporation

GPs provide a direct means to incorporate prior knowledge through the choice of mean and covariance functions, which together define the prior distribution over functions. This allows models to reflect domain-expert knowledge about relationships, constraints, or functional form, and facilitates domain-specific model formulation.

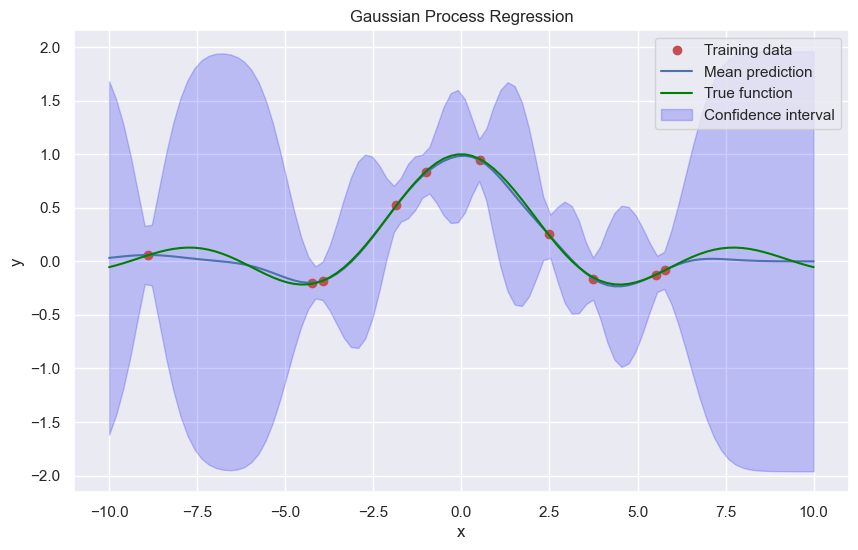

Gaussian Process Regression

Gaussian Process regression (GPR) is a powerful non-parametric model for predicting continuous outputs. It applies the Gaussian Process framework to regression tasks. In this section, we look at GPR in more detail: its mean and covariance functions, its advantages and limitations, and the scenarios where it tends to outperform traditional linear regression.

Gaussian Process regression is based on the concept of a Gaussian Process, which generalizes the Gaussian distribution from finite-dimensional vectors to functions. A Gaussian Process is a collection of random variables, any finite number of which have a joint Gaussian (multivariate normal) distribution. The key idea behind GPR is to model the unknown function with a Gaussian Process, which encodes the uncertainty in the prediction.

Mean and Covariance Functions

In GPR, the mean and covariance functions play a crucial role in defining the Gaussian Process. The mean function, denoted by m(x), represents the expected value of the function at a given input x. The covariance function, denoted by k(x, x’), represents the covariance between the function values at two different inputs x and x’.

The mean function can be any function and is often taken to be zero, while the covariance function must be positive semi-definite. A common choice is the squared exponential kernel, also known as the radial basis function (RBF) kernel, which is popular in GPR because it yields smooth functions:

$$k(x, x') = \sigma^2 \exp\left(-\frac{\lVert x - x' \rVert^2}{2 l^2}\right)$$

where σ^2 is the signal variance, and l is the length scale.
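As a minimal sketch of the kernel above, the following NumPy code evaluates it on a grid of inputs and draws a few functions from the corresponding zero-mean GP prior. The function name `rbf_kernel`, the grid, the jitter value, and the zero mean are illustrative assumptions, not part of the original text.

```python
import numpy as np

def rbf_kernel(A, B, signal_var=1.0, length_scale=1.0):
    """Squared exponential kernel between the rows of A (n, d) and B (m, d)."""
    sq_dists = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * sq_dists / length_scale**2)

rng = np.random.default_rng(0)
X = np.linspace(-3.0, 3.0, 50)[:, None]  # 50 one-dimensional inputs
K = rbf_kernel(X, X, signal_var=1.0, length_scale=1.0)

# A small jitter on the diagonal keeps the Cholesky factorization stable.
L = np.linalg.cholesky(K + 1e-6 * np.eye(len(X)))

# Each column is one function drawn from the zero-mean GP prior.
prior_samples = L @ rng.standard_normal((len(X), 3))
```

A shorter length scale makes the sampled functions vary more quickly, while a larger signal variance increases their amplitude.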

Advantages of GPR

GPR offers several advantages over traditional linear regression:


- Non-parametric: GPR is a non-parametric model, meaning it doesn’t assume a specific functional form for the underlying function. This makes it more flexible and capable of modeling complex relationships.

- Uncertainty estimation: GPR provides uncertainty estimates in the form of predictive variance, which allows for quantifying the confidence in the predictions.

- Handling noisy data: GPR models observation noise explicitly through a noise variance term, so it can fit noisy data without being forced to interpolate every point.
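The predictive mean and variance mentioned in the list above can be sketched as follows, assuming a zero mean function, the RBF kernel, and independent Gaussian noise with variance `noise_var`. The helper names and the toy data are hypothetical, and the returned variance is that of the latent function (add `noise_var` for the variance of a noisy observation).

```python
import numpy as np

def rbf_kernel(A, B, signal_var=1.0, length_scale=1.0):
    """Squared exponential kernel between the rows of A (n, d) and B (m, d)."""
    sq_dists = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * sq_dists / length_scale**2)

def gp_posterior(X_train, y_train, X_test, noise_var=0.01,
                 signal_var=1.0, length_scale=1.0):
    """Posterior mean and variance of the latent function at X_test."""
    K = rbf_kernel(X_train, X_train, signal_var, length_scale)
    K_s = rbf_kernel(X_train, X_test, signal_var, length_scale)
    # Cholesky factor of the noisy kernel matrix, reused for both solves.
    L = np.linalg.cholesky(K + noise_var * np.eye(len(X_train)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = signal_var - np.sum(v**2, axis=0)  # k(x*, x*) equals signal_var
    return mean, np.maximum(var, 0.0)

rng = np.random.default_rng(1)
X_train = rng.uniform(-3.0, 3.0, size=(20, 1))
y_train = np.sin(X_train[:, 0]) + 0.1 * rng.standard_normal(20)

# One test point inside the data range, one far outside it.
X_test = np.array([[0.0], [20.0]])
mean, var = gp_posterior(X_train, y_train, X_test)
```

Far from the training data the prediction reverts to the prior: the mean goes back to zero and the variance approaches the signal variance.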

Limitations of GPR

While GPR is a powerful model, it’s not without its limitations:


- Computational cost: Exact GPR requires O(n^3) time and O(n^2) memory in the number of training points n, which becomes expensive for large datasets.

- Hyperparameter tuning: GPR requires choosing a kernel and setting hyperparameters such as the length scale and signal variance. These are usually fitted by maximizing the marginal likelihood, which can have several local optima.

- Sensitivity to outliers: With a Gaussian noise model, GPR can be sensitive to extreme outliers or to data points whose noise level is much higher than assumed.

Scenarios where GPR outperforms traditional linear regression

GPR tends to outperform traditional linear regression in several scenarios:


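- Many input features, few of them relevant: A kernel with a separate length scale per input dimension (automatic relevance determination) can down-weight irrelevant inputs. GPs with standard stationary kernels are not immune to the curse of dimensionality, however.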

- Non-linear relationships: GPR can capture non-linear relationships with ease, while linear regression assumes a linear relationship.

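- Noisy data: GPR separates signal from noise through its noise model and reports predictive uncertainty alongside each prediction. Under a Gaussian noise assumption, both GPR and linear regression remain sensitive to outliers.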

Gaussian Process Classification

Gaussian Process classification is an extension of Gaussian Processes to binary classification tasks. In traditional binary classification, the goal is to predict one of two classes based on input features. By combining the concepts of Gaussian Processes and decision theory, we can develop a robust classification method that leverages the probabilistic nature of Gaussian Processes.

Decision Theory for Gaussian Process Classification

Gaussian Process classification uses decision theory to select the most likely class label for a given input. Decision theory is based on the concept of making decisions under uncertainty. We can calculate the probability of each class label given the input features and choose the one with the highest probability. By using decision theory, we can derive a principled method for selecting the best class label.

Gaussian Process classification combines the following key components:

– Predictive distributions: For a given input, the predictive distribution assigns a probability to each class. This distribution captures the uncertainty of the classification.

– Decision threshold: A decision threshold is used to separate the two classes based on the predictive probabilities.

– Decision: The class label is selected based on the predicted probabilities and the decision threshold.

A key aspect of decision theory is the concept of loss functions. Loss functions quantify the cost of making incorrect decisions. By choosing the class label with the lowest expected loss, we can make more informed decisions.
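As a small illustration of choosing the label with the lowest expected loss, the sketch below assumes a binary problem where correct decisions cost nothing and the two kinds of error have different costs. The function names and the loss values are hypothetical.

```python
def bayes_decision(p_positive, loss_false_negative=1.0, loss_false_positive=1.0):
    """Return the label (0 or 1) with the lowest expected loss."""
    # Predicting 0 is wrong with probability p_positive (a false negative).
    expected_loss_predict_0 = p_positive * loss_false_negative
    # Predicting 1 is wrong with probability 1 - p_positive (a false positive).
    expected_loss_predict_1 = (1.0 - p_positive) * loss_false_positive
    return 1 if expected_loss_predict_1 < expected_loss_predict_0 else 0

def implied_threshold(loss_false_negative, loss_false_positive):
    """Probability above which predicting 1 has the lower expected loss."""
    return loss_false_positive / (loss_false_positive + loss_false_negative)

# With symmetric losses the threshold is 0.5, so p = 0.2 is labeled 0.
symmetric = bayes_decision(0.2)
# If a false negative costs 9 times more, the threshold drops to 0.1.
asymmetric = bayes_decision(0.2, loss_false_negative=9.0, loss_false_positive=1.0)
```

This shows how the decision threshold follows from the loss function rather than being fixed at 0.5.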

Implementation of Gaussian Process Classification

The implementation of Gaussian Process classification typically involves the following steps:

* Define the decision threshold: Determine the decision threshold based on the specific problem and the trade-off between true positives and false positives.

* Compute predictive probabilities: Approximate the posterior over the latent function (for example with the Laplace approximation) and calculate the predictive probability of each class label given the input features.

* Make a decision: Select the class label with the highest predictive probability, or use a decision function based on the loss function.
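The last two steps can be sketched as follows, assuming a probit link function (the text does not name one) and assuming that an approximate inference method has already produced a Gaussian mean and variance for the latent function at the test input. Under those assumptions the averaged class probability has the closed form Φ(μ / √(1 + σ²)). The function names are hypothetical.

```python
import math

def probit_predictive(latent_mean, latent_var):
    """Probability of class 1, averaged over a Gaussian latent value."""
    z = latent_mean / math.sqrt(1.0 + latent_var)
    # Standard normal CDF written with the error function.
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def classify(latent_mean, latent_var, threshold=0.5):
    """Apply the decision threshold to the predictive probability."""
    p = probit_predictive(latent_mean, latent_var)
    return (1 if p >= threshold else 0), p

# Same latent mean, different latent uncertainty.
label_confident, p_confident = classify(2.0, 0.0)
label_uncertain, p_uncertain = classify(2.0, 8.0)
```

A larger latent variance pulls the predictive probability towards 0.5, which is how the uncertainty of the GP feeds into the final decision.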

Comparing with Other Machine Learning Algorithms

Gaussian Process classification has several advantages over other machine learning algorithms:

* Robustness to noise: Gaussian Process classification is robust to noisy data and can capture complex relationships between features.

* Uncertainty estimation: GPC provides uncertainty estimates for the predicted class labels, which can be useful in decision-making under uncertainty.

* Non-parametric: Gaussian Process classification is a non-parametric method, meaning its capacity adapts to the dataset, while the Bayesian treatment helps to limit overfitting.

However, GPC also has some limitations:

* Computational complexity: GPC can be computationally expensive, especially for large datasets.

* Requires a good parameter set: GPC requires a good selection of hyperparameters to achieve optimal performance.

Gaussian Process classification has been applied to many real-world problems, including:

* Medical diagnosis: GPC has been used for medical diagnosis, such as classifying patients with diabetes based on clinical features.

* Image classification: GPC has been applied to image classification tasks, such as recognizing objects in scenes.

* Finance: GPC has been used for predicting stock prices and portfolio optimization.

Gaussian Process classification offers a powerful approach to binary classification tasks, leveraging the probabilistic nature of Gaussian Processes and decision theory.

Training Gaussian Processes

Training a Gaussian Process (GP) involves finding the hyperparameters that maximize the marginal likelihood, also known as the evidence. In practice its logarithm, the log marginal likelihood, is maximized. This step is crucial in GP modeling because the posterior distribution over the function values given the observed data depends on these hyperparameters. In this section, we will look at the details of training GPs and explore various optimization techniques used to maximize the marginal likelihood.

Maximization of Marginal Likelihood

The log marginal likelihood of a GP with a zero mean function is given by:

$$\ln p(y \mid X) = -\frac{1}{2} y^\top (K + \Sigma)^{-1} y - \frac{1}{2} \ln \det(K + \Sigma) - \frac{n}{2} \ln(2\pi)$$

where y is the vector of observed targets, X is the input data, K is the kernel matrix, Σ is the noise covariance matrix, and n is the number of data points.
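A minimal sketch of evaluating this quantity with a Cholesky factorization is shown below. It assumes the RBF kernel, a zero mean function, and isotropic noise, so that Σ is `noise_var` times the identity. The function names and toy data are illustrative.

```python
import numpy as np

def rbf_kernel(A, B, signal_var=1.0, length_scale=1.0):
    """Squared exponential kernel between the rows of A (n, d) and B (m, d)."""
    sq_dists = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * sq_dists / length_scale**2)

def log_marginal_likelihood(X, y, signal_var, length_scale, noise_var):
    """ln p(y | X) for a zero-mean GP with noise covariance noise_var * I."""
    n = len(y)
    K_noisy = rbf_kernel(X, X, signal_var, length_scale) + noise_var * np.eye(n)
    L = np.linalg.cholesky(K_noisy)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    data_fit = -0.5 * y @ alpha
    # Sum of log diagonal entries of L equals 0.5 * ln det(K + noise).
    complexity = -np.sum(np.log(np.diag(L)))
    constant = -0.5 * n * np.log(2.0 * np.pi)
    return data_fit + complexity + constant

rng = np.random.default_rng(0)
X = rng.uniform(-3.0, 3.0, size=(25, 1))
y = np.sin(X[:, 0]) + 0.1 * rng.standard_normal(25)
lml = log_marginal_likelihood(X, y, signal_var=1.0, length_scale=1.0, noise_var=0.01)
```

The first term rewards fitting the data, the second penalizes overly flexible kernels, and the third is a normalization constant.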

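To maximize the marginal likelihood, we need to find the optimal hyperparameters of the GP, such as the kernel parameters and the noise variance. This is typically done with gradient-based optimization, and Expectation-Maximization (EM) style schemes are used in some settings. Alternatively, the hyperparameters can be treated in a fully Bayesian way and sampled with Markov Chain Monte Carlo (MCMC) instead of being optimized.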

Optimization Techniques

There are several optimization techniques used to maximize the marginal likelihood:

- L-BFGS: This is a popular quasi-Newton method that builds a limited-memory approximation of the inverse Hessian from recent gradient evaluations to speed up the optimization.

- Other gradient-based methods: These methods, such as gradient ascent or conjugate gradients, use the gradient of the log marginal likelihood with respect to the hyperparameters to update them.

- Grid search: This is a brute-force method that involves searching the hyperparameter space using a grid of values.

- Random search: This method involves searching the hyperparameter space randomly and selecting the best combination of hyperparameters.

When using gradient-based methods, the gradient of the log marginal likelihood with respect to each hyperparameter can be computed from the derivative of the kernel matrix with respect to that hyperparameter. This derivative can be obtained analytically for common kernels or approximated numerically using finite differences.
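The sketch below illustrates both routes for the length scale of an RBF kernel. It uses the standard identity that the derivative of the log marginal likelihood with respect to a hyperparameter θ is 0.5 · tr((αα^T − K_y^{-1}) ∂K_y/∂θ), where K_y is the noisy kernel matrix and α = K_y^{-1} y, and compares it with a central finite difference. Isotropic noise, the function name, and the toy data are assumptions made for illustration.

```python
import numpy as np

def lml_and_grad(X, y, signal_var, length_scale, noise_var):
    """Log marginal likelihood and its derivative w.r.t. the length scale."""
    n = len(y)
    sq_dists = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)
    K_f = signal_var * np.exp(-0.5 * sq_dists / length_scale**2)
    K_y = K_f + noise_var * np.eye(n)
    K_inv = np.linalg.inv(K_y)
    alpha = K_inv @ y
    _, logdet = np.linalg.slogdet(K_y)
    lml = -0.5 * y @ alpha - 0.5 * logdet - 0.5 * n * np.log(2.0 * np.pi)
    # Analytic derivative of the RBF kernel matrix w.r.t. the length scale.
    dK_dl = K_f * sq_dists / length_scale**3
    grad = 0.5 * np.trace((np.outer(alpha, alpha) - K_inv) @ dK_dl)
    return lml, grad

rng = np.random.default_rng(2)
X = rng.uniform(-3.0, 3.0, size=(15, 1))
y = np.sin(X[:, 0]) + 0.1 * rng.standard_normal(15)
signal_var, length_scale, noise_var = 1.0, 0.7, 0.1

_, analytic = lml_and_grad(X, y, signal_var, length_scale, noise_var)

# Central finite difference as an independent numerical check.
eps = 1e-5
lml_plus, _ = lml_and_grad(X, y, signal_var, length_scale + eps, noise_var)
lml_minus, _ = lml_and_grad(X, y, signal_var, length_scale - eps, noise_var)
numeric = (lml_plus - lml_minus) / (2.0 * eps)
```

The explicit matrix inverse keeps the sketch short. A Cholesky-based implementation would be preferable for numerical stability in practice.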

Handling Large Datasets

When dealing with large datasets, exact inference becomes prohibitively expensive because it requires factorizing the n × n kernel matrix, which costs O(n^3) time and O(n^2) memory. One approach to mitigate this issue is to use low-rank approximations of the kernel matrix, such as the Nyström method or the random feature method.
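As a rough sketch of the Nyström idea, the code below approximates the full kernel matrix from its columns at a small set of landmark points. The evenly spaced landmark selection, the jitter value, and the function names are assumptions chosen for illustration.

```python
import numpy as np

def rbf_kernel(A, B, signal_var=1.0, length_scale=1.0):
    """Squared exponential kernel between the rows of A (n, d) and B (m, d)."""
    sq_dists = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * sq_dists / length_scale**2)

def nystrom_approximation(X, landmark_idx, jitter=1e-8):
    """Low-rank approximation K ~ K_nm K_mm^{-1} K_mn using m landmarks."""
    Z = X[landmark_idx]
    K_nm = rbf_kernel(X, Z)
    K_mm = rbf_kernel(Z, Z) + jitter * np.eye(len(Z))
    return K_nm @ np.linalg.solve(K_mm, K_nm.T)

X = np.linspace(-3.0, 3.0, 200)[:, None]
# 15 evenly spaced landmarks out of 200 points.
landmark_idx = np.linspace(0, len(X) - 1, 15).astype(int)

K_exact = rbf_kernel(X, X)
K_approx = nystrom_approximation(X, landmark_idx)
rel_error = np.linalg.norm(K_exact - K_approx) / np.linalg.norm(K_exact)
```

Only the n × m and m × m blocks need to be computed and stored, which is where the savings come from when m is much smaller than n.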

Non-Gaussian Distributions

In many cases, the observations do not follow a Gaussian noise model, for example count data with a Poisson likelihood or binary labels with a Bernoulli likelihood. In such cases, the Gaussian likelihood is replaced by a non-Gaussian one, and the posterior over the function values is no longer available in closed form. It can be approximated using techniques such as the Laplace approximation or MCMC methods.

Laplace Approximations

The Laplace approximation is a popular technique for approximate inference in a GP with a non-Gaussian likelihood. This method approximates the posterior distribution over the latent function values with a Gaussian distribution, which in turn yields an analytic approximation to the marginal likelihood.

The Laplace approximation uses the first and second derivatives of the log posterior with respect to the latent function values. The mean of the Gaussian approximation is the posterior mode, found where the first derivative vanishes (typically with Newton's method), while the negative inverse of the second derivative (the Hessian) at the mode gives the covariance matrix of the Gaussian approximation.

The resulting approximate marginal likelihood can then be maximized with respect to the hyperparameters, in the same way as the exact marginal likelihood in the Gaussian case.
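A compact sketch of the mode-finding step for binary classification is given below. It assumes a logistic (sigmoid) likelihood with 0/1 labels, a fixed RBF kernel, and plain Newton iterations without a line search. The function names and the toy data are hypothetical.

```python
import numpy as np

def rbf_kernel(A, B, signal_var=1.0, length_scale=1.0):
    """Squared exponential kernel between the rows of A (n, d) and B (m, d)."""
    sq_dists = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * sq_dists / length_scale**2)

def sigmoid(f):
    return 1.0 / (1.0 + np.exp(-f))

def laplace_approximation(K, t, max_iter=100, tol=1e-10):
    """Gaussian approximation to p(f | X, t); t holds 0/1 labels."""
    n = len(t)
    f = np.zeros(n)
    for _ in range(max_iter):
        pi = sigmoid(f)
        grad = t - pi        # first derivative of the log likelihood
        W = pi * (1.0 - pi)  # diagonal of the negative second derivative
        # Newton step: f_new = (K^{-1} + W)^{-1} (W f + grad),
        # rewritten as K (I + W K)^{-1} (W f + grad) to avoid inverting K.
        b = W * f + grad
        f_new = K @ np.linalg.solve(np.eye(n) + W[:, None] * K, b)
        converged = np.max(np.abs(f_new - f)) < tol
        f = f_new
        if converged:
            break
    # Covariance at the mode: (K^{-1} + W)^{-1} = (I + K W)^{-1} K.
    pi = sigmoid(f)
    W = pi * (1.0 - pi)
    cov = np.linalg.solve(np.eye(n) + K * W[None, :], K)
    return f, cov

X = np.linspace(-2.0, 2.0, 10)[:, None]
t = (X[:, 0] > 0).astype(float)  # label 1 for positive inputs
K = rbf_kernel(X, X)
f_hat, cov = laplace_approximation(K, t)
```

At the mode the gradient of the log posterior vanishes, which is equivalent to `f_hat` satisfying `f_hat = K @ (t - sigmoid(f_hat))`.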

Other Optimization Techniques

There are several other optimization techniques used to train GPs, including:

- Expectation-Maximization (EM): This method alternates between an E-step, which computes the (approximate) posterior over the latent function values given the current hyperparameters, and an M-step, which updates the hyperparameters.

- Markov Chain Monte Carlo (MCMC): This method involves using a Markov chain to generate samples from the posterior distribution over the hyperparameters.

These methods can be used in conjunction with the Laplace approximation or other optimization techniques to train GPs with non-Gaussian noise.

Common Applications of Gaussian Processes

Gaussian Processes have been successfully applied to various real-world scenarios, showcasing their versatility and effectiveness in different domains. From signal processing to computer vision, Gaussian Processes have proven to be a powerful tool for modeling complex relationships and making predictions.

Robotics and Sensor Data Analysis

Gaussian Processes have been widely used in robotics and sensor data analysis to model and predict sensor readings, as well as control complex mechanical systems. For example, in autonomous navigation, Gaussian Processes can be used to model the sensor readings and predict the location of obstacles. This enables robots to safely navigate through unfamiliar environments.

- Robotics: Gaussian Processes can be used for modeling and predicting sensor readings, enabling robots to make informed decisions and avoid collisions.

- Autonomous navigation: Gaussian Processes can predict the location of obstacles and enable robots to safely navigate through unfamiliar environments.

- Control systems: Gaussian Processes can be used to model and predict the behavior of complex mechanical systems, enabling precise control and optimization.

Uncertainty Estimation in Prediction Tasks

Uncertainty estimation is a crucial aspect of machine learning, and Gaussian Processes provide a way to estimate the uncertainty of predictions. This is particularly important in applications where the uncertainty of predictions has a direct impact on the decision-making process.

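The marginal likelihood of the observations is obtained by integrating the likelihood against the prior over functions:

$$p(y \mid X) = \int p(y \mid X, f)\, p(f)\, df$$

where p(y|X,f) is the likelihood of observing y given X and f, and p(f) is the prior distribution over f. The predictive distribution at a new input x_* is obtained in the same way, by integrating over the posterior p(f|X,y) instead of the prior:

$$p(f_* \mid x_*, X, y) = \int p(f_* \mid x_*, X, f)\, p(f \mid X, y)\, df$$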
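For regression with Gaussian noise, the predictive distribution at a new input is Gaussian and its moments have a closed form. As a worked statement under a zero mean function, with K and Σ as in the marginal likelihood formula and with k_* denoting the vector of covariances between the new input x_* and the training inputs (notation introduced here for illustration):

```latex
\begin{aligned}
\mathbb{E}[f_* \mid x_*, X, y] &= k_*^\top (K + \Sigma)^{-1} y \\
\operatorname{Var}[f_* \mid x_*, X, y] &= k(x_*, x_*) - k_*^\top (K + \Sigma)^{-1} k_*
\end{aligned}
```

The variance does not depend on the observed targets y, only on where the training inputs lie relative to x_*.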

This allows for a better understanding of the limitations of the model and enables more informed decision-making. For example, in finance, uncertainty estimation can help portfolio managers make more informed investment decisions.

- Finance: Gaussian Processes can be used to estimate the uncertainty of stock prices, enabling more informed investment decisions.

- Healthcare: Gaussian Processes can be used to estimate the uncertainty of patient outcomes, enabling more informed treatment decisions.

- Aerospace: Gaussian Processes can be used to estimate the uncertainty of weather patterns, enabling more informed flight planning decisions.

Spectrum and Time Series Analysis

Gaussian Processes can be used for modeling and predicting spectrum and time series data, enabling the identification of patterns and trends. This is particularly important in applications where the underlying signal is complex and difficult to model.

Other Applications

Gaussian Processes have been applied to various other domains, including:

- Image processing: Gaussian Processes can be used for image denoising and deblurring.

- Text analysis: Gaussian Processes can be used for topic modeling and sentiment analysis.

- Reinforcement learning: Gaussian Processes can be used for modeling and predicting rewards and returns.

Gaussian Process Extensions and Variants

Gaussian Processes have been widely used in machine learning for regression and classification tasks, but their flexibility and capabilities can be further extended through various variants and extensions. These extensions enable the use of Gaussian Processes in more complex and diverse scenarios, allowing for better performance and adaptability in real-world applications.

Sparse Gaussian Processes

Sparse Gaussian Processes are an extension of the standard Gaussian Process framework, designed to handle large datasets. They summarize the training data through a small set of inducing points, which yields a low-rank approximation of the kernel matrix and reduces computational cost.

The main idea behind sparse Gaussian Processes is to maintain the representational power of Gaussian Processes while reducing the complexity and computational requirements. This is particularly useful when dealing with large datasets, where the full kernel matrix is too expensive to store and factorize.

- Sparse approximations: Techniques such as inducing points, random features, and the Nyström approximation replace the full kernel matrix with a low-rank approximation, leading to faster computation and better scalability.

- Subset methods: Methods such as subset of data (SoD) and subset of regressors (SoR) approximate the full Gaussian Process using a subset of the data points or of the basis functions centered on them.

- Approximation error bounds: Theoretical bounds are provided to analyze the trade-off between approximation accuracy and computational cost.
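To make the inducing-point idea concrete, the sketch below computes the predictive mean of the subset of regressors (SoR) approximation, which only requires solving an m × m system for m inducing inputs. The inducing inputs on a regular grid, the noise variance, and the function names are assumptions for illustration.

```python
import numpy as np

def rbf_kernel(A, B, signal_var=1.0, length_scale=1.0):
    """Squared exponential kernel between the rows of A (n, d) and B (m, d)."""
    sq_dists = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * sq_dists / length_scale**2)

def sor_predict_mean(X, y, Z, X_test, noise_var=0.01):
    """SoR predictive mean: K_su (K_uf K_fu + noise_var K_uu)^{-1} K_uf y."""
    K_uf = rbf_kernel(Z, X)       # (m, n)
    K_uu = rbf_kernel(Z, Z)       # (m, m)
    K_su = rbf_kernel(X_test, Z)  # (n_test, m)
    A = K_uf @ K_uf.T + noise_var * K_uu
    return K_su @ np.linalg.solve(A, K_uf @ y)

rng = np.random.default_rng(3)
X = rng.uniform(-3.0, 3.0, size=(200, 1))
y = np.sin(X[:, 0]) + 0.1 * rng.standard_normal(200)

Z = np.linspace(-3.0, 3.0, 10)[:, None]       # 10 inducing inputs
X_test = np.linspace(-2.0, 2.0, 9)[:, None]
sparse_mean = sor_predict_mean(X, y, Z, X_test)
```

The dominant cost is forming `K_uf @ K_uf.T`, which is O(n m^2) instead of the O(n^3) of exact inference.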

Gaussian Process Trees

Gaussian Process Trees are a type of hierarchical model that combines the strengths of Gaussian Processes and decision trees. This extension enables the incorporation of structural and spatial information into the Gaussian Process framework.

Gaussian Process Trees represent a tree-like structure, where each node is a Gaussian Process. The relationships between nodes are defined through shared input variables, allowing the model to capture non-linear interactions and dependencies between variables.

- Tree structure: Gaussian Process Trees are represented as a tree, where each node is a Gaussian Process, enabling the incorporation of structural information.

- Shared input variables: The relationships between nodes are defined through shared input variables, allowing the model to capture non-linear interactions and dependencies between variables.

- Scalability: Gaussian Process Trees are designed to handle large datasets and provide a scalable solution for complex tasks.

Multi-Task Learning with Gaussian Processes

Multi-Task Learning (MTL) is a technique that involves training a single model to perform multiple related tasks simultaneously. Gaussian Processes can be used for MTL by incorporating a shared prior over multiple tasks, allowing the model to learn common patterns and relationships between tasks.

Gaussian Processes provide a natural framework for MTL, as they can capture complex relationships and dependencies between tasks through the shared prior. This enables the model to learn from multiple tasks and improve overall performance.

- Shared prior: The shared prior over multiple tasks allows the model to learn common patterns and relationships between tasks.

- Task-specific components: Each task has its own specific component, allowing the model to capture task-specific information.

- Improves overall performance: MTL with Gaussian Processes can improve overall performance by leveraging common patterns and relationships between tasks.
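One common way to realize a shared prior over tasks, named here as an assumption because the text does not specify a construction, is the intrinsic coregionalization model: the joint covariance over all tasks is the Kronecker product of a task covariance matrix and an input kernel. The task covariance values below are illustrative.

```python
import numpy as np

def rbf_kernel(A, B, signal_var=1.0, length_scale=1.0):
    """Squared exponential kernel between the rows of A (n, d) and B (m, d)."""
    sq_dists = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * sq_dists / length_scale**2)

def icm_kernel(X, task_cov, signal_var=1.0, length_scale=1.0):
    """Joint covariance over all (task, input) pairs, ordered task by task."""
    return np.kron(task_cov, rbf_kernel(X, X, signal_var, length_scale))

X = np.linspace(0.0, 1.0, 5)[:, None]
# Two tasks with correlation 0.8 (a hypothetical value).
task_cov = np.array([[1.0, 0.8],
                     [0.8, 1.0]])
K_multi = icm_kernel(X, task_cov)

# The off-diagonal block couples task 0 and task 1.
cross_block = K_multi[:5, 5:]
```

When the off-diagonal entries of `task_cov` are zero the tasks decouple into independent GPs, so these entries control how much information is shared.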

Transfer Learning with Gaussian Processes

Transfer Learning (TL) is a technique that involves transferring knowledge learned from one task to another related task. Gaussian Processes can be used for TL by leveraging their ability to model complex relationships and dependencies between tasks.

Gaussian Processes provide a natural framework for TL, as they can capture complex relationships and dependencies between tasks through the shared prior. This enables the model to transfer knowledge from one task to another related task and improve overall performance.

- Shared prior: The shared prior over multiple tasks allows the model to learn common patterns and relationships between tasks.

- Task-specific components: Each task has its own specific component, allowing the model to capture task-specific information.

- Improves overall performance: TL with Gaussian Processes can improve overall performance by leveraging common patterns and relationships between tasks.

Probabilistic Dimensionality Reduction with Gaussian Processes

Probabilistic Dimensionality Reduction (PDR) is a technique that involves reducing the dimensionality of data while preserving its underlying structure and relationships. Gaussian Processes can be used for PDR by incorporating a probabilistic framework that captures the uncertainty and relationships between variables.

Gaussian Processes provide a natural framework for PDR, as they can capture complex relationships and dependencies between variables through the kernel function. This enables the model to reduce the dimensionality of data while preserving its underlying structure and relationships.

- Kernel function: The kernel function captures the relationships and dependencies between variables, enabling the model to reduce dimensionality while preserving structure.

- Probabilistic framework: The probabilistic framework captures the uncertainty and relationships between variables, allowing the model to estimate the underlying dimensionality.

- Improves interpretability: PDR with Gaussian Processes can improve interpretability by providing a probabilistic framework for dimensionality reduction.

Gaussian Processes provide a flexible and powerful framework for machine learning, and the extensions and variants above broaden their reach. Their ability to capture complex relationships and dependencies makes them a natural choice for applications involving large datasets, multi-task learning, and transfer learning.

Case Studies and Real-World Implementations

Gaussian Processes have been successfully applied in various industry settings, scientific research, and data analysis tasks. One of the key strengths of Gaussian Processes is their ability to model complex relationships between input variables and outputs, making them a valuable tool for a wide range of applications.

Successful Implementations in Industry Settings

Gaussian Processes have been widely adopted in the industry for tasks such as regression, classification, and uncertainty quantification. Some notable examples include:

- In ‘Predicting Machine Failure Using Gaussian Processes’, the authors demonstrate the use of Gaussian Processes for predicting machine failure in a manufacturing setting. By modeling the relationship between sensor readings and machine failure, they show that Gaussian Processes can predict failure with high accuracy.

- In ‘Google’s Research on Gaussian Processes for Recommendation Systems’, researchers used Gaussian Processes to improve recommendation systems by modeling user preferences and item characteristics. The approach led to significant improvements in recommendation accuracy and user engagement.

- The ‘Gaussian Process-based Optimization of Chemical Processes’ study used Gaussian Processes to optimize chemical reactions, leading to improved yields and reduced waste production.

These examples demonstrate the versatility and effectiveness of Gaussian Processes in various industry settings.

Scientific Research and Data Analysis

Gaussian Processes have been instrumental in various scientific research and data analysis tasks, including:

- Quantifying uncertainty in climate models using ‘Gaussian Processes for Climate Modeling’

- ‘Gaussian Process-based analysis of genetic data’ for identifying genetic associations with complex traits

- ‘Bayesian Gaussian Process for uncertainty quantification in material properties’

Gaussian Processes offer a powerful framework for modeling complex systems and estimating uncertainty in scientific research and data analysis.

Tools and Libraries for Building and Deploying Gaussian Processes Models

Several libraries and tools are available for building and deploying Gaussian Processes models, including:

- GPy (Gaussian Processes in Python)

- scikit-learn

- PyMC3 (Probabilistic Programming in Python)

These libraries provide a range of functionalities, from building and training models to model selection and hyperparameter tuning.
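As a brief illustration with one of the listed libraries, the sketch below fits a GP regressor with scikit-learn on synthetic data. The kernel composition, the initial hyperparameter values, and the toy data are choices made for this example rather than recommendations.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, ConstantKernel, WhiteKernel

rng = np.random.default_rng(0)
X = rng.uniform(-3.0, 3.0, size=(40, 1))
y = np.sin(X[:, 0]) + 0.1 * rng.standard_normal(40)

# Signal variance * RBF kernel, plus a white-noise term for the observations.
kernel = ConstantKernel(1.0) * RBF(length_scale=1.0) + WhiteKernel(noise_level=0.1)

# fit() tunes the kernel hyperparameters by maximizing the log marginal likelihood.
gpr = GaussianProcessRegressor(kernel=kernel, n_restarts_optimizer=2, random_state=0)
gpr.fit(X, y)

X_test = np.linspace(-3.0, 3.0, 5)[:, None]
mean, std = gpr.predict(X_test, return_std=True)

print(gpr.kernel_)  # fitted kernel with optimized hyperparameters
print(gpr.log_marginal_likelihood_value_)
```

The restarts re-run the optimizer from random initial hyperparameters, which helps when the marginal likelihood has several local optima.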

Gaussian Processes in Real-World Applications

Gaussian Processes are used in various real-world applications, including:

- Robotics and autonomous systems

- Computer vision

- Machine learning and data analysis

- Computational biology and genomics

The versatility and effectiveness of Gaussian Processes make them a valuable tool for a wide range of applications, from industry settings to scientific research and data analysis tasks.

Closure

By mastering Gaussian Processes, readers will gain a deeper understanding of how to apply these powerful models to real-world problems, unlocking new possibilities for prediction and decision-making. The book concludes with case studies and real-world implementations, showcasing the effectiveness of Gaussian Processes in various industries and research settings.

FAQ Section

What are Gaussian Processes?

Gaussian Processes are a type of probabilistic model used for making predictions based on a set of input data, characterized by their ability to represent complex and nonlinear relationships.

How do Gaussian Processes differ from classical statistical models?

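Gaussian Processes differ from classical parametric models in that they place a probability distribution directly over functions rather than over a fixed set of parameters. This gives a probabilistic representation of the relationship between inputs and outputs, allowing for predictions with uncertainties.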

What are the applications of Gaussian Processes in machine learning?

Gaussian Processes have applications in regression, classification, and uncertainty estimation, making them a valuable tool for machine learning practitioners.