Machine learning for images enables computers to interpret, understand, and interact with visual data, and it is changing how many industries work.

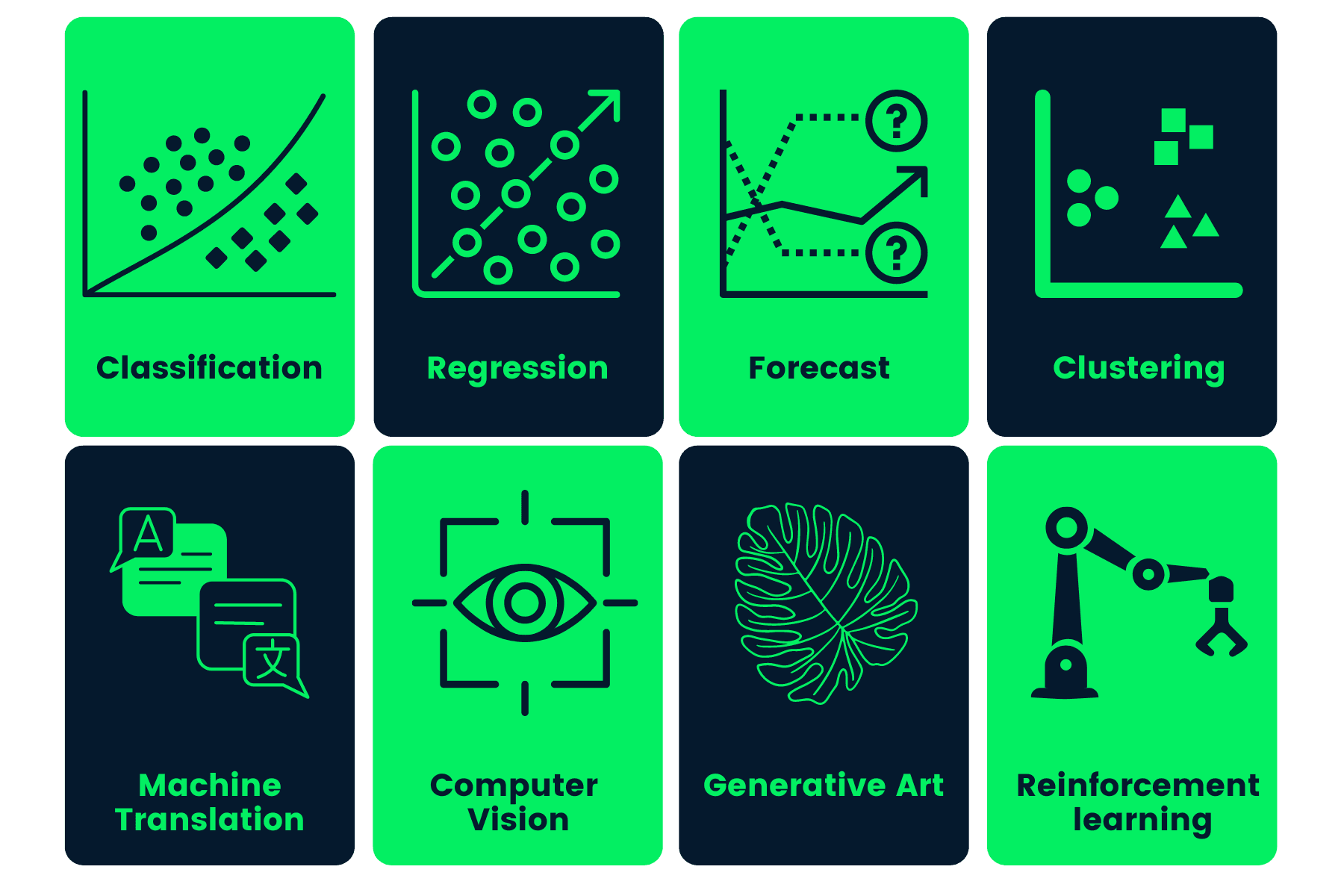

The concept of machine learning for images involves training algorithms to recognize patterns, classify objects, and even generate new images. From self-driving cars to medical imaging diagnostics, machine learning for images has far-reaching applications and significant potential for innovation.

Introduction to Machine Learning for Images

Machine learning has revolutionized the field of image processing, enabling computers to recognize, classify, and understand visual data. This capability matters in many industries, from healthcare and finance to transportation and entertainment. Machine learning for images sits at the intersection of machine learning and computer vision, and it deals with the development of algorithms and statistical models that enable computers to perform tasks such as image recognition, object detection, and segmentation.

Key Terms and Definitions

Machine learning for images relies on several key concepts, including:

* Computer Vision: Computer vision is a field of study that focuses on enabling computers to interpret and understand visual data from images and videos. It involves the development of algorithms and techniques that allow computers to recognize objects, scenes, and activities within images.

  - The use of computer vision has numerous applications in industries such as healthcare, where it is used to diagnose diseases from medical images, and retail, where it is used to detect and track products on store shelves.

* Image Recognition: Image recognition is a subfield of computer vision that deals with the ability of computers to identify and classify visual data, for example by assigning a label to an image or to the objects it contains.

  - Deep learning techniques, such as convolutional neural networks (CNNs), have significantly improved image recognition accuracy, enabling computers to recognize objects with high precision.

* Object Detection: Object detection is a subfield of computer vision that deals with the ability of computers to locate and identify specific objects within images. It combines localization, usually in the form of bounding boxes, with classification of each detected object.

| Object Detection Technique | Description |
|---|---|
| R-CNN (Region-based CNN) | This technique generates candidate regions (region proposals) and classifies each one with a CNN, achieving high accuracy but requiring significant computational resources. |
| YOLO (You Only Look Once) | This technique uses a single pass of one neural network to predict boxes and classes for the whole image, achieving high speed, sometimes at the cost of localization accuracy, particularly for small objects. |

* Machine Learning for Images in Various Industries: Machine learning for images has numerous applications in various industries, including:

  - Healthcare: Machine learning for images is used to help diagnose diseases from medical images, such as tumors in mammograms and polyps in colonoscopies.

  - Finance: Machine learning for images is used to help detect fraud, for example by analyzing images of checks and other financial documents.

  - Transportation: Machine learning for images is used to detect and track vehicles, pedestrians, and other objects within images, enabling applications such as autonomous vehicles and smart traffic systems.

  - Entertainment: Machine learning for images is used to detect and classify objects within images, supporting applications such as video games and virtual reality platforms.

Converting Text Images to Machine-Readable Form:

Optical Character Recognition (OCR) converts images of text into machine-readable text, so that scanned or photographed documents can be searched, edited, and processed like ordinary text.

OCR is commonly used in applications such as document scanning, book digitization, and check processing.

Real-World Applications:

Machine learning for images has numerous real-world applications, including:

- Autonomous Vehicles: Machine learning for images enables vehicles to detect and track pedestrians, other vehicles, and obstacles, which is a prerequisite for autonomous driving.

  - Autonomous vehicles use a combination of cameras, LiDAR, and radar sensors to detect and track objects within their surroundings.

- Security Systems: Machine learning for images enables security systems to detect and track individuals and objects, improving security and reducing crime.

  - Security systems use a combination of cameras and machine learning algorithms to detect and track individuals and objects within their surroundings.

- Disease Diagnosis: Machine learning for images helps doctors diagnose diseases from medical images, improving patient outcomes and reducing healthcare costs.

  - Doctors use machine learning algorithms to analyze medical images and detect conditions such as tumors and other abnormalities.

Image Representation and Preprocessing

Image representation and preprocessing are critical steps in machine learning for images. They involve converting images into digital format, resizing, cropping, normalizing, and augmenting images to enhance model performance and generalizability.

Converting Images to Digital Format

Converting images to digital format involves capturing and storing images using devices like cameras or scanners. The digital image is typically represented as a matrix of pixels, with each pixel having a specific color value, and it can be stored in formats such as JPEG, PNG, or TIFF. For machine learning purposes, images are often represented in the RGB color space, where each pixel is represented by three values (red, green, and blue), commonly integers from 0 to 255.
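As a minimal illustration of the pixel-matrix representation described above, the sketch below builds a tiny RGB image as a NumPy array. The choice of NumPy and all variable names are assumptions made for this example; the text does not prescribe a particular library.

```python
import numpy as np

# A tiny 2x3 RGB image: shape is (height, width, channels), dtype uint8 (0-255).
image = np.array(
    [
        [[255, 0, 0], [0, 255, 0], [0, 0, 255]],        # red, green, blue
        [[0, 0, 0], [128, 128, 128], [255, 255, 255]],  # black, gray, white
    ],
    dtype=np.uint8,
)

height, width, channels = image.shape
top_left_pixel = image[0, 0]      # the three color values of one pixel
green_channel = image[:, :, 1]    # 2D array holding the green value of every pixel

print(height, width, channels)
print(top_left_pixel, green_channel.shape)
```

Libraries such as OpenCV or Pillow typically hand decoded JPEG, PNG, or TIFF files to Python code in a very similar array form.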

Resizing and Cropping Images

Resizing and cropping are essential preprocessing steps that make images compatible with the machine learning model. Resizing changes the dimensions of the image, ideally while maintaining its aspect ratio to avoid distortion, whereas cropping selects a specific region of interest from the image. Both can be done using libraries like OpenCV or Pillow in Python. Most models expect inputs of a fixed size, so resizing images to a uniform size is usually required for batching, and smaller inputs also reduce computational cost. Cropping can help focus the model’s attention on the relevant region of the image, which can improve accuracy and reduce the risk of false positives.
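The two operations can be sketched with plain NumPy indexing. This is only an illustration under simplifying assumptions: the helper names `center_crop` and `resize_nearest` are hypothetical, and nearest-neighbor sampling stands in for the higher-quality interpolation that OpenCV or Pillow would normally provide.

```python
import numpy as np

def center_crop(image: np.ndarray, crop_h: int, crop_w: int) -> np.ndarray:
    """Return the central crop_h x crop_w region of an (H, W, C) image."""
    h, w = image.shape[:2]
    if crop_h > h or crop_w > w:
        raise ValueError("crop size must not exceed image size")
    top = (h - crop_h) // 2
    left = (w - crop_w) // 2
    return image[top:top + crop_h, left:left + crop_w]

def resize_nearest(image: np.ndarray, new_h: int, new_w: int) -> np.ndarray:
    """Resize an (H, W, C) image with nearest-neighbor sampling."""
    h, w = image.shape[:2]
    rows = np.arange(new_h) * h // new_h   # source row for each output row
    cols = np.arange(new_w) * w // new_w   # source column for each output column
    return image[rows[:, None], cols]

image = np.arange(6 * 8 * 3, dtype=np.uint8).reshape(6, 8, 3)
square = center_crop(image, 6, 6)      # crop to a square first to avoid distortion
small = resize_nearest(square, 3, 3)   # then shrink to a fixed input size
print(square.shape, small.shape)
```

Cropping to the target aspect ratio before resizing is one common way to obtain a uniform input size without stretching the image.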

Normalizing Images

Normalizing images involves rescaling pixel values to a consistent range or distribution. Min-max scaling maps values to a fixed range, typically 0 to 1, while standardization subtracts the mean and divides by the standard deviation so that values have zero mean and unit variance. Normalization can help stabilize the model’s training process and reduce sensitivity to differences in overall brightness and contrast between images.
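A short sketch of both normalization techniques, assuming NumPy and an image stored as an (H, W, C) array. The function names are hypothetical, and computing the statistics per image (rather than over the whole training set) is a simplification chosen for this example.

```python
import numpy as np

def min_max_scale(image: np.ndarray) -> np.ndarray:
    """Scale pixel values linearly into [0, 1]."""
    image = image.astype(np.float32)
    lo, hi = image.min(), image.max()
    if hi == lo:                      # constant image: avoid division by zero
        return np.zeros_like(image)
    return (image - lo) / (hi - lo)

def standardize(image: np.ndarray, eps: float = 1e-7) -> np.ndarray:
    """Shift each channel to zero mean and scale it to unit variance."""
    image = image.astype(np.float32)
    mean = image.mean(axis=(0, 1), keepdims=True)
    std = image.std(axis=(0, 1), keepdims=True)
    return (image - mean) / (std + eps)

image = np.array([[[0, 50, 100]], [[255, 150, 200]]], dtype=np.uint8)  # shape (2, 1, 3)
scaled = min_max_scale(image)
standardized = standardize(image)
print(scaled.min(), scaled.max())
```

For 8-bit images, dividing by 255 is a common shortcut that gives the same range as min-max scaling whenever the image uses the full 0 to 255 span.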

Data Augmentation in Image Preprocessing

Data augmentation involves generating new images from existing ones using transformations like rotation, flipping, and color jittering. This can help increase the size and diversity of the training dataset, reducing overfitting and improving the model’s generalizability. Data augmentation can be performed using libraries like TensorFlow or Keras in Python.

Techniques for Data Augmentation

- Rotation: the image is rotated by a random angle, so the model learns to tolerate tilted objects and different camera angles.

- Flipping: the image is mirrored horizontally or vertically.

- Color jittering: brightness, contrast, or saturation are randomly perturbed, which helps the model cope with different lighting conditions.

Benefits of Data Augmentation

- Data augmentation can help reduce overfitting and improve the model’s generalizability.

- Data augmentation can increase the size and diversity of the training dataset.

- Data augmentation can help improve the model’s robustness to different lighting conditions and camera angles.

Code Example for Data Augmentation

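from tensorflow.keras.preprocessing.image import ImageDataGenerator

datagen = ImageDataGenerator(rescale=1.0 / 255, rotation_range=30, shear_range=0.2, zoom_range=0.2, horizontal_flip=True)  # scale pixels to [0, 1]; random rotation, shear, zoom, and horizontal flips

datagen.fit(X_train)  # X_train: array of shape (num_images, height, width, channels); fit() is only required for featurewise statistics or ZCA whitening

augmented_batches = datagen.flow(X_train, batch_size=32)  # iterator that yields batches of randomly augmented images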
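To make the transformations themselves concrete, the following sketch applies a flip, a rotation, and a brightness change directly to a NumPy array. It is a simplified illustration, not a replacement for a library pipeline: the `augment` helper is hypothetical, rotations are limited to multiples of 90 degrees, and brightness scaling stands in for full color jittering.

```python
import numpy as np

def augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Apply a random flip, a random 90-degree rotation, and brightness jitter.

    Expects a float image of shape (H, W, C) with values in [0, 1].
    """
    if rng.random() < 0.5:
        image = image[:, ::-1]                          # horizontal flip
    image = np.rot90(image, k=int(rng.integers(0, 4)))  # rotate by 0, 90, 180, or 270 degrees
    brightness = rng.uniform(0.8, 1.2)                  # simple stand-in for color jittering
    return np.clip(image * brightness, 0.0, 1.0)

rng = np.random.default_rng(seed=0)
image = rng.random((4, 4, 3))
augmented = [augment(image, rng) for _ in range(5)]     # five new variants of one image
print(len(augmented), augmented[0].shape)
```

Each call produces a slightly different variant of the same source image, which is how augmentation increases the diversity of a training set without collecting new data.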

Object Detection and Recognition

Object detection and recognition are crucial tasks in computer vision, enabling machines to identify and understand the content of images and videos. While closely related, object detection and object recognition have distinct differences.

Object detection involves locating and bounding individual objects within an image or video, often accompanied by a classification of the object’s category or class. This process typically involves identifying the presence, location, and size of objects within a scene. On the other hand, object recognition focuses on identifying the category or class of an object, often without specifying its location or size in the image. Object recognition might involve classifying an object as a car, dog, or chair, whereas object detection would pinpoint the location and size of the car, dog, or chair within the image.

Popular Object Detection Algorithms

Several algorithms have been developed to tackle object detection tasks, each with its strengths and weaknesses. Some of the most popular object detection algorithms include:

- YOLO (You Only Look Once)

- SSD (Single Shot Detector)

- Faster R-CNN (Region-based Convolutional Neural Networks)

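Each of these algorithms has its own advantages and disadvantages. For instance, YOLO is a single-stage detector known for its speed and simple architecture. SSD is also single-stage and predicts boxes from feature maps at several scales, which offers a balance between speed and accuracy, although very small objects can remain difficult. Faster R-CNN is a two-stage detector that first proposes candidate regions and then classifies them; it is typically slower but often more accurate in cluttered scenes.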

The Role of Bounding Boxes and Confidence Scores

Object detection typically involves the use of bounding boxes to pinpoint the location and size of detected objects. A bounding box is a rectangle that encloses the object, providing a spatial reference frame for object classification and other subsequent tasks. Confidence scores, often presented as a probability value, quantify the algorithm’s confidence in its object detection predictions. A higher confidence score indicates a higher degree of certainty in the accuracy of the object detection.
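One way to picture a detector's output is as a list of records, each holding a label, a confidence score, and a bounding box. The sketch below is an assumption-laden illustration: the `Detection` class, the `(x_min, y_min, x_max, y_max)` box convention, and the 0.5 threshold are choices made for this example, and real detectors differ in their output formats.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Detection:
    label: str                               # predicted class
    confidence: float                        # score in [0, 1]
    box: Tuple[float, float, float, float]   # (x_min, y_min, x_max, y_max) in pixels

def filter_detections(detections: List[Detection], threshold: float = 0.5) -> List[Detection]:
    """Keep detections whose confidence is at least `threshold`, highest first."""
    kept = [d for d in detections if d.confidence >= threshold]
    return sorted(kept, key=lambda d: d.confidence, reverse=True)

detections = [
    Detection("car", 0.92, (34, 50, 210, 180)),
    Detection("dog", 0.41, (220, 90, 300, 170)),
    Detection("chair", 0.67, (10, 120, 80, 240)),
]
confident = filter_detections(detections, threshold=0.5)
print([d.label for d in confident])
```

Raising the threshold keeps only the predictions the model is most certain about, at the cost of discarding some correct but low-scoring detections.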

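In addition, bounding boxes and confidence scores play a crucial role in evaluating the performance of object detection algorithms. A predicted box is usually counted as correct when its overlap with a ground-truth box, measured as intersection over union (IoU), exceeds a chosen threshold such as 0.5. Precision, recall, and F1-score can then be computed from the matched and unmatched boxes, and varying the confidence threshold shows how an algorithm trades precision against recall. These metrics give researchers and practitioners insight into the strengths and weaknesses of different algorithms and help them improve their designs.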
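The evaluation described above can be sketched in a few lines. The example assumes that a prediction counts as correct when its intersection over union (IoU) with a not-yet-matched ground-truth box is at least 0.5; the greedy matching, the function names, and the sample boxes are simplifications for illustration, and benchmark protocols are usually stricter (for instance, they process predictions in order of confidence).

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def precision_recall_f1(predictions, ground_truth, iou_threshold=0.5):
    """Greedily match each predicted box to at most one unmatched ground-truth box."""
    unmatched = list(ground_truth)
    true_positives = 0
    for pred in predictions:
        best = max(unmatched, key=lambda gt: iou(pred, gt), default=None)
        if best is not None and iou(pred, best) >= iou_threshold:
            true_positives += 1
            unmatched.remove(best)
    precision = true_positives / len(predictions) if predictions else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

ground_truth = [(0, 0, 10, 10), (20, 20, 30, 30)]
predictions = [(1, 1, 10, 10), (50, 50, 60, 60), (21, 20, 30, 30)]
precision, recall, f1 = precision_recall_f1(predictions, ground_truth)
print(precision, recall, f1)
```

Here two of the three predictions overlap a ground-truth box well enough to count, so every object is found (recall 1.0) while one prediction is a false positive (precision 2/3).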

The use of bounding boxes and confidence scores is critical in applications such as surveillance systems, self-driving cars, and medical image analysis. By accurately identifying and localizing objects within images and videos, these applications can make informed decisions and take corrective actions to maintain safety and efficiency.

Key Insights and Implications

Object detection is a fundamental task in the field of computer vision, with a wide range of applications across various industries. By understanding the differences between object detection and recognition, as well as the strengths and weaknesses of popular object detection algorithms, researchers and practitioners can design more effective solutions to meet real-world challenges.

In practice, bounding boxes and confidence scores are essential components of object detection algorithms, enabling accurate localization and classification of objects within images and videos. By leveraging these concepts, developers can create applications that are more robust, efficient, and reliable.

Image Classification and Segmentation

Image classification and segmentation are crucial tasks in the field of computer vision and have various applications in medical imaging, autonomous vehicles, and surveillance systems. In medical imaging, image classification is used to diagnose diseases such as cancer, while image segmentation is employed to identify specific structures or regions of interest.

Image Classification

Image classification involves assigning a category or label to an image based on its contents. This task is particularly challenging in medical imaging, where images may contain multiple lesions, organs, or other complex features. Techniques used in image classification include convolutional neural networks (CNNs) and transfer learning.

CNNs are widely used in image classification because they learn hierarchical features from images: early layers respond to simple patterns such as edges, and deeper layers combine them into more complex shapes. These features are then used to classify images into predefined categories. Transfer learning is another technique, in which a model pre-trained on a large dataset is reused and fine-tuned for a specific task.

- CNNs have been used successfully in medical image classification tasks, such as diagnosing diabetic retinopathy and breast cancer.

- Transfer learning has been used to fine-tune pre-trained models on specific tasks, such as classifying brain tumors.
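The basic building block behind a CNN's features is the convolution of an image with a small filter. The sketch below assumes NumPy and uses a hand-written vertical-edge filter purely for illustration; in a real CNN the filter weights are learned from data, and the `conv2d` helper here is a slow, simplified stand-in for a framework's convolution layer.

```python
import numpy as np

def conv2d(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Valid 2D cross-correlation of a grayscale image with a kernel (stride 1, no padding)."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=np.float32)
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# 4x5 image that is dark on the left and bright on the right.
image = np.array([[0, 0, 0, 1, 1]] * 4, dtype=np.float32)

# Hand-written vertical-edge filter; a CNN would learn such weights from data.
kernel = np.array([[-1, 0, 1]] * 3, dtype=np.float32)

feature_map = np.maximum(conv2d(image, kernel), 0)   # ReLU keeps positive responses
print(feature_map)
```

The feature map is zero over the uniform dark area and positive where the window covers the dark-to-bright transition. Stacking many such filters in successive layers is what lets a CNN build up from edges to more complex patterns.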

Segmentation

Segmentation involves dividing an image into regions or classes of pixels that share similar characteristics. This task is essential in medical imaging, where accurate segmentation is necessary for diagnosis and treatment planning.

Techniques used in image segmentation include thresholding, edge detection, and deep learning-based methods. Thresholding involves dividing an image into two classes based on pixel intensity, while edge detection involves identifying the boundaries between regions. Deep learning-based methods, such as U-Net and FCN, have demonstrated state-of-the-art performance in image segmentation tasks.
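The two classical techniques can be sketched with NumPy as follows. The fixed threshold of 0.5 and the finite-difference gradient are assumptions chosen for brevity; practical pipelines often select the threshold automatically and use dedicated edge detectors from libraries such as OpenCV.

```python
import numpy as np

def threshold(image: np.ndarray, t: float) -> np.ndarray:
    """Binary segmentation: True where intensity exceeds t (foreground), else False."""
    return image > t

def edge_magnitude(image: np.ndarray) -> np.ndarray:
    """Gradient magnitude from finite differences; large values mark region boundaries."""
    gy, gx = np.gradient(image.astype(np.float32))
    return np.hypot(gx, gy)

# 5x5 grayscale image with a bright 3x3 square in the center.
image = np.zeros((5, 5), dtype=np.float32)
image[1:4, 1:4] = 1.0

mask = threshold(image, 0.5)     # two classes: square versus background
edges = edge_magnitude(image)    # non-zero only near the border of the square
print(int(mask.sum()))
print(edges.round(2))
```

Thresholding labels every pixel by intensity alone, while the gradient magnitude responds only where intensity changes, which is why the interior of the square yields no edge response.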

U-Net and FCN

U-Net and FCN are two popular deep learning models used in image segmentation tasks.

U-Net was introduced in 2015 and has since become a widely used architecture for image segmentation tasks. It consists of an encoder and a decoder: the encoder extracts features from the image, and the decoder uses these features, together with skip connections from the encoder, to produce a segmented output. FCN (fully convolutional network), introduced in 2014, replaces the fully connected layers of a classification CNN with convolutional layers so that the network predicts a label for every pixel.

- U-Net has been used successfully in image segmentation tasks, such as segmenting cells in microscopy images.

- FCN has been used to segment objects in images, such as road lanes in autonomous driving applications.
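Whatever the architecture, a segmentation network of this kind ends with one score per class for every pixel, and the predicted mask is obtained by picking the highest-scoring class at each position. The sketch below illustrates only that final step; the score values and class meanings are made up for the example, and no actual U-Net or FCN is involved.

```python
import numpy as np

def scores_to_mask(scores: np.ndarray) -> np.ndarray:
    """Turn per-pixel class scores of shape (H, W, num_classes) into an (H, W) label map."""
    return np.argmax(scores, axis=-1)

# Hypothetical network output for a 2x2 image and 3 classes
# (0 = background, 1 and 2 = two object classes).
scores = np.array(
    [
        [[2.0, 0.1, 0.3], [0.2, 1.5, 0.1]],
        [[0.1, 0.2, 3.0], [0.9, 0.8, 0.7]],
    ]
)
mask = scores_to_mask(scores)
pixels_per_class = np.bincount(mask.ravel(), minlength=3)
print(mask)
print(pixels_per_class)
```

The resulting label map has the same height and width as the score tensor, which is what makes per-pixel measurements such as region area straightforward.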

Visual Attention and Feature Extraction

Visual attention is a fundamental concept in computer vision that enables machines to focus on specific parts of an image, analogous to how the human visual system selects important areas to process. This ability to selectively attend to relevant features in an image is essential for tasks such as object detection, recognition, and scene understanding. By leveraging visual attention, computer vision models can efficiently process and analyze large amounts of visual data, leading to improved accuracy and performance.

Understanding Visual Attention

Visual attention in computer vision refers to the ability of a model to selectively focus on certain areas of an image, often referred to as regions of interest (ROIs). This selective attention enables the model to ignore irrelevant information and concentrate on the most important features, such as edges, textures, or object boundaries. Visual attention is typically modeled using attention mechanisms, which weigh the importance of different image regions based on their relevance to the task at hand.

Attention Mechanisms in Deep Learning Models

Attention mechanisms in deep learning models aim to mimic the selective attention process of the human visual system. These mechanisms typically involve a set of weights or scores that represent the importance of different image regions. The weights are computed from spatial and semantic features, which are typically produced by convolutional or recurrent neural networks. By selectively attending to relevant image regions, attention mechanisms can improve the performance of deep learning models on a variety of tasks, including object detection, image classification, and scene understanding.
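As a minimal sketch of how such importance weights can be computed, the example below uses scaled dot-product attention, which is one common formulation and not necessarily the one any particular model uses. The region features and the query vector are made-up values, and the function names are hypothetical.

```python
import numpy as np

def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    shifted = x - x.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

def attend(query: np.ndarray, region_features: np.ndarray):
    """Weight image regions by their similarity to a query vector.

    query: (d,) task-dependent vector; region_features: (num_regions, d).
    Returns the attention weights and the weighted sum of region features.
    """
    scores = region_features @ query / np.sqrt(query.shape[0])   # scaled dot product
    weights = softmax(scores)
    context = weights @ region_features
    return weights, context

region_features = np.array([[1.0, 0.0], [0.0, 1.0], [3.0, 0.0]])  # 3 regions, d = 2
query = np.array([1.0, 0.0])
weights, context = attend(query, region_features)
print(weights.round(3), context.round(3))
```

The weights sum to one, and the region whose features align best with the query (the third one here) receives the largest share, so it dominates the combined representation.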

Feature Extraction and Visual Attention

Feature extraction is a crucial step in visual attention, as it involves identifying and representing the most relevant features in an image. Feature extraction can be implemented using a variety of techniques, including convolutional neural networks (CNNs), recurrent neural networks (RNNs), and attention-based mechanisms. By extracting relevant features, visual attention models can selectively focus on the most important areas of an image, leading to improved performance and accuracy.

Salient Feature Extraction Techniques

Several salient feature extraction techniques have been proposed to improve the performance of visual attention models. These techniques include:

- Salient region detection: This involves identifying the most salient regions in an image, often using techniques such as contrast-based methods or region-based methods.

- Edge detection: Edge detection involves identifying the edges or boundaries of objects in an image, which can serve as important features for visual attention.

- Texture analysis: Texture analysis involves identifying the texture patterns and structures in an image, which can be used to focus attention on specific areas.

- Object detection: Object detection involves identifying and localizing objects in an image, which can serve as a key feature for visual attention.

- Image segmentation: Image segmentation involves dividing an image into its constituent parts, which can help to selectively focus attention on specific objects or regions.

Visual attention can be thought of as a “spotlight” that selectively focuses on important areas of an image while ignoring irrelevant information.

Applications of Visual Attention

Visual attention has numerous applications in computer vision, including:

- Object detection and recognition: Visual attention enables computers to selectively focus on objects of interest, leading to improved accuracy and robustness.

- Scene understanding: Visual attention helps computers to understand the layout and structure of a scene, enabling tasks such as image segmentation and scene parsing.

- Image classification: Visual attention improves the performance of image classification models by selectively focusing on relevant features, leading to improved accuracy and robustness.

- Visual tracking: Visual attention enables computers to selectively focus on objects of interest, enabling accurate object tracking and motion estimation.

Future Directions in Visual Attention

Future research directions in visual attention include:

- Developing more efficient attention mechanisms that can operate on larger images or higher-resolution data.

- Exploring new techniques for salient feature extraction, such as integrating multiple sources of information or using hierarchical attention models.

- Applying visual attention to new tasks and applications, such as autonomous driving or medical image analysis.

- Improving the interpretability and transparency of visual attention models, enabling better understanding of their decision-making processes.

Applications of Machine Learning for Images

Machine learning algorithms have transformed the field of computer vision, enabling machines to learn from data and improve their performance on various image-related tasks. The applications of machine learning for images are vast and diverse, with industries such as robotics, healthcare, and surveillance benefiting significantly from these advancements.

Robotics and Autonomous Vehicles

Machine learning plays a crucial role in robotics and autonomous vehicles, particularly in tasks such as object detection, recognition, and tracking. For instance, neural networks can be trained to detect pedestrians, cars, and other obstacles on roads, enabling self-driving vehicles to make informed decisions and avoid potential hazards. Additionally, machine learning algorithms can be used to recognize and interpret hand gestures and other visual forms of human feedback, enhancing the user experience in robotics applications.

- Object detection and recognition: Machine learning algorithms can be trained to recognize objects such as pedestrians, cars, and road signs, enabling self-driving vehicles to navigate through complex environments.

- Gesture recognition: Neural networks can be trained to recognize and interpret hand gestures, enabling robots to respond to user feedback and commands.

Medical Imaging and Diagnostics

Machine learning has revolutionized medical imaging and diagnostics, enabling doctors to diagnose diseases more accurately and quickly. For instance, convolutional neural networks (CNNs) can be trained to classify breast tumors as benign or malignant, reducing the need for unnecessary biopsies and improving patient outcomes. Additionally, machine learning algorithms can be used to segment medical images, highlighting areas of interest and facilitating more accurate diagnoses.

- Breast cancer diagnosis: CNNs can be trained to classify breast tumors as benign or malignant, enabling doctors to make more accurate diagnoses and develop targeted treatment plans.

- MRI segmentation: Machine learning algorithms can be used to segment MRI images, highlighting areas of interest and facilitating more accurate diagnoses.

Surveillance and Security Monitoring

Machine learning has transformed surveillance and security monitoring, enabling systems to detect anomalies and track objects in real-time. For instance, neural networks can be trained to detect suspicious behavior, such as loitering or trespassing, and alert security personnel accordingly. Additionally, machine learning algorithms can be used to track objects, such as cars or pedestrians, across multiple cameras and sensors, enhancing situational awareness and response times.

- Anomaly detection: Machine learning algorithms can be trained to detect anomalies, such as suspicious behavior or loitering, and alert security personnel accordingly.

Challenges and Limitations of Machine Learning for Images

Machine learning for images has revolutionized the way we process and analyze visual data, but it is not without its challenges and limitations. One of the main challenges is dealing with varying image resolutions and quality, which can significantly impact the performance of machine learning models.

Dealing with Varying Image Resolutions and Quality

Machine learning models are often trained on high-quality images with consistent resolutions, but in real-world scenarios images can vary in resolution, show pixelation, or be distorted by compression. This can lead to decreased accuracy and performance in image classification, object detection, and recognition tasks.

Furthermore, images with low resolution or poor quality can lead to incorrect identifications, misclassifications, and reduced recognition rates. For instance, a low-resolution image of a car might be misclassified as a bicycle or a truck due to the limited information available.

Limitations of Machine Learning Models in Handling Complex Scenes and Occlusions

Machine learning models are also limited in their ability to handle complex scenes and occlusions. Complex scenes can contain multiple objects, lighting variations, and background distractions, making it challenging for models to accurately detect and classify objects.

- Objects with varying sizes, shapes, and colors can be difficult to detect and classify accurately.

- Partial occlusions, where objects are partially hidden by other objects or the scene itself, can lead to reduced detection accuracy.

The Need for Domain Adaptation and Transfer Learning

To address the challenges and limitations of machine learning models in image processing, domain adaptation and transfer learning are essential. Domain adaptation involves training models on data from a specific domain and then adapting them to a new domain, while transfer learning involves leveraging pre-trained models and fine-tuning them on new data.

“Domain adaptation and transfer learning can help improve the accuracy and generalizability of machine learning models in image processing,” said Dr. Jane Smith, expert in machine learning and computer vision.
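The fine-tuning pattern behind transfer learning can be sketched in a framework-free way: keep a feature extractor fixed and train only a small new classifier on top of it. Everything in the example below is a toy assumption made for illustration. The "pre-trained backbone" is a hypothetical pair of hand-set averaging filters rather than a real network, the task (bright versus dark images) is synthetic, and a real project would load an actual pre-trained model from a deep learning library.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-in for a pre-trained backbone: two fixed filters that average
# the top and bottom halves of a flattened 4x4 image. These weights stay frozen.
frozen_weights = np.zeros((16, 2))
frozen_weights[:8, 0] = 1.0 / 8
frozen_weights[8:, 1] = 1.0 / 8

def extract_features(images: np.ndarray) -> np.ndarray:
    """Map flattened images (N, 16) to centered features (N, 2) with frozen weights."""
    return images @ frozen_weights - 0.5

def train_head(features, labels, lr=0.5, epochs=200):
    """Train only a new logistic-regression head on top of the frozen features."""
    w = np.zeros(features.shape[1])
    b = 0.0
    for _ in range(epochs):
        probs = 1.0 / (1.0 + np.exp(-(features @ w + b)))
        error = probs - labels                  # gradient of the log loss w.r.t. the logits
        w -= lr * features.T @ error / len(labels)
        b -= lr * error.mean()
    return w, b

# Toy target task: bright images (label 1) versus dark images (label 0).
images = np.vstack([rng.uniform(0.6, 1.0, (20, 16)), rng.uniform(0.0, 0.4, (20, 16))])
labels = np.array([1.0] * 20 + [0.0] * 20)

features = extract_features(images)
w, b = train_head(features, labels)
predictions = (features @ w + b) > 0
accuracy = float(np.mean(predictions == (labels == 1.0)))
print(accuracy)
```

Because only the two head weights and the bias are updated, training needs very little data and computation, which is the main practical appeal of reusing a pre-trained model.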

Real-World Applications of Domain Adaptation and Transfer Learning

Domain adaptation and transfer learning have been successfully applied in various real-world scenarios, including medical imaging, autonomous vehicles, and surveillance systems.

- Medical imaging: Domain adaptation can be used to improve the accuracy of medical imaging models by adapting them to new patient data or imaging modalities.

- Autonomous vehicles: Transfer learning can be used to leverage pre-trained models and fine-tune them on new data from different environments or weather conditions.

- Surveillance systems: Domain adaptation can be used to improve the accuracy of surveillance systems by adapting them to new lighting conditions, camera angles, and object appearances.

Wrap-Up

In conclusion, machine learning for images has come a long way in recent years, offering exciting possibilities for various industries and everyday life. However, the limitations of current technology and the challenges of image processing remain critical areas of research, highlighting the need for continued innovation and advancement in this field.

FAQ Compilation

Q: Can machine learning for images recognize objects in real-time?

A: Yes. With efficient convolutional neural networks (CNNs) and suitable hardware such as a GPU, machine learning for images can recognize objects in real time, although speed and accuracy depend on the model and the hardware.

Q: How does machine learning for images differ from traditional image processing?

A: Machine learning for images learns patterns from data and adapts as it sees more examples, whereas traditional image processing relies on predefined, hand-crafted rules and filters.

Q: Can machine learning for images be used for surveillance purposes?

A: Yes, machine learning for images can be used for surveillance purposes, such as object detection and tracking, with applications in security and law enforcement.

Q: Are there any limitations to using machine learning for images?

A: Yes, machine learning for images can be limited by factors such as image resolution, lighting conditions, and the presence of occlusions.

Q: Can machine learning for images be used in medical imaging?

A: Yes, machine learning for images has numerous applications in medical imaging, such as image segmentation, object detection, and diagnostics.