Machine learning for time series combines machine learning with time series analysis to predict future values. By learning from historical data, machine learning algorithms can identify patterns and trends that support forecasting and informed decision-making.

The applications of machine learning for time series are vast and varied, ranging from stock market predictions to weather forecasting. In this article, we will explore the fundamentals of machine learning for time series, data preparation, univariate and multivariate time series forecasting, time series classification and regression, machine learning for real-time time series prediction, deep learning for time series, and the challenges and limitations of machine learning for time series.

Fundamentals of Machine Learning for Time Series

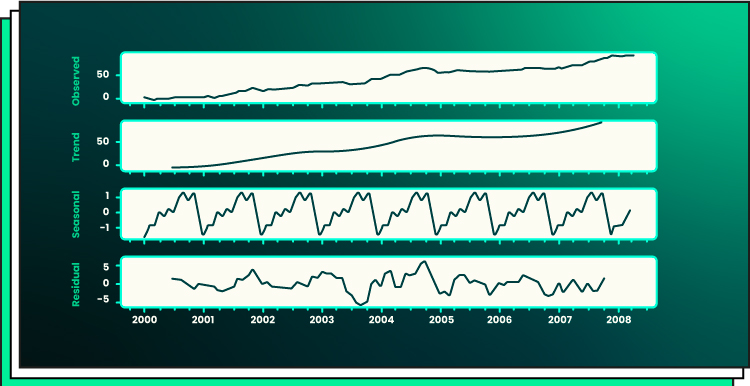

Time series analysis is a type of data analysis that focuses on observations collected over time. It’s all about understanding patterns, trends, and seasonality in data that changes over time. This is a massive area of interest in machine learning, and you’ll be surprised by how widespread its applications are.

Concept of Time Series Data

Time series data is a sequence of data points measured at regular time intervals, which helps in understanding the past, predicting the future, and making informed decisions. This data can be collected from various sources, such as stock prices, weather forecasts, traffic patterns, and more. Think of it as a never-ending stream of data, like a video, where each frame represents a point in time.

Time series data typically has three main characteristics:

– Temporal: It’s based on time, with each data point having a specific timestamp.

– Sequential: Data points are connected in a chronological order, creating a sequence.

– Interdependent: Each data point tends to depend on the ones before it (autocorrelation), so the data has to be analyzed in sequence rather than as independent samples.

Difference between Supervised and Unsupervised Learning for Time Series

When it comes to time series data, machine learning algorithms can be categorized into two main types: supervised and unsupervised learning.

– Supervised Learning: In this type, you have a labeled dataset that contains the actual values for the target variable. You can use this approach for tasks such as predicting future values, identifying anomalous patterns, and classifying time series data.

– Unsupervised Learning: With unsupervised learning, you have an unlabeled dataset, and the goal is to identify patterns, trends, or groupings within the data. This type is useful for understanding the underlying structure of the data and for identifying relationships between different variables.
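
Forecasting is usually turned into a supervised problem by using past values as features and the next value as the label. The sketch below shows one common way to do this, a sliding window; `make_supervised`, `n_lags`, and the sample readings are hypothetical names and values used for illustration.

```python
def make_supervised(series, n_lags):
    """Turn a 1-D sequence into (features, target) pairs using lagged values."""
    if n_lags < 1 or n_lags >= len(series):
        raise ValueError("n_lags must be between 1 and len(series) - 1")
    features, targets = [], []
    for t in range(n_lags, len(series)):
        features.append(list(series[t - n_lags:t]))  # the n_lags values before time t
        targets.append(series[t])                    # the value to predict at time t
    return features, targets


# Hypothetical daily readings, used only for illustration.
readings = [10, 12, 13, 15, 18, 21]
X, y = make_supervised(readings, n_lags=3)
for row, target in zip(X, y):
    print(row, "->", target)
```

Each row of `X` can then be fed to any regression model, with `y` as the labeled target.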

Real-World Time Series Data Sets and Their Uses

Time series data is used in various real-world applications, including:

– Weather Forecasting: Temperature, precipitation, wind speed, and humidity data are used to predict weather patterns.

– Stock Market Analysis: Historical stock prices and trading volumes help analysts make informed investment decisions.

– Traffic Pattern Analysis: Analyzing traffic volume, speed, and accidents can lead to more efficient traffic management strategies.

Here are some examples of real-world time series data sets and their uses:

- Stock price data (e.g., S&P 500) can be used to predict long-term market trends or identify potential investment opportunities.

- Temperature data from weather stations can help climatologists study the impact of climate change.

- Network traffic data can aid in identifying bottlenecks and areas for improvement in network infrastructure.

- Ride-sharing company data can be used to predict demand, optimize routes, and reduce idle time.

Machine Learning Algorithms Suitable for Time Series Data

The following machine learning algorithms are particularly well-suited for time series analysis:

– ARIMA (AutoRegressive Integrated Moving Average): A classic algorithm for forecasting and modeling time series data.

– Prophet: An open-source forecasting library, originally released by Facebook, that models a series as a combination of trend, seasonality, and holiday effects. It suits series with strong seasonal patterns.

– Lag-based (windowed) models: Past values are turned into input features (lags) for a general-purpose learner, such as a neural network built with TensorFlow.

– LSTMs (Long Short-Term Memory): A type of recurrent neural network (RNN) that’s ideal for modeling complex temporal dependencies.

– GRU (Gated Recurrent Unit): Similar to LSTMs, but with a simpler architecture and fewer parameters, which often makes it faster to train.

Remember that each algorithm has its strengths and weaknesses, and the choice ultimately depends on the specific requirements and characteristics of your dataset.

Time series forecasting can be a complex task, and it’s essential to understand the nuances of each algorithm to make informed decisions.
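
To make the "autoregressive" and "integrated" parts of ARIMA more concrete, here is a minimal sketch of differencing and of an AR(1) model fitted by ordinary least squares. It is not a full ARIMA implementation (there is no moving-average term or order selection), and the function names and sample series are hypothetical. In practice a library such as statsmodels would normally be used instead.

```python
def difference(series):
    """First-order differencing (the 'I' step): turns a linear trend into a constant level."""
    return [b - a for a, b in zip(series, series[1:])]


def fit_ar1(series):
    """Least-squares fit of y[t] = intercept + slope * y[t-1] (the 'AR' step, order 1)."""
    x, y = series[:-1], series[1:]
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var = sum((a - mean_x) ** 2 for a in x)  # zero for a constant series
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return intercept, slope


def forecast_next(series, intercept, slope):
    """One-step-ahead forecast from the last observed value."""
    return intercept + slope * series[-1]


# Hypothetical series generated by y[t] = 2 + 0.5 * y[t-1], starting at 0.
series = [0.0, 2.0, 3.0, 3.5, 3.75, 3.875]
intercept, slope = fit_ar1(series)
next_value = forecast_next(series, intercept, slope)
print(intercept, slope, next_value)
print(difference([1, 3, 6, 10]))
```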

Time Series Data Preparation

Time series data preparation is a vital step in ensuring that your machine learning model gets off to a good start. It’s like prepping the soil before planting a garden – you gotta get rid of any weeds (outliers, missing values), make sure the soil is fertile (features are properly scaled and normalized), and water it just right (select the right features) so your model gets the nutrition it needs to grow and thrive.

Handling Missing Values

Missing values can be a major pain in the bum when it comes to time series data. They can throw off your models and make them less accurate. So, what do you do? There are a few approaches you can take:

- Filling with the mean: This involves replacing the missing value with the mean of the surrounding values. It is simple, but it can bias results if values are not missing at random, and it flattens local variation.

- Linear interpolation: This involves estimating the missing value on a straight line between the previous and next observed values. It usually tracks the series more closely than mean filling, but it becomes unreliable when the gap is long.

- Polynomial interpolation: This involves fitting a polynomial through nearby points to estimate the missing value. It can follow curvature that linear interpolation misses, but it is more complex to implement and can overshoot, especially with high-degree polynomials or long gaps.

- Dropping: If a variable is missing for a significant portion of the time series, it might be better to drop that variable (or the affected time steps) altogether. This avoids imputation bias, but it reduces the size of your dataset and can break the regular spacing of the series.

It’s worth noting that the approach you choose will depend on the specific characteristics of your dataset and the goals of your project.
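
The first two approaches can be sketched in a few lines of plain Python. Missing values are represented as `None`, the mean fill uses the mean of all observed values (the simplest variant), and the function names and temperature readings are hypothetical. With pandas, `Series.interpolate` offers similar behaviour.

```python
def fill_with_mean(series):
    """Replace None with the mean of all observed values."""
    observed = [v for v in series if v is not None]
    mean = sum(observed) / len(observed)
    return [mean if v is None else v for v in series]


def interpolate_linear(series):
    """Fill interior gaps on a straight line between the nearest observed neighbours.

    Leading or trailing gaps stay None because there is nothing to interpolate between.
    """
    filled = list(series)
    known = [i for i, v in enumerate(series) if v is not None]
    for left, right in zip(known, known[1:]):
        step = (series[right] - series[left]) / (right - left)
        for i in range(left + 1, right):
            filled[i] = series[left] + step * (i - left)
    return filled


# Hypothetical temperature readings with gaps.
temps = [20.0, None, 24.0, None, None, 30.0]
print(fill_with_mean(temps))
print(interpolate_linear(temps))
```

On this rising series, mean filling inserts the same value into every gap, while interpolation follows the upward movement.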

Handling Outliers

Outliers can be just as problematic as missing values when it comes to time series data. They can skew your models and make them less accurate. So, what do you do? There are a few approaches you can take:

- Winsorization: This involves capping extreme values at a chosen percentile. For example, any value above the 99th percentile is replaced with the 99th-percentile value. This approach reduces the impact of outliers while keeping every time step in the series.

- Truncation: This involves dropping any values that fall outside a certain range (e.g. any values above the top 1% or below the bottom 1%). This approach can help to remove outliers entirely, but it can also reduce the size of your dataset.

- Transforming the data: This involves using a transformation (e.g. log or square root) to reduce the impact of outliers. This approach can help to make the data more normally distributed, which can be useful for certain types of models.
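
Winsorization and truncation differ only in what happens to values beyond the cut-offs, as the following sketch shows. The helper names and data are hypothetical, and the 10th/90th percentiles are used only because the sample is tiny.

```python
import math


def percentile(values, q):
    """Percentile with linear interpolation between ranks, q in [0, 100]."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100
    lo, hi = math.floor(pos), math.ceil(pos)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (pos - lo)


def winsorize(values, lower_q=1, upper_q=99):
    """Cap values at the given percentiles; the series keeps its length."""
    lo, hi = percentile(values, lower_q), percentile(values, upper_q)
    return [min(max(v, lo), hi) for v in values]


def truncate(values, lower_q=1, upper_q=99):
    """Drop values outside the given percentiles; the series gets shorter."""
    lo, hi = percentile(values, lower_q), percentile(values, upper_q)
    return [v for v in values if lo <= v <= hi]


# Hypothetical readings with one obvious outlier.
values = [1, 2, 3, 4, 5, 6, 7, 8, 9, 100]
capped = winsorize(values, lower_q=10, upper_q=90)
trimmed = truncate(values, lower_q=10, upper_q=90)
print(capped)
print(trimmed)
```

For time series, capping is often the safer default because dropping points leaves gaps in the timeline.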

Feature Scaling and Normalization

Feature scaling and normalization are important steps in preparing your time series data for modeling. They help to ensure that the features are on the same scale, which can improve the performance of your models.

- Min-Max Scaler: This involves scaling the features to a common range (e.g. between 0 and 1). This approach can help to reduce the impact of features with large ranges, but it is sensitive to outliers because a single extreme value sets the range.

- Standard Scaler: This involves scaling the features to have a mean of 0 and a standard deviation of 1. This approach puts features with different units on a comparable footing without bounding them to a fixed range.

- Log Transformation: This involves transforming the features by taking the log of the values. This approach can help to make the data more normally distributed, which can be useful for certain types of models.
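
The sketch below implements min-max and standard scaling by hand. One point matters for time series in particular: the scaling parameters are fitted on the training period only and then reused on later data, so that no information from the future leaks into training. The function names and values are hypothetical; scikit-learn's `MinMaxScaler` and `StandardScaler` follow the same fit-then-transform pattern.

```python
import math


def fit_min_max(train):
    """Learn the range from the training period."""
    return min(train), max(train)


def apply_min_max(values, lo, hi):
    return [(v - lo) / (hi - lo) for v in values]


def fit_standard(train):
    """Learn the mean and (population) standard deviation from the training period."""
    mean = sum(train) / len(train)
    std = math.sqrt(sum((v - mean) ** 2 for v in train) / len(train))
    return mean, std


def apply_standard(values, mean, std):
    return [(v - mean) / std for v in values]


# Hypothetical split: the earlier observations train the scaler, the later one is unseen.
train, test = [10.0, 20.0, 30.0, 40.0], [50.0]

lo, hi = fit_min_max(train)
print(apply_min_max(train, lo, hi))
print(apply_min_max(test, lo, hi))  # can fall outside [0, 1] for unseen data

mean, std = fit_standard(train)
print(apply_standard(train, mean, std))
```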

Handling Categorical Variables

Categorical variables can be tricky to handle in time series data. They often don’t fit neatly into the numerical scales used by most models. So, what do you do? Here are a few approaches you can take:

- One-hot encoding: This involves turning each category into a separate binary feature. It implies no ordering between categories, but it adds one column per category, which can become unwieldy when there are many categories.

- Label encoding: This involves assigning a numerical value to each category (e.g. 0, 1, 2, etc.). It is compact, but the numbers imply an order, so it fits ordinal categories or tree-based models better than linear models.

- Mean encoding: This involves replacing each category with the mean of the target variable for that category, computed on the training set only and then applied to the test set. It is useful when a variable has many categories, but it can leak target information if the means are computed on data the model is later evaluated on.
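
The three encodings can be compared side by side on a small example. This is a sketch with hypothetical function names and data (day of week as the category, demand as the target), not a reference implementation.

```python
def one_hot(values):
    """One binary column per category, in sorted category order."""
    categories = sorted(set(values))
    return [[1 if v == c else 0 for c in categories] for v in values], categories


def label_encode(values):
    """One integer per category, assigned in sorted category order."""
    mapping = {c: i for i, c in enumerate(sorted(set(values)))}
    return [mapping[v] for v in values], mapping


def fit_mean_encoding(train_categories, train_targets):
    """Mean of the target per category, computed on training data only."""
    totals, counts = {}, {}
    for c, t in zip(train_categories, train_targets):
        totals[c] = totals.get(c, 0.0) + t
        counts[c] = counts.get(c, 0) + 1
    return {c: totals[c] / counts[c] for c in totals}


# Hypothetical training data.
days = ["mon", "sat", "mon", "sun"]
demand = [10.0, 30.0, 14.0, 40.0]

encoded, categories = one_hot(days)
labels, mapping = label_encode(days)
means = fit_mean_encoding(days, demand)
print(categories, encoded)
print(labels)
print(means)
```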

Feature Selection

Feature selection is a crucial step in preparing your time series data for modeling. It helps to ensure that you’re using the most relevant features, which can improve the performance of your models.

- Eliminating the k least important features: This involves ranking the features by an importance score and removing the k lowest-ranked ones. This approach shrinks the feature set while still retaining the most important features.

- Recursive Feature Elimination: This involves removing the least important feature at each step, using a model to evaluate the importance of each feature. This approach can help to ensure that you’re retaining the most important features.

- Correlation-based feature selection: This involves selecting features based on their correlation with the target variable. This approach can help to identify features that have a strong relationship with the target variable.
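
Correlation-based selection is the simplest of these to sketch. The example below keeps features whose absolute Pearson correlation with the target reaches a threshold; the function names, feature names, threshold, and data are all hypothetical. For recursive feature elimination, scikit-learn provides an `RFE` class.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient (undefined for constant columns)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (std_x * std_y)


def select_by_correlation(features, target, threshold=0.5):
    """Keep features whose absolute correlation with the target is at least threshold."""
    scores = {name: pearson(column, target) for name, column in features.items()}
    kept = [name for name, r in scores.items() if abs(r) >= threshold]
    return kept, scores


# Hypothetical features for a target series.
target = [1, 2, 3, 4, 5]
features = {
    "lag_1": [0, 1, 2, 3, 4],    # moves with the target
    "noise": [3, 1, 4, 1, 5],    # weakly related
    "inverse": [5, 4, 3, 2, 1],  # moves against the target
}
kept, scores = select_by_correlation(features, target)
print(kept)
print(scores)
```

Using the absolute value keeps strongly negative relationships as well, since they are just as informative as positive ones.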

Machine Learning for Real-Time Time Series Prediction

Real-time time series prediction is crucial in various domains, including finance, transportation, and energy management. It enables organizations to make informed decisions quickly, respond to changing patterns, and optimize resources.

In the context of time series data, real-time prediction is essential for several reasons:

– It allows businesses to forecast future values, enabling them to adjust their strategies accordingly.

– It facilitates proactive decision-making, reducing the risk of unexpected events.

– It optimizes resource allocation and improves operational efficiency.

Streaming Data Processing for Real-Time Time Series Prediction

Streaming data processing platforms, like Apache Kafka and Apache Flink, play a vital role in real-time time series prediction. These platforms handle high-volume, high-velocity data as it arrives, which makes them a good fit for time series data.

- Kafka is a distributed event streaming platform that moves data between systems with low latency and high throughput.

- Flink is an open-source stream processing framework that performs stateful computations, such as windowed aggregations, on data as it arrives.

These platforms handle massive amounts of data, providing real-time insights and enabling organizations to make informed decisions rapidly.
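
The core pattern behind streaming prediction is to consume one reading at a time and keep only a bounded window of recent state. The sketch below simulates this in plain Python with a generator standing in for a stream consumer; it does not use the Kafka or Flink APIs, and `sensor_stream` and `rolling_mean_forecasts` are hypothetical names.

```python
from collections import deque


def rolling_mean_forecasts(stream, window=3):
    """Consume readings one at a time and, once the window is full,
    yield the mean of the last `window` readings as the next-step forecast."""
    recent = deque(maxlen=window)  # old readings fall out automatically
    for value in stream:
        recent.append(value)
        if len(recent) == window:
            yield sum(recent) / window


def sensor_stream():
    """Hypothetical stand-in for a stream consumer."""
    yield from [10.0, 12.0, 14.0, 16.0, 18.0]


forecasts = list(rolling_mean_forecasts(sensor_stream(), window=3))
print(forecasts)
```

Because the window is bounded, memory use stays constant no matter how long the stream runs.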

Distributed Computing for Real-Time Time Series Prediction

Distributed computing frameworks, like Apache Spark and Hadoop, also support real-time time series prediction. These frameworks process large amounts of data in parallel, which shortens processing times for tasks such as feature computation and model training.

- Apache Spark is a distributed engine that processes data in memory and also supports stream processing.

- Hadoop is a distributed storage and batch-processing framework. It is suited to large historical datasets, for example when training models, rather than to low-latency prediction.

These frameworks handle the heavy computation behind the scenes, which makes them a useful complement to streaming platforms for real-time time series prediction.

Optimizing Real-Time Time Series Prediction Models

To optimize real-time time series prediction models, several strategies can be employed:

- Data Enrichment: Incorporate additional data sources to enhance the accuracy of predictions.

- Model Selection: Choose models that are well-suited for time series data and can handle high-dimensional inputs.

- Regularization: Regularize models to prevent overfitting and improve generalizability.

- Hyperparameter Tuning: Perform hyperparameter tuning to optimize model performance.

- Ensemble Methods: Combine multiple models to improve prediction accuracy and robustness.

By employing these strategies, organizations can develop high-performing real-time time series prediction models that provide accurate and timely insights.
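
As an illustration of the ensemble idea, the sketch below combines two very simple forecasters with a weighted average. The models, function names, and data are hypothetical; real ensembles would typically combine stronger models and tune the weights on validation data.

```python
def naive_forecast(history):
    """Predict that the next value equals the last observed value."""
    return history[-1]


def moving_average_forecast(history, window=3):
    """Predict the mean of the last `window` values."""
    recent = history[-window:]
    return sum(recent) / len(recent)


def ensemble_forecast(history, models, weights=None):
    """Weighted average of the individual forecasts (equal weights by default)."""
    predictions = [model(history) for model in models]
    if weights is None:
        weights = [1 / len(predictions)] * len(predictions)
    return sum(w * p for w, p in zip(weights, predictions))


# Hypothetical history.
history = [10.0, 12.0, 14.0, 16.0]
combined = ensemble_forecast(history, [naive_forecast, moving_average_forecast])
print(combined)
```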

Strategies for Improving Real-Time Time Series Prediction Models

- Online Learning: Update models in real-time as new data becomes available.

- Transfer Learning: Leverage pre-trained models and fine-tune them for specific time series data.

- Anomaly Detection: Identify unusual patterns in data and flag them for further investigation.

By incorporating these strategies, organizations can further enhance the accuracy and performance of their real-time time series prediction models.
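
Online learning and anomaly detection can be combined in a single update step. The sketch below uses exponential smoothing as the model and flags a reading when its forecast error is far from the errors seen so far. The class name, `alpha`, `z_threshold`, and the readings are hypothetical choices, not a recommended configuration.

```python
import math


class OnlineForecaster:
    """Exponentially smoothed forecaster that updates with every new observation
    and flags observations whose forecast error is unusually large."""

    def __init__(self, alpha=0.5, z_threshold=3.0):
        self.alpha = alpha
        self.z_threshold = z_threshold
        self.level = None     # current forecast for the next value
        self.n = 0            # number of forecast errors seen
        self.mean_err = 0.0   # running mean of forecast errors
        self.m2 = 0.0         # running sum of squared deviations (Welford's method)

    def update(self, value):
        """Learn from `value`; return True if it looks anomalous."""
        if self.level is None:
            self.level = value
            return False
        error = value - self.level
        is_anomaly = False
        if self.n >= 2:
            std = math.sqrt(self.m2 / (self.n - 1))
            is_anomaly = std > 0 and abs(error - self.mean_err) > self.z_threshold * std
        # Update the running error statistics.
        self.n += 1
        delta = error - self.mean_err
        self.mean_err += delta / self.n
        self.m2 += delta * (error - self.mean_err)
        # Update the model itself. Note that anomalies also move the level here.
        self.level += self.alpha * error
        return is_anomaly


# Hypothetical readings with a spike at the end.
model = OnlineForecaster()
flags = [model.update(v) for v in [10, 11, 10, 11, 10, 11, 50]]
print(flags)
```

A production system might skip or dampen the model update for flagged readings so that a single spike does not distort later forecasts.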

Real-World Applications of Real-Time Time Series Prediction

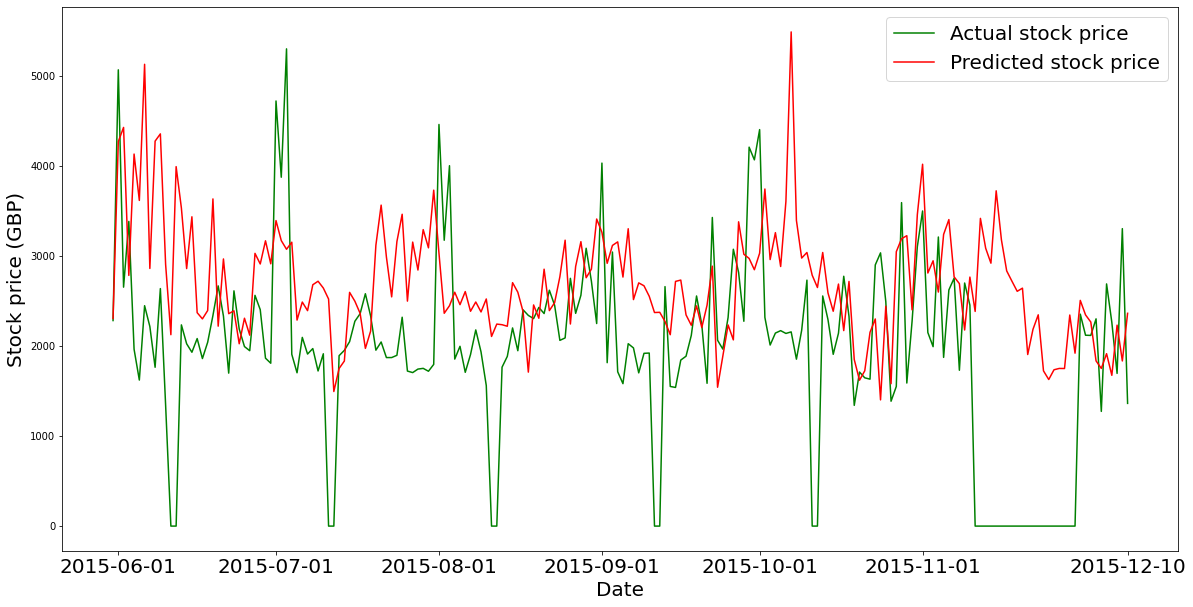

- Financial Forecasting: Predict stock prices, trade volumes, and other market indicators.

- Energy Management: Predict energy demand, optimize energy production, and ensure grid stability.

- Traffic Prediction: Predict traffic patterns, optimize traffic flow, and reduce congestion.

“Real-time time series prediction has the power to revolutionize various industries by enabling data-driven decision-making and predictive analytics.”

Deep Learning for Time Series

Deep learning has revolutionized the field of machine learning, and its application in time series data has yielded impressive results. Deep learning models are capable of learning complex patterns and relationships within time series data, making them an attractive option for time series forecasting. Time series forecasting is a critical task in various fields such as finance, energy, and weather, where accurate predictions can have a significant impact on decision-making.

Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) Networks

RNNs and LSTMs are deep learning models designed for sequential data, which includes time series. RNNs are a class of neural networks in which the hidden state from one step is fed into the next step, allowing the model to capture temporal relationships within the data. LSTMs are a type of RNN that adds memory cells and gates to mitigate the vanishing gradient problem and improve the model’s ability to learn long-term dependencies.
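
The recurrence at the heart of an RNN fits in a few lines. The sketch below is a scalar, untrained version with hand-picked weights, meant only to show how an early input keeps influencing later hidden states; real models use weight matrices learned with a deep learning framework, and the names `rnn_forward`, `w_x`, `w_h`, and `b` are hypothetical.

```python
import math


def rnn_forward(inputs, w_x, w_h, b, h0=0.0):
    """Scalar RNN recurrence: h[t] = tanh(w_x * x[t] + w_h * h[t-1] + b)."""
    h, states = h0, []
    for x in inputs:
        h = math.tanh(w_x * x + w_h * h + b)  # the new state depends on the previous state
        states.append(h)
    return states


# A single pulse followed by zeros; the weights are hand-picked, not trained.
pulse = [1.0, 0.0, 0.0]
states = rnn_forward(pulse, w_x=1.0, w_h=0.5, b=0.0)
no_memory = rnn_forward(pulse, w_x=1.0, w_h=0.0, b=0.0)
print(states)     # the pulse fades gradually through the hidden state
print(no_memory)  # with w_h = 0 the pulse is forgotten immediately
```

The gradual fading in the first output is also a small-scale picture of why plain RNNs struggle with long-term dependencies, which is the problem LSTMs are designed to mitigate.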

Use of RNNs and LSTMs for Time Series Forecasting

RNNs and LSTMs have been widely used for time series forecasting due to their ability to capture temporal relationships and patterns within the data. They are particularly useful for forecasting tasks that involve long-term dependencies and non-linear relationships. The performance of RNNs and LSTMs can be evaluated using metrics such as mean absolute error (MAE) and mean squared error (MSE).

- Sequential Data: RNNs and LSTMs are particularly useful for sequential data, where the order of the data matters.

- Temporal Relationships: These models are capable of capturing temporal relationships and patterns within the data.

- Long-term Dependencies: LSTMs can handle long-term dependencies and vanishing gradients, making them suitable for tasks that involve long-term patterns.
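
The two evaluation metrics mentioned above, MAE and MSE, are straightforward to compute. The following sketch uses hypothetical actual and predicted values.

```python
def mean_absolute_error(actual, predicted):
    """Average size of the errors, in the units of the data."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def mean_squared_error(actual, predicted):
    """Average squared error; large misses are penalized more heavily."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical values.
actual = [3.0, 5.0, 7.0]
predicted = [2.0, 5.0, 10.0]
print(mean_absolute_error(actual, predicted))
print(mean_squared_error(actual, predicted))
```

In this example the single miss of 3 units dominates the MSE, which is why MSE is the stricter metric when occasional large errors are costly.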

Comparison of Deep Learning Models for Time Series Forecasting

Several deep learning models have been proposed for time series forecasting, each with their strengths and weaknesses. Some popular models include convolutional neural networks (CNNs), transformers, and autoencoders. The choice of model depends on the specific requirements of the task, such as the type of data, the level of complexity, and the desired level of accuracy.

Use of Transfer Learning for Time Series Forecasting with Pre-trained Models

Transfer learning is a technique where a pre-trained model is fine-tuned for a specific task. This approach can be particularly useful for time series forecasting, where a model pre-trained on a large collection of series may already capture common patterns such as trend and seasonality, which helps when the target series is short. Architectures that can be pre-trained and then fine-tuned in this way include convolutional neural networks (CNNs), recurrent neural networks (RNNs), and transformers.

“The power of deep learning lies in its ability to capture complex patterns and relationships within data, making it a valuable tool for time series forecasting.”

Examples of Deep Learning Models for Time Series Forecasting

Several deep learning models have been applied to various time series forecasting tasks, including:

| Model | Description | Application |
|---|---|---|
| RNNs | Recurrent Neural Networks | Stock price forecasting |
| LSTMs | Long Short-Term Memory Networks | Weather forecasting |
| Transformers | Attention-based Neural Networks | Cryptocurrency price forecasting |

Challenges and Limitations of Machine Learning for Time Series

Machine learning for time series has made significant strides in recent years, but it’s not without its challenges and limitations. When dealing with noisy and missing data, it can be tough for machine learning models to accurately predict future values.

Noisy and Missing Time Series Data

Noisy and missing data can significantly affect the performance of machine learning models. Noisy data comes from inaccuracies in the measurement process, such as sensor errors or human mistakes, while missing data occurs when observations are not recorded. This can be due to equipment failure, data loss, or intentional removal of data.

- Noisy data can lead to biased models.

- Missing data can reduce the model’s accuracy.

Noisy and missing data can be addressed through data preprocessing techniques such as interpolation, imputation, or removal of outliers. However, this may not always be feasible, especially when dealing with large and complex datasets.

Limitations of Machine Learning Models for Time Series Forecasting

Machine learning models have their limitations when it comes to time series forecasting. Despite their ability to capture patterns and trends, they can struggle with complex and high-dimensional data. Moreover, the performance of machine learning models can degrade over time due to the changing nature of time series data.

- Models may perform poorly in the presence of structural breaks or regime shifts.

- Models may struggle to capture non-linear relationships and complex patterns.

- Models may be vulnerable to overfitting and underfitting.

These limitations can be addressed through the use of more advanced machine learning techniques, such as ensemble methods or deep learning architectures. Additionally, careful model selection and hyperparameter tuning can help improve the performance of machine learning models for time series forecasting.

Comparison of Machine Learning Models for Time Series Forecasting

The performance of different machine learning models for time series forecasting varies across domains and datasets. While some models may excel in one domain, they may struggle in another. This highlights the importance of careful model selection and validation.

| Model | Advantages | Disadvantages |
|---|---|---|
| ARIMA | Easy to implement and interpret | May not capture non-linear relationships |
| Prophet | Captures seasonality and trends | May be slow and computationally intensive |
| LSTM | Captures complex patterns and relationships | May be computationally intensive and require large datasets |
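
Whichever models are compared, validation for time series should respect time order: a model is always trained on the past and tested on what comes after. The sketch below generates such walk-forward splits; the function name and parameters are hypothetical, and scikit-learn's `TimeSeriesSplit` provides a similar utility.

```python
def walk_forward_splits(n_samples, n_splits, test_size):
    """Yield (train_indices, test_indices) pairs in which training data
    always precedes test data and the training window grows each round."""
    first_test_start = n_samples - n_splits * test_size
    if first_test_start < 1:
        raise ValueError("not enough samples for the requested splits")
    for k in range(n_splits):
        test_start = first_test_start + k * test_size
        train = list(range(test_start))
        test = list(range(test_start, test_start + test_size))
        yield train, test


splits = list(walk_forward_splits(n_samples=10, n_splits=3, test_size=2))
for train, test in splits:
    print("train:", train, "test:", test)
```

Averaging a metric over these splits gives a fairer picture of how a model would have performed over time than a single random split, which would let the model see the future.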

Areas for Future Research in Machine Learning for Time Series

There are several areas that warrant further research in machine learning for time series. These include the development of more advanced models that can capture complex patterns and relationships, as well as the improvement of existing models through careful hyperparameter tuning and ensemble methods.

“The future of machine learning for time series lies in the development of more complex and adaptive models that can capture the nuances of dynamic systems.”

Closing Notes

In conclusion, machine learning for time series is a powerful tool for predicting future events and making informed decisions. By understanding the fundamentals of machine learning for time series, data preparation, and various forecasting techniques, we can unlock new possibilities and improve the accuracy of our predictions.

Detailed FAQs

What is the difference between supervised and unsupervised learning in time series data?

In supervised learning, the machine learning algorithm is trained on labeled data to predict a specific output. In unsupervised learning, the algorithm is trained on unlabeled data to identify patterns and relationships.

How do I handle missing values in time series data?

There are several methods for handling missing values in time series data, including mean imputation, linear or polynomial interpolation, and dropping the affected values. The choice of method depends on the specific use case and the nature of the missing data.

What is univariate time series forecasting, and how does it differ from multivariate time series forecasting?

Univariate time series forecasting involves using a single variable to make predictions about future values. Multivariate time series forecasting, on the other hand, involves using multiple variables to make predictions about future values.

What is deep learning, and how is it applied to time series data?

Deep learning involves the use of neural networks with multiple layers to learn complex patterns and relationships in data. For time series, architectures such as RNNs, LSTMs, and transformers learn temporal patterns directly from sequences of past values in order to forecast future ones.

What are some common challenges and limitations of machine learning for time series?

Some common challenges and limitations of machine learning for time series include the presence of noise and missing data, the need for large amounts of data to train models, and the difficulty of evaluating model performance.