The NVIDIA and Microsoft Azure Machine Learning integration announced in 2023-2024 marks a significant milestone in artificial intelligence and machine learning. By integrating NVIDIA’s AI technology with Microsoft Azure’s cloud-based infrastructure, the two companies aim to change the way businesses approach AI and ML.

The partnership between Nvidia and Microsoft brings together two industry leaders in the field of AI, with a shared goal of making machine learning more accessible, scalable, and secure. This integration has far-reaching implications for various industries, from healthcare and finance to retail and manufacturing, and has the potential to transform the way businesses operate and innovate.

Introduction to NVIDIA and Microsoft Azure ML Integration

NVIDIA and Microsoft Azure have announced a significant integration of their technologies to revolutionize the field of machine learning (ML) and artificial intelligence (AI). This partnership brings together the strengths of both companies to provide a powerful platform for developers, researchers, and organizations to build, train, and deploy ML models at scale. The integration aims to simplify the process of ML development, reduce costs, and improve the overall efficiency of AI-powered applications.

The integration of NVIDIA’s computing and deep learning capabilities with Microsoft Azure’s cloud infrastructure provides a robust platform for large-scale ML workloads. This collaboration enables developers to leverage NVIDIA’s GPU-accelerated computing and Microsoft Azure’s scalable cloud resources to build and train complex ML models.

The Goals and Benefits of the Partnership

The primary goals of the NVIDIA and Microsoft Azure integration include:

- Faster ML model development and deployment

- Improved model accuracy and performance

- Increased scalability and flexibility

- Reduced costs and complexity

- Enhanced collaboration and innovation

By achieving these goals, the partnership aims to accelerate the adoption of AI and ML in various industries, such as healthcare, finance, and retail.

The Technologies Involved

The integration of NVIDIA and Microsoft Azure technologies involves the following components:

- NVIDIA GPU-accelerated computing

- Microsoft Azure cloud infrastructure

- Microsoft Azure Machine Learning (AML) service

- NVIDIA NGC platform (NVIDIA’s catalog of GPU-optimized containers, models, and SDKs; the name originally stood for NVIDIA GPU Cloud)

These technologies work together to provide a seamless and efficient ML development experience, from data preparation to model deployment.
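As a rough illustration of how these components relate, the sketch below describes a GPU training job as plain data: a container image from the NGC registry, an Azure GPU VM size, and a node count. The `TrainingJobSpec` class, the image tag, and the VM size are illustrative assumptions rather than values from the announcement, and the code does not call any Azure or NVIDIA API.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class TrainingJobSpec:
    """Hypothetical description of a GPU training job (not an Azure ML type)."""
    name: str
    image: str          # container image, e.g. one hosted on the NGC registry
    vm_size: str        # Azure GPU VM size (example value only)
    node_count: int = 1
    command: str = "python train.py"


def ngc_image(framework: str, tag: str) -> str:
    """Build an image reference on nvcr.io, the NGC container registry."""
    return f"nvcr.io/nvidia/{framework}:{tag}"


spec = TrainingJobSpec(
    name="demo-train",
    image=ngc_image("pytorch", "24.01-py3"),  # tag is an assumed example
    vm_size="Standard_NC24ads_A100_v4",       # assumed example VM size
    node_count=2,
)
print(asdict(spec))
```

In a real workflow, a spec like this would be translated into whatever job definition the Azure Machine Learning service expects; keeping it as plain data makes it easy to review and version.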

Benefits for Developers and Organizations

The NVIDIA and Microsoft Azure integration offers several benefits for developers and organizations, including:

- Access to scalable cloud resources and high-performance computing capabilities

- Ability to leverage NVIDIA’s GPU-accelerated computing for faster ML model training and deployment

- Use of Microsoft Azure Machine Learning (AML) service for automated ML workflows and model management

- Integration with NVIDIA NGC platform for secure and efficient ML model deployment


NVIDIA and Microsoft Azure have a long history of collaboration, dating back to the early days of artificial intelligence (AI) and machine learning (ML) in the cloud. This partnership has been instrumental in shaping the future of AI and ML, enabling businesses and organizations to leverage the power of the cloud for accelerating innovation and driving digital transformation.

Their collaboration has been a key factor in the growth of the cloud-based AI and ML ecosystem, with a particular focus on deep learning workloads. By integrating NVIDIA’s AI computing hardware and software with Microsoft Azure’s cloud infrastructure, they have created a powerful combination that enables users to train, deploy, and manage AI models at scale.

The partnership has also led to the development of several tools and services, including the NVIDIA Deep Learning SDK, which is now a part of the Microsoft Azure Machine Learning platform. This SDK provides a comprehensive set of tools and frameworks for building, training, and deploying deep learning models.

Key Milestones and Achievements

The collaboration between NVIDIA and Microsoft Azure has been marked by several key milestones and achievements, including:

- NVIDIA Quadro GPUs Supported on Azure: In 2012, NVIDIA announced that its Quadro GPUs would be supported on Microsoft Azure, enabling users to run graphics-intensive workloads in the cloud. This was an early milestone in the collaboration, bringing NVIDIA GPUs to Azure customers.

- Microsoft Azure Deep Learning VMs: In 2015, Microsoft Azure introduced Deep Learning VMs, which integrated NVIDIA’s Tesla GPUs with Azure’s cloud infrastructure. This virtual machine (VM) was specifically designed for deep learning workloads and provided users with a powerful platform for training and deploying AI models.

- NVIDIA Deep Learning SDK on Azure: In 2017, NVIDIA announced that its Deep Learning SDK would be supported on Microsoft Azure, enabling users to build, train, and deploy deep learning models in the cloud. This extended the collaboration from GPU hardware to NVIDIA’s deep learning software stack.

- Microsoft Azure Machine Learning: In 2019, Microsoft Azure launched Machine Learning, a fully managed cloud-based service for building, training, and deploying machine learning models. NVIDIA’s AI computing hardware and software are integrated with this service, enabling users to leverage the power of the cloud for accelerating innovation and driving digital transformation.

The collaboration between NVIDIA and Microsoft Azure has had a significant impact on the industry, enabling businesses and organizations to leverage the power of AI and ML for driving digital transformation. By providing a comprehensive set of tools and services for building, training, and deploying AI models, they have empowered users to accelerate innovation and drive business growth.

As the world becomes increasingly digital, the need for AI and ML will only continue to grow. With NVIDIA and Microsoft Azure’s collaboration, businesses and organizations will have access to the power of the cloud for accelerating innovation and driving digital transformation.

Applications and Use Cases of NVIDIA and Microsoft Azure ML Integration

The integration of NVIDIA and Microsoft Azure technologies offers a wide range of applications and use cases across various industries, enabling businesses to leverage the power of machine learning and artificial intelligence. This fusion of innovation and expertise can drive transformation, enhance decision-making, and unlock new opportunities for growth.

The integration of NVIDIA and Microsoft Azure technologies is particularly beneficial in industries that rely heavily on data analysis, computer vision, and natural language processing. Some of the key industries that can benefit from this integration include:

Data-Intensive Industries

Data-intensive industries such as finance, healthcare, and retail can leverage the power of machine learning to analyze large datasets, identify patterns, and make data-driven decisions. The combination of NVIDIA and Microsoft Azure technologies can enable real-time data processing, advanced analytics, and predictive modeling, allowing businesses to stay ahead of the competition.

- Enhanced risk management: Using machine learning algorithms to analyze financial transactions and identify potential fraudulent activities.

- Personalized medicine: Applying computer vision and natural language processing to develop personalized treatment plans for patients.

- Customer segmentation: Analyzing customer behavior and preferences to develop targeted marketing campaigns.
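To make the fraud-detection use case concrete, here is a minimal sketch that flags unusual transaction amounts with a modified z-score (median and median absolute deviation). This is a simple statistical stand-in chosen for illustration, not the method used by the integration, and the sample amounts and the 3.5 threshold are assumptions.

```python
from statistics import median


def flag_outliers(amounts, threshold=3.5):
    """Return indices whose modified z-score exceeds the threshold.

    The modified z-score uses the median and the median absolute
    deviation (MAD), so a single extreme value cannot mask itself
    the way it can with a mean/standard-deviation z-score.
    """
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        return []  # no spread in the data, nothing to flag
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - med) / mad > threshold
    ]


# Hypothetical transaction amounts; the last one is deliberately extreme.
transactions = [20.0, 25.0, 22.0, 19.0, 24.0, 21.0, 23.0, 20.0, 22.0, 950.0]
suspicious = flag_outliers(transactions)
print(suspicious)
```

A production system would typically use a trained model over many features; the point here is only the shape of the task: score each transaction and flag the ones that deviate strongly from typical behavior.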

Visual Computing and AI

Visual computing and AI applications such as computer vision, robotics, and autonomous vehicles can benefit from the integration of NVIDIA and Microsoft Azure technologies. This combination can enable real-time processing of large datasets, advanced computer vision, and precise control over robotic systems.

“The fusion of NVIDIA and Microsoft Azure technologies has enabled us to develop intelligent systems that can analyze complex visual data and make decisions in real-time.”

- Automated inspection: Using computer vision to inspect products for defects and anomalies on the production line.

- Autonomous driving: Applying advanced computer vision and machine learning algorithms to enable self-driving vehicles to navigate complex road scenarios.

- Robotics: Enabling robots to perform complex tasks with precision and accuracy using computer vision and machine learning.

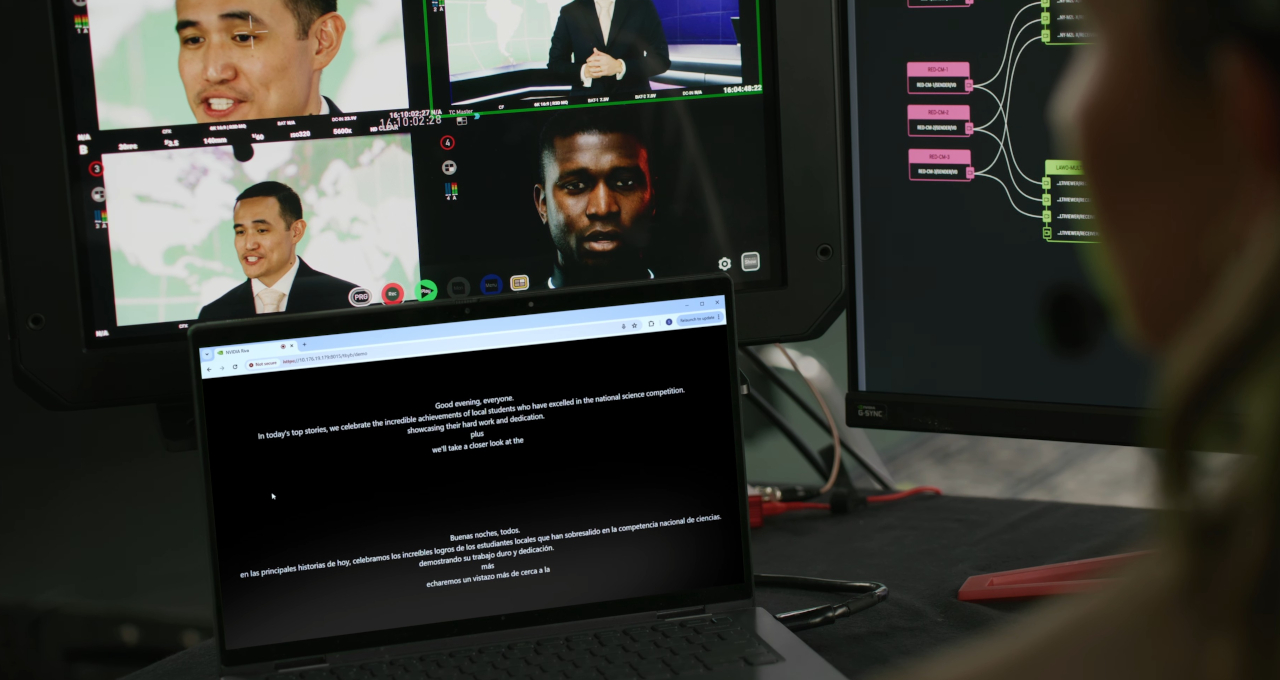

AI-Powered Education

AI-powered education platforms can leverage the integration of NVIDIA and Microsoft Azure technologies to develop personalized learning experiences, adaptive curricula, and intelligent tutoring systems.

- Personalized learning: Using machine learning algorithms to develop customized learning plans tailored to individual students’ needs and abilities.

- Automated grading: Applying natural language processing and computer vision to automate grading and feedback for assignments and exams.

- Intelligent tutoring: Developing AI-powered tutoring systems that can provide real-time support and guidance to students.

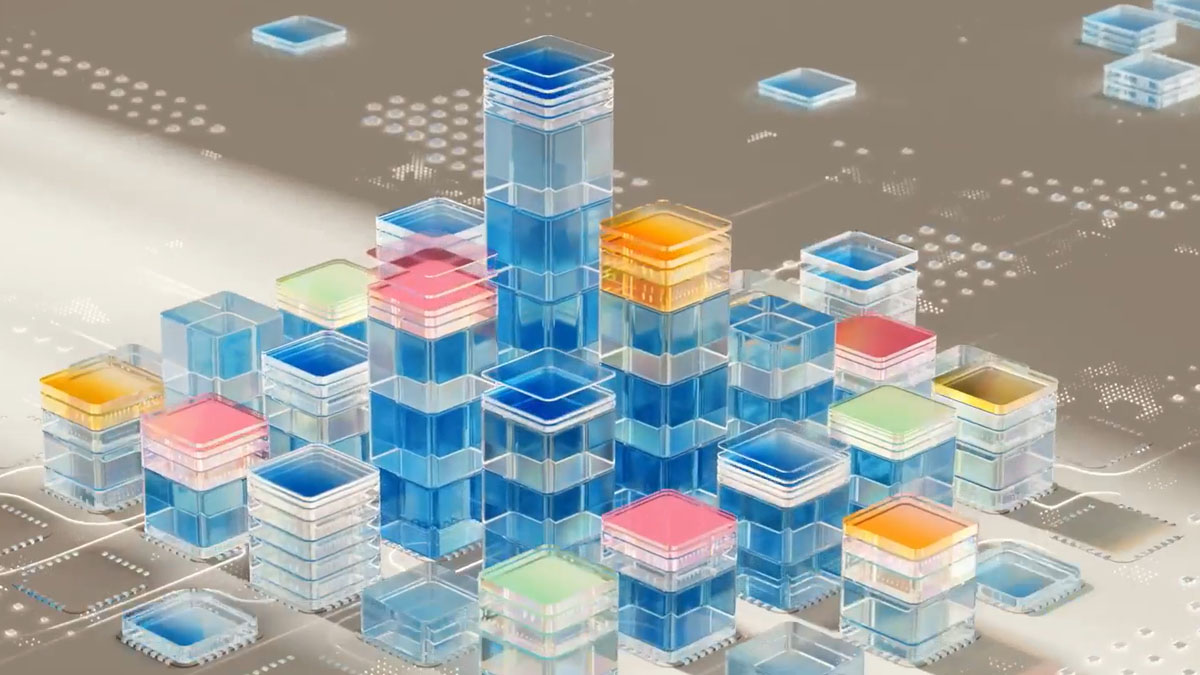

Architecture and Technical Overview of NVIDIA and Microsoft Azure ML Integration

At the heart of the NVIDIA and Microsoft Azure ML integration lies a robust technical architecture that enables seamless collaboration between the two platforms. This architecture empowers users to develop, train, and deploy machine learning models at scale, while also providing a secure and scalable infrastructure for data ingestion, processing, and storage.

Technical Components

The technical components of the NVIDIA and Microsoft Azure ML integration include:

* A container-based architecture that allows users to deploy and manage machine learning models in a flexible and scalable manner.

* An orchestration system that enables the automated deployment, scaling, and management of machine learning workflows.

* A networking infrastructure that provides secure and high-performance communication between the NVIDIA GPU-accelerated platforms and the Microsoft Azure ML service.

Furthermore, the integration leverages the following key technologies to enable a seamless experience:

* Containers: Docker containers are used to package machine learning models and deploy them on NVIDIA GPU-accelerated platforms.

* Orchestration: Kubernetes is used to automate the deployment, scaling, and management of machine learning workflows.

* Networking: Azure virtual networking (for example, Azure Virtual Network) is used to provide secure and high-performance communication between the NVIDIA GPU-accelerated platforms and the Microsoft Azure ML service.

A container-based architecture is at the heart of the integration, with orchestration and networking components facilitating seamless communication and collaboration between the NVIDIA GPU-accelerated platforms and the Microsoft Azure ML service. The container orchestration system ensures that machine learning models are properly deployed, scaled, and managed, while the networking infrastructure provides secure and high-performance communication between the platforms.
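The container and orchestration pieces described above can be sketched as a Kubernetes Pod manifest that requests one GPU. The helper below builds the manifest as a Python dictionary and prints it as JSON, which `kubectl` accepts alongside YAML. The pod name and image tag are assumptions for illustration; `nvidia.com/gpu` is the resource name exposed by the NVIDIA device plugin for Kubernetes.

```python
import json


def gpu_pod_manifest(name, image, gpus=1):
    """Build a minimal Pod manifest that requests NVIDIA GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "model",
                    "image": image,
                    # GPUs are requested through resource limits.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


manifest = gpu_pod_manifest(
    "inference-demo",                          # hypothetical pod name
    "nvcr.io/nvidia/tritonserver:24.01-py3",   # tag is an assumed example
)
print(json.dumps(manifest, indent=2))
```

The scheduler places the pod only on a node that has a free GPU, which is how the orchestration layer maps containerized models onto GPU-accelerated machines.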


Benefits

The technical architecture of the NVIDIA and Microsoft Azure ML integration provides numerous benefits to users, including:

* Improved scalability: The container-based architecture and orchestration system enable seamless scaling of machine learning workloads.

* Enhanced security: The networking infrastructure provides secure and high-performance communication between the NVIDIA GPU-accelerated platforms and the Microsoft Azure ML service.

* Increased flexibility: The containerization system provides a flexible way to deploy machine learning models, while the orchestration system enables automated deployment and management of workloads.


Performance Benefits and Scalability of NVIDIA and Microsoft Azure ML Integration

The integration of NVIDIA and Microsoft Azure ML provides significant performance benefits and scalability, enabling organizations to tackle complex machine learning (ML) workloads with unprecedented efficiency and reliability. This collaboration empowers users to leverage the processing power of NVIDIA GPUs and the scalability of Azure’s cloud infrastructure, resulting in improved model training times, enhanced accuracy, and increased productivity.

Expected Performance Benefits

The NVIDIA and Microsoft Azure ML integration delivers several performance benefits, including:

- Faster model training: NVIDIA GPUs accelerate ML model training, which can significantly reduce training times.

- Faster iteration: Shorter training runs allow quicker experimentation and model iteration, so data scientists can respond rapidly to changing business requirements.

Scalability and Reliability

Several key factors contribute to the scalability and reliability of the NVIDIA and Microsoft Azure ML integration:

Scaled-out Architecture

The integration enables a scaled-out architecture, where multiple NVIDIA GPUs can be easily deployed and managed across a vast number of machines in the Azure cloud.

This scalable architecture allows for the seamless addition of more resources as needs arise, accommodating large-scale ML workloads and ensuring smooth performance.
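One common way a scaled-out setup divides work is data parallelism: each GPU worker trains on its own slice of the dataset. The sketch below shows a strided split of sample indices across workers. The function name and the numbers are illustrative assumptions, and real training frameworks provide their own samplers for this.

```python
def shard_indices(num_samples, world_size, rank):
    """Return the sample indices assigned to one worker.

    Worker `rank` (0-based) out of `world_size` workers takes every
    world_size-th sample, starting at its own rank.
    """
    if world_size < 1 or not 0 <= rank < world_size:
        raise ValueError("rank must satisfy 0 <= rank < world_size")
    return list(range(rank, num_samples, world_size))


# Split 10 samples across 4 hypothetical GPU workers.
shards = [shard_indices(10, 4, rank) for rank in range(4)]
for rank, shard in enumerate(shards):
    print(rank, shard)
```

Adding workers shrinks each shard, which is why throughput can grow with the number of GPUs as long as communication overhead stays small.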

High-Density Computing

The NVIDIA and Microsoft Azure ML integration provides high-density computing, allowing for the efficient utilization of resources in the cloud.

Users can easily scale up or down depending on the needs of their ML workloads, optimizing costs and ensuring optimal performance.

Comparison with Traditional ML Architectures

In comparison to traditional ML architectures, the NVIDIA and Microsoft Azure ML integration offers several advantages:

Faster Training Times

Traditional ML architectures often rely on central processing units (CPUs) for training, which can be slower and less efficient compared to the use of NVIDIA GPUs.

The integration of NVIDIA and Microsoft Azure ML enables users to bypass traditional CPU-based architectures, resulting in significantly faster training times and improved productivity.
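How much faster training gets depends on how much of the pipeline the GPU actually accelerates. Amdahl's law gives a quick upper-bound estimate; the sketch below applies it. The 90% fraction and the 10x factor are made-up inputs, not measurements of this integration.

```python
def amdahl_speedup(accelerated_fraction, factor):
    """Overall speedup when a fraction of the work runs `factor` times faster."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if factor <= 0:
        raise ValueError("factor must be positive")
    serial = 1.0 - accelerated_fraction
    return 1.0 / (serial + accelerated_fraction / factor)


# If 90% of training time is GPU-friendly and runs 10x faster there:
print(round(amdahl_speedup(0.9, 10.0), 2))  # 5.26
```

The remaining 10% (data loading, preprocessing, and so on) caps the gain, which is why input pipelines often need attention once the compute itself is on GPUs.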

Autoscaling and Self-Servicing

Traditional ML architectures often require manual scaling, which can lead to inefficiencies and increased costs.

The NVIDIA and Microsoft Azure ML integration offers autoscaling and self-service capabilities, simplifying the process of adapting to changing workload demands and helping keep resource utilization efficient.
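The core of an autoscaling decision can be sketched in a few lines: size the cluster to the pending work, within configured bounds. The function and parameter names below are hypothetical and only mirror the general idea of minimum and maximum node limits; the actual scaling logic of a managed service is more involved.

```python
import math


def target_node_count(queued_jobs, jobs_per_node, min_nodes, max_nodes):
    """Pick a node count that covers the queue, clamped to [min_nodes, max_nodes]."""
    if jobs_per_node < 1:
        raise ValueError("jobs_per_node must be at least 1")
    if min_nodes > max_nodes:
        raise ValueError("min_nodes cannot exceed max_nodes")
    needed = math.ceil(queued_jobs / jobs_per_node)
    return max(min_nodes, min(max_nodes, needed))


print(target_node_count(5, 2, 0, 4))   # 3 nodes cover 5 queued jobs
print(target_node_count(0, 2, 0, 4))   # 0: scale to zero when idle
print(target_node_count(20, 2, 0, 4))  # 4: capped at the maximum
```

Setting the minimum to zero lets an idle cluster release all GPU nodes, which is usually where the cost savings over manually sized clusters come from.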

Security and Compliance Considerations for NVIDIA and Microsoft Azure ML Integration

The integration of NVIDIA and Microsoft Azure Machine Learning (ML) brings together cutting-edge hardware and software capabilities to enable seamless and secure machine learning workflows. To maintain the integrity of sensitive data and applications, NVIDIA and Microsoft Azure have implemented robust security and compliance features, ensuring the highest level of protection for users and their sensitive information.

Security Features

The security features of the NVIDIA and Microsoft Azure ML integration include:

- Encryption: All data transmitted and stored within the NVIDIA and Microsoft Azure ML Integration is encrypted using industry-standard protocols, safeguarding sensitive information from unauthorized access.

- Access Control: Users are authenticated and authorized to access specific resources and applications within the integration, ensuring that only authorized personnel can access sensitive data and applications.

- Data Loss Prevention (DLP): Advanced DLP capabilities detect and prevent sensitive data from being copied or transmitted outside of the integration, protecting against data breaches and compliance risks.

- Sandboxing: Isolated sandbox environments provide a secure testing ground for machine learning models, preventing damage to production environments and reducing the risk of data compromise.
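The access-control item above follows the usual role-based pattern: users hold roles, roles grant permissions, and every request is checked against them. The sketch below shows that pattern with made-up role and permission names; it is not the integration's actual role model.

```python
# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "reader": {"model:read"},
    "data_scientist": {"model:read", "job:submit"},
    "admin": {"model:read", "job:submit", "model:deploy"},
}


def is_allowed(roles, permission):
    """Return True if any of the user's roles grants the permission."""
    return any(
        permission in ROLE_PERMISSIONS.get(role, set())
        for role in roles
    )


print(is_allowed(["data_scientist"], "job:submit"))    # True
print(is_allowed(["data_scientist"], "model:deploy"))  # False
print(is_allowed(["unknown_role"], "model:read"))      # False
```

Unknown roles grant nothing, so the check fails closed rather than open.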

Compliance Features

The compliance features of the NVIDIA and Microsoft Azure ML integration include:

- GDPR Compliance: The integration is designed to meet the requirements of the General Data Protection Regulation (GDPR), ensuring that users can process and manage personal data responsibly and with transparency.

- HIPAA Compliance: NVIDIA and Microsoft Azure ML Integration adhere to the Health Insurance Portability and Accountability Act (HIPAA) requirements, ensuring that healthcare data is protected and secured.

- SOX Compliance: The integration meets the requirements of the Sarbanes-Oxley Act (SOX), providing a framework for the secure management of financial data and transactions.

Azure Active Directory (Azure AD) Integration

Azure Active Directory (Azure AD, since renamed Microsoft Entra ID) integration provides an additional layer of security, allowing users to authenticate and authorize access to the NVIDIA and Microsoft Azure ML integration using their existing Azure AD credentials. This enables Single Sign-On (SSO), simplifying access management for users.

Azure Policy Enforcement

Azure Policy enforcement ensures that the NVIDIA and Microsoft Azure ML integration complies with the organization’s security policies and standards, providing a centralized framework for policy management and enforcement. This enables users to define custom policies and assign them to specific resources and applications within the integration.
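A custom policy is essentially a rule of the form "if a resource matches this condition, then apply this effect". The sketch below models that shape loosely on the if/then structure of an Azure Policy rule and evaluates it with a toy function. The evaluator, the region list, and the sample resources are assumptions for illustration; Azure evaluates real policies itself and supports far more conditions.

```python
# A rule in the general if/then shape of a policy definition.
policy_rule = {
    "if": {"field": "location", "notIn": ["eastus", "westeurope"]},
    "then": {"effect": "deny"},
}


def evaluate(rule, resource):
    """Toy evaluator: return the rule's effect if the resource matches, else None."""
    condition = rule["if"]
    value = resource.get(condition["field"])
    if "notIn" in condition:
        matched = value not in condition["notIn"]
    elif "in" in condition:
        matched = value in condition["in"]
    else:
        raise ValueError("unsupported condition in this sketch")
    return rule["then"]["effect"] if matched else None


print(evaluate(policy_rule, {"location": "southeastasia"}))  # deny
print(evaluate(policy_rule, {"location": "eastus"}))         # None
```

Here a compute resource created outside the two approved regions would be denied, which is a typical way to keep training data and GPU clusters within an agreed geography.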

In addition to these security and compliance features, NVIDIA and Microsoft Azure ML Integration provides a robust auditing and logging mechanism, capturing all security-related events and activities within the integration. This comprehensive logging and auditing capability ensures that users have a clear record of all security-related events, enabling effective incident response and forensics analysis.

The NVIDIA and Microsoft Azure ML Integration also supports multi-factor authentication (MFA), providing an additional layer of security for users and their sensitive data. With MFA, users are required to provide a second form of verification, such as a fingerprint, smart card, or one-time password, in addition to their Azure AD credentials.
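For readers curious how the one-time password option works, the sketch below implements the standard HOTP (RFC 4226) and TOTP (RFC 6238) algorithms using only the Python standard library. It illustrates the general mechanism, not the integration's own MFA implementation, and the secret shown is the published RFC test secret, not a real credential.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time counter."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


secret = b"12345678901234567890"  # RFC test secret, for demonstration only
print(totp(secret, at=59))        # 287082
```

Because the code depends on both the shared secret and the current 30-second window, a stolen password alone is not enough to sign in.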

In summary, the security and compliance features of NVIDIA and Microsoft Azure ML Integration provide a robust and secure environment for users to develop, deploy, and manage machine learning models. With industry-standard encryption, access control, DLP, sandboxing, and compliance features, users can be confident that their sensitive data and applications are protected and secured.

Roadmap for Future Development and Integration of NVIDIA and Microsoft Azure

As we continue to push the boundaries of innovation in the field of artificial intelligence and machine learning, we would like to offer a glimpse into the exciting future that lies ahead for the integration of NVIDIA and Microsoft Azure. With a shared vision of accelerating AI adoption and empowering developers, researchers, and businesses to unlock new possibilities, our roadmap is designed to bring forth cutting-edge technologies, services, and collaborations that will revolutionize the way we work and live.

Planned Features and Upgrades

In the near future, we plan to introduce several key features and upgrades that will further enhance the NVIDIA and Microsoft Azure integration. Some of these include:

- AI Model Compression and Transfer Learning: We will be introducing tools and techniques that enable developers to compress and transfer AI models across different frameworks and architectures, significantly reducing model complexity and increasing deployment efficiency.

- Real-time Data Analytics and Visualization: Our integration will provide real-time data analytics and visualization capabilities, enabling users to gain faster insights and make more informed decisions with enhanced data-driven intelligence.

- Autonomous Systems and Robotics: We will be focusing on developing and integrating advanced autonomous systems and robotics capabilities that will transform industries and revolutionize the way we interact with technology.

- Explainability and Transparency: We recognize the importance of transparency and explainability in AI decision-making processes. Our integration will include advanced tools and techniques to provide actionable insights and enhance trust in AI-driven applications.
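As one example of what model compression can mean in practice, the sketch below applies symmetric 8-bit quantization to a list of weights: each float is mapped to an integer in [-127, 127] plus a single shared scale. This is a generic technique shown for illustration with made-up weights; it is not a description of the planned tooling, and transfer learning is not covered here.

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]  # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)
print(restored)
```

Storing one byte per weight instead of four cuts the memory footprint to roughly a quarter, at the cost of a rounding error of at most half the scale per weight.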

Future Roadmap for Development and Expansion

Our future roadmap for development and expansion is centered around several key areas, including:

- Expansion of Cloud Native Applications: We plan to expand our support for cloud-native applications, enhancing the scalability, reliability, and performance of our integration for a wide range of use cases.

- Integration with Edge Computing Platforms: Our integration will be expanded to support edge computing platforms, enabling real-time data processing and analysis at the edge of the network.

- Artifacts and Model Serving: We will be introducing advanced tools and techniques for artifact and model serving, ensuring seamless model deployment and management across a wide range of applications.

Potential Opportunities for Additional Services and Collaborations

The integration of NVIDIA and Microsoft Azure presents a wide range of opportunities for additional services and collaborations. Some potential areas for collaboration include:

- Rainier and Deep Learning Inference: We will be collaborating with researchers and developers to further enhance and optimize the acceleration of deep learning inference on various frameworks and architectures.

- Quantum Computing and AI: Our integration will include support for quantum computing and AI, enabling researchers to explore the intersection of these emerging technologies and unlock new possibilities.

- Autonomous Vehicle Development: We will be working closely with automotive companies and research institutions to develop and integrate advanced autonomous driving capabilities.

Final Review

In conclusion, the NVIDIA and Microsoft Azure Machine Learning integration announced in 2023-2024 represents a major step forward for AI and ML, offering businesses new opportunities for growth and innovation. As the partnership continues to evolve and mature, further developments can be expected in the coming years.

FAQ: NVIDIA and Microsoft Azure Machine Learning Integration

What are the key features of the NVIDIA and Microsoft Azure Machine Learning integration?

The integration offers a range of key features, including real-time data processing, automatic model selection, and multi-language support. It also provides a unified platform for developing, deploying, and managing machine learning models.

How does the NVIDIA and Microsoft Azure Machine Learning integration improve AI and ML capabilities?

The integration enhances AI and ML capabilities by providing faster, more accurate, and more scalable solutions. It also enables businesses to leverage the power of NVIDIA’s GPUs and Microsoft Azure’s cloud infrastructure to drive innovation and growth.

What are the potential applications of the NVIDIA and Microsoft Azure Machine Learning integration?

The integration has far-reaching implications for various industries, including healthcare, finance, retail, and manufacturing. It can be used to develop predictive models, improve decision-making, and drive business growth.

Is the NVIDIA and Microsoft Azure Machine Learning integration secure?

Yes, the integration offers robust security features, including data encryption, access controls, and monitoring and auditing tools. It also complies with various industry regulations and standards.

What is the future roadmap for the NVIDIA and Microsoft Azure Machine Learning integration?

The partnership plans to continue evolving and innovating, with a focus on developing new features, services, and use cases. It will also explore opportunities for additional services and collaborations.