Signal processing for machine learning covers the techniques used to transform, analyze, and condition digital signals so that machine learning models perform better. Techniques such as filtering, feature extraction, and noise reduction are central to data preprocessing, enabling models to learn from complex, noisy data.

The applications of signal processing for machine learning span many domains, including audio analysis, image and video processing, medical imaging, and time series analysis. This article covers foundational concepts, signal transformations, machine learning architectures, deep learning, and special considerations for signal processing in image, video, and medical imaging analysis.

Foundations of Signal Processing for Machine Learning

Signal processing plays a crucial role in machine learning by providing a bridge between data acquisition, feature extraction, and predictive modeling. It is the backbone of various applications in areas such as computer vision, speech recognition, and natural language processing. Effective signal processing techniques are essential for extracting relevant information from complex data sets, which is vital for making accurate predictions and driving informed decision-making.

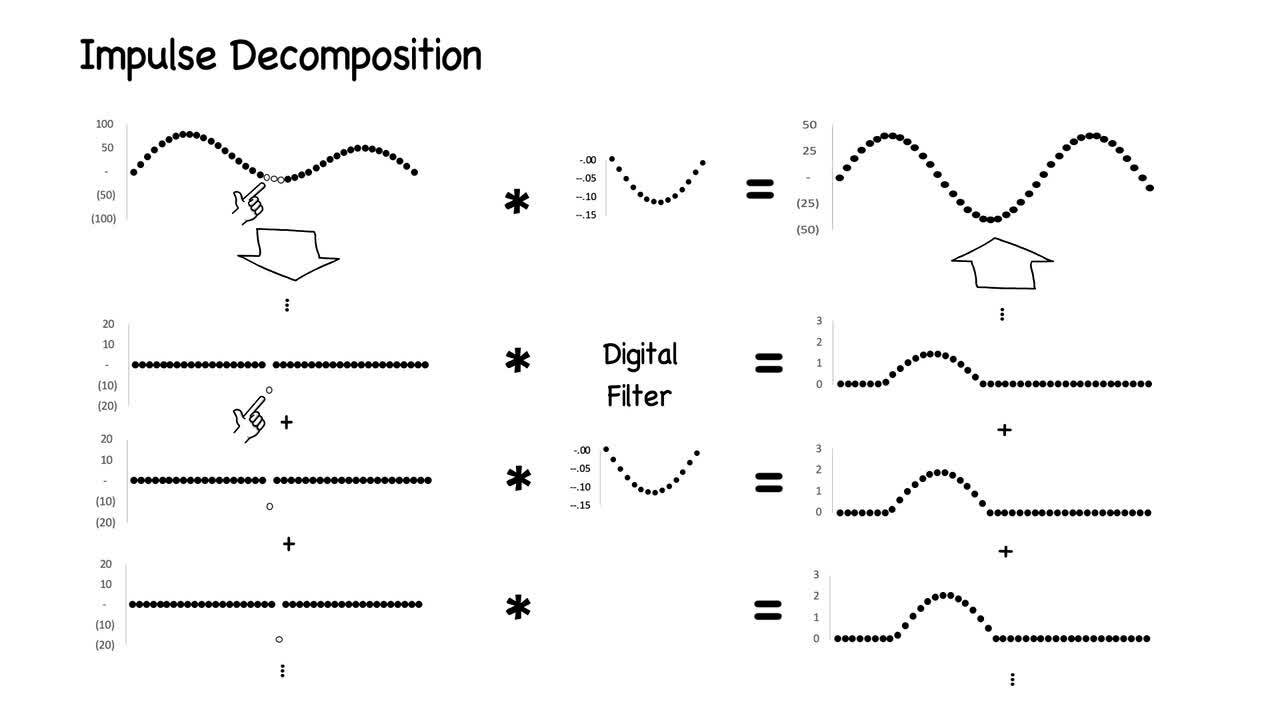

Concepts of Digital Signal Processing

The digital signal processing (DSP) field is centered around the manipulation and analysis of discrete-time signals. It involves various algorithms and techniques for filtering, transforming, and characterizing different types of signals. A fundamental concept in DSP is the discrete Fourier transform (DFT), which is used for decomposing signals into their frequency components. The DFT is often employed in signal processing applications such as data compression, noise reduction, and feature extraction.

Signal processing transforms raw signals into useful information.

Role of Signal Processing in Data Preprocessing

Signal processing techniques are vital in data preprocessing for machine learning models. This involves noise reduction, filtering, feature extraction, and scaling. By applying these techniques, raw data can be cleansed of irrelevant information and transformed into a suitable format for model training. This is particularly important when dealing with noisy or high-dimensional data sets.

Data Preprocessing Steps: Signal Processing in Action

- Main Steps: Noise reduction, filtering, feature extraction, and scaling.

- Noise Reduction: Suppression of unwanted components, often high-frequency noise, that would otherwise degrade model performance.

- Filtering: Selection of desired frequency components for analysis by eliminating irrelevant signals.

- Feature Extraction: Isolation of critical aspects of the signal that hold predictive value.

- Scaling: Standardization of the dataset to maintain consistency across features.

Data preprocessing techniques are used to ensure high-quality data for model training, including noise reduction via filtering and feature extraction using transforms.
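The steps listed above can be sketched end to end in a few lines. This is a minimal illustration using NumPy only; the synthetic sine signals, the 5-sample moving average, the 8 band-energy features, and the function name `preprocess` are all assumptions made for the example rather than a prescribed pipeline.

```python
import numpy as np

def preprocess(signals, kernel_size=5, n_bands=8):
    """Noise reduction -> feature extraction -> scaling for a batch of 1-D signals.

    signals: array of shape (n_signals, n_samples).
    Returns an array of shape (n_signals, n_bands) with standardized features.
    """
    signals = np.asarray(signals, dtype=float)

    # 1) Noise reduction / filtering: a moving average is a simple low-pass filter.
    kernel = np.ones(kernel_size) / kernel_size
    smoothed = np.array([np.convolve(s, kernel, mode="same") for s in signals])

    # 2) Feature extraction: log-energy in n_bands frequency bands.
    power = np.abs(np.fft.rfft(smoothed, axis=1)) ** 2
    bands = np.array_split(power, n_bands, axis=1)
    features = np.log1p(np.stack([b.sum(axis=1) for b in bands], axis=1))

    # 3) Scaling: zero mean and unit variance per feature (guard against zero std).
    mean = features.mean(axis=0)
    std = features.std(axis=0)
    std[std == 0] = 1.0
    return (features - mean) / std

rng = np.random.default_rng(0)
t = np.arange(256) / 256.0
raw = np.array([np.sin(2 * np.pi * f * t) for f in (5, 10, 20, 40)])
raw += 0.3 * rng.standard_normal(raw.shape)

X = preprocess(raw)
print(X.shape)  # (4, 8)
```

In practice the scaling statistics would be computed on the training set only and then reused for validation and test data.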

Common Signal Processing Techniques used in Machine Learning

Some crucial techniques include:

Noise Reduction in Machine Learning

- Median Filtering: Removes salt-and-pepper (impulse) noise by replacing each sample or pixel with the median of its neighborhood.

- Averaging Filter: Smooths a signal or image by replacing each sample or pixel with the mean of its neighborhood. It is effective against Gaussian noise but blurs edges.

Noise reduction plays a significant role in extracting accurate information from signals for decision-making.
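To make the contrast between a median filter and an averaging (mean) filter concrete, here is a small sketch on a 1-D signal with isolated outliers. The helper `sliding_filter`, the window size of 5, and the test signal are assumptions chosen for illustration.

```python
import numpy as np

def sliding_filter(x, size, reducer):
    """Apply `reducer` (e.g. np.median or np.mean) over a sliding window.

    Edges are handled by repeating the first and last samples.
    """
    half = size // 2
    padded = np.pad(x, half, mode="edge")
    windows = np.lib.stride_tricks.sliding_window_view(padded, size)
    return reducer(windows, axis=1)

clean = np.sin(np.linspace(0, 2 * np.pi, 200))
noisy = clean.copy()
spikes = np.arange(10, 200, 20)                       # isolated outlier positions
noisy[spikes] = np.where(np.arange(spikes.size) % 2 == 0, 5.0, -5.0)

median_out = sliding_filter(noisy, 5, np.median)
mean_out = sliding_filter(noisy, 5, np.mean)

err_median = np.mean((median_out - clean) ** 2)
err_mean = np.mean((mean_out - clean) ** 2)
print(f"MSE median: {err_median:.4f}, MSE mean: {err_mean:.4f}")
```

The median discards each outlier entirely, while the mean spreads it over the neighboring samples, which is why the median is usually preferred for impulse noise.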

Filtering in Machine Learning

- Savitzky-Golay Filter: A smoothing filter that fits a low-order polynomial to each window of samples by least squares (which makes it a linear FIR filter), removing noise while preserving the shape of peaks in the signal.

- Butterworth Filter: A type of IIR (Infinite Impulse Response) filter with a maximally flat passband, used for smoothing and removing out-of-band noise.
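Both filters are available in SciPy, so a short comparison is easy to sketch. The sampling rate, the 3 Hz test tone, the window length of 51, and the 10 Hz cutoff below are assumed values for the example, not recommendations.

```python
import numpy as np
from scipy.signal import butter, savgol_filter, sosfiltfilt

fs = 500.0                          # sampling rate in Hz (assumed)
n = 1000
t = np.arange(n) / fs
rng = np.random.default_rng(2)
clean = np.sin(2 * np.pi * 3.0 * t)
noisy = clean + 0.4 * rng.standard_normal(n)

# Savitzky-Golay: least-squares fit of a cubic polynomial in each 51-sample window.
sg = savgol_filter(noisy, window_length=51, polyorder=3)

# Butterworth: 4th-order low-pass at 10 Hz, run forward and backward
# (sosfiltfilt) so that the output has no phase shift.
sos = butter(4, 10.0, btype="low", fs=fs, output="sos")
bw = sosfiltfilt(sos, noisy)

def rmse(a, b):
    """Root-mean-square error between two arrays."""
    return float(np.sqrt(np.mean((a - b) ** 2)))

print(f"noisy: {rmse(noisy, clean):.3f}, "
      f"Savitzky-Golay: {rmse(sg, clean):.3f}, "
      f"Butterworth: {rmse(bw, clean):.3f}")
```

Forward-backward filtering only works offline, when the whole signal is available; a streaming application would use a causal filter and accept the phase delay.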

Feature Extraction Techniques

- Discrete Wavelet Transform (DWT): Decomposes a signal into coefficients at multiple scales (resolutions), capturing both its frequency content and where in time that content occurs.

- Independent Component Analysis (ICA): Separates a mixed signal into statistically independent sources.
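As an illustration of multi-resolution decomposition, the sketch below implements the simplest wavelet, the Haar wavelet, directly in NumPy. The function names and the sample values are hypothetical; a dedicated wavelet library would normally be used for other wavelet families.

```python
import numpy as np

def haar_dwt(x):
    """One level of the Haar DWT for an even-length 1-D signal."""
    pairs = np.asarray(x, dtype=float).reshape(-1, 2)
    approx = (pairs[:, 0] + pairs[:, 1]) / np.sqrt(2)   # low-pass: local averages
    detail = (pairs[:, 0] - pairs[:, 1]) / np.sqrt(2)   # high-pass: local differences
    return approx, detail

def haar_idwt(approx, detail):
    """Invert haar_dwt and return the reconstructed signal."""
    x = np.empty(2 * approx.size)
    x[0::2] = (approx + detail) / np.sqrt(2)
    x[1::2] = (approx - detail) / np.sqrt(2)
    return x

signal = np.array([4.0, 6.0, 10.0, 12.0, 8.0, 6.0, 5.0, 5.0])
a1, d1 = haar_dwt(signal)      # level 1: finest details
a2, d2 = haar_dwt(a1)          # level 2: coarser resolution

# Coefficients from all levels form a feature vector of the same length as the input.
features = np.concatenate([a2, d2, d1])
print(features.round(3))
```

Because the transform is orthogonal, the signal energy is preserved in the coefficients and the original samples can be recovered exactly with `haar_idwt`.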

Applications where Signal Processing is Crucial in Machine Learning

Applications that require high accuracy and reliability often depend on signal processing for data preprocessing and feature extraction. This includes speech recognition, medical imaging analysis, and predictive maintenance of industrial equipment.

Signal processing plays a vital role in extracting meaningful information from complex data sets, making high-quality data available for analysis.

Signal Transformations

Signal transformations play a crucial role in signal processing for machine learning. They enable us to analyze and extract meaningful features from signals, ultimately facilitating improved model performance and accuracy. In this section, we will explore various transform techniques used in signal processing and their applications in machine learning.

Discrete Fourier Transform (DFT) and Fast Fourier Transform (FFT)

The Discrete Fourier Transform (DFT) is a fundamental concept in signal processing. It represents a finite sequence of samples as a weighted sum of complex sinusoids. Computing the DFT directly from its definition is expensive, so in practice it is computed with the Fast Fourier Transform (FFT), an efficient algorithm that reduces the computational complexity from O(n^2) to O(n log n).

The FFT is widely used in machine learning for tasks such as spectral analysis, signal filtering, and feature extraction.

- The FFT is most informative for stationary signals, or for signals that are periodic within the analysis interval.

- FFT enables efficient computation of frequency domain representations, making it an essential tool for tasks like power spectral density estimation and filter design.

- As an FFT example, consider a real-world signal processing task: power grid frequency analysis. The FFT helps engineers diagnose and troubleshoot electrical supply issues by analyzing frequency components.

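However, it’s worth noting that DFT and FFT results depend on the sampling frequency and the signal duration: the sampling rate sets the highest frequency that can be represented (the Nyquist frequency), and the duration sets the frequency resolution. For non-stationary or non-periodic signals, the spectrum can also be smeared by spectral leakage.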
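The power grid example can be sketched with NumPy's FFT routines. The signal here is synthetic (a 50 Hz fundamental with a weaker 150 Hz harmonic and some noise), and the sampling rate and duration are assumed values.

```python
import numpy as np

fs = 1000.0                      # sampling rate in Hz (assumed)
n = 2000                         # 2 seconds of data -> 0.5 Hz frequency resolution
t = np.arange(n) / fs
rng = np.random.default_rng(3)

# Synthetic "mains" signal: 50 Hz fundamental, weaker 150 Hz harmonic, noise.
x = (np.sin(2 * np.pi * 50.0 * t)
     + 0.2 * np.sin(2 * np.pi * 150.0 * t)
     + 0.1 * rng.standard_normal(n))

spectrum = np.fft.rfft(x)                    # FFT of a real-valued signal
freqs = np.fft.rfftfreq(n, d=1.0 / fs)       # frequency of each bin in Hz
magnitude = np.abs(spectrum) / (n / 2)       # scaled so a unit sine has magnitude ~1

dominant = freqs[np.argmax(magnitude)]
print(f"dominant frequency: {dominant:.1f} Hz")  # dominant frequency: 50.0 Hz
```

Doubling the recording length would halve the bin spacing, which is how the signal duration controls frequency resolution.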

Short-Time Fourier Transform (STFT)

The Short-Time Fourier Transform (STFT) is an extension of the DFT that handles non-stationary signals by dividing them into short, overlapping segments (frames). The STFT applies a window function, often a Hann (also called Hanning) or Hamming window, to each frame of the signal and then computes the DFT of each frame.

- The STFT is typically used for tasks such as speech recognition, music analysis, and seismic signal processing, where non-stationarity is inherent.

- The choice of window function and frame size determines the trade-off between time resolution and frequency resolution, and also affects computational cost.

- By analyzing STFT outputs, audio engineers can visualize the time-frequency characteristics of music samples and create custom sound effects by filtering or modifying frequency components within specific frames.

However, as with any transform, the parameters must be chosen carefully to suit the specific application.
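The framing, windowing, and per-frame DFT described above can be written out directly. This is a minimal sketch; the frame length of 256, the hop of 128 samples, and the two-tone test signal are assumptions for the example, and `scipy.signal.stft` offers a fuller implementation.

```python
import numpy as np

def stft(x, frame_len=256, hop=128):
    """Magnitude STFT: Hann-windowed, overlapping frames, one DFT per frame.

    Returns an array of shape (n_frames, frame_len // 2 + 1).
    """
    window = np.hanning(frame_len)
    n_frames = 1 + (len(x) - frame_len) // hop
    frames = np.stack([x[i * hop : i * hop + frame_len] for i in range(n_frames)])
    return np.abs(np.fft.rfft(frames * window, axis=1))

fs = 8000.0                                  # sampling rate in Hz (assumed)
n = 8000
t = np.arange(n) / fs
# Non-stationary test signal: 500 Hz in the first half, 2000 Hz in the second.
x = np.where(t < 0.5, np.sin(2 * np.pi * 500 * t), np.sin(2 * np.pi * 2000 * t))

spec = stft(x)
freqs = np.fft.rfftfreq(256, d=1.0 / fs)
peak_per_frame = freqs[np.argmax(spec, axis=1)]
print(f"{peak_per_frame[0]:.1f} Hz -> {peak_per_frame[-1]:.1f} Hz")  # 500.0 Hz -> 2000.0 Hz
```

A single FFT of the whole signal would show both tones but not when each occurs; the per-frame peaks recover that timing.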

Designing Custom Transforms for Machine Learning

In many cases, standard transforms like DFT, FFT, or STFT might not fully capture the underlying data structure. In such scenarios, custom transforms can be designed to better suit the problem at hand.

- For example, wavelet transforms can be tailored to represent signals with localized frequencies and durations, useful for tasks such as image compression or fault detection.

- Other data-driven transforms, like Independent Component Analysis (ICA), learn a representation from the data itself, in this case one that separates mixed signals into statistically independent components.

Designing custom transforms requires understanding of the underlying data properties and the machine learning task at hand. By creating bespoke transform techniques, researchers can unlock novel insights and improve model performance.

The choice of transform technique heavily depends on the characteristics of the signal, the computational resources available, and the specific objectives of the machine learning task.

Machine Learning Signal Processing Architectures

This section looks at machine learning architectures that are commonly applied to signals. Convolutional neural networks (CNNs) and recurrent neural networks (RNNs) are two widely used architectures at the intersection of signal processing and machine learning.

Convolutional Neural Networks (CNNs)

CNNs are a type of neural network designed to process data with grid-like topology, such as images. In the context of signal processing, CNNs can be used for tasks like image denoising, image classification, and object detection. The key components of a CNN include convolutional layers, pooling layers, and fully connected layers. Convolutional layers apply filters to small regions of the input data, pooling layers downsample the data, and fully connected layers classify the output.

- Convolutional layers: These layers apply filters to small regions of the input data, scanning the input image in both horizontal and vertical directions. This process allows the network to detect features like edges, lines, and shapes.

- Pooling layers: These layers downsample the output of the convolutional layer, reducing the spatial dimensions of the feature maps. This reduces the amount of computation and the number of parameters needed in later layers.

- Max-pooling: This is a type of pooling layer that keeps the maximum value within each pooling window of a feature map, reducing the spatial size of the feature maps.

- Flatten layer: This layer is used to flatten the output of the convolutional and pooling layers, preparing it for the fully connected layers.
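The layer types above can be illustrated without a deep learning framework. The sketch below uses plain NumPy, a hand-picked (not learned) edge kernel, and a tiny 6x6 image; the helper names `conv2d` and `max_pool` are hypothetical.

```python
import numpy as np

def conv2d(image, kernel):
    """'Valid' 2-D cross-correlation, which is what CNN convolution layers compute."""
    kh, kw = kernel.shape
    windows = np.lib.stride_tricks.sliding_window_view(image, (kh, kw))
    return np.einsum("ijkl,kl->ij", windows, kernel)

def max_pool(fmap, size=2):
    """Non-overlapping max pooling; trailing rows/columns that do not fit are dropped."""
    h = (fmap.shape[0] // size) * size
    w = (fmap.shape[1] // size) * size
    blocks = fmap[:h, :w].reshape(h // size, size, w // size, size)
    return blocks.max(axis=(1, 3))

# 6x6 image with a vertical edge between columns 2 and 3.
image = np.zeros((6, 6))
image[:, 3:] = 1.0
edge_kernel = np.array([[-1.0, 0.0, 1.0]] * 3)      # responds to left-to-right increases

fmap = np.maximum(conv2d(image, edge_kernel), 0.0)  # convolution + ReLU, shape (4, 4)
pooled = max_pool(fmap)                             # max-pooling, shape (2, 2)
flat = pooled.ravel()                               # flatten, shape (4,)
print(fmap.shape, pooled.shape, flat.shape)         # (4, 4) (2, 2) (4,)
```

In a trained CNN the kernel values are learned from data rather than chosen by hand, and many kernels are applied in parallel to produce many feature maps.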

Recurrent Neural Networks (RNNs)

RNNs are a type of neural network designed to process sequential data, such as time series signals or speech. In the context of signal processing, RNNs can be used for tasks like speech recognition and time series forecasting. The key components of an RNN include recurrence, activation functions, and hidden states.

- Recurrence: The hidden state computed at one time step is fed back as an input at the next, allowing information to flow over time so the network can learn patterns and relationships in sequential data.

- Activation functions: Activation functions, such as sigmoid or ReLU, are used to introduce non-linearity in the network, allowing it to learn more complex relationships in the data.
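A forward pass through a simple (Elman-style) RNN shows how the hidden state carries information from one time step to the next. This sketch uses random, untrained weights and a tanh activation, both assumptions made for illustration; the function name `rnn_forward` is hypothetical.

```python
import numpy as np

def rnn_forward(x_seq, W_xh, W_hh, b_h):
    """Run a simple RNN over a sequence and return all hidden states.

    x_seq: (T, input_dim); returns an array of shape (T, hidden_dim).
    """
    h = np.zeros(W_hh.shape[0])
    states = []
    for x_t in x_seq:
        # The new state depends on the current input and on the previous state.
        h = np.tanh(W_xh @ x_t + W_hh @ h + b_h)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(4)
input_dim, hidden_dim, T = 1, 8, 50
W_xh = rng.standard_normal((hidden_dim, input_dim)) * 0.5   # input-to-hidden weights
W_hh = rng.standard_normal((hidden_dim, hidden_dim)) * 0.1  # hidden-to-hidden weights
b_h = np.zeros(hidden_dim)

x_seq = np.sin(np.linspace(0, 4 * np.pi, T)).reshape(T, input_dim)
H = rnn_forward(x_seq, W_xh, W_hh, b_h)
print(H.shape)  # (50, 8)
```

Training would adjust `W_xh`, `W_hh`, and `b_h` by backpropagation through time; the final hidden state, or all of them, would then feed an output layer.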

Time-Frequency Representations

Time-frequency representations are a way to represent signals in both time and frequency domains. In machine learning, time-frequency representations can be used to analyze and classify signals. Popular time-frequency representations include spectrograms, scalograms, and wavelet spectra.

Time-frequency representations can be used to visualize and understand the behavior of signals in both time and frequency domains, enabling machine learning models to learn more robust and informative features.

Integration of Signal Processing Blocks into Existing Machine Learning Pipelines

Integrating signal processing blocks into existing machine learning pipelines can enhance the accuracy and robustness of machine learning models. This can be achieved by incorporating signal processing techniques, such as filtering, denoising, and feature extraction, into the data preprocessing stage. By doing so, machine learning models can learn from more robust and informative features, leading to improved performance and accuracy.

- Data preprocessing: Signal processing techniques, such as filtering and denoising, can be used to clean and preprocess the input data, improving the accuracy and robustness of machine learning models.

Signal Processing in Deep Learning

Deep learning, a subset of machine learning, has become a crucial aspect of signal processing in recent years. It has enabled the development of more accurate and efficient models for signal classification, regression, and other tasks. In signal processing, deep learning is used to extract features from raw signal data, allowing for better pattern recognition and prediction.

Deep Learning Architectures in Signal Classification

Deep learning architectures, such as convolutional neural networks (CNNs) and recurrent neural networks (RNNs), have shown great promise in signal classification tasks. These architectures are particularly useful for tasks that involve large amounts of data, such as image or audio classification.

- CNNs are commonly used for image classification tasks, where the convolutional layers extract features from the images and the fully connected layers perform the classification.

- RNNs are often used for sequential data, such as audio or speech recognition, where the recurrent layers capture the temporal relationships in the data.

In both cases, the deep learning architecture learns to extract relevant features from the data that are used for classification.

Deep Learning Architectures in Signal Regression

Deep learning architectures can also be used for signal regression tasks, such as predicting the future values of a signal based on past observations. This can be particularly useful in applications such as stock price prediction or weather forecasting.

For example, a CNN can be used to predict the future values of a signal by learning to extract features from the past observations and using these features to make predictions.

This approach can be particularly useful when the underlying relationship between the input and output signals is complex and difficult to model using traditional regression techniques.

Challenges in Applying Deep Learning to Signal Processing

Despite the potential benefits of deep learning in signal processing, there are several challenges that need to be addressed. One of the main challenges is the lack of labeled data, which is often required to train deep learning models.

- Data augmentation techniques, such as rotation and flipping for images, or time shifting, amplitude scaling, and adding noise for one-dimensional signals, can be used to increase the size of the training dataset and improve the performance of the model.

- Transfer learning, where a pre-trained model is fine-tuned on the target dataset, can be used to leverage the knowledge gained from larger datasets and improve the performance of the model.

- Ensemble methods, such as bagging and boosting, can be used to combine the predictions of multiple models and improve the performance of the model.

These strategies can help to alleviate the challenges associated with deep learning in signal processing and improve the performance of the models.
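For one-dimensional signals, data augmentation might look like the sketch below. The shift range, scaling range, and noise level are assumed values, and the function name `augment` is hypothetical; whether a given transform preserves the label depends on the task.

```python
import numpy as np

def augment(signal, rng, max_shift=20, noise_std=0.05, scale_range=(0.8, 1.2)):
    """Return a randomly shifted, rescaled, and noise-perturbed copy of a 1-D signal."""
    shift = int(rng.integers(-max_shift, max_shift + 1))
    out = np.roll(signal, shift)                            # circular time shift
    out = out * rng.uniform(*scale_range)                   # amplitude scaling
    out = out + noise_std * rng.standard_normal(out.size)   # additive Gaussian noise
    return out

rng = np.random.default_rng(5)
original = np.sin(np.linspace(0, 2 * np.pi, 128))
augmented = np.stack([augment(original, rng) for _ in range(10)])
print(augmented.shape)  # (10, 128)
```

Each augmented copy keeps the label of the original example, so ten variants of one labeled recording give the model ten training samples.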

Computational Efficiency in Deep Learning

Another challenge in deep learning is computational efficiency. Deep learning models can be computationally intensive, particularly when dealing with large datasets.

For example, a CNN with over 100 million parameters can be computationally expensive to train.

To address this challenge, various strategies can be employed, such as using GPU acceleration, model pruning, and knowledge distillation.
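Of these strategies, model pruning is the easiest to show in a few lines. The sketch below applies unstructured magnitude pruning to a random weight matrix; the 90% sparsity level and the function name `magnitude_prune` are assumptions, and real frameworks provide their own pruning utilities.

```python
import numpy as np

def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    k = int(sparsity * weights.size)
    if k == 0:
        return weights.copy()
    # Magnitude of the k-th smallest weight; everything at or below it is removed.
    threshold = np.partition(np.abs(weights).ravel(), k - 1)[k - 1]
    pruned = weights.copy()
    pruned[np.abs(pruned) <= threshold] = 0.0
    return pruned

rng = np.random.default_rng(6)
W = rng.standard_normal((64, 64))
W_pruned = magnitude_prune(W, sparsity=0.9)
kept = np.count_nonzero(W_pruned) / W.size
print(f"fraction of weights kept: {kept:.3f}")
```

Pruned models are usually fine-tuned afterwards to recover accuracy, and the speed-up depends on whether the hardware or runtime can exploit sparse weights.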

Future Directions in Deep Learning for Signal Processing

Deep learning is a rapidly evolving field, and new techniques and architectures are being developed continually. Some potential future directions in deep learning for signal processing include:

Graph Neural Networks

Graph neural networks (GNNs) are a type of deep learning architecture that can be used to model complex relationships between data points. In signal processing, GNNs can be used to model the relationships between different signal features.

For example, a GNN can be used to model the relationships between different image textures and colors.

Explainable Deep Learning

Explainable deep learning is a research area that focuses on developing techniques to interpret and understand the decisions made by deep learning models. In signal processing, explainable deep learning can be used to provide insights into why a particular model is making a particular classification or prediction.

For example, an explainable model can be used to provide insights into why a certain image is being classified as a particular object.

These are just a few examples of potential future directions in deep learning for signal processing. As the field continues to evolve, we can expect to see new and innovative applications of deep learning in signal processing.

Signal Processing for Image and Video Analysis

Signal processing plays a crucial role in image and video analysis, enabling tasks such as object detection, tracking, and recognition. The use of signal processing algorithms allows for image enhancement, de-noising, and compression, which are essential for various applications, including surveillance, medical imaging, and video conferencing.

Object Detection and Tracking

Object detection and tracking are critical components of image and video analysis. Signal processing algorithms, such as the Histogram of Oriented Gradients (HOG) and Convolutional Neural Networks (CNNs), are used to detect and track objects within images and videos. These algorithms can be applied to various applications, including self-driving cars, surveillance systems, and medical imaging.

- The HOG algorithm extracts features from an image, such as edges and shapes, to detect objects.

- CNNs can learn to recognize objects within images and videos, enabling object detection and tracking.

- Other algorithms, such as the Scale-Invariant Feature Transform (SIFT), are used for image matching and object recognition.

Image Enhancement and De-noising

Image enhancement and de-noising are essential tasks in image and video analysis. Signal processing algorithms, such as the Fast Fourier Transform (FFT) and the Wiener filter, are used to enhance image quality and remove noise. These algorithms can be applied to various applications, including medical imaging, surveillance, and remote sensing.

- The FFT can be used to enhance image quality by filtering out noise and artifacts in the frequency domain.

- The Wiener filter can be used to de-noise images corrupted by additive noise such as Gaussian noise. Impulsive noise is usually better handled by a median filter.
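FFT-based filtering of an image can be sketched as an ideal low-pass mask in the 2-D frequency domain. The test pattern, the noise level, and the cutoff of 0.1 cycles per pixel are assumptions for the example; an ideal (hard) mask is the simplest choice and can cause ringing on real images.

```python
import numpy as np

def fft_lowpass(image, cutoff):
    """Keep only spatial frequencies whose radius (cycles/pixel) is <= cutoff."""
    fy = np.fft.fftfreq(image.shape[0])[:, None]
    fx = np.fft.fftfreq(image.shape[1])[None, :]
    mask = np.sqrt(fx ** 2 + fy ** 2) <= cutoff
    return np.real(np.fft.ifft2(np.fft.fft2(image) * mask))

rng = np.random.default_rng(7)
yy, xx = np.mgrid[0:64, 0:64]
clean = np.sin(2 * np.pi * xx / 32.0) + np.cos(2 * np.pi * yy / 16.0)  # smooth pattern
noisy = clean + 0.5 * rng.standard_normal(clean.shape)

denoised = fft_lowpass(noisy, cutoff=0.1)
mse_noisy = np.mean((noisy - clean) ** 2)
mse_denoised = np.mean((denoised - clean) ** 2)
print(f"MSE before: {mse_noisy:.3f}, after: {mse_denoised:.3f}")
```

This works here because the image content sits at low spatial frequencies while the noise is spread over all of them; images with fine detail lose that detail under the same filter.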

Image Compression

Image compression is a crucial task in image and video analysis. Signal processing algorithms, such as the Discrete Cosine Transform (DCT) and the Discrete Wavelet Transform (DWT), are used to compress images while preserving their quality. These algorithms can be applied to various applications, including remote sensing, medical imaging, and surveillance.

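- The DCT can be used to compress images by transforming them into the frequency domain, where most of the energy concentrates in a few low-frequency coefficients and the remaining coefficients can be coarsely quantized or discarded.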

- The DWT can be used to compress images by decomposing them into different frequency bands.
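The idea behind transform-based compression can be sketched with SciPy's DCT: transform, keep only the largest coefficients, and invert. The synthetic image and the 5% keep fraction are assumptions; real codecs such as JPEG work on small blocks and add quantization and entropy coding, which this sketch omits.

```python
import numpy as np
from scipy.fft import dctn, idctn

def dct_compress(image, keep_fraction):
    """Keep the largest-magnitude DCT coefficients and zero the rest."""
    coeffs = dctn(image, norm="ortho")
    k = max(1, int(keep_fraction * coeffs.size))
    threshold = np.sort(np.abs(coeffs).ravel())[-k]     # k-th largest magnitude
    sparse = np.where(np.abs(coeffs) >= threshold, coeffs, 0.0)
    return idctn(sparse, norm="ortho"), int(np.count_nonzero(sparse))

yy, xx = np.mgrid[0:32, 0:32]
image = np.exp(-((xx - 16) ** 2 + (yy - 16) ** 2) / 60.0)   # smooth blob

approx, n_kept = dct_compress(image, keep_fraction=0.05)
rel_err = np.linalg.norm(approx - image) / np.linalg.norm(image)
print(f"kept {n_kept} of {image.size} coefficients, relative error {rel_err:.4f}")
```

Smooth images compress well this way because their energy is concentrated in a handful of low-frequency coefficients; textured or noisy images need many more.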

Differences between 2D and 3D Signal Processing

Still images are two-dimensional (2D) signals, while video adds a time axis and can be treated as a three-dimensional (3D) signal. The main differences between 2D and 3D signal processing are:

- 2D signal processing operates on the two spatial dimensions of a single image, while 3D signal processing also operates across frames, that is, along time.

- 2D signal processing typically uses algorithms such as HOG and SIFT, while 3D signal processing uses algorithms such as the 3D DWT and 3D DCT.

Use of Transfer Learning for Image and Video Processing Tasks

Transfer learning is a technique used in deep learning to take a pre-trained network and fine-tune it for a new task. This technique has been widely used in image and video processing tasks, enabling the use of pre-trained networks for tasks such as object detection, tracking, and recognition.

Conclusion

Signal processing plays a crucial role in image and video analysis, enabling tasks such as object detection, tracking, and recognition. The use of signal processing algorithms allows for image enhancement, de-noising, and compression, which are essential for various applications. The differences between 2D and 3D signal processing are critical to understanding the appropriate algorithms and techniques for each task. Transfer learning is a powerful technique used in deep learning to take a pre-trained network and fine-tune it for a new task.

Signal Processing for Medical Imaging

Signal processing plays a crucial role in medical imaging, enhancing the quality and accuracy of diagnostic images. Magnetic Resonance Imaging (MRI) and Computed Tomography (CT) scans are two of the most widely used imaging modalities in the medical field.

These modalities produce high-resolution images, but they often suffer from noise, artifacts, and variations in patient anatomy and equipment, making it challenging to obtain high-quality images. Signal processing algorithms are employed to overcome these limitations, improving image quality and diagnostic accuracy.

Image Enhancement

Image enhancement is a critical aspect of medical imaging, as it enables clinicians to detect abnormalities and make accurate diagnoses. Signal processing algorithms can be used to enhance image quality by reducing noise, sharpening edges, and improving contrast.

* Noise reduction techniques, such as Gaussian filtering and wavelet denoising, can be employed to remove random variations in pixel intensity, resulting in clearer images.

* Edge detection algorithms, like Canny and Sobel operators, can be used to highlight anatomical structures and boundaries, making it easier to identify abnormalities.

* Contrast enhancement techniques, such as histogram equalization and CLAHE (Contrast Limited Adaptive Histogram Equalization), can be applied to improve the visibility of subtle differences in tissue composition.
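Of the techniques above, global histogram equalization is compact enough to sketch directly. The example assumes an 8-bit grayscale image and uses synthetic low-contrast data; CLAHE applies the same idea per tile with a clipping limit and is not shown here.

```python
import numpy as np

def equalize_histogram(image):
    """Global histogram equalization for an 8-bit (uint8) grayscale image."""
    hist = np.bincount(image.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]
    if cdf[-1] == cdf_min:              # constant image: nothing to stretch
        return image.copy()
    # Map each gray level through the normalized cumulative distribution.
    lut = np.round((cdf - cdf_min) / (cdf[-1] - cdf_min) * 255.0)
    lut = np.clip(lut, 0, 255).astype(np.uint8)
    return lut[image]

rng = np.random.default_rng(8)
# Low-contrast image: gray levels squeezed into the range 100..139.
low_contrast = rng.integers(100, 140, size=(64, 64), dtype=np.uint8)
equalized = equalize_histogram(low_contrast)
print(low_contrast.min(), low_contrast.max(), "->", equalized.min(), equalized.max())
```

After equalization the darkest pixel present maps to 0 and the brightest to 255, so subtle intensity differences occupy the full display range.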

Denoising

Denoising is the process of removing noise from images, which can be caused by various factors, including instrument artifacts, patient movement, and tissue properties.

* Gaussian noise, which is often modeled as white (spectrally flat) noise, can be reduced using techniques such as averaging, median filtering, or wavelet denoising.

* Rician noise, often encountered in MRI images, can be mitigated using noise reduction algorithms, such as homomorphic filtering or adaptive Wiener filtering.

* Poisson noise, common in low-intensity (low-count) images, is signal-dependent and is typically handled with an explicit noise model, for example a Poisson-Gaussian mixture model.
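Because Poisson noise grows with the signal, one common preprocessing step, named here as an addition and not as the method described above, is a variance-stabilizing transform such as the Anscombe transform, after which a standard Gaussian denoiser can be applied. The sketch below only demonstrates the stabilization on simulated counts; the mean counts are assumed values.

```python
import numpy as np

def anscombe(x):
    """Anscombe transform: maps Poisson counts to roughly unit-variance values."""
    return 2.0 * np.sqrt(np.asarray(x, dtype=float) + 3.0 / 8.0)

rng = np.random.default_rng(9)
raw_var = {}
stabilized_var = {}
for mean_count in (5, 20, 80):
    counts = rng.poisson(mean_count, size=100_000)
    raw_var[mean_count] = float(counts.var())                   # grows with the mean
    stabilized_var[mean_count] = float(anscombe(counts).var())  # stays close to 1

for mean_count in raw_var:
    print(mean_count, round(raw_var[mean_count], 2), round(stabilized_var[mean_count], 3))
```

The raw variance tracks the mean count, while the transformed variance stays near 1 across intensities, which is the property Gaussian denoisers assume.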

Compression

Compression is crucial in medical imaging, as it enables the efficient storage and transmission of high-resolution images. Various compression techniques, such as lossy and lossless compression, can be applied to medical images.

* Lossy compression, like JPEG (Joint Photographic Experts Group), can be used to reduce the file size of images while sacrificing some image quality.

* Lossless compression, such as LZ77 and DEFLATE, can be employed to reduce the file size of images without compromising image quality.

* Wavelet-based compression, such as JPEG 2000, can be used to achieve high compression ratios while preserving image quality.

Key Challenges

Despite the advances in signal processing, several challenges remain in applying these algorithms to medical imaging data.

* Patient variability: Different patients have unique anatomy, which can lead to variations in image quality and diagnostic accuracy.

* Equipment differences: Variations in equipment settings, such as field strength and gradient strength, can also impact image quality.

* Limited training data: The availability of training data can be limited, making it challenging to develop and validate algorithms.

Strategies to Improve Performance

Several strategies can help improve the performance of signal processing algorithms in medical imaging.

* Data augmentation: Adding synthetic data to the training set can help increase the diversity of the training data and improve the generalizability of the algorithms.

* Transfer learning: Using pre-trained models and fine-tuning them on smaller datasets can help adapt to changing conditions and improve performance.

* Ensemble methods: Combining the predictions of multiple models can help improve the robustness and accuracy of the diagnostic process.

Closure

In this article, we explored the fundamental concepts of signal processing for machine learning, discussed signal transformations and machine learning architectures, and looked at specialized applications in image, video, and medical imaging analysis. By understanding these concepts and their applications, data scientists, engineers, and researchers can better design and optimize their machine learning models to improve performance, efficiency, and accuracy.

FAQ Explained

Q: What is signal processing in machine learning?

A: Signal processing in machine learning refers to the techniques used to transform, analyze, and optimize digital signals to improve the performance of machine learning models.

Q: Why is signal processing important in machine learning?

A: Signal processing is essential in machine learning as it enables data scientists to preprocess complex, noisy data, filtering out noise and extracting relevant features that improve model performance.

Q: What are some common signal processing techniques used in machine learning?

A: Some common signal processing techniques include noise reduction, filtering, feature extraction, and data augmentation.

Q: How does signal processing relate to deep learning?

A: Signal processing is closely related to deep learning as it allows deep learning models to learn from complex, noisy data, improving their performance and efficiency.

Q: What are some specialized applications of signal processing in machine learning?

A: Some specialized applications of signal processing in machine learning include audio analysis, image and video processing, medical imaging, and time series analysis.