This overview is built around The StatQuest Illustrated Guide to Machine Learning.

This guide covers the fundamental concepts of machine learning, its applications, and its three main types: supervised, unsupervised, and reinforcement learning. Real-world examples illustrate both the possibilities and the limitations of machine learning and provide a foundation for further exploration.

Introduction to Machine Learning

Machine learning is a subfield of artificial intelligence (AI) that allows systems to learn from data and improve their performance on a task without being explicitly programmed. This resembles how humans learn: exposure to experiences and repeated practice let us acquire and refine skills. Just as you might learn a new language by speaking it regularly, machine learning algorithms learn by analyzing large datasets and adapting to the patterns they find.

Fundamental Concepts of Machine Learning

Machine learning encompasses three primary types: supervised, unsupervised, and reinforcement learning. Each relies on data to make predictions or decisions, so understanding the fundamental concepts of machine learning also means understanding the data it learns from.

In supervised learning, the data is labeled, so the model can learn to associate each input with its desired output. This type of learning is often used for image classification, natural language processing, and predicting continuous outcomes. For instance, self-driving cars combine computer vision and machine learning to predict the movements of other cars. Other common applications include:

- Sales Forecasting

- Image Recognition

- Predicting Customer Churn

In sales forecasting, the algorithm is trained to predict future sales based on historical data. Using this information, companies can adjust their production levels, inventory management, and marketing strategies to meet customer demands.

In image recognition, the algorithm is trained to recognize patterns and objects in images. This technology is used in applications such as facial recognition, object detection in self-driving cars, and medical imaging analysis.

Predicting customer churn involves using historical data to identify patterns and behaviors that lead to customers switching to another service provider. This allows companies to offer targeted promotions and improve customer retention.

Types of Machine Learning

Machine learning is usually divided into three primary types, based on the nature of the input data and the desired outcome. Each type has its own applications across many industries.


Supervised Learning

Supervised learning involves training the machine learning model using labeled data. The algorithm learns to associate the input data with the correct output based on the provided labels. This type of learning is typically used in image classification, natural language processing, and forecasting. An example of supervised learning is a facial recognition system trained on images of known individuals, each labeled with the person’s identity.


Unsupervised Learning

Unsupervised learning trains algorithms on unlabeled data, allowing them to discover patterns and relationships that might not be immediately apparent. This type of learning is often used in clustering, segmentation, and anomaly detection. For instance, in finance, unsupervised learning can help detect unusual transaction patterns, flagging suspicious activities.

Reinforcement Learning

Reinforcement learning learns through trial and error. It is guided by rewards and penalties rather than by labeled examples.

- Example of one-hot encoding and label encoding:

Suppose we have a categorical variable ‘Color’ with 3 possible values: ‘Red’, ‘Blue’, ‘Green’. One-hot encoding would result in three binary vectors:

"Red" -> [1, 0, 0]

"Blue" -> [0, 1, 0]

"Green" -> [0, 0, 1]

Label encoding would result in:

"Red" -> 1

"Blue" -> 2

"Green" -> 3

- Easy to interpret: Decision trees provide a visual representation of the decision-making process, making it easy to understand and interpret the model.

- Handling missing values: Some decision tree implementations can handle missing values directly. They do this, for example, by ignoring missing values while evaluating a split or by using surrogate splits.

- Handling non-linear relationships: Decision trees can handle non-linear relationships by recursively splitting the data into smaller subsets.

- Overfitting: Decision trees can suffer from overfitting, where the model becomes too complex and fails to generalize well to new data.

- Sensitive to small changes in the data: Decision trees can be unstable. A few unusual samples or a small change in the training data can produce a very different tree.

- Improved accuracy: Random forests can improve the accuracy of the model by combining the predictions from multiple trees.

- Reducing overfitting: Random forests can reduce overfitting by averaging the predictions from multiple trees.

- Handling high-dimensional data: Random forests can handle high-dimensional data by considering only a random subset of features at each split.

- Computational complexity: Random forests can be computationally expensive, especially when dealing with large datasets.

- Difficulty in interpreting: Random forests can be difficult to interpret, as the model is an ensemble of multiple trees.

- Good generalization: SVMs can generalize well to new data, especially when the classes are linearly separable.

- Handling non-linear relationships: SVMs can handle non-linear relationships by using a kernel function to map the data into a higher-dimensional space.

- Robust to outliers: Soft-margin SVMs can be relatively robust to outliers. The decision boundary depends only on the support vectors, and the regularization parameter limits how much any single sample can influence it.

- Sensitivity to hyperparameters: SVMs can be sensitive to hyperparameters, such as the kernel function and regularization parameter.

- Long training time: SVMs can take a long time to train, especially when dealing with large datasets.

Steps of the k-means algorithm:

1. Choose an initial set of centroids.

2. Assign each data point to the cluster with the closest centroid.

3. Update the centroid of each cluster to be the mean of its assigned data points.

4. Repeat steps 2 and 3 until convergence or a stopping criterion is met.

Advantages of k-means:

- Easy to implement and understand.

- Scalable to large datasets, as each data point only needs to be compared with the centroids.

Disadvantages of k-means:

- Sensitive to initial centroid placement, as it can lead to local optima.

- Sensitive to noise and outliers, because each centroid is a mean that extreme points can pull away from the rest of the cluster.

- No guarantee of producing a unique or meaningful clustering solution.

- Agglomerative clustering starts with each data point as its own cluster and iteratively merges the closest pairs until a stopping criterion is met.

- Divisive clustering begins with all data points in a single cluster and iteratively splits the clusters until a stopping criterion is met.

Advantages of hierarchical clustering:

- Providing a hierarchical representation of the data, allowing for exploration at different levels of granularity.

- Sensitivity to local structure, capturing subtle patterns in the data.

Disadvantages of hierarchical clustering:

- Higher computational cost compared to k-means, especially for large datasets.

- More challenging to interpret and visualize the results.

Steps of principal component analysis (PCA):

1. Center the data by subtracting each feature’s mean.

2. Compute the covariance matrix of the centered data.

3. Find the eigenvectors of the covariance matrix, which represent the principal axes.

4. Sort the eigenvectors by their corresponding eigenvalues in descending order.

5. Choose the top k eigenvectors to form the new coordinate system, and project the centered data onto them.

Advantages of PCA:

- Reducing the dimensionality of the data, making it more tractable for analysis.

- Preserving as much of the variance in the data as possible for the chosen number of components.

Disadvantages of PCA:

- No guarantee of retaining the original data distribution or relationships, because the discarded components carry information.

- Sensitivity to outliers and noise, which can affect the eigenvector computation.

Benefits of neural networks:

- Learn from large datasets without being explicitly programmed

- Handle complex relationships between variables

- Make predictions and decisions based on patterns in the data

Challenges of neural networks:

- Difficulty in understanding how they make their decisions

- Need for large amounts of training data

- Tendency to overfit

Scenarios where neural networks are useful:

- Recognizing patterns in large amounts of data

- Making predictions or decisions based on complex relationships between variables

- Improving accuracy in classification or regression tasks

Example scenarios:

- Image classification: Neural networks can be used to classify images into their respective categories, such as animals, vehicles, or buildings.

- Natural language processing: RNNs can be used to process and understand natural language, enabling applications such as chatbots and language translation.

- Predictive maintenance: Neural networks can be used to predict when equipment is likely to fail, enabling proactive maintenance and reducing downtime.

Real-world applications of neural networks:

- Image recognition: Neural networks are used in facial recognition systems, self-driving cars, and medical imaging systems.

- NLP: RNNs are used in chatbots, language translation systems, and sentiment analysis.

- Predictive maintenance: Neural networks are used in industries such as manufacturing, healthcare, and finance to predict equipment failure and optimize maintenance schedules.

Common regression metrics:

- Mean Squared Error (MSE)

- Mean Absolute Error (MAE)

- R-Squared (R²)

Factors to weigh when comparing and selecting models:

- Accuracy

- Complexity

- Interpretability

- Random Forest Algorithm: This algorithm trains multiple decision trees on different subsets of the dataset and combines their predictions to improve the accuracy of the model.

- Gradient Boosting Algorithm: This algorithm trains weak models, usually shallow decision trees, one after another. Each new model is fit to the errors of the ensemble built so far (more precisely, to the negative gradient of the loss). The final prediction is the sum of the models’ outputs, each scaled by a learning rate. Stochastic variants fit each model on a random subset of the training data.

- AdaBoost Algorithm: This algorithm trains decision trees one after another on reweighted versions of the same dataset, increasing the weights of samples that earlier trees misclassified. It combines the trees’ predictions using a weighted sum. Each tree’s weight is larger when its error on the weighted training dataset is lower.

- Stacking Algorithm: This algorithm trains multiple models on the same dataset and combines their predictions using a meta-model. The meta-model is trained on the predictions of the multiple models, rather than the original dataset.

- Convolutional Neural Networks (CNNs): These networks use convolutional and pooling layers to learn complex patterns in images.

- Recurrent Neural Networks (RNNs): These networks use recurrent layers to learn complex patterns in sequential data.

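- Generative Adversarial Networks (GANs): These networks train two models against each other. A generator produces synthetic samples, and a discriminator tries to tell them apart from real data. Through this competition, the generator learns to produce realistic data.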

- Requirements Gathering:

- Determine the project scope

- Identify the target audience

- Define the expected outcomes

- Engage with stakeholders

- Formulate a project proposal

- Data Collection:

- Determine the type of data required

- Collect data from various sources

- Clean and preprocess the data

- Analyze the data for insights

- Type of Data:

- Structured data (e.g., tables, databases)

- Semi-structured data (e.g., JSON files, XML files)

- Unstructured data (e.g., text, images)

- Python Libraries:

- Scikit-learn

- TensorFlow

- PyTorch

- IDEs:

- Visual Studio Code

- PyCharm

- Encryption: Use secure encryption protocols to protect data both in transit and at rest.

- Access controls: Implement strict access controls to ensure only authorized personnel have access to sensitive data.

- Secure data storage: Store data in a secure environment, protected from unauthorized access and breaches.

- Compliance with regulations: Familiarize yourself with relevant data protection regulations and make sure your data handling complies with them.

- Data analysis: Examine the dataset for signs of bias, such as uneven representation of certain groups or attributes.

- Statistical testing: Use statistical methods to test for bias, such as fairness metrics and sensitivity analysis.

- Human oversight: Regularly review and assess model performance to detect and address biases.

Reinforcement learning enables machines to learn through trial and error by interacting with an environment and receiving rewards or penalties. It is typically used in robotics, autonomous vehicles, and game playing. Consider a robot learning to navigate a new space by receiving rewards for correct movements and penalties for hitting obstacles.
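Trial-and-error learning can be illustrated with a minimal sketch of tabular Q-learning. This is one common reinforcement learning algorithm, chosen here for illustration and not taken from the guide. The environment is a hypothetical five-cell corridor. The agent starts in cell 0, earns a reward of 1 for reaching cell 4, and pays a small penalty of 0.01 for every other move. The corridor, the rewards, and all hyperparameter values are assumptions.

```python
import random

N_STATES = 5          # hypothetical corridor: cells 0..4, cell 4 is the goal
ACTIONS = (-1, +1)    # move left or move right

def step(state, action):
    """Hypothetical environment: returns (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    if next_state == N_STATES - 1:
        return next_state, 1.0, True       # reward for reaching the goal
    return next_state, -0.01, False        # small penalty for every other move

def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore with probability epsilon, otherwise exploit the best known action.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            # Move Q(s, a) toward the reward plus the discounted value of the best next action.
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q

q = q_learning()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)  # learned action (-1 = left, +1 = right) in each non-goal cell
```

The `epsilon` parameter trades exploration against exploitation. Without random exploration, the agent could settle on a poor policy before it ever discovers the reward.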

The following table summarizes the types of machine learning:

| Type | Description | Example Application |
| --- | --- | --- |
| Supervised | Training on labeled data | Facial recognition |
| Unsupervised | Training on unlabeled data | Anomaly detection |
| Reinforcement | Learning through trial and error with rewards | Autonomous vehicles |

Real-world Applications of Machine Learning

Machine learning has numerous real-world applications across industries. Three examples are automated customer service, healthcare diagnosis, and financial risk management.

Automated customer service systems use machine learning to provide personalized assistance and resolve customer complaints quickly. For instance, chatbots and virtual assistants are trained to understand and respond to user queries based on patterns in the data.

Machine learning in healthcare diagnosis enables the analysis of medical images and patient data to predict the likelihood of disease or identify high-risk patients. For instance, algorithms can detect abnormalities in MRI scans to diagnose conditions such as tumors or cysts.

In financial risk management, machine learning algorithms analyze market trends, economic indicators, and customer data to predict potential losses and suggest strategies for mitigation. This approach helps organizations optimize their risk exposure and make informed investment decisions.

Data Preprocessing

Data preprocessing is the initial step in the machine learning pipeline, where raw data is cleaned, transformed, and formatted to be used for model training. This process involves handling missing data, normalizing and standardizing features, and encoding categorical variables.

Handling Missing Data

Missing data can arise for various reasons, such as non-response, data collection errors, or data degradation over time. Three common ways to handle missing data are mean imputation, median imputation, and deletion.

Mean imputation involves replacing missing values with the mean of the respective feature. This method is commonly used when the data distribution is symmetric and the mean is a good representation of the data.

Mean = (Sum of non-missing values) / (Number of non-missing values)

Median imputation is another method where missing values are replaced with the median of the respective feature. This method is used when the data distribution is skewed or the median is a better representation of the data.

Deletion involves removing rows or columns containing missing values. This method is used when the missing values are sparse and removing them doesn’t significantly affect the data.
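All three strategies can be sketched in plain Python. The sketch assumes missing values are stored as `None`; libraries such as pandas typically use `NaN` instead. The hypothetical income column includes one outlier, which shows why the median can be the better choice for skewed data.

```python
from statistics import mean, median

def impute(values, strategy="mean"):
    """Replace None entries with the mean or median of the non-missing values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed) if strategy == "mean" else median(observed)
    return [fill if v is None else v for v in values]

def drop_missing(rows):
    """Deletion: keep only rows that contain no missing values."""
    return [row for row in rows if None not in row]

incomes = [30, 35, None, 40, 500]      # hypothetical feature: one missing value, one outlier
print(impute(incomes, "mean"))         # [30, 35, 151.25, 40, 500]; the outlier drags the mean up
print(impute(incomes, "median"))       # [30, 35, 37.5, 40, 500]
print(drop_missing([(1, 2), (None, 3), (4, 5)]))  # [(1, 2), (4, 5)]
```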

Data Normalization and Standardization

Data normalization and standardization are techniques used to put features on a comparable scale. Normalization involves scaling features to a specific range, usually between 0 and 1, to prevent features with large ranges from dominating the model.

Standardization, on the other hand, involves scaling features to have a mean of 0 and a standard deviation of 1. This technique helps models learn more efficiently and improves the convergence of optimization algorithms.
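Both scalings can be sketched in a few lines of plain Python. Normalization here is min-max scaling, and standardization uses the population standard deviation. The feature values are hypothetical.

```python
from statistics import mean, pstdev

def min_max_normalize(values):
    """Scale values linearly into the range [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Shift to mean 0 and scale to (population) standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

ages = [20, 30, 40, 50, 60]        # hypothetical feature
print(min_max_normalize(ages))     # [0.0, 0.25, 0.5, 0.75, 1.0]
print(standardize(ages))           # symmetric values around 0, roughly -1.41 to 1.41
```

In practice, the minimum, maximum, mean, and standard deviation should be computed on the training data only. They are then reused to transform validation and test data.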

Encoding Categorical Variables

Categorical variables are features that have a limited number of distinct values. Encoding categorical variables involves transforming these values into numerical representations to be used in models.

One-hot encoding is a method where each category is represented by a binary vector. For example, if a categorical variable has 3 possible values, one-hot encoding represents each value as a 3-element vector. The vector has a 1 at that value’s index and 0s elsewhere.

One-hot encoding example:

| Value | One-hot Encoding |
| --- | --- |
| A | [1, 0, 0] |
| B | [0, 1, 0] |
| C | [0, 0, 1] |

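Label encoding is another method where each category is represented by a unique integer. This method is simpler and more compact than one-hot encoding. However, it implies an order among the categories (for example, that C is "greater" than A). That implied order can mislead models such as linear regression, which treat the codes as quantities. Tree-based models are usually less affected.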
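Both encodings can be sketched as small helper functions using the ‘Color’ values (‘Red’, ‘Blue’, ‘Green’). The function names are hypothetical. The label codes start at 1 to match that example; scikit-learn’s `LabelEncoder`, for instance, assigns codes from 0 in sorted order.

```python
def one_hot_encode(values, categories):
    """Map each value to a binary vector with a single 1 at its category's index."""
    index = {c: i for i, c in enumerate(categories)}
    return [[1 if index[v] == i else 0 for i in range(len(categories))] for v in values]

def label_encode(values, categories):
    """Map each value to an integer code, starting at 1 (hypothetical convention)."""
    return [categories.index(v) + 1 for v in values]

colors = ["Red", "Blue", "Green"]
print(one_hot_encode(["Blue", "Red"], colors))  # [[0, 1, 0], [1, 0, 0]]
print(label_encode(["Blue", "Red"], colors))    # [2, 1]
```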

Supervised Learning Algorithms

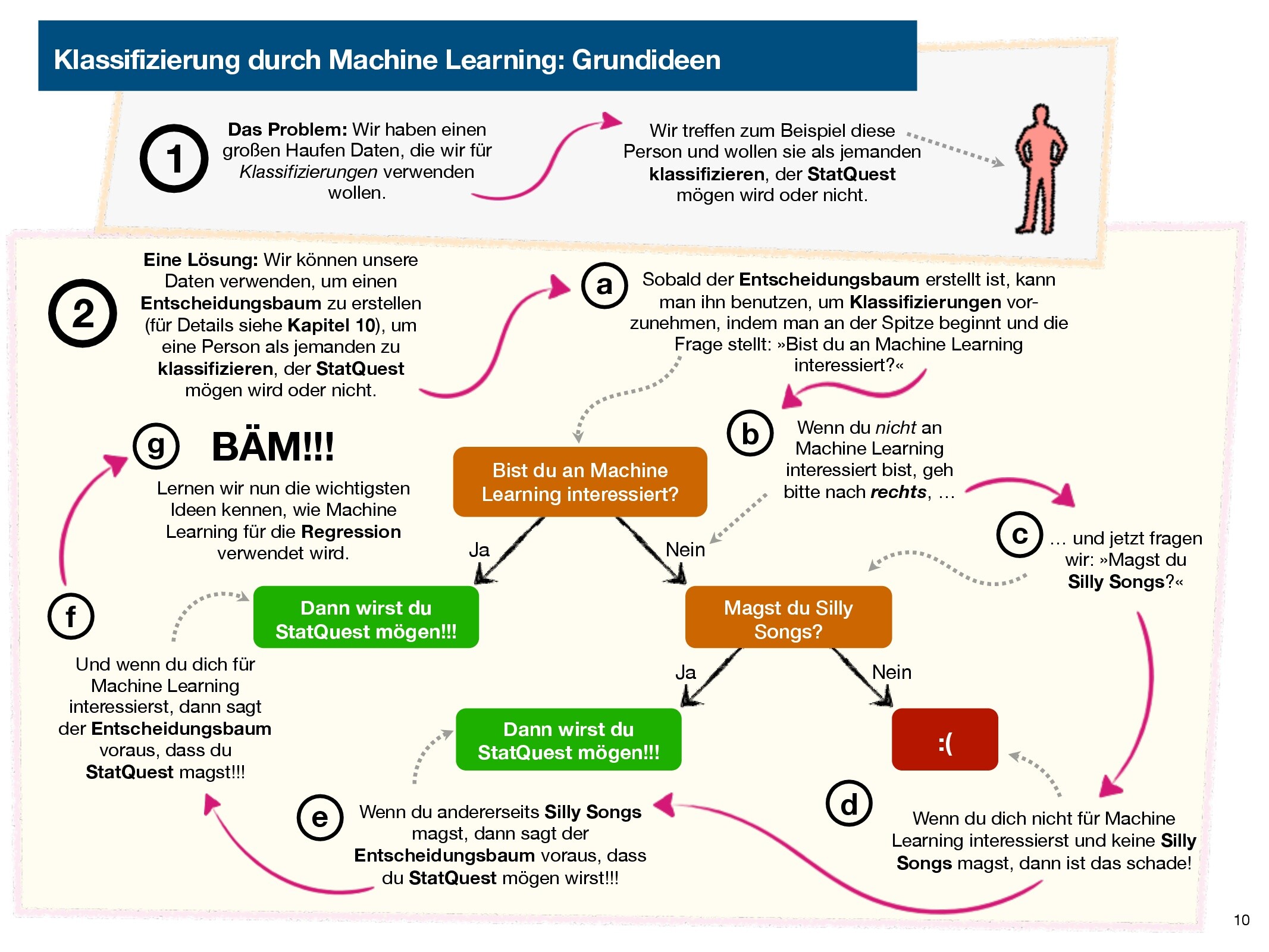

Supervised learning is a type of machine learning where the algorithm is trained on labeled data, where the correct output is already known. This enables the model to learn the relationship between inputs and outputs, allowing it to make predictions on new, unseen data. Supervised learning algorithms are widely used in various fields, including image classification, natural language processing, and recommendation systems. In this section, we will explore three popular supervised learning algorithms: decision trees, random forests, and support vector machines.

Decision Trees

A decision tree is a supervised learning algorithm that works by recursively partitioning the data into smaller subsets based on the features. It starts by choosing the feature (and threshold) that best splits the data. It then recursively splits each subset until a stopping criterion is met, such as a maximum depth. The model makes predictions by traversing the tree from the root node to a leaf node. For classification, the prediction is the majority class of the training samples in that leaf; for regression, it is usually their average.

Decision trees are often used for classification problems, but can also be used for regression problems.
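The best split can be found by scoring every candidate threshold on every feature by the weighted Gini impurity of the two resulting subsets. Gini impurity is an assumption here: it is the default criterion in CART-style trees, and entropy is a common alternative. The pass/fail data is hypothetical. A full tree would apply `best_split` recursively to each subset until a stopping criterion is met.

```python
def gini(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = {}
    for y in labels:
        counts[y] = counts.get(y, 0) + 1
    return 1.0 - sum((c / n) ** 2 for c in counts.values())

def best_split(rows, labels):
    """Return (feature_index, threshold, weighted_impurity) for the best split 'x[f] <= t'."""
    best = None
    n = len(rows)
    for f in range(len(rows[0])):
        for t in sorted({row[f] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[f] <= t]
            right = [y for row, y in zip(rows, labels) if row[f] > t]
            if not left or not right:
                continue  # a split must send samples to both sides
            score = (len(left) * gini(left) + len(right) * gini(right)) / n
            if best is None or score < best[2]:
                best = (f, t, score)
    return best

# Hypothetical data: (hours studied, hours slept) -> exam result.
rows = [(1, 8), (2, 7), (3, 6), (6, 5), (7, 8), (8, 7)]
labels = ["fail", "fail", "fail", "pass", "pass", "pass"]
print(best_split(rows, labels))  # (0, 3, 0.0): "hours studied <= 3" separates the classes perfectly
```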

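The advantages of decision trees include being easy to interpret, since the tree is a visual record of every decision. They can also capture non-linear relationships, and some implementations handle missing values directly.

However, decision trees also tend to overfit the training data, and they can change substantially when the training data changes slightly.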

Random Forests

A random forest is an ensemble learning algorithm that combines multiple decision trees to improve the model’s performance. Each tree is trained on a random bootstrap sample of the data and considers only a random subset of features at each split. The trees’ predictions are then combined, by majority vote for classification or by averaging for regression, to produce the final output. Random forests cope well with outliers and high-dimensional data, and some implementations also handle missing values. These properties make them a popular choice for many applications.

Random forests are often used for classification and regression problems.

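The advantages of random forests include improved accuracy and less overfitting than a single tree, because the predictions of many trees are combined. They also handle high-dimensional data well, because each split considers only a random subset of the features.

However, random forests can be computationally expensive on large datasets and are harder to interpret than a single decision tree.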

Support Vector Machines

A support vector machine (SVM) is a supervised learning algorithm that works by finding the hyperplane that maximally separates the classes in the feature space. It works by solving a quadratic programming problem to find the optimal hyperplane that minimizes the error and maximizes the margin between the classes. The SVM model can handle non-linear relationships by using a kernel function to map the data into a higher-dimensional space.

SVMs are often used for classification problems, but can also be used for regression problems.
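As a simplified illustration, the code below trains a linear soft-margin SVM by subgradient descent on the hinge loss instead of solving the quadratic program directly. It does not use a kernel, so it only finds linear boundaries. The learning rate, regularization strength `lam`, number of epochs, and toy data are all assumptions chosen for illustration.

```python
def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=200):
    """Soft-margin linear SVM trained by full-batch subgradient descent.

    Minimizes lam/2 * ||w||^2 + mean(max(0, 1 - y_i * (w . x_i + b))).
    Labels in y must be -1 or +1.
    """
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]          # gradient of the regularization (margin) term
        grad_b = 0.0
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            if margin < 1:                        # sample violates the margin: hinge loss is active
                grad_w = [g - yi * xj / n for g, xj in zip(grad_w, xi)]
                grad_b -= yi / n
        w = [wj - lr * g for wj, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def predict(w, b, x):
    """Classify by which side of the hyperplane w . x + b = 0 the point falls on."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1

# Hypothetical, linearly separable toy data.
X = [(2, 1), (1, 2), (2, 2), (-2, -1), (-1, -2), (-2, -2)]
y = [1, 1, 1, -1, -1, -1]
w, b = train_linear_svm(X, y)
print(w, b, [predict(w, b, x) for x in X])
```

Libraries such as scikit-learn use dedicated solvers and support kernels. This sketch only shows what is being optimized: a large margin (small `w`) traded off against margin violations.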

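The advantages of SVMs include good generalization to new data and the ability to model non-linear relationships through kernel functions. They also have some robustness to outliers, because the decision boundary depends only on the support vectors.

However, SVMs are sensitive to hyperparameters such as the choice of kernel and the regularization parameter, and training can be slow on large datasets.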

Unsupervised Learning Algorithms

In the realm of machine learning, unsupervised learning algorithms are designed to uncover hidden patterns and relationships within data without any prior knowledge of the correct output. This approach allows for the discovery of new insights and understanding of complex data distributions.

Clustering Algorithms: K-Means and Hierarchical Clustering

Unsupervised learning algorithms can be broadly categorized into clustering and dimensionality reduction techniques. Clustering algorithms, in particular, are useful for grouping similar data points into meaningful categories. Two popular clustering algorithms are k-means and hierarchical clustering.

K-Means Clustering

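K-means clustering is an iterative algorithm that partitions the data into k clusters, where each cluster is represented by a centroid or mean value. It starts from an initial set of k centroids and assigns each data point to its nearest centroid. It then moves each centroid to the mean of the points assigned to it. These two steps repeat until the assignments stop changing or another stopping criterion is met.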

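K-means clustering can be formalized as minimizing the within-cluster sum of squared distances:

$$\min_{C_1, \dots, C_k} \sum_{j=1}^{k} \sum_{x_i \in C_j} \lVert x_i - \mu_j \rVert^2$$

where $x_i$ is a data point, $C_j$ is the $j$-th cluster, and $\mu_j$ is the centroid (mean) of cluster $C_j$.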
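A minimal sketch of Lloyd’s algorithm, the standard iterative method for k-means, follows. It picks initial centroids, assigns every point to its nearest centroid, moves each centroid to the mean of its points, and repeats until nothing changes. The sketch assumes points are tuples of numbers and picks the initial centroids at random from the data. The two-group dataset is hypothetical, and `math.dist` requires Python 3.8 or later.

```python
import math
import random

def kmeans(points, k, max_iters=100, seed=0):
    """Lloyd's algorithm: returns (centroids, clusters) for a list of coordinate tuples."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)                  # pick k data points as initial centroids
    for _ in range(max_iters):
        # Assign every point to the cluster with the nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda j: math.dist(p, centroids[j]))
            clusters[nearest].append(p)
        # Move each centroid to the mean of its points (keep it if its cluster is empty).
        new_centroids = [
            tuple(sum(axis) / len(c) for axis in zip(*c)) if c else centroids[j]
            for j, c in enumerate(clusters)
        ]
        if new_centroids == centroids:                 # converged: centroids no longer move
            break
        centroids = new_centroids
    return centroids, clusters

# Hypothetical data: two well-separated groups of three points each.
points = [(1, 1), (1.5, 2), (1, 0.5), (8, 8), (9, 8.5), (8.5, 9)]
centroids, clusters = kmeans(points, k=2)
print(sorted(centroids))  # one centroid near (1.17, 1.17), the other at (8.5, 8.5)
```

Because the result depends on the initial centroids, practical implementations usually run several random starts or use a smarter initialization such as k-means++.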

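The k-means algorithm’s main advantages are that it is easy to implement and scales to large datasets, since each data point only needs to be compared with the k centroids.

However, k-means is sensitive to the initial placement of the centroids, which can trap it in a local optimum. It is also sensitive to outliers, which pull centroids away from the bulk of a cluster. Finally, it offers no guarantee of a unique or meaningful clustering.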

Examples of scenarios where k-means clustering would be used include:

– Customer segmentation: grouping customers based on their buying behavior and demographics.

– Image compression: clustering similar pixel colors so that an image can be stored using a palette of only k colors.

Hierarchical Clustering

Hierarchical clustering, on the other hand, builds a hierarchy of clusters by merging or splitting existing clusters. This approach can be visualized as a dendrogram, which represents the cluster tree. There are two main types of hierarchical clustering: agglomerative and divisive.
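The following is a minimal sketch of agglomerative clustering. It assumes single linkage, where the distance between two clusters is the distance between their closest members; complete and average linkage are common alternatives. Every point starts as its own cluster, and the closest pair is merged until `n_clusters` remain. The recorded merges hold the information a dendrogram draws. The data is hypothetical, and the brute-force search is only suitable for small datasets.

```python
import math
from itertools import combinations

def single_linkage(c1, c2):
    """Distance between two clusters = distance between their closest members."""
    return min(math.dist(a, b) for a in c1 for b in c2)

def agglomerative(points, n_clusters):
    """Merge the closest pair of clusters until n_clusters remain; also return the merge history."""
    clusters = [[p] for p in points]                   # every point starts as its own cluster
    merges = []                                        # merge history: what a dendrogram draws
    while len(clusters) > n_clusters:
        i, j = min(combinations(range(len(clusters)), 2),
                   key=lambda pair: single_linkage(clusters[pair[0]], clusters[pair[1]]))
        merges.append((clusters[i], clusters[j]))
        clusters[i] = clusters[i] + clusters[j]        # merge cluster j into cluster i
        del clusters[j]                                # safe: combinations yields i < j
    return clusters, merges

points = [(0, 0), (0, 1), (1, 0), (8, 8), (8, 9)]     # hypothetical data
clusters, merges = agglomerative(points, n_clusters=2)
print(clusters)  # [[(0, 0), (0, 1), (1, 0)], [(8, 8), (8, 9)]]
```

Setting `n_clusters=1` runs the merging all the way to a single cluster. The full `merges` list then describes the complete dendrogram, which can be cut at any level afterwards.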

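Hierarchical clustering has the advantage of providing a hierarchical representation of the data, which allows exploration at different levels of granularity. It can also capture subtle local structure.

However, it is more computationally expensive than k-means, especially for large datasets, and its results can be harder to interpret.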

Examples of scenarios where hierarchical clustering would be used include:

– Gene expression analysis: grouping genes based on their expression levels within different tissues.

– Network community detection: identifying clusters of highly interconnected nodes in a network.

Dimensionality Reduction: Principal Component Analysis (PCA)

Principal Component Analysis (PCA) is a dimensionality reduction technique that transforms the data into a new coordinate system with the axes representing the principal components. This approach captures most of the variance in the data along the first few axes.
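The procedure for the first principal component can be sketched in plain Python: center the data, build the covariance matrix, find its top eigenvector, and project. Power iteration, a simple method that repeatedly multiplies a vector by the covariance matrix, stands in for a full eigendecomposition; this is an assumption for brevity. Libraries such as scikit-learn compute PCA with a singular value decomposition instead. Power iteration can fail if the starting vector happens to be orthogonal to the top eigenvector.

```python
import math

def first_principal_component(data, iters=100):
    """Return (unit direction, eigenvalue, projections) for the top principal component."""
    n, d = len(data), len(data[0])
    means = [sum(row[j] for row in data) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in data]    # center each feature
    # Sample covariance matrix (d x d) of the centered data.
    cov = [[sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(d)] for a in range(d)]
    # Power iteration: multiplying by cov repeatedly pulls v toward the top eigenvector.
    v = [1.0] * d
    for _ in range(iters):
        w = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # The Rayleigh quotient v^T cov v is the eigenvalue: the variance along v.
    eigenvalue = sum(v[a] * cov[a][b] * v[b] for a in range(d) for b in range(d))
    projections = [sum(r[j] * v[j] for j in range(d)) for r in centered]   # 1-D coordinates
    return v, eigenvalue, projections

# Hypothetical 2-D data that varies mostly along the x-axis.
data = [(-2, 0.1), (-1, -0.1), (0, 0), (1, 0.1), (2, -0.1)]
direction, variance, coords = first_principal_component(data)
print(direction, variance)  # direction is close to (1, 0)
```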

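PCA has the advantage of reducing the dimensionality of the data, which makes it easier to analyze, while preserving as much of its variance as possible.

However, discarding components loses information, so the original distribution and relationships are not guaranteed to survive. Outliers and noise can also distort the computed principal components.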

Examples of scenarios where PCA would be used include:

– Image feature extraction: reducing the dimensionality of image data to improve classification performance.

– Stock portfolio optimization: capturing the underlying relationships between different stocks and reducing the dimensionality of the data.

Neural Networks

Neural networks represent a significant leap in machine learning. They are loosely inspired by the structure of the human brain and consist of interconnected nodes (neurons) that process and transmit information. Networks of these nodes can learn complex patterns directly from data, which has made them central to modern artificial intelligence.

Feedforward Neural Networks

Feedforward neural networks are one of the most basic types of neural networks. Information flows in one direction only, from the input layer through the hidden layers to the output layer, with no loops. Each node computes a weighted sum of its inputs, applies a non-linear transformation (an activation function), and passes the result to the next layer.

A simple example of a feedforward neural network is a multi-layer perceptron, which can be thought of as a series of connected layers of neurons. The first layer, the input layer, receives the data, while the last layer, the output layer, produces the result. In between, there can be any number of hidden layers.
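To make the layer-by-layer flow concrete, consider a forward pass through a tiny multi-layer perceptron with two inputs, two ReLU hidden units, and one output. The weights are hand-picked for illustration; in practice they are learned with backpropagation. They make the network compute XOR, a function no single linear layer can represent, which shows why the non-linear activation matters.

```python
def relu(z):
    return max(0.0, z)

def dense(inputs, weights, biases, activation=None):
    """One fully connected layer: out_j = activation(sum_i inputs[i] * weights[j][i] + biases[j])."""
    outputs = []
    for w_row, b in zip(weights, biases):
        z = sum(x * w for x, w in zip(inputs, w_row)) + b
        outputs.append(activation(z) if activation else z)
    return outputs

# Hand-picked (not trained) weights for a 2-2-1 network that computes XOR.
HIDDEN_W, HIDDEN_B = [[1.0, 1.0], [1.0, 1.0]], [0.0, -1.0]
OUTPUT_W, OUTPUT_B = [[1.0, -2.0]], [0.0]

def mlp_xor(x1, x2):
    hidden = dense([x1, x2], HIDDEN_W, HIDDEN_B, relu)   # input layer -> hidden layer
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]          # hidden layer -> output layer

for a in (0, 1):
    for b in (0, 1):
        print(a, b, mlp_xor(a, b))  # 0.0, 1.0, 1.0, 0.0
```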

Convolutional Neural Networks

Convolutional neural networks (CNNs) are designed for grid-like data such as images and video. They use a series of convolutional and pooling layers to extract features from the input data. The convolutional layers slide small learned filters across the data to detect features such as edges. The pooling layers then reduce the spatial size of the resulting feature maps.

The key advantage of CNNs is their ability to learn spatial hierarchies of features from images, from edges to shapes to whole objects. This helps them recognize objects even when they appear in different positions in an image. A classic example is LeNet-5, a convolutional neural network that uses convolutional and pooling layers to classify handwritten digits.
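The two core operations can be sketched in plain Python. The first is a "valid" 2-D convolution, implemented as cross-correlation, which is what most deep learning libraries call convolution. The second is non-overlapping 2×2 max pooling. The image and the edge-detecting kernel are hypothetical; in a real CNN, the kernel values are learned.

```python
def conv2d(image, kernel):
    """'Valid' 2-D convolution (cross-correlation): slide the kernel over the image."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

def max_pool(feature_map, size=2):
    """Non-overlapping max pooling: keep the largest value in each size x size block."""
    return [[max(feature_map[i + a][j + b] for a in range(size) for b in range(size))
             for j in range(0, len(feature_map[0]) - size + 1, size)]
            for i in range(0, len(feature_map) - size + 1, size)]

# Hypothetical 5x5 image: dark (0) columns on the left, bright (1) columns on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
vertical_edge = [[-1, 1], [-1, 1]]       # responds where brightness increases left to right
features = conv2d(image, vertical_edge)  # 4x4 map; every row is [0, 2, 0, 0]
pooled = max_pool(features)              # 2x2 map: [[2, 0], [2, 0]]
print(features)
print(pooled)
```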

Recurrent Neural Networks

Recurrent neural networks (RNNs) are designed to handle sequential data. Their recurrent connections carry a hidden state from one time step to the next, so the output at each step can depend on earlier inputs in the sequence.

RNNs are particularly useful for natural language processing, where the words in a sentence form a sequence. A basic example is a simple (Elman) recurrent network with a single recurrent layer that predicts the next word in a sentence from the previous words.
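A recurrent step can be sketched with one hidden unit and scalar inputs, assuming a tanh activation and hypothetical weights. A real next-word model would use vectors (such as word embeddings), weight matrices, and learned parameters. The point is that the hidden state carries information forward, so later outputs depend on earlier inputs even when the current input is zero.

```python
import math

def simple_rnn(inputs, w_x, w_h, b):
    """One-unit simple RNN: h_t = tanh(w_x * x_t + w_h * h_(t-1) + b), starting from h_0 = 0."""
    h = 0.0
    states = []
    for x in inputs:
        h = math.tanh(w_x * x + w_h * h + b)   # the new state mixes the input with the previous state
        states.append(h)
    return states

# Hypothetical weights: a single input "pulse" followed by two zero inputs.
states = simple_rnn([1.0, 0.0, 0.0], w_x=1.0, w_h=0.5, b=0.0)
print(states)  # the pulse's influence persists, decaying, after the input returns to 0
```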

Benefits and Challenges of Neural Networks

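The benefits of neural networks include the ability to learn from large datasets without being explicitly programmed and to handle complex relationships between variables. They can also make predictions and decisions based on patterns in the data.

However, neural networks also have several challenges. It can be difficult to understand how they reach their decisions, they typically require large amounts of training data, and they are prone to overfitting.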

Scenarios Where Neural Networks Would Be Used

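Neural networks are particularly useful when there is a need to recognize patterns in large amounts of data or to make predictions or decisions based on complex relationships between variables. They also help when a task demands higher accuracy in classification or regression.

Examples of such scenarios include image classification (sorting images into categories such as animals, vehicles, or buildings) and natural language processing with RNNs (for chatbots and language translation). Another is predictive maintenance: predicting when equipment is likely to fail so that it can be serviced before it breaks down.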

Real-World Applications of Neural Networks

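Neural networks have a wide range of real-world applications. They power image recognition in facial recognition systems, self-driving cars, and medical imaging, and language applications such as chatbots, translation systems, and sentiment analysis. They also support predictive maintenance in industries such as manufacturing, healthcare, and finance.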

Model Evaluation and Selection

Model evaluation and selection are crucial steps in the machine learning workflow. They help ensure that the chosen model is accurate, reliable, and generalizable to unseen data. This section covers why model evaluation matters, the key metrics used to compare models, and strategies for selecting the optimal model.

Metrics for Model Evaluation

When evaluating a machine learning model, we typically use a set of metrics to measure its performance. These metrics are essential for understanding how well the model is doing on a particular task. Here are some common metrics used in model evaluation:

Mean Squared Error (MSE) measures the average squared difference between predicted and actual values. It is calculated as the sum of the squared differences between predicted and actual values, divided by the number of samples $n$. The formula for MSE is:

$$\mathrm{MSE} = \frac{1}{n} \sum_{i=1}^{n} \left(\hat{y}_i - y_i\right)^2$$

where $\hat{y}_i$ is the predicted value and $y_i$ is the actual value for sample $i$.

Mean Absolute Error (MAE) measures the average absolute difference between predicted and actual values. It is calculated as the sum of the absolute differences between predicted and actual values, divided by the number of samples. The formula for MAE is:

$$\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left|\hat{y}_i - y_i\right|$$

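R-Squared (R²), also known as the coefficient of determination, measures the proportion of variance in the dependent variable that is explained by the independent variable(s). It is calculated as 1 minus the ratio of the sum of squared residuals to the total sum of squares:

$$R^2 = 1 - \frac{SS_{\text{res}}}{SS_{\text{tot}}}, \qquad SS_{\text{res}} = \sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2, \qquad SS_{\text{tot}} = \sum_{i=1}^{n} \left(y_i - \bar{y}\right)^2$$

where $\bar{y}$ is the mean of the actual values.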
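The three formulas translate directly into code. The values below are a hypothetical example.

```python
def mse(y_true, y_pred):
    """Mean squared error."""
    return sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(p - t) for t, p in zip(y_true, y_pred)) / len(y_true)

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot

y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.5, 5.0, 7.5, 10.0]
print(mse(y_true, y_pred))        # (0.25 + 0 + 0.25 + 1) / 4 = 0.375
print(mae(y_true, y_pred))        # (0.5 + 0 + 0.5 + 1) / 4 = 0.5
print(r_squared(y_true, y_pred))  # 1 - 1.5 / 20 = 0.925
```

MSE penalizes large errors more heavily than MAE because the differences are squared. This is why the single error of 1.0 contributes most of the MSE here.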

In addition to these metrics, we also use cross-validation to evaluate our model’s performance. Cross-validation involves splitting our dataset into training and validation sets, training our model on the training set, and evaluating its performance on the validation set. This process is repeated multiple times, with different subsets of the data used for training and validation each time. By averaging the performance metrics across all iterations, we get a more accurate estimate of our model’s performance on unseen data.
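The following is a minimal sketch of k-fold cross-validation. It assumes the data is already shuffled, because the folds here are contiguous. The `fit` and `score` arguments are hypothetical caller-supplied functions. The usage example plugs in a baseline that always predicts the mean of the training targets, scored by MSE.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for k contiguous folds of near-equal size."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, validation
        start += size

def cross_validate(fit, score, X, y, k=5):
    """Train on k-1 folds, score on the held-out fold, and average the k scores."""
    scores = []
    for train, validation in k_fold_indices(len(X), k):
        model = fit([X[i] for i in train], [y[i] for i in train])
        scores.append(score(model, [X[i] for i in validation], [y[i] for i in validation]))
    return sum(scores) / len(scores)

# Hypothetical baseline "model": always predict the mean of the training targets.
def fit_mean(X_train, y_train):
    return sum(y_train) / len(y_train)

def mse_score(model, X_val, y_val):
    return sum((model - t) ** 2 for t in y_val) / len(y_val)

X = [[i] for i in range(10)]
y = [2.0 * i for i in range(10)]
print(cross_validate(fit_mean, mse_score, X, y, k=5))
```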

Overfitting Detection and Hyperparameter Tuning

Overfitting occurs when a model is too complex and fits the noise in the training data rather than the underlying patterns. To detect overfitting, we can plot the training and validation accuracy (or loss) over the course of training. If training performance keeps improving while validation performance stalls or gets worse, the model is probably overfitting.

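Hyperparameter tuning involves adjusting the model’s hyperparameters to optimize its performance. Hyperparameters are settings chosen before training, such as a tree’s maximum depth or a learning rate, rather than values learned from the data. Tuning is typically done with techniques like grid search or random search. These methods try different combinations of hyperparameters and select the combination that yields the best performance on validation data.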

Comparing Models

When comparing models, we need to consider several factors, including accuracy, complexity, and interpretability.

We also need to decide how to select the best model for a given problem. This involves weighing the trade-offs between different models and selecting the one that’s most suitable for the task at hand.

Selecting the Optimal Model

When selecting the optimal model, we again balance accuracy against complexity and interpretability.

We can use the metrics described above to compare the performance of different models. Choosing the model that performs best on validation data, rather than on the training data, makes it more likely that the model will be accurate, reliable, and generalizable to unseen data.

Advanced Techniques

Advanced techniques in machine learning are essential for solving complex problems and improving the accuracy of models. In this section, we will discuss three advanced techniques: ensemble methods, transfer learning, and deep learning.

Ensemble Methods

Ensemble methods combine the predictions of multiple models to improve overall accuracy. Common ways to build and combine the models are bagging, boosting, and stacking.

Bagging, short for Bootstrap Aggregating, involves training multiple models on different bootstrap samples of the dataset (random samples drawn with replacement). Their predictions are then combined, for example by majority vote. This helps to reduce overfitting and makes the model more robust. The Random Forest algorithm is a well-known example: it trains multiple decision trees on different bootstrap samples of the dataset.

For example, let’s say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can train multiple decision trees on different subsets of the dataset, and then combine their predictions to improve the accuracy of the model.
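The following is a minimal bagging sketch. For brevity, it uses one-split "stumps" on a single numeric feature as the base model instead of full decision trees, and the dataset is hypothetical. Each stump is trained on a bootstrap sample, and the ensemble predicts by majority vote. A random forest would also pick a random subset of features at each split, which this one-feature example cannot show.

```python
import random
from collections import Counter

def fit_stump(xs, ys):
    """Base model: the threshold t that best separates labels 0/1 when predicting 1 for x > t."""
    best_t, best_err = None, None
    for t in sorted(set(xs)):
        err = sum((x > t) != (y == 1) for x, y in zip(xs, ys))
        if best_err is None or err < best_err:
            best_t, best_err = t, err
    return best_t

def bagging(xs, ys, n_models=25, seed=0):
    """Fit one stump per bootstrap sample (n draws with replacement)."""
    rng = random.Random(seed)
    n = len(xs)
    thresholds = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]     # bootstrap sample of row indices
        thresholds.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return thresholds

def predict_bagged(thresholds, x):
    """Aggregate step: majority vote of the stumps' 0/1 predictions."""
    votes = Counter(int(x > t) for t in thresholds)
    return votes.most_common(1)[0][0]

xs = [1, 2, 3, 4, 5, 6, 7, 8]                           # hypothetical feature values
ys = [0, 0, 0, 0, 1, 1, 1, 1]                           # hypothetical labels
model = bagging(xs, ys)
print(predict_bagged(model, 1.5), predict_bagged(model, 7.5))
```

An odd number of models avoids ties in the two-class vote.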

Boosting

Boosting involves training models one after another and combining their predictions using a weighted sum. Each new model focuses on the examples that the previous models got wrong. In AdaBoost, for example, training examples misclassified in one round receive higher weights in the next round. Each model’s weight in the final sum is larger when its error on the weighted training data is lower. By concentrating on the hardest examples, boosting can turn many weak models into a strong one.

For example, let’s say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can train a sequence of small decision trees, each paying more attention to the images that the previous trees misclassified. We then combine their predictions using a weighted sum in which more accurate trees receive larger weights.
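The reweighting idea can be sketched as a small AdaBoost implementation. It assumes one-split stumps on a single feature and labels of -1 and +1. The dataset is hypothetical and was chosen so that no single stump can classify it correctly, because the positive class sits on both sides of the negative class. A weighted vote of three stumps, however, classifies it perfectly.

```python
import math

def stump_predict(x, t, sign):
    """A one-split stump: predicts `sign` when x > t, otherwise -sign."""
    return sign if x > t else -sign

def fit_weighted_stump(xs, ys, weights):
    """Return (weighted_error, threshold, sign) of the stump with the lowest weighted error."""
    best = None
    for t in sorted(set(xs)):
        for sign in (1, -1):
            err = sum(w for x, y, w in zip(xs, ys, weights) if stump_predict(x, t, sign) != y)
            if best is None or err < best[0]:
                best = (err, t, sign)
    return best

def adaboost(xs, ys, rounds=3):
    """AdaBoost with stumps; labels in ys must be -1 or +1."""
    n = len(xs)
    weights = [1.0 / n] * n                            # every sample starts with equal weight
    ensemble = []
    for _ in range(rounds):
        err, t, sign = fit_weighted_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)          # guard against log(0) and division by zero
        alpha = 0.5 * math.log((1 - err) / err)        # lower weighted error -> larger say in the vote
        ensemble.append((alpha, t, sign))
        # Misclassified samples (y * prediction = -1) are scaled up by e^alpha, correct ones down.
        weights = [w * math.exp(-alpha * y * stump_predict(x, t, sign))
                   for x, y, w in zip(xs, ys, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def predict_adaboost(ensemble, x):
    """Sign of the alpha-weighted sum of the stumps' votes."""
    score = sum(alpha * stump_predict(x, t, sign) for alpha, t, sign in ensemble)
    return 1 if score >= 0 else -1

# Hypothetical data: the positive class sits on both sides of the negative class.
xs = [1, 2, 3, 4, 5, 6]
ys = [1, 1, -1, -1, 1, 1]
model = adaboost(xs, ys, rounds=3)
print([predict_adaboost(model, x) for x in xs])  # [1, 1, -1, -1, 1, 1]
```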

Stacking

Stacking involves training multiple models on the same dataset and combining their predictions using a meta-model. The meta-model is trained on the predictions of the multiple models, rather than the original dataset. This helps to improve the accuracy of the model by combining the strengths of multiple models.

For example, let’s say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can train multiple decision trees on the same dataset, and then combine their predictions using a meta-model. The meta-model is trained on the predictions of the multiple decision trees, rather than the original dataset.

Transfer Learning

Transfer learning involves using a pre-trained model as a starting point for a new model. This helps to speed up the training process and improve the accuracy of the model, by leveraging the knowledge learned from the pre-trained model. Fine-tuning a pre-trained model involves adjusting the weights of the model to fit the new dataset.

For example, let’s say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can use a pre-trained model, such as VGG16, as a starting point for our model. We can fine-tune the weights of the pre-trained model to fit our dataset, rather than training a new model from scratch.

VGG16 is a convolutional neural network architecture. Versions pre-trained on ImageNet, a large dataset of labeled images, are widely available.

Deep Learning

Deep learning involves using neural networks with many layers to learn complex patterns in data. Each layer builds on the features learned by the previous one. A deep network can therefore represent increasingly abstract patterns, such as edges, then shapes, then whole objects in an image.

For example, let’s say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can use a convolutional neural network (CNN) with multiple layers to learn complex patterns in the images.

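Convolutional Neural Networks (CNNs) are a type of neural network that are particularly well-suited to image classification tasks. Their convolutional layers slide small learned filters across the image, so they detect the same local pattern wherever it appears.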
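The core operation inside a CNN layer is a small filter slid across the image. This pure-Python sketch computes a "valid" 2-D convolution on a tiny hypothetical image using a hand-made vertical-edge filter. Strictly speaking it is cross-correlation, which is the operation deep learning libraries implement under the name convolution. In a real CNN, the filter values are learned during training.

```python
def conv2d(image, kernel):
    """'Valid' 2-D convolution (cross-correlation: the kernel is not flipped)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

# Dark left half, bright right half: one vertical edge in the middle.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]
vertical_edge = [[-1, 1],
                 [-1, 1]]  # responds where brightness increases left to right

print(conv2d(image, vertical_edge))  # large values only where the edge is
```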

Implementing Machine Learning Projects

A machine learning project begins with a clear understanding of the problem statement and the gathering of requirements. This involves defining the project scope, identifying the target audience, and determining the expected outcomes. The subsequent steps of data collection, feature engineering, and model building are all crucial for ensuring that the solution meets the project’s objectives. In this chapter, we will delve into the project planning and development process, and explore the various tools and technologies available for implementing machine learning projects.

Project Planning

Project planning is an indispensable step in the machine learning pipeline. It commences with requirements gathering, which entails identifying the problem statement, defining the project scope, and determining the expected outcomes. This process involves stakeholder engagement, data analysis, and the formulation of a project proposal. A well-planned project ensures that the solution meets the project’s objectives and that resources are allocated efficiently.

A clear problem statement and well-defined project scope are essential for ensuring that the solution meets the project’s objectives.

Data Collection

Data collection is a critical step in the machine learning pipeline. It involves determining the type of data required, collecting data from various sources, and preprocessing the data for analysis. There are several types of data that can be used in machine learning, including structured data (e.g., database tables, spreadsheets, CSV files), semi-structured data (e.g., JSON and XML files), and unstructured data (e.g., text, images).

Structured data follows a fixed schema of rows and columns. Semi-structured data carries its own tags or keys but has no fixed schema. Unstructured data lacks a predefined format.
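This short sketch shows how the two machine-readable kinds of data differ in practice, using only Python's standard library (`csv`, `json`). The sample records are hypothetical. Every CSV row shares the same columns. The JSON records can nest fields or omit them, so they are flattened into the same table shape before analysis.

```python
import csv
import io
import json

# Structured: every record has the same columns (hypothetical sample data).
csv_text = "id,species,weight_kg\n1,cat,4.2\n2,dog,21.0\n"
rows = list(csv.DictReader(io.StringIO(csv_text)))
# csv yields strings, so numeric columns are converted explicitly.
table = [{**r, "weight_kg": float(r["weight_kg"])} for r in rows]

# Semi-structured: keys are present, but fields can nest or be missing.
json_text = ('[{"id": 3, "species": "cat", "owner": {"city": "Oslo"}},'
             ' {"id": 4, "species": "dog"}]')
records = json.loads(json_text)

# Flatten the JSON into a fixed set of columns, filling gaps with None.
flat = [{"id": r["id"], "species": r["species"],
         "owner_city": r.get("owner", {}).get("city")} for r in records]

print(table)
print(flat)
```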

Selecting the Right Tools and Technologies

The choice of tools and technologies for a machine learning project depends on various factors, including the project scope, the type of data, and the expertise of the team. Some popular Python libraries and frameworks for machine learning include scikit-learn, TensorFlow, and PyTorch. An Integrated Development Environment (IDE) can also be used to facilitate project development.

The choice of library depends on the project requirements: scikit-learn covers classical algorithms such as linear models, decision trees, and clustering, while TensorFlow and PyTorch are built for designing and training neural networks.

Popular IDEs for Machine Learning

IDEs can be used to facilitate project development by providing features such as code completion, debugging, and project organization. Some popular IDEs for machine learning include Visual Studio Code and PyCharm.

IDEs can improve productivity by providing features such as code completion and debugging.

Ethical Considerations and Responsible Use

In the realm of machine learning, a subtle yet crucial aspect often gets overlooked – ethics. As models become increasingly sophisticated, the risk of unintended consequences grows. It’s essential to acknowledge the responsibility that comes hand-in-hand with these technological advancements.

Data Privacy and Security

Data privacy and security are two sides of the same coin. When it comes to machine learning, securing sensitive data is of paramount importance. This includes safeguarding personal information, medical data, and financial records, among others. Imagine a scenario where sensitive data falls into the wrong hands: the people it describes could face identity theft, fraud, or discrimination. To prevent such a scenario, it’s crucial to implement robust security measures, such as encryption, access controls, and secure data storage. Regulations such as the EU’s GDPR, HIPAA (for US health data), and California’s CCPA set legal requirements for how such data is collected, stored, and shared, and complying with them makes data protection a formal obligation rather than an afterthought.

Algorithmic Bias and Detection

Algorithmic bias is a stealthy enemy that can creep into machine learning models without warning. It arises when a model is trained on biased data or has design flaws, leading to systematically unfair outcomes for some groups. Imagine a recommendation engine that consistently shows one group of users a narrower or lower-quality set of products than everyone else: it’s time to take a closer look. Detecting algorithmic bias requires a combination of human oversight, data analysis, and statistical testing. By recognizing these biases, we can make informed decisions to improve the fairness and accuracy of our models.
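One simple statistical check is to compare how often the model produces the favorable outcome for each group. The sketch below computes per-group selection rates and their ratio on hypothetical predictions. The 0.8 cut-off is a widely used rule of thumb (the "four-fifths rule" from US employment guidelines), not a universal standard. A low ratio is a signal to investigate, not proof of bias.

```python
from collections import defaultdict

def selection_rates(predictions, groups):
    """Share of favorable predictions (1) within each group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    return min(rates.values()) / max(rates.values())

# Hypothetical model outputs (1 = recommended) for two user groups, A and B.
preds = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]

rates = selection_rates(preds, groups)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
if ratio < 0.8:
    print("Possible bias: investigate the training data and features.")
```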

Explainable AI and Model Auditing

Explainable AI (XAI) is a set of methods that aims to make the decision-making process of complex models clear and transparent. By using techniques like feature importance and partial dependence plots, XAI enables us to understand how models arrive at their conclusions. Imagine having the ability to ask your model, “Hey, what led you to recommend this product?” and receiving a clear, concise answer. Model auditing, on the other hand, involves evaluating a model’s performance, identifying areas for improvement, and ensuring it meets business requirements. By combining XAI and model auditing, we can build trust in our models and make informed decisions.
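Feature importance can be estimated without opening the model at all. This sketch implements permutation importance, a model-agnostic technique that is different from SHAP or LIME. It shuffles one feature's column and measures how much accuracy drops. The "black box" model and data are hypothetical. The model secretly ignores feature 1, so that feature's importance comes out as exactly zero.

```python
import random

def accuracy(model, X, y):
    """Fraction of rows the model labels correctly."""
    return sum(model(row) == t for row, t in zip(X, y)) / len(y)

def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Average drop in accuracy when one feature's column is shuffled.
    A large drop means the model relies on that feature."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for f in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[f] for row in X]
            rng.shuffle(column)
            X_perm = [row[:f] + [v] + row[f + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances

# Hypothetical "black box" that, unknown to the user, only looks at feature 0.
def model(row):
    return 1 if row[0] > 0.5 else 0

X = [[0.9, 0.1], [0.8, 0.7], [0.2, 0.9], [0.1, 0.3], [0.7, 0.4], [0.3, 0.6]]
y = [model(row) for row in X]
print(permutation_importance(model, X, y))
```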

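Techniques like SHAP and LIME estimate how much each feature contributed to an individual prediction, while partial dependence plots show how the model’s average prediction changes as one feature varies. Together they give insight into the decision-making process.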
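A partial dependence curve can be computed by hand in two steps. First, set one feature to each value on a grid for every row. Then average the model's predictions at each grid value. The scoring model and rows below are hypothetical. Plotting the returned curve against the grid gives the partial dependence plot.

```python
def partial_dependence(predict, X, feature, grid):
    """Average prediction when `feature` is set to each grid value
    for every row, leaving the other features unchanged."""
    curve = []
    for value in grid:
        modified = [row[:feature] + [value] + row[feature + 1:] for row in X]
        curve.append(sum(predict(r) for r in modified) / len(modified))
    return curve

# Hypothetical scoring model over two features.
def predict(row):
    return 0.5 * row[0] + 0.1 * row[1]

X = [[0.0, 1.0], [1.0, 2.0], [0.5, 0.0]]
print(partial_dependence(predict, X, feature=0, grid=[0.0, 1.0]))
```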

During model auditing, we can identify areas for improvement, such as model performance, feature engineering, and data preprocessing.
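Part of an audit can be automated by checking metrics against agreed requirements. In this sketch, the precision and recall thresholds (0.8) and the labels are hypothetical. A real audit would take its metrics and limits from the business requirements and would usually repeat the check for each data segment.

```python
def audit(y_true, y_pred, min_precision=0.8, min_recall=0.8):
    """Compute precision and recall and list any metric that misses
    its (hypothetical) required minimum."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    report = {"precision": precision, "recall": recall}
    limits = {"precision": min_precision, "recall": min_recall}
    failures = [name for name in report if report[name] < limits[name]]
    return report, failures

y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 0]  # the model misses half of the positives
print(audit(y_true, y_pred))
```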

Last Word

This guide represents a culmination of knowledge, bringing together key concepts, terminology, and expert advice. By the end of this journey, you’ll possess a deeper understanding of machine learning, its benefits, and its potential risks. Whether you’re a seasoned professional or a beginner, the Statquest Illustrated Guide to Machine Learning will equip you with the essentials to succeed in this rapidly evolving field.

FAQ Section

What is machine learning?

Machine learning is a type of artificial intelligence that enables systems to learn from data, making predictions or decisions based on patterns and trends without being explicitly programmed.

What are the types of machine learning?

The main categories of machine learning are supervised, unsupervised, and reinforcement learning. Supervised learning involves training on labeled data, unsupervised learning focuses on discovery of patterns in unlabeled data, and reinforcement learning involves learning through trial and error.

What is the importance of data preprocessing?

Data preprocessing is a crucial step in machine learning that involves handling missing data, normalizing features, and encoding categorical variables to prepare the data for modeling.
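This minimal pure-Python sketch applies the three steps named above: mean imputation, min-max normalization, and one-hot encoding. It runs on a hypothetical numeric column with a missing value and a hypothetical categorical column. In a real project, the mean, minimum, and maximum should be computed on the training data only and then reused for new data.

```python
def impute_mean(values):
    """Replace missing entries (None) with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    mean = sum(observed) / len(observed)
    return [mean if v is None else v for v in values]

def min_max_scale(values):
    """Rescale numbers to the range [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) if hi != lo else 0.0 for v in values]

def one_hot(categories):
    """Turn each category into a 0/1 vector, one column per distinct value."""
    levels = sorted(set(categories))
    return levels, [[1 if c == level else 0 for level in levels] for c in categories]

weights = [4.0, None, 20.0, 12.0]  # hypothetical column with a missing value
species = ["cat", "dog", "dog", "cat"]

filled = impute_mean(weights)      # None -> mean of 4, 20, 12 = 12.0
scaled = min_max_scale(filled)
levels, encoded = one_hot(species)
print(filled, scaled, levels, encoded)
```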