The message "This URL has been excluded from the Wayback Machine" points to a complicated relationship between website owners, archivists, and digital preservation efforts. This article looks at the reasons behind URL exclusion and at its consequences for website archiving and preservation.

From copyright claims to robots.txt rules, we will examine the types of URLs excluded from the Wayback Machine and the causes of each exclusion. The discussion also covers what exclusion means for digital preservation and for researchers who need access to historical online content. For website owners, understanding how archiving works is the first step toward making a site more archivable.

Wayback Machine Exclusion Types and Causes

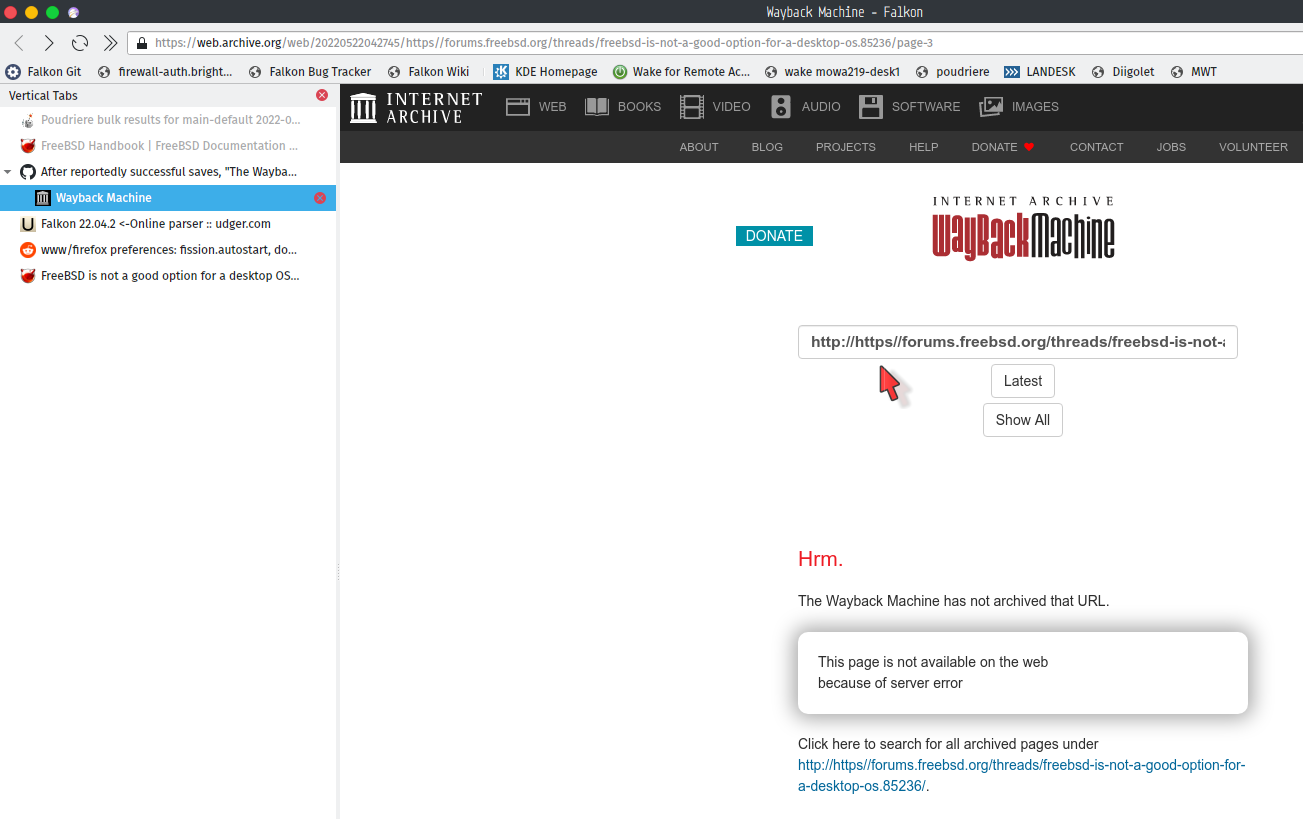

The Wayback Machine, a digital archive maintained by the Internet Archive, excludes certain URLs from its database for a variety of reasons. Understanding these exclusion types and their causes helps ensure that website content is preserved and remains accessible for research and historical purposes.

There are several types of URLs excluded from the Wayback Machine. These include websites with:

Copyright Claims

Websites with copyrighted content may be excluded from the Wayback Machine if their owners submit a DMCA (Digital Millennium Copyright Act) takedown notice or a copyright infringement claim. This is done to protect the intellectual property rights of website owners and prevent unauthorized reproduction of copyrighted materials.

DMCA takedown notices are directed to the Internet Archive's copyright agent; the current contact details are listed in its Terms of Use.

Robots.txt Exclusion

Website owners can ask crawlers to stay away from their site by publishing a robots.txt file with specific instructions. The robots.txt file is a plain-text file that tells web crawlers, including search engine crawlers, which areas of a website should not be crawled or indexed. The Internet Archive's handling of robots.txt has changed over time, so the file should be treated as a request rather than a guaranteed opt-out.

For example, a website owner can add the following lines to their robots.txt file to ask all crawlers to skip the /private/ folder:

User-agent: *
Disallow: /private/
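To see how a crawler that follows the Robots Exclusion Protocol would read rules like these, the sketch below uses Python's standard `urllib.robotparser` module. The user-agent token `ia_archiver` and the example.com URLs are assumptions chosen for illustration; check the Internet Archive's current documentation for the token its crawlers actually use.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content (illustrative): all crawlers are asked
# to stay out of the /private/ folder.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() accepts an iterable of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # "ia_archiver" is an assumed crawler token, used here only as an example.
    for path in ("/private/report.html", "/blog/post.html"):
        url = "https://example.com" + path
        print(path, is_allowed(ROBOTS_TXT, "ia_archiver", url))
```

Running the same check against your own robots.txt before publishing it is a cheap way to confirm that the rules block only what you intend.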

Paywall and Subscription-Based Content

Some websites require users to log in or pay for access to certain content. A crawler generally cannot get past a login or paywall, so this content may be missing from the Wayback Machine, and archiving it could also conflict with the site's terms of service.

Owner-Requested Exclusions

Websites with high traffic or sensitive information may be voluntarily excluded from the Wayback Machine by their owners. This is often done to prevent unauthorized access to confidential data or intellectual property.

- A high-traffic website may choose to exclude itself from the Wayback Machine to limit the extra load that archive crawlers place on its servers.

- Websites handling sensitive information, such as financial data or confidential communications, may also choose to exclude themselves to prevent potential security breaches.

Voluntary Exclusions vs. Involuntary Exclusions

Voluntary exclusions are initiated by website owners, often because of copyright or security concerns. Involuntary exclusions, on the other hand, occur when technical limitations or access restrictions prevent archiving.

- Voluntary exclusions are usually made through DMCA takedown notices or robots.txt files.

- Involuntary exclusions can result from paywalls, login requirements, or other restrictions that keep a crawler from reaching the content.

Technical and Policy Aspects of Exclusion

The Wayback Machine exclusion mechanism is a central part of web archiving: it lets content owners keep certain URLs from being crawled and archived. This raises important technical and policy considerations.

On the technical side, exclusion relies on the Robots Exclusion Protocol (REP) and on meta tags. Under the REP, a robots.txt file in the root directory of a website lists directives stating which sections of the site may be crawled and which are off limits. Meta tags, by contrast, are embedded in the HTML of an individual page and apply to that page only.

Technical Aspects of Exclusion

- The Robots Exclusion Protocol (REP) is implemented through a robots.txt file that resides in the root directory of a website and contains directives that specify which sections of the site should be crawled and which should be excluded.

- Meta tags can be used to exclude specific pages from crawling and archiving.

- HTTP response header directives can carry the same instructions for specific URLs, which is useful for files that have no HTML to hold a meta tag.
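As a rough illustration of the page-level mechanisms listed above, the following sketch collects robots directives from a page's `<meta name="robots">` tags and from an `X-Robots-Tag` response header, using only Python's standard library. The `noarchive` directive and the `X-Robots-Tag` header are widely used conventions, but whether any particular archive crawler honors them is an assumption you would need to verify; the function and class names are hypothetical.

```python
from html.parser import HTMLParser
from typing import Mapping, Optional, Set


def _split_directives(value: str) -> Set[str]:
    """Split a comma-separated directive string into lowercase tokens."""
    return {part.strip().lower() for part in value.split(",") if part.strip()}


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: Set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            self.directives |= _split_directives(attr.get("content") or "")


def robots_directives(html: str, headers: Optional[Mapping[str, str]] = None) -> Set[str]:
    """Return every robots directive found in the HTML and in the headers."""
    parser = RobotsMetaParser()
    parser.feed(html)
    found = set(parser.directives)
    for name, value in (headers or {}).items():
        # Header names are case-insensitive, so compare in lowercase.
        if name.lower() == "x-robots-tag":
            found |= _split_directives(value)
    return found


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, noarchive"></head></html>'
    print(sorted(robots_directives(page)))
```

A crawler that respects these signals would check the returned set for a directive such as `noarchive` before storing the page.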

Policy and Legal Implications of Exclusion

The policy and legal implications of URL exclusion are critical considerations, as they impact the balance between web archiving and intellectual property rights. Web content owners have a right to exclude their content from being crawled and archived, but this must be balanced against the public interest in preserving web content for historical and research purposes.

Comparison of Technical and Policy Aspects of Exclusion

| Technical Aspect | Policy and Legal Implication |
|---|---|
| The Robots Exclusion Protocol (REP) is used to exclude specific URLs from crawling and archiving. | Web content owners have a right to exclude their content from being crawled and archived, but this must be balanced against the public interest in preserving web content for historical and research purposes. |
| Meta tags can be used to exclude specific pages from crawling and archiving. | The use of meta tags to exclude content must be transparent and comply with applicable laws and regulations. |
| HTTP header directives can be used to exclude specific URLs from crawling and archiving. | The use of HTTP header directives to exclude content must comply with applicable laws and regulations, including those related to intellectual property rights. |

Overall, the technical and policy aspects of URL exclusion are complex and multifaceted, requiring careful consideration of the balance between web archiving and intellectual property rights.

Alternatives and Workarounds for Excluded URLs

The Wayback Machine’s exclusion of certain URLs can be a limitation for researchers and users seeking to access archived web content. Luckily, there are alternatives and workarounds that can be used to circumvent these restrictions.

Archiving and Preservation Tools

There are several alternatives to the Wayback Machine for website archiving and preservation. While these tools may not provide the same comprehensive coverage as the Wayback Machine, they can still be useful for specific purposes or for filling gaps in coverage.

- Internet Archive’s Web Crawls: The Internet Archive conducts regular web crawls, which can be used to access archived versions of websites that are not in the Wayback Machine’s collection.

- AwayBack: AwayBack is a web archiving service that allows users to create customized crawls of websites and archives the results.

Workarounds for Accessing Excluded URLs

Beyond these tools and services, a few workarounds can help when a URL is missing from the Wayback Machine:

- Request a full crawl: If you need access to a specific URL, you can request a full crawl of the website from the Internet Archive.

- Use a web scraping service: Web scraping services can be used to extract data from websites that are not in the Wayback Machine’s collection.

- Request access through the Library of Congress: The Library of Congress provides access to certain web archives that are not available through the Wayback Machine.
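Before trying any of the workarounds above, it can help to confirm whether a snapshot is actually available. The sketch below queries the Internet Archive's Wayback Availability API (`https://archive.org/wayback/available`) with Python's standard library. The helper names are hypothetical, and the response shape reflects the API's documented JSON format as I understand it. An empty result does not by itself tell you whether a URL was excluded or simply never crawled.

```python
import json
from typing import Optional
from urllib.parse import urlencode
from urllib.request import urlopen

AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def build_query(url: str, timestamp: Optional[str] = None) -> str:
    """Build the availability query; timestamp is an optional YYYYMMDD-style string."""
    params = {"url": url}
    if timestamp:
        params["timestamp"] = timestamp
    return f"{AVAILABILITY_ENDPOINT}?{urlencode(params)}"


def closest_snapshot(payload: dict) -> Optional[str]:
    """Return the closest snapshot URL from a decoded response, or None."""
    closest = payload.get("archived_snapshots", {}).get("closest")
    if closest and closest.get("available"):
        return closest.get("url")
    return None


def lookup(url: str) -> Optional[str]:
    """Query the live API (requires network access) and return a snapshot URL."""
    with urlopen(build_query(url), timeout=10) as response:
        return closest_snapshot(json.load(response))


if __name__ == "__main__":
    snapshot = lookup("example.com")
    print(snapshot or "No snapshot reported for this URL")
```

Keeping the response parsing in `closest_snapshot` separate from the network call makes it easy to test against saved responses without contacting the archive.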

External Tools and Services for Archiving Websites

While the Wayback Machine is a popular tool for archiving websites, there are also many other tools and services that can be used to preserve web content. Some of these tools and services include:

- Heritrix: Heritrix is an open-source web archiving tool that can be used to crawl and archive websites.

- PANDORA: PANDORA is Australia's web archive, established by the National Library of Australia, which collects and preserves selected online publications and websites.

- Archivematica: Archivematica is an open-source digital preservation tool that can be used to archive and preserve web content.

Conclusion

As we conclude this discussion on the exclusion of URLs from the Wayback Machine, we are left with a deeper understanding of the complex interplay between website owners, archivists, and the preservation of our digital heritage. The consequences of exclusion are far-reaching, impacting not only website archiving but also research access to our collective online history. By understanding the reasons behind exclusion and the importance of website archiving and preservation, we can take steps to ensure that our online presence endures for generations to come.

FAQ Corner

What is the Wayback Machine and why is it important for website archiving?

The Wayback Machine is a digital archive that crawls and stores website content, providing a snapshot of the web at a particular point in time. It is essential for preserving our online heritage and allowing us to access historical content.

Can I request my URL to be included in the Wayback Machine if it has been excluded?

In many cases, yes. If the exclusion stems from your own earlier request or from rules in your robots.txt file, you can remove those rules and ask the Internet Archive to restore the URL. Visit the Internet Archive's website for current instructions.

Are there any alternatives to the Wayback Machine for website archiving and preservation?

Yes. Alternatives include the Internet Archive's other preservation projects as well as external tools and services such as Heritrix and Archivematica.

What are the consequences of excluding a URL from the Wayback Machine?

The consequences of excluding a URL from the Wayback Machine include reduced accessibility to historical online content, potential loss of cultural significance, and hindrances to research and analysis.