So what’s an epoch in machine learning all about, innit? Think of it like running laps in a marathon, and each lap is called an epoch, yeah? But instead of sweating buckets, we’re training neural networks to get better at recognizing patterns, blud.

Epochs are basically the building blocks of machine learning training, and they’re a crucial part of how neural networks learn and improve. It’s like the more laps we run, the better we get at spotting the right route, mate.

Definition of Epoch in Machine Learning

In the context of machine learning, an epoch is a single pass through the training dataset of a neural network. This means that the neural network processes each sample in the training dataset exactly once during a single epoch. This concept is crucial for understanding how neural networks learn from data.

In machine learning, neural networks are trained on large datasets to learn patterns and relationships between variables. The training process typically involves iteratively adjusting the model’s parameters to minimize the difference between its predictions and the actual output. The term “epoch” refers to one full cycle of this process, in which the model sees every data point in the training dataset once. It is distinct from an iteration (or step), which usually means a single parameter update on one batch of data.

Epochs in Neural Network Training

Epochs are an essential component of neural network training as they enable the model to learn from the entire training dataset, rather than just a subset of it. The process of training a neural network can be thought of as a cycle of epochs, where each epoch consists of the following steps:

- Forward pass: The model processes the input data, making predictions and computing the loss.

- Backward pass: The model calculates the gradients of the loss with respect to each parameter, and the optimization algorithm updates the parameters. When the data is split into batches, the forward and backward passes are repeated once per batch, so a single epoch usually contains many parameter updates.
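The cycle above can be sketched in plain Python. This is a minimal illustration rather than any framework’s actual training loop: the one-parameter model `y = w * x`, the toy data, and the names `train`, `batch_size`, and `lr` are all assumptions made for the example.

```python
import random

def train(data, epochs=50, batch_size=2, lr=0.05, seed=0):
    """Fit y = w * x with mini-batch gradient descent; return w and the loss after each epoch."""
    rng = random.Random(seed)
    data = list(data)  # copy so the caller's list is not reordered
    w = 0.0
    history = []
    for _ in range(epochs):
        rng.shuffle(data)  # new sample order for every epoch
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            # Forward pass: predictions for this batch.
            preds = [w * x for x, _ in batch]
            # Backward pass: gradient of the batch's mean squared error w.r.t. w.
            grad = sum(2 * (p - y) * x for p, (x, y) in zip(preds, batch)) / len(batch)
            w -= lr * grad  # one parameter update per batch
        # End of epoch: every sample has been used exactly once.
        history.append(sum((w * x - y) ** 2 for x, y in data) / len(data))
    return w, history

samples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # generated from y = 2x
w, history = train(samples)
print(round(w, 4), len(history))
```

Each pass of the outer loop is one epoch; each pass of the inner loop is one iteration.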

Epochs also serve as the unit for scheduling other parts of training. Learning rate schedules are often defined per epoch, and the training data is usually reshuffled at the start of each epoch so that the model does not see the samples in the same order every time. The number of epochs, batch size, and learning rate are key hyperparameters that need to be tuned for optimal model performance.

Key Characteristics of Epochs

There are several key characteristics of epochs that affect the performance of a neural network:

- Batch Size: The number of samples processed together in a single forward and backward pass. An epoch consists of as many batches as are needed to cover the whole training dataset.

- Evaluation Metrics: Metrics such as loss, accuracy, and precision are typically evaluated at the end of each epoch to monitor progress.

- Early Stopping: Training is halted when a pre-defined metric, usually measured on a validation set, stops improving for a set number of epochs.

- Learning Rate: The step size used for each parameter update. It is often adjusted on a per-epoch schedule.

- Regularization Techniques: These include dropout, weight decay, and batch normalization (which has a mild regularizing effect), and they are applied at every training step throughout each epoch.
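The relationship between batch size, epochs, and the number of parameter updates is simple arithmetic. The sketch below assumes one update per batch and a smaller final batch when the dataset does not divide evenly; the function names and the example numbers are illustrative only.

```python
import math

def steps_per_epoch(num_samples, batch_size):
    """Number of batches (and so parameter updates) needed to cover the dataset once."""
    return math.ceil(num_samples / batch_size)

def total_updates(num_samples, batch_size, epochs):
    """Total parameter updates over a whole training run."""
    return steps_per_epoch(num_samples, batch_size) * epochs

print(steps_per_epoch(50_000, 128))    # 391 (the last batch holds only 80 samples)
print(total_updates(50_000, 128, 10))  # 3910
```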

Impact of Epochs on Model Performance

The number of epochs has a direct impact on model performance. If training runs for too many epochs, the model may learn specific artifacts of the training data rather than general patterns, leading to overfitting. Conversely, too few epochs might not provide enough learning opportunities for the model to reach optimal performance.

Choosing the number of epochs is therefore a delicate balance, requiring experimentation and careful tuning of hyperparameters to achieve the best possible results. Additionally, data augmentation and early stopping can help prevent overfitting and achieve more efficient training.

Common Scenarios Where Epochs are Critical

Epochs play a critical role in several machine learning applications, including:

- Deep learning models: Epochs are essential for training large neural networks, where a single pass over the data may not be sufficient for model convergence.

- Time-series prediction: Models that forecast sequences with a time dimension, such as RNNs, LSTMs, or transformers, are typically trained over many epochs.

- Image classification: Epochs are employed for training on large image datasets, where models may require multiple passes over the data to learn robust features.

- Causal inference: Models that estimate relationships between variables in time-series data are likewise trained over multiple epochs.

Conclusion

Epochs are a fundamental concept in machine learning, particularly in the context of neural network training. Understanding epochs is essential for selecting the right hyperparameters, monitoring progress, and preventing overfitting. Effective use of epochs can significantly impact model performance and accuracy, making it a crucial aspect of machine learning engineering.

Importance of Epochs in Deep Learning

Epochs play a crucial role in deep learning models as they enable the model to learn from the training data in an iterative manner. This process allows the model to improve its performance with each iteration, thereby enabling it to learn from its mistakes and adapt to the complexities of the data.

The Impact of Epochs on Model Performance

The number of epochs has a significant impact on the performance of a deep learning model. The choice of epoch number affects the trade-off between overfitting and underfitting the data. A higher number of epochs can result in overfitting, where the model becomes too specialized to the training data and fails to generalize well to new, unseen data. On the other hand, a lower number of epochs may result in underfitting, where the model fails to capture the underlying patterns in the data.

Overfitting vs. Underfitting

Overfitting occurs when a model is too complex and fits the noise in the training data, resulting in poor performance on new data. Underfitting occurs when a model is too simple and fails to capture the underlying patterns in the data, also resulting in poor performance.

- Model Complexity: A model with too many parameters or layers may result in overfitting.

- Early Stopping: Regularly monitoring the model’s performance on a validation set and stopping the training process before overfitting occurs can help prevent overfitting.
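Early stopping can be sketched as a small function over a list of per-epoch validation losses. This is a simplified illustration, not a specific library’s callback: the `patience` and `min_delta` parameters and the example loss values are assumptions.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return (stop_epoch, best_epoch), both 1-based.

    stop_epoch is None if the loss kept improving often enough to finish training.
    """
    best = float("inf")
    best_epoch = None
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best - min_delta:
            best, best_epoch, waited = loss, epoch, 0  # new best: reset the counter
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch  # no improvement for `patience` epochs
    return None, best_epoch

val_losses = [0.90, 0.70, 0.60, 0.58, 0.59, 0.61, 0.65]
stop, best = early_stopping_epoch(val_losses, patience=2)
print(stop, best)  # 6 4
```

In practice the weights from the best epoch (epoch 4 here) are usually the ones kept, which is why early stopping is normally paired with checkpointing.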

Underfitting, on the other hand, can be addressed by increasing the number of epochs, increasing the model’s capacity, or reducing the strength of regularization. The techniques in the table below work in the opposite direction and are used against overfitting.

| Technique | Description |
|---|---|
| Regularization | Adding a penalty term to the loss function to discourage large weights and prevent overfitting. |
| Data Augmentation | Generating additional training data by applying random transformations to the existing data. |

Types of Epochs in Machine Learning

Epochs are used in both training and evaluating models. Strictly speaking, an epoch is always one pass over a dataset; the “types” below differ in which dataset is passed over and in whether the model’s parameters are updated during the pass. This section covers each type and its purpose.

Training Epochs

Training epochs are the primary focus of machine learning model development. These epochs are used to update the model’s parameters based on the training data. During each training epoch, the model is presented with the training data one batch at a time, and the loss function is calculated for each batch. The model’s parameters are then updated using backpropagation and optimization algorithms to minimize the loss. The process is repeated for multiple epochs, allowing the model to learn and improve its performance on the training data.

- Initial Epochs: In the initial epochs, the model’s parameters are updated rapidly, and the loss decreases significantly. This is because the model is learning from the training data and updating its parameters to minimize the loss.

- Convergence Epochs: As the model approaches convergence, the loss decreases gradually, and the model’s parameters become stable. This is an indication that the model has reached a point of diminishing returns, and further training may not significantly improve the model’s performance.

Validation Epochs

Validation epochs are used to evaluate the model’s performance on a separate validation dataset. These epochs are critical in detecting overfitting, where the model becomes too specialized in the training data and fails to generalize well to new data. During a validation epoch, the model is presented with the validation data and the loss is calculated, but the parameters are not updated. This process helps to estimate the model’s performance on unseen data and provides a more accurate representation of the model’s generalization capabilities.

Testing Epochs

Testing epochs are used to evaluate the model’s performance on a separate test dataset. They are crucial in assessing the model’s ability to generalize to new, unseen data. During a testing epoch, the model is presented with the test data and the loss is calculated, again without updating the parameters. Unlike validation, testing is normally done once, after training and tuning are finished. The performance of the model on the test data is then compared to its performance on the training data, providing a comprehensive understanding of the model’s strengths and weaknesses.

Other Types of Epochs

In addition to training, validation, and testing epochs, there are other types of epochs that are used in machine learning, such as:

- Early Stopping Epochs: These epochs involve stopping the training process prematurely if the model’s performance on the validation data does not improve after a certain number of epochs.

- Warm-Up Epochs: These are the first few epochs of training, during which the learning rate is increased gradually from a small value to its target value. This helps to stabilize early training and reduce the model’s sensitivity to hyperparameters.

- Decay Epochs: These epochs involve gradually reducing the model’s learning rate during training, helping to fine-tune the model’s parameters and improve its performance on the validation data.

Each of these epochs plays a critical role in the machine learning process, and understanding their purpose and significance is essential for developing effective and efficient machine learning models.
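Warm-up and decay are often combined into a single per-epoch learning rate schedule. The sketch below uses linear warm-up followed by cosine decay, which is one common choice among several; the function name `lr_at_epoch` and the default values are assumptions for illustration.

```python
import math

def lr_at_epoch(epoch, total_epochs, base_lr=0.1, warmup_epochs=5, min_lr=0.0):
    """Learning rate for a 0-based epoch: linear warm-up, then cosine decay."""
    if epoch < warmup_epochs:
        # Warm-up: ramp linearly up to base_lr over the first few epochs.
        return base_lr * (epoch + 1) / warmup_epochs
    # Decay: follow half a cosine wave from base_lr down towards min_lr.
    progress = (epoch - warmup_epochs) / max(1, total_epochs - warmup_epochs)
    return min_lr + 0.5 * (base_lr - min_lr) * (1 + math.cos(math.pi * progress))

schedule = [lr_at_epoch(e, total_epochs=20) for e in range(20)]
for epoch, lr in enumerate(schedule, start=1):
    print(f"epoch {epoch:2d}: lr = {lr:.4f}")
```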

Role of Epochs in Model Evaluation

Epochs play a pivotal role in evaluating the performance of a machine learning model. By dividing the training process into multiple epochs, machine learning models can be evaluated at regular intervals, providing insights into their performance, accuracy, and consistency over time.

Metric Evaluation During Each Epoch

The performance of a machine learning model is typically evaluated using a set of metrics. Some common metrics used to evaluate model performance during each epoch include:

- Loss Function: This is a measure of the difference between predicted and actual values. A lower loss value indicates better model performance.

- Accuracy: This measures the proportion of correct predictions made by the model.

- Precision: This measures the proportion of true positives among all positive predictions.

- Recall: This measures the proportion of true positives among all actual positive instances.

- F1-Score: This is the harmonic mean of precision and recall and is often used to evaluate the model’s performance.

- Validation Loss: This metric measures the model’s performance on a separate validation set. If the validation loss starts to increase after a certain point, it may indicate that the model is overfitting.

- Accuracy, Precision, and Recall: These metrics can also be computed on a held-out test set. If the model scores much better on the training set than on the test set, it may indicate that the model is overfitting.

Several training techniques act on these per-epoch metrics:

- Dynamic learning rate adjustment: The learning rate is adjusted during training based on the model’s performance during each epoch, typically taking larger steps early on and smaller steps as training converges.

- Epsilon-greedy exploration: In reinforcement learning, the agent takes a random action with probability epsilon, and epsilon is commonly reduced from epoch to epoch so that the agent explores early and exploits what it has learned later.

- Early stopping: The algorithm stops training when the model’s performance on the validation set starts to degrade. This limits overfitting and helps the model generalize to unseen data.

Optimizers and schedules that adapt the learning rate include:

- Adagrad: This algorithm adapts the learning rate for each parameter individually, based on the accumulated squared gradients for that parameter.

- Adam: This algorithm combines the benefits of Adagrad and RMSProp, adapting the learning rate for each parameter using running averages of the gradient and of the squared gradient.

- Learning rate schedules: These adjust the learning rate as epochs progress, either on a predetermined schedule (for example, step or cosine decay) or in response to the model’s validation performance.

For monitoring training progress:

- Use a logging framework to track training metrics, such as TensorBoard or PyTorch Lightning’s built-in logging system.

- Regularly visualize training metrics, such as loss and accuracy, to identify trends and issues.

- Use a validation set to evaluate model performance and adjust training parameters as needed.

For choosing the number of epochs:

- Start with a small number of epochs (e.g., 10-20) and gradually increase as necessary.

- Use early stopping to stop training when the model’s performance on the validation set starts to degrade.

- Regularly evaluate model performance on a validation set to determine if additional training epochs are needed.

For saving and loading model weights:

- Regularly save model weights at specific intervals (e.g., every 10 epochs) using a checkpointing callback or a custom function.

- Use a consistent naming convention for saved model weights to avoid confusion.

- Load saved model weights only when necessary (e.g., when resuming training from a previous checkpoint).

Loss function + Action Taken -> Epoch Progression

Metrics such as loss, accuracy, precision, recall, and F1-score are calculated at the end of each epoch and are used to assess the model’s performance. The specific metrics used may vary depending on the problem and the type of machine learning task being performed.
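The classification metrics defined earlier in this section can be computed from the counts of true and false positives and negatives. The sketch below assumes binary labels with 1 as the positive class; the function name and the example labels are made up for illustration.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive class)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0  # guard against no positive predictions
    recall = tp / (tp + fn) if tp + fn else 0.0     # guard against no actual positives
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)  # every metric is 0.75 for this example
```

Calling a function like this on the validation predictions at the end of every epoch produces the kind of per-epoch table shown later in this section.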

Interpreting Metrics During Epoch Evaluation

Once the metrics are calculated, they can be used to evaluate the performance of the machine learning model. For example, if the loss is decreasing over epochs, it indicates that the model is improving its performance. However, if the loss is not decreasing, it may be an indication that the model is not learning from the data.

| Epoch | Loss | Accuracy | Precision | Recall | F1-Score |
|---|---|---|---|---|---|
| 1 | 0.5 | 0.8 | 0.9 | 0.7 | 0.8 |
| 2 | 0.4 | 0.9 | 0.95 | 0.85 | 0.9 |
| 3 | 0.3 | 0.95 | 0.98 | 0.92 | 0.95 |

In this example, the loss is decreasing over epochs while accuracy, precision, recall, and F1-score are all rising, which indicates that the model is improving its performance.

Evaluating Model Performance Over Epochs

Evaluating model performance over epochs is an essential aspect of tuning hyperparameters and improving model performance. By analyzing the performance of the model over epochs, data scientists can identify areas where the model may be struggling and take corrective action.

For example, if the accuracy of the model is plateauing over epochs, it may be an indication that the model has reached its limit, and further training will not improve performance. In such cases, hyperparameter tuning or changing the architecture of the model may be necessary to improve performance.

Epoch evaluation is a crucial aspect of machine learning model development, as it provides insights into the performance of the model and informs decision-making during the development process.

Designing Epoch-Based Training Strategies

Designing epoch-based training strategies is a crucial aspect of machine learning, as it directly affects the performance and efficiency of the model. By selecting the right strategy, data scientists can optimize their models to reach the best possible results. This section delves into the various epoch-based training strategies, their advantages, and disadvantages, to provide a comprehensive understanding of the most effective methods.

Gradient-Based Descent

Gradient-based descent, more commonly called batch or full-batch gradient descent, is a widely used training strategy that relies on gradients to optimize the model’s parameters. This method works by iteratively updating the model’s parameters in the direction opposite to the gradient of the loss function, computed over the entire training set, so each epoch produces exactly one update. The process involves two main components: the learning rate and the gradient.

The learning rate determines the step size of each update, while the gradient is used to guide the updates towards the optimal solution. Gradient-based descent is an effective strategy, particularly when combined with other techniques, such as momentum and Nesterov acceleration, which can help the optimizer move past shallow local minima and improve convergence rates.
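The update rule can be illustrated on a one-dimensional problem. The sketch below minimizes the hypothetical function f(x) = (x - 3)^2 with plain gradient descent and with classical momentum; the function names, learning rate, and momentum value are assumptions chosen for the example.

```python
def gradient_descent(grad_fn, x0, lr=0.1, momentum=0.0, steps=200):
    """Minimise a 1-D function given its gradient; momentum=0 gives plain gradient descent."""
    x, velocity = x0, 0.0
    for _ in range(steps):
        # The velocity accumulates past gradients; the step goes against the gradient.
        velocity = momentum * velocity - lr * grad_fn(x)
        x += velocity
    return x

def grad(x):
    """Gradient of f(x) = (x - 3)^2, whose minimum is at x = 3."""
    return 2 * (x - 3)

x_plain = gradient_descent(grad, x0=0.0)
x_momentum = gradient_descent(grad, x0=0.0, momentum=0.9)
print(round(x_plain, 4), round(x_momentum, 4))
```

Both runs end up close to 3. On a simple bowl-shaped function like this one momentum offers no advantage; its benefit tends to show on ill-conditioned or noisy loss surfaces.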

Stochastic Gradient Descent

Stochastic gradient descent (SGD) is a variant of gradient-based descent that uses a single data point to approximate the gradient at each iteration. This method is particularly useful when dealing with large datasets, as it reduces the computational overhead and memory requirements of each update. However, because each gradient estimate is noisy, SGD usually needs a small or decaying learning rate to converge; with a learning rate that is too high, the updates can oscillate and performance can suffer.

SGD is commonly used in online learning scenarios, where the model needs to adapt to new data as it becomes available. By using a single data point at each iteration, SGD can quickly adapt to changes in the data distribution, making it an effective strategy for real-time applications.

Mini-Batch Gradient Descent

Mini-batch gradient descent is a compromise between gradient-based descent and SGD. Instead of using a single data point, this method uses a small batch of data points, typically in the range of 16 to 512. Mini-batch gradient descent offers a balance between accuracy and computational efficiency, making it a popular choice for many machine learning applications.

Mini-batch gradient descent is particularly effective when dealing with large datasets, as it can provide a more accurate approximation of the gradient than SGD while maintaining a lower computational overhead than full-batch gradient descent.
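The three strategies differ only in how many samples feed each gradient estimate, so a single epoch function with a `batch_size` argument covers all of them. The sketch below is a toy comparison on the one-parameter model `y = w * x`; the data, learning rate, and epoch count are assumptions for illustration.

```python
import random

def run_epoch(w, data, batch_size, lr, rng):
    """One epoch of gradient descent on y = w * x; returns the new w and the update count."""
    order = data[:]
    rng.shuffle(order)
    updates = 0
    for start in range(0, len(order), batch_size):
        batch = order[start:start + batch_size]
        grad = sum(2 * (w * x - y) * x for x, y in batch) / len(batch)
        w -= lr * grad
        updates += 1
    return w, updates

data = [(k / 10, 3 * k / 10) for k in range(1, 33)]  # 32 samples generated from y = 3x
rng = random.Random(0)
results = {}
for name, batch_size in [("full-batch", len(data)), ("mini-batch", 8), ("stochastic", 1)]:
    w = 0.0
    for _ in range(20):  # 20 epochs for every strategy
        w, updates = run_epoch(w, data, batch_size, lr=0.05, rng=rng)
    results[name] = (updates, w)
    print(f"{name:11s} updates per epoch = {updates:2d}, w = {w:.4f}")
```

With the same number of epochs, the smaller batch sizes perform more updates, which is why they often need fewer epochs to reach a given loss even though each individual update is noisier.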


The choice of epoch-based training strategy ultimately depends on the specific requirements of the problem and the characteristics of the data. By understanding the strengths and weaknesses of each strategy, data scientists can make informed decisions to optimize their models and achieve the best possible results.

| Training Strategy | Advantages | Disadvantages |
|---|---|---|
| Gradient-Based Descent (full batch) | Exact gradient and stable updates, can be combined with other techniques to improve convergence rates | Computationally expensive on large datasets (one update per full pass), may get stuck in local minima |
| Stochastic Gradient Descent | Cheap and frequent updates, suitable for online learning scenarios | Noisy updates that may oscillate, usually needs a small or decaying learning rate |
| Mini-Batch Gradient Descent | Balance between accuracy and computational efficiency, suitable for large datasets | Adds batch size as a hyperparameter to tune, may be computationally expensive for very large datasets |

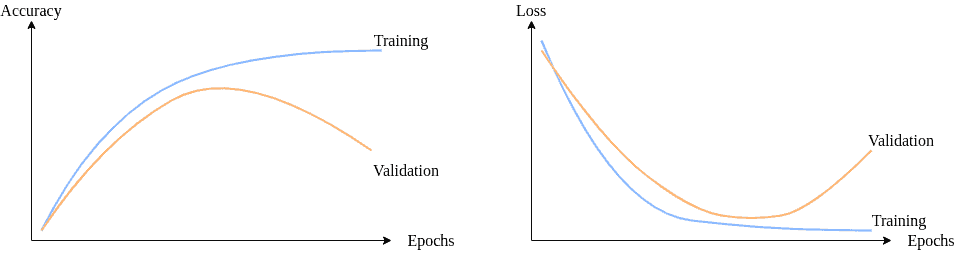

Visualizing Epoch Progress in Machine Learning

Visualizing epoch progress is an essential step in understanding and optimizing the performance of machine learning models. By visualizing the training progress, developers can quickly identify the strengths and weaknesses of their models and make data-driven decisions to improve them.

Line Plots of Loss and Accuracy

Line plots of loss and accuracy can provide a clear and concise visual representation of the model’s performance over epochs. The x-axis can represent the epoch numbers, and the y-axis can represent the loss or accuracy values. This visualization can help identify trends, patterns, and correlations between the model’s performance and the training parameters.

A line plot of loss and accuracy can be created using libraries like Matplotlib in Python. For example, suppose we have a model that has been trained for 10 epochs and we have recorded its loss and accuracy at the end of each epoch:

loss = [0.1, 0.05, 0.03, 0.02, 0.01, 0.005, 0.002, 0.001, 0.0005, 0.0002]

accuracy = [0.6, 0.7, 0.75, 0.8, 0.85, 0.86, 0.87, 0.88, 0.89, 0.9]

The line plot would show a clear trend of decline in loss and increase in accuracy as the epochs progress.
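A plot like this could be produced with a short Matplotlib helper. The sketch below assumes Matplotlib is installed; the function name `plot_history`, the output filename, and the use of a second y-axis for accuracy are illustrative choices, not the only way to lay out the figure.

```python
def plot_history(loss, accuracy, path="training_curves.png"):
    """Save a line plot of loss and accuracy against epoch number; return the file path."""
    import matplotlib
    matplotlib.use("Agg")  # render straight to a file, no display required
    import matplotlib.pyplot as plt

    epochs = range(1, len(loss) + 1)
    fig, ax_loss = plt.subplots()
    ax_loss.plot(epochs, loss, color="tab:red", marker="o")
    ax_loss.set_xlabel("Epoch")
    ax_loss.set_ylabel("Loss", color="tab:red")
    ax_acc = ax_loss.twinx()  # second y-axis that shares the epoch axis
    ax_acc.plot(epochs, accuracy, color="tab:blue", marker="s")
    ax_acc.set_ylabel("Accuracy", color="tab:blue")
    fig.tight_layout()
    fig.savefig(path)
    plt.close(fig)
    return path

loss = [0.1, 0.05, 0.03, 0.02, 0.01, 0.005, 0.002, 0.001, 0.0005, 0.0002]
accuracy = [0.6, 0.7, 0.75, 0.8, 0.85, 0.86, 0.87, 0.88, 0.89, 0.9]
# plot_history(loss, accuracy)  # uncomment to write training_curves.png
```

Loss and accuracy live on different scales, so giving each its own y-axis keeps both curves readable. Swapping `ax_loss.plot` for `ax_loss.bar` would give the bar-chart view of the same data.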

Bar Charts of Epoch-Wise Performance

Bar charts of epoch-wise performance can provide a clear comparison of the model’s performance at each epoch. The x-axis can represent the epoch numbers, and the y-axis can represent the loss or accuracy values. This visualization can help identify which epochs are the strongest or weakest and make data-driven decisions to improve the model.

A bar chart of epoch-wise performance can be created using libraries like Matplotlib in Python. For example, suppose we have a model that has been trained for 10 epochs with losses of 0.1, 0.05, 0.03, 0.02, 0.01, 0.005, 0.002, 0.001, 0.0005, 0.0002. The bar chart would show a clear comparison of the model’s performance at each epoch.

loss = [0.1, 0.05, 0.03, 0.02, 0.01, 0.005, 0.002, 0.001, 0.0005, 0.0002]

The bar chart would show a clear trend of decline in loss as the epochs progress.

Benefits of Visualizing Epoch Progress

Visualizing epoch progress can have several benefits, including:

* Identifying trends and patterns in the model’s performance

* Making data-driven decisions to improve the model

* Comparing the model’s performance at each epoch

* Identifying the strongest and weakest epochs

By visualizing epoch progress, developers can gain valuable insights into their model’s performance and make informed decisions to improve it.

Handling Overfitting and Underfitting During Epochs

Overfitting and underfitting are two common issues that arise during the epoch-based training process in machine learning. Overfitting occurs when a model becomes too complex and starts to memorize the training data, resulting in poor performance on new, unseen data. On the other hand, underfitting occurs when a model is too simple and fails to capture the underlying patterns in the data. Handling overfitting and underfitting during epochs is crucial to achieve optimal model performance.

Strategies for Handling Overfitting

One of the simplest ways to handle overfitting is by regularizing the model using techniques such as L1 and L2 regularization. Regularization adds a penalty term to the loss function, encouraging the model to keep its weights small (L2) or sparse (L1). Another strategy is to use dropout, which randomly deactivates neurons during training to prevent the model from relying too heavily on any single feature.
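Both ideas are small enough to sketch in plain Python. The penalty strength `lam`, the dropout `rate`, and the example weights below are assumptions for illustration; the dropout shown is the “inverted” variant, which rescales the surviving activations so their expected value is unchanged.

```python
import random

def l2_penalised_loss(data_loss, weights, lam=0.01):
    """Data loss plus lam times the sum of squared weights (L2 regularization)."""
    return data_loss + lam * sum(w * w for w in weights)

def l1_penalised_loss(data_loss, weights, lam=0.01):
    """Data loss plus lam times the sum of absolute weights (L1 regularization)."""
    return data_loss + lam * sum(abs(w) for w in weights)

def dropout(activations, rate, rng, training=True):
    """Inverted dropout: zero each activation with probability `rate`, rescale the rest."""
    if not training or rate == 0.0:
        return list(activations)  # dropout is switched off at evaluation time
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

weights = [0.5, -1.0, 2.0]
print(l2_penalised_loss(0.30, weights))  # about 0.3525 (0.30 + 0.01 * 5.25)
print(l1_penalised_loss(0.30, weights))  # about 0.335  (0.30 + 0.01 * 3.5)
print(dropout([1.0, 1.0, 1.0, 1.0], rate=0.5, rng=random.Random(0)))
```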

Strategies for Handling Underfitting

To handle underfitting, we can try increasing the capacity of the model by adding more layers or neurons. We can also try using different activation functions, such as ReLU or leaky ReLU, which can help the model learn more complex patterns.

Detecting Overfitting and Underfitting

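Overfitting and underfitting are detected by comparing metrics on the training set with the same metrics on a separate validation set. One of the most commonly used metrics is the validation loss: if it starts rising while the training loss keeps falling, the model is probably overfitting, and if both losses stay high, the model is probably underfitting. We can also use metrics such as accuracy, precision, and recall to evaluate the model’s performance in the same way.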

Evaluation Metrics for Overfitting and Underfitting

Here are some common evaluation metrics for regression models. Comparing each one between the training and validation sets helps to detect overfitting and underfitting:

| Metric | Description |
|---|---|
| Coefficient of Determination (R-squared) | Measures the proportion of variance in the dependent variable that is predictable from the independent variables |
| Mean Squared Error (MSE) | Measures the average squared difference between predicted and actual values |
| Mean Absolute Error (MAE) | Measures the average absolute difference between predicted and actual values |
| Root Mean Squared Error (RMSE) | Measures the square root of the average squared difference between predicted and actual values |
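The four metrics in the table follow directly from their definitions. The sketch below is a plain-Python illustration with made-up values; it assumes the true values are not all identical, since R-squared is undefined in that case.

```python
import math

def regression_metrics(y_true, y_pred):
    """MSE, MAE, RMSE, and R-squared for paired true and predicted values."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)                  # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)   # total sum of squares
    r2 = 1 - ss_res / ss_tot  # assumes y_true is not constant
    return {"mse": mse, "mae": mae, "rmse": math.sqrt(mse), "r2": r2}

scores = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 6.0])
print(scores)  # mse 1.0, mae 0.5, rmse 1.0, r2 about 0.2
```

Running the same function on training and validation predictions after each epoch makes the gap between the two visible: a small training error with a much larger validation error points to overfitting, while large errors on both point to underfitting.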

Creating Epoch-Aware Machine Learning Algorithms

Creating epoch-aware machine learning algorithms is crucial for improving the efficiency and effectiveness of training deep neural networks. Epoch-aware algorithms adapt their training strategies to the changing needs of the model as it progresses through the training process.

Epoch-aware algorithms are designed to be dynamic, allowing them to adjust their learning rate, exploration, and other hyperparameters in real-time based on the model’s performance during each epoch. This adaptive approach enables the algorithm to optimize the training process and improve the model’s accuracy and generalization capabilities.

Epoch-Awareness in Machine Learning Algorithms

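Epoch-awareness is achieved through techniques such as dynamic learning rate adjustment and early stopping, both of which respond to the metrics observed at the end of each epoch.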

Examples of Epoch-Aware Algorithms

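Some examples of epoch-aware algorithms include learning rate schedules, which change the learning rate as epochs progress, and adaptive optimizers such as Adagrad and Adam, which adjust per-parameter step sizes throughout training.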

The key advantage of epoch-aware algorithms is their ability to adapt to changing model performance and optimize the training process in real-time. This leads to improved model accuracy, faster convergence, and better generalization capabilities.

Illustrative Examples

Consider a deep neural network trained on the MNIST dataset. The epoch-aware algorithm adjusts the learning rate during training based on the model’s performance during each epoch. As the model converges to the optimal solution, the learning rate is reduced, allowing the model to make finer adjustments and avoid overfitting.
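A scheduler of this kind can be sketched in a few lines. The class below lowers the learning rate when the validation loss stops improving, similar in spirit to the reduce-on-plateau schedulers found in deep learning libraries, but the class name, parameters, and loss values here are hypothetical and not taken from any particular library.

```python
class ReduceOnPlateau:
    """Multiply the learning rate by `factor` when validation loss stops improving."""

    def __init__(self, lr, factor=0.5, patience=2, min_lr=1e-6):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Call once per epoch with that epoch's validation loss; returns the next lr."""
        if val_loss < self.best:
            self.best, self.bad_epochs = val_loss, 0
        else:
            self.bad_epochs += 1
            if self.bad_epochs >= self.patience:
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.bad_epochs = 0  # start counting again at the new learning rate
        return self.lr

scheduler = ReduceOnPlateau(lr=0.1)
val_losses = [0.9, 0.7, 0.6, 0.61, 0.62, 0.55, 0.56, 0.57]
lrs = [scheduler.step(v) for v in val_losses]
print(lrs)  # [0.1, 0.1, 0.1, 0.1, 0.05, 0.05, 0.05, 0.025]
```

The learning rate stays at 0.1 while the loss is improving, halves after two epochs without improvement, and halves again after the next stall.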

Epoch-aware algorithms can lead to improvements in model accuracy and convergence speed by adaptively adjusting the learning rate and other hyperparameters.

In summary, epoch-aware machine learning algorithms are designed to adapt to the changing needs of the model during training, resulting in improved accuracy, faster convergence, and better generalization capabilities.

Best Practices for Epoch-Based Training

Epoch-based training is a crucial aspect of deep learning, requiring careful monitoring and optimization to achieve the best results. Regularly monitoring training progress and adjusting the number of epochs based on performance are essential best practices for epoch-based training.

Regularly Monitoring Training Progress

Monitoring training progress is vital to understanding how your model is performing and whether it’s on the right track. This can be done by tracking metrics such as loss, accuracy, and validation loss over time. Regularly monitoring training progress allows you to identify issues and adjust your model or training parameters as needed.

Adjusting the Number of Epochs Based on Performance

The number of epochs is a critical hyperparameter in deep learning, and adjusting it based on performance can greatly impact model results. Overfitting occurs when the model is trained too long, causing it to memorize the training data rather than generalizing to new data.

Saving and Loading Model Weights

Saving and loading model weights is an essential aspect of epoch-based training, allowing you to continue training from a previous checkpoint or use a model that has already been trained on a similar task.
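Checkpointing at fixed epoch intervals can be sketched with the standard library alone. The example below stores a list of weights as JSON purely for illustration; real frameworks provide their own save and load functions, and the file naming scheme, the helper names, and the stand-in “training” step are all assumptions.

```python
import json
import os
import tempfile

def checkpoint_path(directory, epoch):
    """Zero-padded epoch numbers keep checkpoint files in order when sorted by name."""
    return os.path.join(directory, f"model_epoch_{epoch:03d}.json")

def save_checkpoint(directory, epoch, weights, val_loss):
    path = checkpoint_path(directory, epoch)
    with open(path, "w") as f:
        json.dump({"epoch": epoch, "weights": weights, "val_loss": val_loss}, f)
    return path

def load_latest_checkpoint(directory):
    """Return the most recent checkpoint as a dict, or None if there is none."""
    names = sorted(n for n in os.listdir(directory) if n.startswith("model_epoch_"))
    if not names:
        return None
    with open(os.path.join(directory, names[-1])) as f:
        return json.load(f)

with tempfile.TemporaryDirectory() as tmp:
    weights = [0.0, 0.0]
    for epoch in range(1, 31):
        weights = [w + 0.1 for w in weights]  # stand-in for a real training epoch
        if epoch % 10 == 0:                   # save every 10 epochs
            save_checkpoint(tmp, epoch, weights, val_loss=1.0 / epoch)
    saved = sorted(os.listdir(tmp))
    latest = load_latest_checkpoint(tmp)

print(saved)            # three files, for epochs 10, 20 and 30
print(latest["epoch"])  # 30
```

To resume training, the loop would start from `latest["epoch"] + 1` with `latest["weights"]` as the initial weights.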

By following these best practices, you can ensure that your epoch-based training is optimized for success and that your model achieves the best possible results.

Final Summary

So, to wrap it up, epochs are the key to machine learning, and understanding them is like knowing the secret to getting better at anything, innit? Whether you’re trying to recognize cat pictures or predict stock prices, epochs are the way to go, yeah?

FAQs

Q: What’s the difference between a training epoch and a validation epoch?

A: A training epoch is when the model is fed data to learn from, whereas a validation epoch is when it’s tested to see how well it’s doing, innit?

Q: How do epochs affect the performance of a model?

A: Up to a point, the more epochs, the better the model gets at recognizing patterns, but too many epochs can lead to overfitting, blud.

Q: What’s the purpose of visualizing epoch progress in machine learning?

A: It’s like looking at a graph of your marathon run, mate – it helps you track your progress and make necessary changes to improve, yeah?

Q: How do you detect overfitting and underfitting during epoch-based training?

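A: You compare metrics like loss, accuracy, and precision on the training set against the same metrics on the validation set. Good on training but poor on validation means overfitting, and poor on both means underfitting, innit?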