Why is the Wayback Machine so slow when loading pages? It’s a question many users raise as the internet’s vast library seems to be slowing down. With the Wayback Machine, you get to travel back in time and explore the web’s past, but it’s not quite as quick as you’d hope, right? So, what’s causing this delay, and how can the Wayback Machine’s performance be improved?

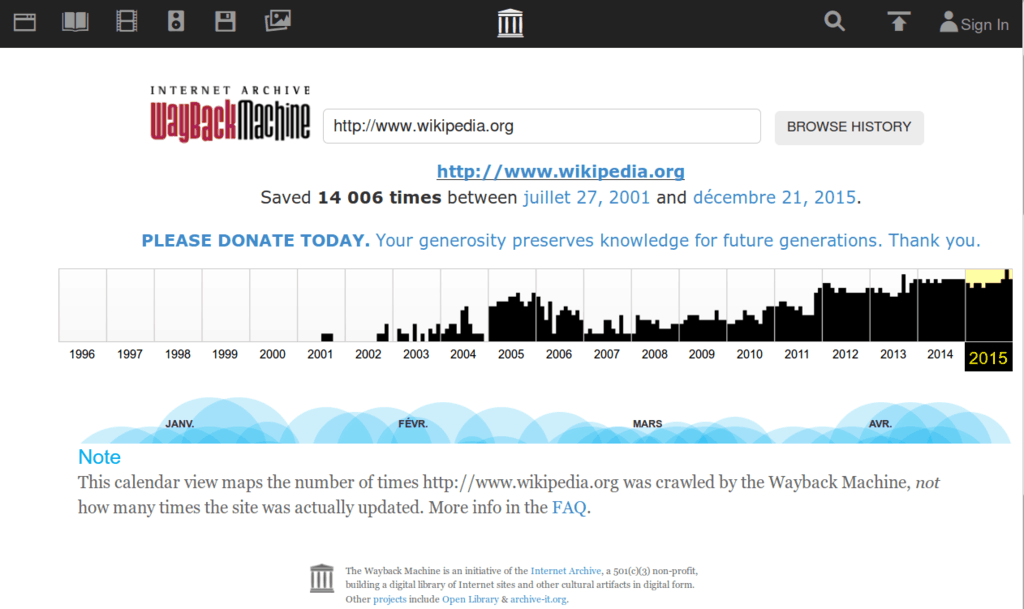

The Wayback Machine is the world’s largest digital archive of the web, with hundreds of billions of web pages stored in its database. But its massive size comes at a cost, as the service struggles to keep up with the demands of internet traffic and the limits of its computational resources.

Overview of the Wayback Machine

The Wayback Machine is an internet archive that has revolutionized the way we access and preserve online content. Imagine a vast library where you can go back in time and explore the web as it existed in the past.

The Wayback Machine is a digital archive developed by the Internet Archive, a non-profit organization that aims to preserve online content and make it accessible for future generations. This incredible resource allows users to browse hundreds of billions of web pages captured since 1996, providing a unique glimpse into the ever-changing digital landscape.

Main Features and Functionalities

The Wayback Machine has several key features that make it an invaluable resource:

It is a digital archive that stores snapshots of websites and web pages as they existed at a particular point in time, providing a historical record of the web.

The archive uses web crawlers to crawl the web, extracting and saving content from millions of websites every day.

Users can access the archive and browse through the snapshots of websites, exploring how they have changed over time.

The Wayback Machine also provides tools for researchers, developers, and anyone interested in exploring the web’s past.

Purpose and Significance

The Wayback Machine serves several purposes that make it a crucial tool for the web:

Preservation of Online Content: The Wayback Machine helps preserve online content that may be lost or deleted over time, providing a historical record of the web’s evolution.

Research and Development: The archive offers a unique opportunity for researchers and developers to study the web’s past, identifying trends, patterns, and breakthroughs.


Examples of the Wayback Machine’s Use Cases

The Wayback Machine is used by individuals, organizations, and governments in a variety of ways:


| Organization/Entity | Reason for Use |
|---|---|
| Libraries and Archives | To preserve and digitize online content, ensuring its long-term accessibility and preservation. |
| Universities and Research Institutions | To support research and development, using the archive to inform their studies and investigations. |
| Government Agencies | To preserve online content related to historical events, policies, and regulations. |

Factors Contributing to Slow Performance

As the popularity and reliance on the Wayback Machine continue to grow, a number of factors can contribute to its slow performance. The machine’s vast repository of archived content, combined with the demands placed upon it by users, can lead to a range of issues that affect its speed and responsiveness. Among the primary factors contributing to slow performance are increased internet traffic, the demand for archived content, and the role of computational resources and hardware.

Increased Internet Traffic

Increased internet traffic plays a significant role in the slow performance of the Wayback Machine. With more users accessing the site, the machine’s servers are subjected to a substantial increase in requests and data transfers. This can lead to a situation where the machine becomes overwhelmed, resulting in slow loading times and decreased responsiveness. To put this into perspective, consider a scenario where millions of users attempt to access the Wayback Machine simultaneously, each requesting a different webpage or file. The machine’s servers must process these requests in a timely manner, which can be a daunting task, especially when dealing with a massive repository of archived content.

- Peak usage hours: The majority of users typically access the Wayback Machine during peak hours, often after working hours or on weekends, leading to increased traffic and slower performance.

- Sporadic increases: Unexpected spikes in traffic can also occur due to social media campaigns, online events, or other factors that suddenly raise awareness about the Wayback Machine.

- Rise of mobile devices: As people increasingly access the Wayback Machine through mobile devices, the traffic volume is expected to continue increasing, putting additional pressure on the machine’s resources.

Demand for Archived Content

The demand for archived content also contributes to the slow performance of the Wayback Machine. As more users rely on it for historical content, the system must process an ever-growing number of requests, and each one involves looking up a capture in a very large index and then fetching it from storage. To illustrate, consider a user who requests an archived webpage from 2005 for which no capture from that year exists. The Wayback Machine cannot create a snapshot of the past retroactively; it can only redirect the user to the nearest available capture or report that none exists, and that extra lookup and redirect adds to the wait.

- Rise in user requests: As more users become aware of the Wayback Machine’s capabilities, they are increasingly making requests for archived content, placing additional pressure on the system.

- Archiving new content: Creating new snapshots of live pages requires significant computational resources and can lead to slower performance.

- Historical content gaps: Gaps in the archival history cannot be filled after the fact, so requests for a missing date are redirected to the nearest available capture, which adds an extra lookup and redirect.
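The lookup for a page from a given year can be sketched against the Internet Archive’s public availability endpoint. The snippet below is a minimal illustration, not the archive’s own code: the helper names are hypothetical, and the sample responses are hand-written to mirror the shape of the JSON the endpoint returns, so the example runs without network access.

```python
import json
from urllib.parse import urlencode

# Public availability endpoint of the Internet Archive.
AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def build_availability_url(page_url: str, timestamp: str) -> str:
    """Build the query asking for the capture closest to `timestamp` (YYYYMMDD)."""
    query = urlencode({"url": page_url, "timestamp": timestamp})
    return f"{AVAILABILITY_ENDPOINT}?{query}"


def closest_snapshot(response_text: str):
    """Return (timestamp, url) of the closest capture, or None if there is none."""
    data = json.loads(response_text)
    closest = data.get("archived_snapshots", {}).get("closest")
    if not closest or not closest.get("available"):
        return None
    return closest["timestamp"], closest["url"]


# Hand-written sample responses (illustrative values, not real API output).
SAMPLE_HIT = json.dumps({
    "archived_snapshots": {
        "closest": {
            "available": True,
            "status": "200",
            "timestamp": "20060315120000",
            "url": "http://web.archive.org/web/20060315120000/http://example.com/",
        }
    }
})
SAMPLE_MISS = json.dumps({"archived_snapshots": {}})

print(build_availability_url("example.com", "20050101"))
print(closest_snapshot(SAMPLE_HIT))
print(closest_snapshot(SAMPLE_MISS))
```

In the first sample the request was for 2005 but the closest capture is from 2006, which is the redirect-to-nearest behaviour a user sees when the requested date has no capture.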

Computational Resources and Hardware

The role of computational resources and hardware also plays a significant part in the slow performance of the Wayback Machine. As the machine’s repository grows, so too must its computational capabilities in order to process the ever-increasing number of requests. However, upgrades to the machine’s hardware and infrastructure can be slow to happen, leading to a situation where the system struggles to keep pace with demand. Consider a scenario where the machine’s computational resources become overwhelmed, resulting in slow loading times and decreased responsiveness.

In a bid to mitigate these issues, the Internet Archive has invested heavily in upgrading its infrastructure and computational capabilities, including deploying new servers, improving network efficiency, and streamlining data transfer processes.

| Infrastructure Upgrade | Impact |
|---|---|
| Deploying new servers and improving network infrastructure | Improved response times and reduced latency |
| Upgrade to newer computational resources | Increased processing power and efficiency |
| Optimization of data transfer processes | Reduced data transfer times and improved responsiveness |

Technical Limitations

The Wayback Machine, despite its remarkable abilities, is not immune to limitations. The sheer complexity of web content, coupled with the vast and ever-growing nature of the internet, poses significant challenges for the machine’s performance. To better understand these limitations, it is essential to delve into the realm of web crawlers and the intricacies of archiving websites, particularly those with dynamic content.

The limitations of web crawlers in capturing and storing complex websites with dynamic content are multifaceted. One significant challenge lies in the nature of dynamic content, which often relies on JavaScript to load and update. Web crawlers, by their very design, are limited in their ability to accurately crawl and capture content generated by JavaScript-heavy websites. This is due to the fact that these crawlers typically rely on static HTML content, which is often altered or rendered dynamically by JavaScript.

Challenges of Archiving Websites with JavaScript-heavy Content

Web archiving is a complex task, especially when dealing with sites that extensively employ JavaScript. The primary issue arises from the fact that web crawlers struggle to replicate the dynamic loading and updates of JavaScript-based content. This limitation is exacerbated by the vast array of possible user interactions and the sheer variability of website designs.

- Web crawlers’ inability to accurately capture dynamic content leads to incomplete or inaccurate site captures.

- The complexity of JavaScript code often hinders crawlers’ ability to replicate user interactions and load content correctly.

- Websites’ heavy reliance on dynamically loaded content hinders crawlers from effectively indexing the site’s full content.

Importance of Website Optimization for Better Crawler Performance

Optimizing websites for better crawler performance can significantly enhance the overall quality of site captures. This involves implementing crawl-friendly design and content strategies that make crawling and indexing easier, which in turn improves the accuracy of captures and gives users a better archiving experience.

- Proper indexing of content using standardized metadata.

- Clear and consistent URLs for resources and content.

- Simplified loading of resources using methods like caching.
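As one way to keep URLs clear and consistent, a site can normalize every internal link to a single canonical form before publishing it. The sketch below is a minimal example using Python’s standard library; the function name and the specific normalization rules (lowercase host, sorted query parameters, no fragment) are assumptions chosen for illustration, not rules the Wayback Machine prescribes.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def canonical_url(url: str) -> str:
    """Return one consistent spelling for URLs that point at the same resource."""
    parts = urlsplit(url)
    # Sort query parameters so ?b=2&a=1 and ?a=1&b=2 map to the same string.
    query = urlencode(sorted(parse_qsl(parts.query, keep_blank_values=True)))
    # An empty path and "/" are the same resource; always emit "/".
    path = parts.path or "/"
    # Drop the fragment: it is never sent to the server, so crawlers ignore it.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, query, ""))


print(canonical_url("HTTP://Example.com/Page?b=2&a=1#top"))
print(canonical_url("https://example.com"))
```

With one spelling per resource, a crawler is less likely to store the same page under several slightly different addresses.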

Comparison of the Wayback Machine to Other Web Archiving Technologies

While the Wayback Machine is a pioneering project in web archiving, other technologies also offer valuable tools and approaches to capturing and preserving web content. Some notable alternatives and their differences from the Wayback Machine are explored below.

Web archiving technologies vary in scope, design, and focus. Each approach presents unique advantages and challenges, with some better suited for specific use cases or content types.


| Approach | Main Focus | Key Differences |
|---|---|---|
| Internet Archive (Wayback Machine) | Massive scale, broad content coverage | Wide range of content types, vast user base |
| Selenium-based crawlers | Detailed user experience capture | Able to accurately capture dynamic content through user simulations |
| Page archival tools | High-fidelity page capture and rendering | Specialize in preserving the visual presentation and layout of web pages |

Data Management and Retrieval

The Wayback Machine stores a vast archive of web pages, which necessitates efficient data management and retrieval strategies to ensure seamless access to this treasure trove of internet history. A sophisticated system of indexing and metadata is crucial for pinpointing specific archived content within the vast repository.

Retrieving Archived Content

Retrieving archived content from the Wayback Machine involves locating the desired web page in the index, checking that a capture is accessible, and fetching the archived content from storage. The sheer volume of archived pages makes each of these steps time-consuming and resource-intensive.

The Wayback Machine uses a distributed caching system to speed up the retrieval process. This caching system is essentially a collection of servers that store frequently accessed archived pages in a readily accessible format. When a user requests a specific archived page, the Wayback Machine checks the distributed cache to see if the page is already available. If it is, the system can retrieve the page from the cache instead of going through the more laborious process of reconstructing the page from its archived data.

This caching system enables the Wayback Machine to significantly reduce the time it takes to retrieve archived content. However, it only helps for pages that are already cached: when a page is not in the cache, or its cached copy has been evicted, the request falls back to the slower path and the user may experience long delays.
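The cache-first lookup described here can be sketched as a small least-recently-used cache. This is an illustration of the general technique only; the class name, the `load_from_archive` callback, and the eviction policy are assumptions, and nothing here reflects how the Internet Archive actually implements its caching.

```python
from collections import OrderedDict


class SnapshotCache:
    """Tiny LRU cache: serve hot pages from memory, fall back to slow storage."""

    def __init__(self, capacity, load_from_archive):
        self.capacity = capacity
        self.load_from_archive = load_from_archive  # hypothetical slow-path loader
        self._entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self._entries:
            # Cache hit: mark the entry as most recently used and return it.
            self._entries.move_to_end(key)
            self.hits += 1
            return self._entries[key]
        # Cache miss: take the slow path, then remember the result.
        self.misses += 1
        page = self.load_from_archive(key)
        self._entries[key] = page
        if len(self._entries) > self.capacity:
            # Evict the least recently used entry to stay within capacity.
            self._entries.popitem(last=False)
        return page


def slow_loader(key):
    # Stand-in for reading a capture from archival storage.
    return f"<html>capture of {key}</html>"


cache = SnapshotCache(capacity=2, load_from_archive=slow_loader)
for key in ["a", "b", "a", "c", "b"]:
    cache.get(key)
print(cache.hits, cache.misses)
```

Only the repeated request for "a" is served from the cache; "b" is evicted before it is requested again, so that request pays the full retrieval cost a second time.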

Data Compression and Storage

Data compression plays a vital role in storing archived web pages efficiently. The Wayback Machine stores captures in ARC and WARC container files, which are typically compressed with gzip to reduce storage requirements. This compression is lossless: the original bytes of every capture can be restored exactly, which matters for an archive whose purpose is to preserve pages as they were. Lossy compression, which discards some data to achieve a smaller file size, would be at odds with that goal.

The compressed archived content is then stored on the Wayback Machine’s servers. The server architecture is designed to accommodate the vast storage requirements, with servers organized into a hierarchical structure for easy management and retrieval. This setup allows the Wayback Machine to efficiently store and manage its extensive collection of archived web pages.
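Lossless compression of a stored page can be demonstrated with Python’s standard `gzip` module. This is a minimal sketch: the function names and the sample page are made up for illustration, and real archival files carry headers and record metadata that are omitted here.

```python
import gzip


def compress_capture(html: str) -> bytes:
    """Compress a page for storage; gzip is lossless."""
    return gzip.compress(html.encode("utf-8"))


def restore_capture(blob: bytes) -> str:
    """Recover the exact original page from its compressed form."""
    return gzip.decompress(blob).decode("utf-8")


# Repetitive markup, as is typical for HTML, compresses well.
page = "<html><body>" + "<p>Archived paragraph.</p>" * 200 + "</body></html>"
blob = compress_capture(page)

assert restore_capture(blob) == page  # nothing was lost
print(len(page.encode("utf-8")), "bytes ->", len(blob), "bytes")
```

The trade-off is that every playback request has to decompress the stored record before it can be served, which adds a little processing to each page load.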

Importance of Indexing and Metadata

Indexing and metadata are indispensable for efficient content retrieval in the Wayback Machine. Indexing involves creating a comprehensive list of all the archived web pages that are stored within the system. This list serves as a reference point for the retrieval system, enabling users to search for specific web pages based on various criteria such as URL, date of archiving, or keyword.

Metadata provides additional contextual information about each archived web page. It contains details such as the URL of the page, the date of archiving, and any relevant keywords or tags. By including metadata in the indexing process, the Wayback Machine can provide users with more specific and relevant search results, ensuring a faster and more accurate retrieval of archived content.
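An index keyed by URL and capture date might look like the sketch below. It keeps a sorted list of timestamps per URL and uses binary search to find the capture nearest to a requested date. The class, its methods, and the 14-digit `YYYYMMDDhhmmss` timestamp format are assumptions for the sake of the example, not a description of the archive’s real index.

```python
from bisect import bisect_left
from datetime import datetime


def parse_ts(ts: str) -> datetime:
    """Parse a 14-digit YYYYMMDDhhmmss timestamp."""
    return datetime.strptime(ts, "%Y%m%d%H%M%S")


class CaptureIndex:
    """Maps each URL to a sorted list of capture timestamps."""

    def __init__(self):
        self._by_url = {}

    def add(self, url: str, timestamp: str) -> None:
        stamps = self._by_url.setdefault(url, [])
        pos = bisect_left(stamps, timestamp)
        # Fixed-width timestamps sort the same as strings and as dates.
        if pos == len(stamps) or stamps[pos] != timestamp:
            stamps.insert(pos, timestamp)

    def closest(self, url: str, timestamp: str):
        """Return the capture timestamp nearest to `timestamp`, or None."""
        stamps = self._by_url.get(url)
        if not stamps:
            return None
        pos = bisect_left(stamps, timestamp)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = stamps[max(pos - 1, 0):pos + 1]
        target = parse_ts(timestamp)
        return min(candidates, key=lambda s: abs(parse_ts(s) - target))


index = CaptureIndex()
for ts in ["20050101000000", "20060101000000", "20100101000000"]:
    index.add("example.com", ts)

print(index.closest("example.com", "20050601000000"))
print(index.closest("missing.example", "20050601000000"))
```

Binary search keeps each lookup fast even when a popular URL has many thousands of captures, which is why a sorted index matters at this scale.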

Data Storage and Retrieval Techniques

In addition to data compression, the Wayback Machine employs other data storage and retrieval techniques to optimize its performance. These techniques include:

– Hashing: This involves creating a unique digital fingerprint for each archived page, which enables the retrieval system to quickly locate the page even if it is not stored directly in the cache.

– Segmentation: This technique involves breaking down large archived pages into smaller segments, which can be stored and retrieved more efficiently.

– Content Distribution Networks (CDNs): The Wayback Machine uses CDNs to distribute archived content across multiple servers, reducing the load on individual servers and improving retrieval speeds.
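Hashing and segmentation can be illustrated together in a few lines. The sketch below fingerprints content with SHA-256 and uses the digest as a storage key, so identical content is stored once, and it splits large payloads into fixed-size segments. The names, the choice of SHA-256, and the in-memory store are all assumptions made for illustration.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Return a digest that identifies this exact content."""
    return hashlib.sha256(content).hexdigest()


def split_segments(content: bytes, size: int) -> list:
    """Break content into consecutive segments of at most `size` bytes."""
    return [content[i:i + size] for i in range(0, len(content), size)]


class DedupStore:
    """Stores each distinct piece of content once, keyed by its fingerprint."""

    def __init__(self):
        self._blobs = {}

    def put(self, content: bytes) -> str:
        key = fingerprint(content)
        self._blobs.setdefault(key, content)  # no-op if already stored
        return key

    def get(self, key: str) -> bytes:
        return self._blobs[key]

    def __len__(self):
        return len(self._blobs)


store = DedupStore()
first = store.put(b"<html>unchanged page</html>")
second = store.put(b"<html>unchanged page</html>")  # same page captured again
print(first == second, len(store))
print(split_segments(b"abcdefgh", 3))
```

A page that has not changed between two crawls produces the same fingerprint, so the second capture only needs a new index entry rather than a second copy of the data.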

Efficient Content Retrieval

Efficient content retrieval is critical for the Wayback Machine’s success. The system relies on a combination of cutting-edge data compression algorithms, sophisticated data management techniques, and a robust caching system to ensure seamless access to its extensive collection of archived web pages. By leveraging these technologies, the Wayback Machine can provide users with a wealth of historical internet data, fostering a deeper understanding of the ever-changing digital landscape.

Best Practices for Users


The following best practices help webmasters optimize their websites for the Wayback Machine’s crawlers by reducing the complexity of JavaScript-heavy content and making pages easier to retrieve and store. This, in turn, improves the quality and accessibility of their archived content.

To optimize a website for better crawler performance, site owners should focus on making content more accessible and crawlable. One essential way to do this is by adopting a mobile-first approach: designing and developing websites that are responsive and provide a seamless user experience across devices and screen sizes.

1. Implement Mobile-First Design

A mobile-first approach ensures that the most essential elements of a website are accessible and load quickly, even on slow network connections. This is crucial for search engines and crawlers like the Wayback Machine, which rely on websites to provide accurate and up-to-date information.

For example, a website that has implemented a mobile-first design would prioritize loading essential content such as text, images, and navigation menus before loading non-essential content like videos, animations, and ads. This not only improves the user experience but also helps crawlers like the Wayback Machine to quickly gather and store the website’s content.

2. Reduce JavaScript Complexity

JavaScript-heavy content can be a significant hurdle for the Wayback Machine’s crawlers. To make pages easier to retrieve and store, site owners should keep essential content in the initial HTML and minimize JavaScript complexity. One way to do this is to load JavaScript files asynchronously, so that the page’s content is available even while scripts are still loading.

For instance, a website built with a JavaScript framework like React or Angular can lazy-load non-essential scripts, fetching them only when a user interacts with the relevant part of the page. This improves the user experience, and as long as the core content does not depend on those scripts, it also makes the site easier for the Wayback Machine to crawl and store.
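A quick way to audit a page for scripts that are not loaded asynchronously is to scan its HTML for external `<script>` tags lacking `async` or `defer`. The sketch below uses Python’s standard `html.parser`; the class and function names are hypothetical, and the check is deliberately simplified (for example, it does not treat `type="module"` scripts, which are deferred by default, as a special case).

```python
from html.parser import HTMLParser


class BlockingScriptFinder(HTMLParser):
    """Collects external scripts that load without async or defer."""

    def __init__(self):
        super().__init__()
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        attributes = dict(attrs)  # boolean attributes appear with value None
        src = attributes.get("src")
        if src and "async" not in attributes and "defer" not in attributes:
            self.blocking.append(src)


def find_blocking_scripts(html: str) -> list:
    finder = BlockingScriptFinder()
    finder.feed(html)
    return finder.blocking


SAMPLE_PAGE = (
    '<head>'
    '<script src="app.js"></script>'
    '<script src="ads.js" async></script>'
    '<script src="ui.js" defer></script>'
    '<script>inlineSetup()</script>'
    '</head>'
)
print(find_blocking_scripts(SAMPLE_PAGE))
```

Scripts flagged by a check like this are candidates for `async` or `defer`, provided the page’s core content does not depend on them running first.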

3. Content Organization and Labeling

The Wayback Machine archive relies heavily on website metadata and labels to correctly categorize and retrieve archived content. Users should ensure that their website’s content is organized in a logical and consistent manner, with clear and descriptive labels for each piece of content.

For example, a website that uses an e-commerce platform can implement a tagging system, where products are labeled with relevant keywords and categories. This not only improves the user experience but also makes it easier for the Wayback Machine to retrieve and store the website’s product information.

4. Use of Microformats and Structured Data

Microformats and structured data are markup conventions that attach machine-readable information to website content, allowing the Wayback Machine archive to better interpret and store a page’s metadata. By implementing them, site owners can provide the Wayback Machine with more accurate and detailed information about their content.

For instance, a website that uses Google’s Structured Data Markup Helper can mark up their content with schema.org vocabulary, providing the Wayback Machine with more detailed information about each piece of content, such as authorship, publication date, and review ratings.
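Structured data of this kind is commonly embedded as a JSON-LD block in the page’s HTML. The sketch below generates one using schema.org’s `Article` type with authorship and publication date; the function name and the sample values are made up for illustration, and a real page would usually include more properties.

```python
import json

OPEN_TAG = '<script type="application/ld+json">'
CLOSE_TAG = "</script>"


def article_json_ld(headline: str, author: str, date_published: str) -> str:
    """Return a JSON-LD script tag describing an article with schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date
    }
    return OPEN_TAG + json.dumps(data, indent=2) + CLOSE_TAG


# Sample values are placeholders, not real publication data.
tag = article_json_ld("Why Is the Wayback Machine So Slow", "Jane Doe", "2024-01-15")
print(tag)
```

Because the block sits in the static HTML, it is captured along with the page and remains readable in the archived copy without any JavaScript having to run.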

By implementing these best practices, webmasters can ensure their websites are optimized for the Wayback Machine’s crawlers, reducing the complexity of their JavaScript-heavy content and making it easier for the archive to retrieve and store their pages. This, in turn, will improve the quality and accessibility of their archived content, making it easier for users to find and access historical versions of their website.

Conclusion


So, there you have it, folks! The slow performance of the Wayback Machine comes down to a combination of factors, including increased internet traffic, demand for archived content, and hardware limitations. But don’t worry, there are solutions on the horizon, from improving web crawler technology to optimizing website content for better captures.

Frequently Asked Questions

Q: What’s the best way to speed up the Wayback Machine?

A: As a visitor, you can’t change how fast the archive’s servers respond. As a site owner, though, you can make your pages quicker and easier to capture by optimizing your website’s content and structure. This includes using descriptive URLs, minimizing JavaScript use, and organizing your content in a logical and hierarchical manner.

Q: Can I manually add web pages to the Wayback Machine?

A: Yes. The Wayback Machine’s “Save Page Now” feature at web.archive.org/save lets anyone submit a URL for immediate capture, as long as the site allows crawlers. Most of the archive is still built by automated crawlers, so submitting a page manually is mainly useful for pages the crawlers have missed.

Q: What happens if the Wayback Machine can’t archive a page?

A: If the Wayback Machine can’t archive a page, it’s usually due to technical issues, such as website instability or JavaScript-heavy content. In these cases, you can try resubmitting the URL or reaching out to the team for assistance.